Quick answer

Most directory listing services sell the same promise: more visibility with less manual work. The offers look similar, but the outcomes are not. The winners are usually providers with stricter filtering, better execution controls, and clearer post-submission reporting.

If you compare only price or raw directory count, you can buy a package that looks productive but is hard to connect to business outcomes. A better approach is to evaluate delivery quality, risk controls, and decision-ready reporting before you start.

For teams evaluating directory listing services, the core question is not "who submits to the biggest list," but "who can run a repeatable process with quality gates and measurable progress."

sbb-itb-8e44301

Problem framing

The market has matured, but buyer behavior often has not. Many teams still purchase directory listing services with a checklist that overweights volume and underweights process reliability.

That creates predictable issues:

- low-fit placements mixed into high-fit submissions,

- inconsistent profile data across destinations,

- weak traceability between submission activity and business signals,

- unclear next actions after each reporting cycle.

When these issues stack up, teams get "activity success" without "decision success." Work gets done, but the operating model remains noisy.

Why service outcomes diverge

Two providers can submit to a similar number of directories and still produce very different outcomes. The difference usually comes from three operating layers:

- selection logic: how candidates are included or excluded,

- execution controls: how submissions are sequenced and quality-checked,

- reporting discipline: how outcomes are measured and translated into next steps.

If one of these layers is weak, performance tends to flatten early.

Service-model comparison table

| Service model | Best for | Typical strengths | Typical weaknesses | Risk level |

|---|---|---|---|---|

| Freelancer/manual operator | Very small budget tests | Low cost, flexible requests | Inconsistent QA, hard to scale, sparse reporting | Medium to high |

| One-time bulk package | Teams optimizing for speed only | Fast completion, simple buying process | Relevance quality varies, weak iteration loop | High |

| Traditional citation agency | Local multi-location businesses | Process maturity, local listing knowledge | Can be slow, may rely on rigid workflows | Medium |

| Niche submission service | Startups with category-specific goals | Better niche match, focused destination sets | Quality depends on internal standards | Medium |

| Productized workflow tool (ListingBott model) | Teams wanting repeatable process and visibility | Approval gates, operational consistency, status reporting | Requires clear input goals to perform best | Low to medium |

Buyer scorecard (use before purchase)

| Evaluation criterion | Weight | What to verify | Pass signal | Warning signal |

|---|---|---|---|---|

| Relevance filtering quality | 30% | How directories are selected and excluded | Clear filter criteria with examples | "We submit everywhere" language |

| Profile QA standard | 20% | Copy consistency and field validation process | Defined QA checklist before publish | No pre-publish QA step |

| Submission control model | 20% | Batch design, error handling, status tracking | Controlled waves and issue logs | One-shot push with no checkpoints |

| Reporting and actionability | 20% | Clarity of outcomes and next actions | Report includes decisions, not just logs | Activity-only reports |

| Iteration loop | 10% | How cycle 2 improves from cycle 1 | Monthly or wave-based optimization | No optimization plan |

Use weighted scoring instead of yes/no comparisons. It helps you separate polished sales pages from reliable operations.

Red flags during vendor review

- Heavy emphasis on count, little detail on quality controls.

- No clear answer on directory exclusion logic.

- No approval step before full publishing.

- Reports that list completed tasks but no optimization actions.

- Guarantee language without explicit conditions.

A practical buy/no-buy rule: if you cannot map vendor process into a 30-60-90 day execution plan, the offer is not mature enough for reliable outcomes.

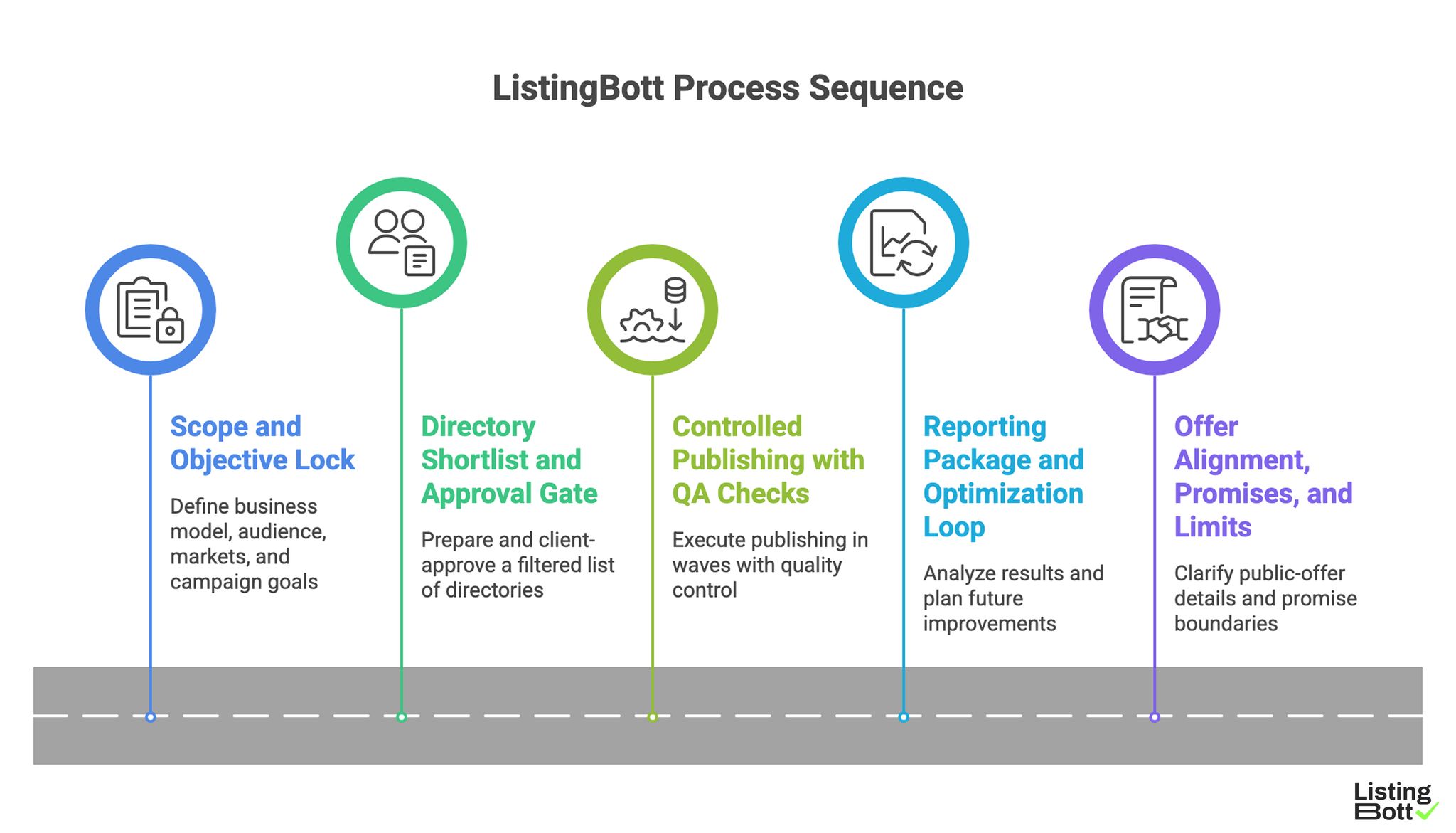

How ListingBott works

ListingBott is built as a productized execution flow rather than a one-off directory list package. The focus is operational consistency: define scope, approve targets, execute in controlled waves, then improve from real reporting.

1) Scope and objective lock

Work starts with structured intake through a client form. This defines:

- business model and audience,

- markets and constraints,

- campaign objective,

- baseline for later comparison.

Without this step, directory work drifts into generic submissions. With this step, each cycle can be evaluated against explicit goals.

2) Directory shortlist and approval gate

ListingBott prepares a filtered shortlist. Before large-scale publishing, the client reviews and approves the list. This approval gate prevents low-fit expansion and aligns expectations early.

This is also where campaign intent matters. If the chosen objective is domain growth, acceptance criteria and monitoring are set accordingly from the start.

3) Controlled publishing with QA checks

Execution runs in controlled waves, not uncontrolled blasts. This makes quality control practical and gives room to correct profile-level issues before scale.

Operationally, this is where directory submission automation reduces manual overhead while keeping process checks in place.

4) Reporting package and optimization loop

After each cycle, reporting is expected to show:

- what was submitted,

- current status by destination,

- quality notes,

- recommendations for the next cycle.

The key outcome is not a list of actions completed. The key outcome is a list of decisions for what to expand, what to fix, and what to deprioritize.

5) Offer alignment, promises, and limits

ListingBott public-offer alignment in current materials:

- one-time payment model,

- publication to 100+ directories (per current website language),

- refund can apply if process has not started,

- no hidden extra fees (per current FAQ language).

Promise boundaries are explicit. ListingBott does not promise guaranteed ranking position, guaranteed traffic by a specific date, guaranteed indexing speed, or outcomes controlled by third-party platforms.

For DR-specific goals, there is one qualified promise case: if starting DR is below 15, the goal is explicitly set to domain growth, and the client approves the directory list, growth to DR 15 can be promised under that qualified setup.

ListingBott Process Sequence

Proof/results

This page avoids fabricated outcomes and uses an evidence model you can apply to any provider.

What to measure in the first 90 days

| Signal type | Example metric | Review cadence | What it helps decide |

|---|---|---|---|

| Execution quality | Accepted vs planned listings by wave | Weekly during active waves | Whether process controls are working |

| Data consistency | Listing profile error rate | Weekly or per wave | Whether QA standards need tightening |

| Discovery support | Referral sessions from listing sources | Monthly | Which directory categories are worth expanding |

| Brand support | Branded query trend direction | Monthly | Whether awareness support is building |

| Commercial support | Assisted visits to money pages | Monthly | Whether listing activity supports pipeline motion |

Treat these as directional signals, not instant-win promises. Early cycles usually reveal process quality first, then performance trends.

What strong reporting looks like

A useful report should let a non-technical stakeholder answer five questions quickly:

- What was delivered in this cycle?

- What quality issues were found?

- Which issues were resolved?

- What changed vs baseline?

- What should happen next cycle?

If a report cannot answer all five, it is incomplete for operational decisions.

Promise clarity table

| Topic | Can be promised | Cannot be promised |

|---|---|---|

| Delivery process | Structured workflow, approval gates, reporting handoff | Exact behavior of third-party platforms |

| Costs/offer terms | One-time payment, no hidden extra fees, refund condition if process not started | Price outcomes beyond stated offer terms |

| DR outcome language | Qualified path to DR 15 under documented conditions | Blanket DR increase guarantees for all cases |

| Search impact | Better process quality and improved measurement discipline | Guaranteed ranking position or fixed-date traffic outcomes |

This clarity helps prevent expectation mismatch and reduces refund friction caused by ambiguous promises.

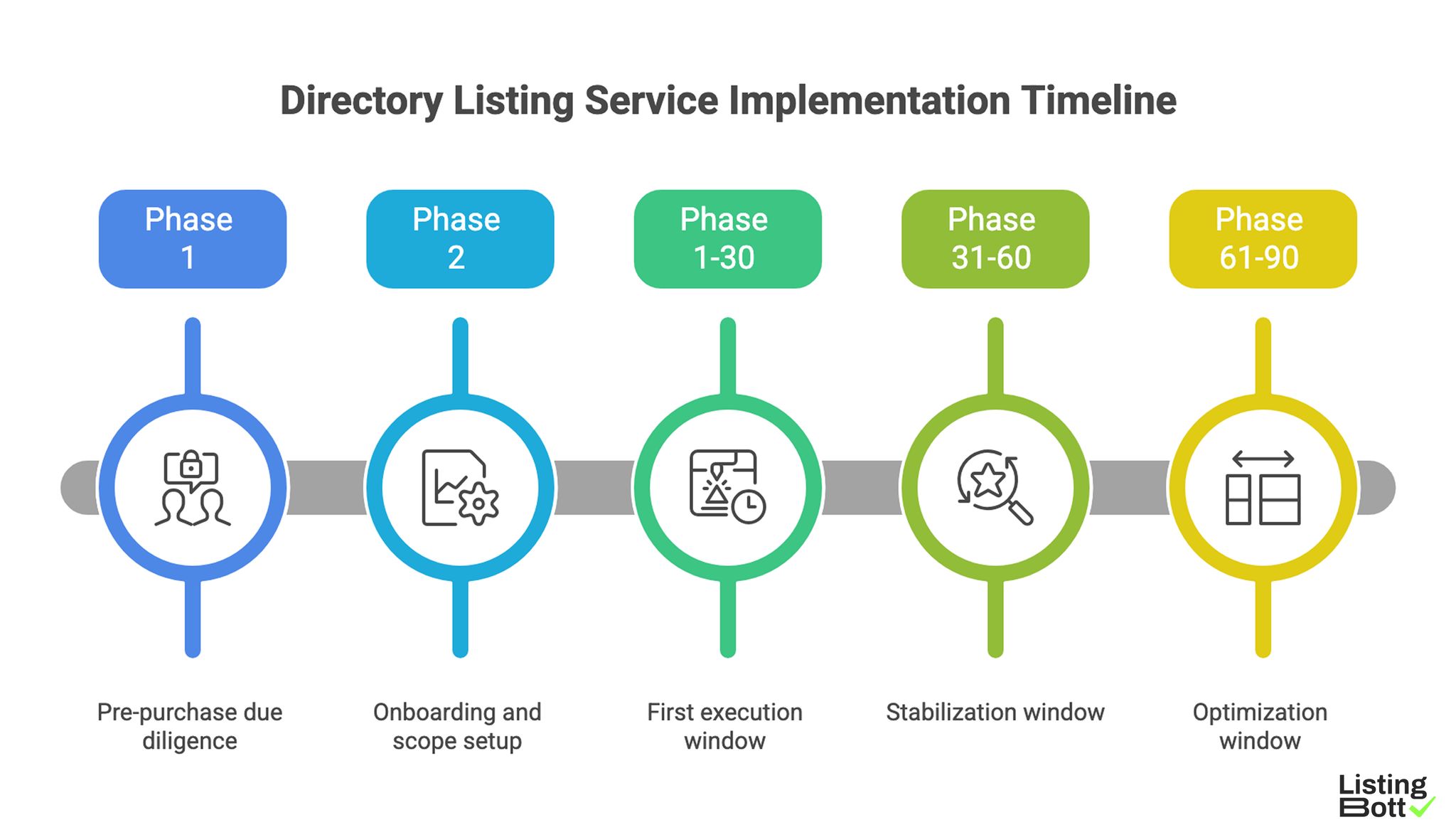

Implementation checklist

Use this checklist to evaluate and run directory listing services without guesswork.

Phase 1: Pre-purchase due diligence

- Define the primary campaign objective in one sentence.

- Set a "must exclude" directory policy before vendor calls.

- Require the vendor to explain inclusion/exclusion logic.

- Ask for sample reporting format before purchase.

- Confirm whether a pre-publish approval gate exists.

- Confirm escalation path for quality corrections.

Phase 2: Onboarding and scope setup

- Complete intake with full business context.

- Lock baseline metrics before first wave.

- Confirm messaging and profile-field consistency rules.

- Approve initial directory shortlist.

- Set first reporting checkpoint date.

Phase 3: First execution window (days 1-30)

- Run wave 1 with controlled volume.

- Track accepted, pending, and correction-needed statuses.

- Document quality issues and remediation steps.

- Validate that process remains within approved scope.

Phase 4: Stabilization window (days 31-60)

- Re-score directory categories by observed quality.

- Remove weak-fit categories from next wave.

- Tighten profile consistency rules where needed.

- Verify reporting includes actionable recommendations.

Phase 5: Optimization window (days 61-90)

- Compare directional metrics against baseline.

- Expand only categories with clear quality signals.

- Update next-cycle plan using evidence, not assumptions.

-

Keep a single decision log for leadership visibility.

Directory Listing Service Implementation Timeline

Common implementation mistakes

| Mistake | Operational impact | Correction |

|---|---|---|

| Buying by count alone | High activity, weak outcomes | Use weighted scorecard and approval gate |

| Skipping baseline setup | No reliable before/after view | Capture baseline before first wave |

| Inconsistent profile inputs | Rework and trust issues | Standardize core profile assets |

| Treating reports as final output | No optimization loop | Require next-cycle action plan in each report |

| Overpromising outcomes | Expectation mismatch and churn risk | Use explicit promise boundaries in all client communication |

FAQ

1) What is the fastest way to compare directory listing services?

Use a weighted scorecard across relevance filtering, QA standards, submission controls, reporting quality, and iteration plan.

2) Is the cheapest service usually the best choice?

Not necessarily. Lower cost can be outweighed by weak quality controls and poor reporting, which increases hidden operational cost later.

3) How many directories should a first wave include?

Start with a controlled shortlist you can quality-check properly, then expand only after signal quality is acceptable.

4) What should I ask before signing any provider?

Ask how they filter directories, how they handle approval, what reporting includes, and how they improve cycle 2 vs cycle 1.

5) What can ListingBott promise and not promise?

ListingBott can promise structured execution and defined offer terms. It does not promise guaranteed rankings, fixed-date traffic outcomes, or third-party platform behavior.

6) When can a DR-related promise be made?

Only in the qualified case where starting DR is below 15, the goal is explicitly domain growth, and the directory list is client-approved.

Final takeaway

Directory listing services are not interchangeable. The best option is usually the one with stronger process controls, clearer reporting, and tighter promise discipline, not the one with the biggest number on a sales page. If you adopt a scorecard-first buying model, you reduce risk and make outcomes easier to evaluate over time.