Quick answer

Local directory work performs best when it is treated as an operations system, not a one-time blast. The core sequence is simple: prioritize the right local destinations, standardize location data, publish in controlled waves, and iterate based on what the reports show.

Most teams lose performance by spreading effort across low-fit directories or publishing inconsistent profile information across locations. A better path is to segment directories by local relevance and run a repeatable QA workflow.

If your team wants a practical execution model, treat local business directory submission as a scoped, measurable program with clear quality gates.


Problem framing

Local search visibility depends on consistency and trust signals more than submission volume alone. When local listings are inconsistent, even strong businesses struggle to get predictable performance from directory campaigns.

The most common local failure patterns are operational:

- inconsistent NAP details (name, address, phone),

- weak category mapping per location,

- duplicate or conflicting profiles,

- missing location-level ownership of updates,

- no structured reporting after submissions.

These issues create two costs at the same time: slower visibility gains and higher rework burden for your team.

Local directory tier model (where to list first)

| Tier | Directory group | Priority logic | Typical risk if ignored |
|---|---|---|---|
| Tier 1 | Core local platforms and high-trust business directories | Start here for all local campaigns | Missing foundational local coverage |
| Tier 2 | Industry-specific local directories | Prioritize when category fit is strong | Qualified local demand may miss your listing |
| Tier 3 | City/regional ecosystem directories | Add after Tier 1 and Tier 2 are stable | Weaker local relevance in key geographies |
| Tier 4 | Low-quality mass directories | De-prioritize or avoid | Higher noise, low signal, unnecessary cleanup |

This tiering model prevents low-value expansion and keeps your first cycles focused on quality.
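As a rough illustration, the tier model can be expressed as a small ordering step. This is a minimal sketch, not ListingBott's implementation: the `Directory` class, the directory names, and the choice to drop Tier 4 entirely (rather than merely de-prioritize it) are assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Directory:
    name: str  # hypothetical directory label
    tier: int  # 1-4, following the tier table


def plan_order(directories: list[Directory]) -> list[Directory]:
    """Drop Tier 4 (an assumption for this sketch) and list Tier 1 first."""
    kept = [d for d in directories if d.tier in (1, 2, 3)]
    # sorted() is stable, so directories within a tier keep their input order.
    return sorted(kept, key=lambda d: d.tier)


candidates = [
    Directory("mass-list", 4),
    Directory("city-guide", 3),
    Directory("core-maps", 1),
    Directory("trade-index", 2),
]
ordered = plan_order(candidates)
```

Keeping the tier as plain data makes it easy to review the list with a client before any publishing starts.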

Single-location vs multi-location complexity

| Business model | Main challenge | Required control |
|---|---|---|
| Single location | Accuracy and completeness of one profile set | Strict profile QA before publish |
| Few locations (2-10) | Consistent fields with minor local variations | Location template + approval workflow |
| Multi-location (10+) | Scale without data drift | Centralized data source + wave-based publishing |

Local programs fail when teams use one location as a template but skip verification against each location's real details.

Decision framework: should you scale now?

Scale local submissions only when all three conditions are true:

- Profile accuracy check passes for current locations.

- Directory acceptance quality is stable in recent waves.

- Reporting translates outcomes into next-cycle actions.

If any condition fails, fix process quality before expanding the footprint.
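The three-condition gate can be written down as an explicit check so that the scaling decision is not made informally. This is a hedged sketch: the `CycleHealth` class and its field names are hypothetical, and how each flag gets set (thresholds, who signs off) is left to your own process.

```python
from dataclasses import dataclass


@dataclass
class CycleHealth:
    # Each flag is assumed to be set by your own review process.
    profile_accuracy_passed: bool
    acceptance_stable: bool
    report_has_next_actions: bool


def should_scale(health: CycleHealth) -> bool:
    """Return True only when all three scale-now conditions hold."""
    return all(
        (
            health.profile_accuracy_passed,
            health.acceptance_stable,
            health.report_has_next_actions,
        )
    )


ready = should_scale(CycleHealth(True, True, True))
not_ready = should_scale(CycleHealth(True, False, True))
```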

How ListingBott works

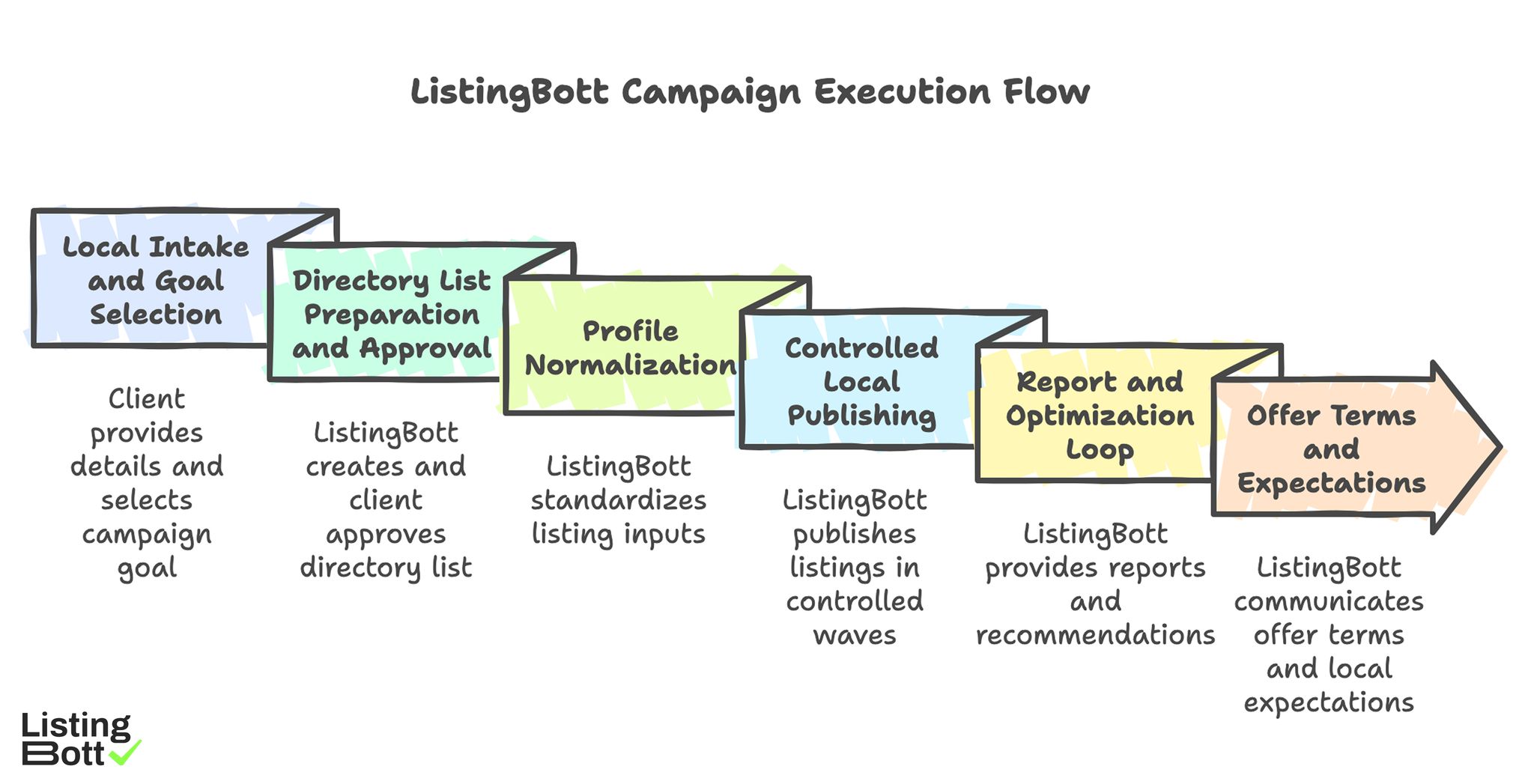

ListingBott runs local directory campaigns as a structured execution flow: client form, directory list approval, publishing, and reporting. The goal is consistent delivery quality with clear status visibility.

1) Local intake and goal selection

Local projects start with a complete client form. Intake should lock:

- exact location details,

- service areas,

- category and positioning data,

- campaign goal.

When DR (Domain Rating) is the chosen campaign goal, the goal must be explicitly set to domain growth.

2) Directory list preparation and approval

ListingBott prepares a local-fit directory list based on project scope. The list is shared for client approval before full publishing.

This approval gate is critical for local campaigns because directory fit varies by location and industry.

3) Profile normalization before publish

Before submission waves, core listing inputs are standardized:

- name/address/phone format,

- business description baseline,

- category consistency,

- local variation rules (when needed).

This step reduces downstream correction cycles and improves reporting clarity.
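To make the normalization step concrete, here is a minimal sketch of a NAP consistency check. It assumes each listing is a dictionary with `directory`, `name`, `address`, and `phone` keys; those field names, the sample business, and the comparison rules (digits-only phone, case- and whitespace-insensitive text) are illustrative assumptions, and real address matching usually needs more care.

```python
import re


def normalize_phone(raw: str) -> str:
    """Keep digits only so differently punctuated numbers compare equal."""
    return re.sub(r"\D", "", raw)


def normalize_text(raw: str) -> str:
    """Collapse runs of whitespace and lowercase for comparison."""
    return " ".join(raw.split()).lower()


def nap_key(record: dict) -> tuple[str, str, str]:
    """Build a comparable (name, address, phone) key from one record."""
    return (
        normalize_text(record["name"]),
        normalize_text(record["address"]),
        normalize_phone(record["phone"]),
    )


def find_mismatches(reference: dict, listings: list[dict]) -> list[str]:
    """Return the directories whose NAP differs from the reference record."""
    ref = nap_key(reference)
    return [item["directory"] for item in listings if nap_key(item) != ref]


reference = {"name": "Acme Plumbing", "address": "12 Main St", "phone": "(555) 010-0199"}
listings = [
    {"directory": "dir-a", "name": "acme  plumbing", "address": "12 main st", "phone": "555-010-0199"},
    {"directory": "dir-b", "name": "Acme Plumbing", "address": "12 Main St", "phone": "555-010-0100"},
]
mismatched = find_mismatches(reference, listings)
```

Running a check like this against the approved reference record before each wave surfaces conflicts while they are still cheap to fix.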

4) Controlled local publishing and status tracking

Publishing runs in controlled waves rather than one large push. That supports quality checks and issue isolation if a subset needs changes.

Teams that need to manage local listings at scale generally benefit from this controlled-wave model because it reduces manual tracking chaos.
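Wave-based publishing amounts to splitting the approved list into fixed-size batches and reviewing each batch before starting the next. The sketch below shows only the batching step; the function name and the wave size are assumptions, and the right wave size depends on how many corrections your team can process per cycle.

```python
def split_into_waves(directories: list[str], wave_size: int) -> list[list[str]]:
    """Split an ordered directory list into consecutive waves of at most wave_size."""
    if wave_size < 1:
        raise ValueError("wave_size must be at least 1")
    return [
        directories[i : i + wave_size]
        for i in range(0, len(directories), wave_size)
    ]


waves = split_into_waves(["a", "b", "c", "d", "e"], wave_size=2)
```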

5) Report and optimization loop

After publishing, ListingBott provides a status-led report covering submitted directories, current states, pending items, and next recommendations. The standard milestone is clear: the report is ready.

Operationally, each cycle should answer:

- what was delivered,

- what changed,

- what remains blocked,

- what to do next.

6) Offer terms, promise boundaries, and local expectations

Current public ListingBott materials describe the offer as follows:

- one-time payment model,

- publication to 100+ directories (per current website language),

- refund possible if the process has not started,

- no hidden extra fees (per current FAQ language).

Local campaigns should be sold on process quality and reporting transparency, not on guaranteed ranking dates or guaranteed traffic by a fixed deadline.

ListingBott Campaign Execution Flow

Proof/results

Local directory work should be evaluated with explicit before/after measurement windows. Without that, teams confuse activity volume with performance progress.

Local performance scorecard (first 90 days)

| Signal | Why it matters | Measurement cadence | Healthy direction |
|---|---|---|---|
| Local profile accuracy rate | Indicates data consistency quality | Weekly during active waves | Error rate declines over time |
| Directory acceptance quality | Shows destination fit and execution quality | Per wave | Higher acceptance in prioritized tiers |
| Local referral contribution | Indicates discoverability support | Monthly | Trend improves cycle to cycle |
| Branded local query trend | Indicates local awareness support | Monthly | Stable or upward trajectory |
| Commercial assist actions | Connects local visibility to business path | Monthly | More assisted visits/actions over time |

This scorecard avoids inflated claims and gives teams a repeatable decision model.
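One of the scorecard signals, directory acceptance quality, can be computed directly from a per-wave status log. The following is a sketch under stated assumptions: the log is a sequence of `(tier, status)` pairs, and only the literal status `"accepted"` counts as success. Both the log shape and the status labels are hypothetical.

```python
from collections import defaultdict
from collections.abc import Iterable


def acceptance_rate_by_tier(status_log: Iterable[tuple[int, str]]) -> dict[int, float]:
    """Return the share of 'accepted' submissions for each tier in the log."""
    totals: dict[int, int] = defaultdict(int)
    accepted: dict[int, int] = defaultdict(int)
    for tier, status in status_log:
        totals[tier] += 1
        if status == "accepted":
            accepted[tier] += 1
    # Only tiers that appear in the log are reported, so no division by zero.
    return {tier: accepted[tier] / totals[tier] for tier in totals}


wave_log = [
    (1, "accepted"),
    (1, "accepted"),
    (1, "pending"),
    (1, "accepted"),
    (2, "rejected"),
    (2, "accepted"),
]
rates = acceptance_rate_by_tier(wave_log)
```

Comparing these rates wave over wave shows whether acceptance is actually higher in the prioritized tiers, which is the healthy direction named in the scorecard.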

What ListingBott can and cannot promise

ListingBott can promise structured execution, transparent status updates, submission delivery within agreed scope, and report delivery.

ListingBott cannot promise guaranteed rankings, guaranteed traffic by a specific date, guaranteed indexing speed, or outcomes controlled by third-party platforms.

For DR-specific campaigns, there is one qualified promise path: if starting DR is below 15, the goal is explicitly set to domain growth, and the client approves the directory list, growth to DR 15 can be promised under that qualified setup.
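The qualified DR promise is a conjunction of three conditions, which can be checked mechanically at intake. In this sketch the function name and the `"domain_growth"` goal label are assumptions for illustration; only the three conditions themselves come from the text above.

```python
DR_PROMISE_THRESHOLD = 15  # starting DR must be below this value


def dr_promise_applies(starting_dr: float, goal: str, list_approved: bool) -> bool:
    """Return True only when all three qualifying conditions are met."""
    return (
        starting_dr < DR_PROMISE_THRESHOLD
        and goal == "domain_growth"  # hypothetical label for the campaign goal
        and list_approved
    )


qualifies = dr_promise_applies(8, "domain_growth", True)
already_at_threshold = dr_promise_applies(15, "domain_growth", True)
```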

Practical evidence discipline for local campaigns

Maintain one source of truth for each cycle:

- baseline metrics before first submission,

- approved directory set by tier,

- status log by wave,

- correction and blocker log,

- post-cycle KPI readout,

- next-cycle decision list.

This makes local scaling auditable and keeps expectations aligned with real delivery.

Implementation checklist

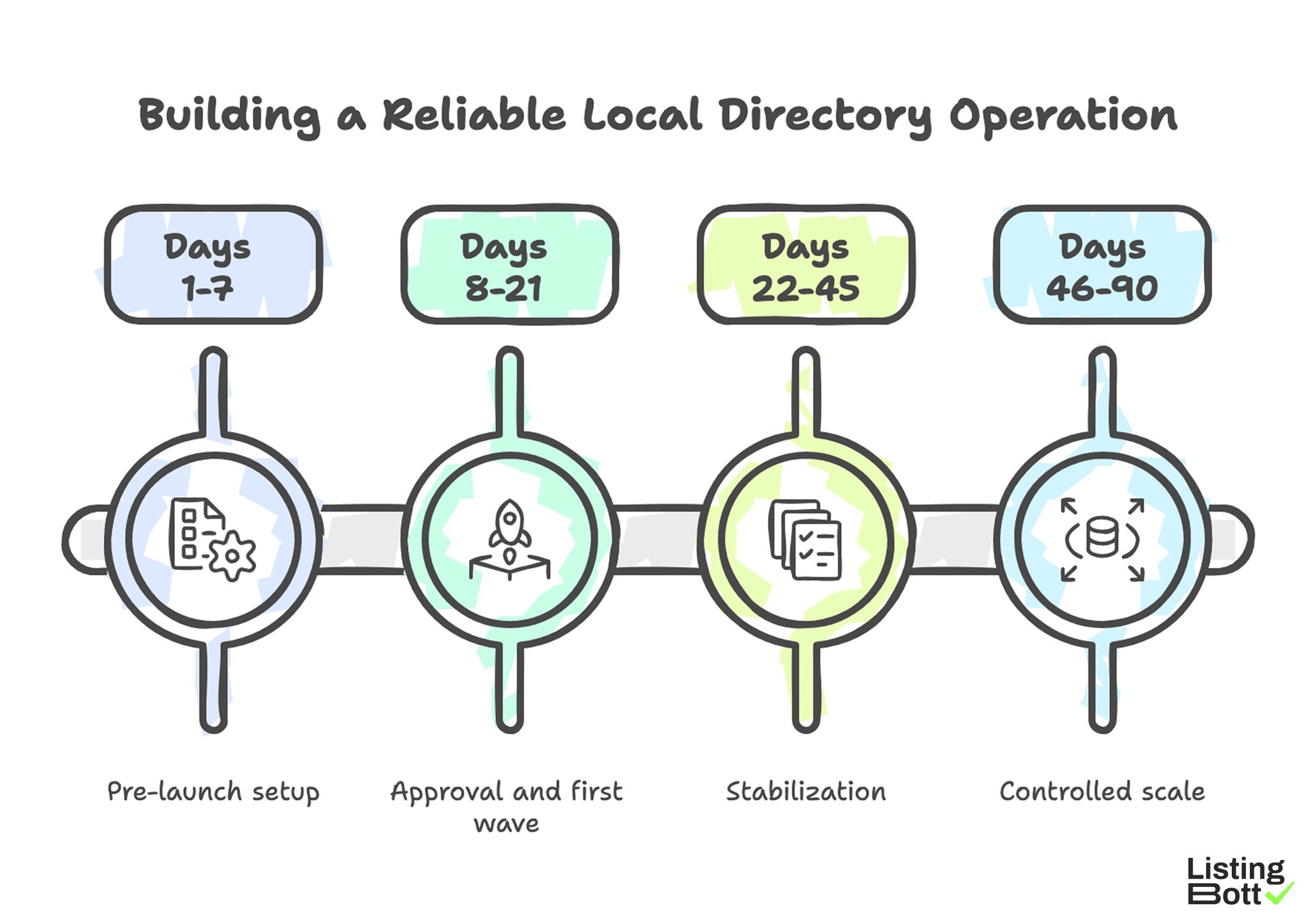

Use this checklist to build a reliable local directory operation instead of a one-off task list.

Phase 1: Pre-launch setup (days 1-7)

- Confirm all location records are complete and current.

- Lock core listing fields and formatting rules.

- Define tier-based directory priority.

- Establish baseline measurements before publish.

- Assign an owner for local profile corrections.

Phase 2: Approval and first wave (days 8-21)

- Review and approve the initial local directory list.

- Launch controlled wave 1.

- Track acceptance and pending statuses.

- Log corrections by location.

- Escalate blocker patterns quickly.

Phase 3: Stabilization (days 22-45)

- Audit profile consistency across published listings.

- Remove weak-fit destinations from next wave.

- Refine category mapping where needed.

- Tighten QA rules based on recurring errors.

Phase 4: Controlled scale (days 46-90)

- Expand to additional local tiers only after a quality pass.

- Keep wave size manageable for correction throughput.

- Compare results against baseline and prior wave.

- Build next-quarter local listing plan from evidence.

Building a Reliable Local Directory Operation

Local mistakes and fixes

| Mistake | Operational impact | Fix |
|---|---|---|
| Publishing without NAP QA | Data conflicts and correction backlog | Run pre-publish NAP validation checklist |
| Overweighting low-quality local directories | High effort, low business signal | Use tier model and quality threshold |
| No location-level ownership | Slow correction cycles | Assign owner per location cluster |
| Scaling before quality stabilizes | Error amplification across locations | Use scale-now gate (quality first) |
| Reporting without next actions | No optimization loop | Require action-oriented report summary |

FAQ

1) How many local directories should I start with?

Start with a smaller, high-fit tiered set and expand only after data consistency and acceptance quality are stable.

2) Is local directory submission still useful in 2026?

Yes, when executed with relevance filtering, profile consistency controls, and clear reporting. It is weaker when treated as a volume-only activity.

3) What is the biggest local execution risk?

Inconsistent location data across directories. It increases rework and reduces trust signals.

4) How soon should I scale from one location to many?

Scale only after your first location cycle passes quality checks and reporting clearly supports expansion.

5) Can ListingBott guarantee local rankings?

No. ListingBott does not guarantee ranking positions or fixed-date traffic outcomes.

6) When is a DR promise valid?

Only when starting DR is below 15, the goal is explicitly domain growth, and the directory list is approved by the client.

Final takeaway

Local directory growth is mostly an execution-quality problem. Teams that prioritize tiered destination selection, strict profile consistency, and wave-based scaling usually build more reliable outcomes than teams that optimize for count alone.