Quick answer

A domain rating checker is useful for SaaS only when you treat it as a decision input, not as a standalone success metric. Domain Rating (DR) helps estimate backlink profile strength, but it does not directly guarantee ranking or revenue outcomes.

The practical use of DR is to prioritize actions: which link sources to pursue, which directories to ignore, and where quality controls are failing.

For most SaaS teams, the best process is a monthly DR trend review plus weekly source-quality checks. If you need an operational workflow for submissions and tracking, start with ListingBott after defining your acceptance criteria.

Methodology

This framework helps SaaS teams use DR checkers correctly and avoid common metric traps.

1) Understand what DR can and cannot do

DR can help you:

- compare relative link-profile strength,

- spot directional authority growth,

- audit whether link acquisition quality is improving.

DR cannot reliably tell you:

- if target pages will rank by a specific date,

- if traffic will increase on a fixed timeline,

- if link sources are contextually relevant to your ICP.

2) Review DR with a multi-signal scorecard

Use DR with supporting indicators, not alone.

| Signal | Why it matters | Review cadence |
|---|---|---|
| DR trend | Shows directional authority movement | Monthly |
| Referring-domain relevance | Prevents low-fit source accumulation | Weekly |
| Commercial keyword movement | Connects authority to business pages | Monthly |
| Qualified traffic trend | Validates discovery quality | Monthly |
| Link-source quality ratio | Tracks trusted vs weak source mix | Weekly |

This scorecard keeps strategy tied to outcomes.
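
One way to keep the scorecard actionable is to store it as data and ask which signals are due at each review. The sketch below mirrors the table's rows; the `Signal` class and `signals_due` helper are illustrative names, not part of any DR checker's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Signal:
    name: str
    why_it_matters: str
    cadence: str  # "weekly" or "monthly", taken from the scorecard table


# Rows copied from the scorecard table.
SCORECARD = [
    Signal("DR trend", "Shows directional authority movement", "monthly"),
    Signal("Referring-domain relevance", "Prevents low-fit source accumulation", "weekly"),
    Signal("Commercial keyword movement", "Connects authority to business pages", "monthly"),
    Signal("Qualified traffic trend", "Validates discovery quality", "monthly"),
    Signal("Link-source quality ratio", "Tracks trusted vs weak source mix", "weekly"),
]


def signals_due(cadence: str) -> list[str]:
    """Return the names of the signals reviewed at the given cadence."""
    return [s.name for s in SCORECARD if s.cadence == cadence]


print(signals_due("weekly"))
print(signals_due("monthly"))
```

Keeping the cadence next to each signal makes it harder to review DR alone and skip the supporting indicators.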

3) SaaS benchmark model (practical)

Define benchmark bands by your market stage and competition level.

| Stage | Typical DR operating goal | Notes |
|---|---|---|
| Early SaaS | Build consistent baseline trend | Focus on relevance and clean execution |
| Growth SaaS | Improve quality mix of sources | Reduce low-trust submissions |
| Mature SaaS | Maintain stability and efficiency | Protect against profile drift |

Benchmarks should be reviewed against competitor movement, not copied blindly.

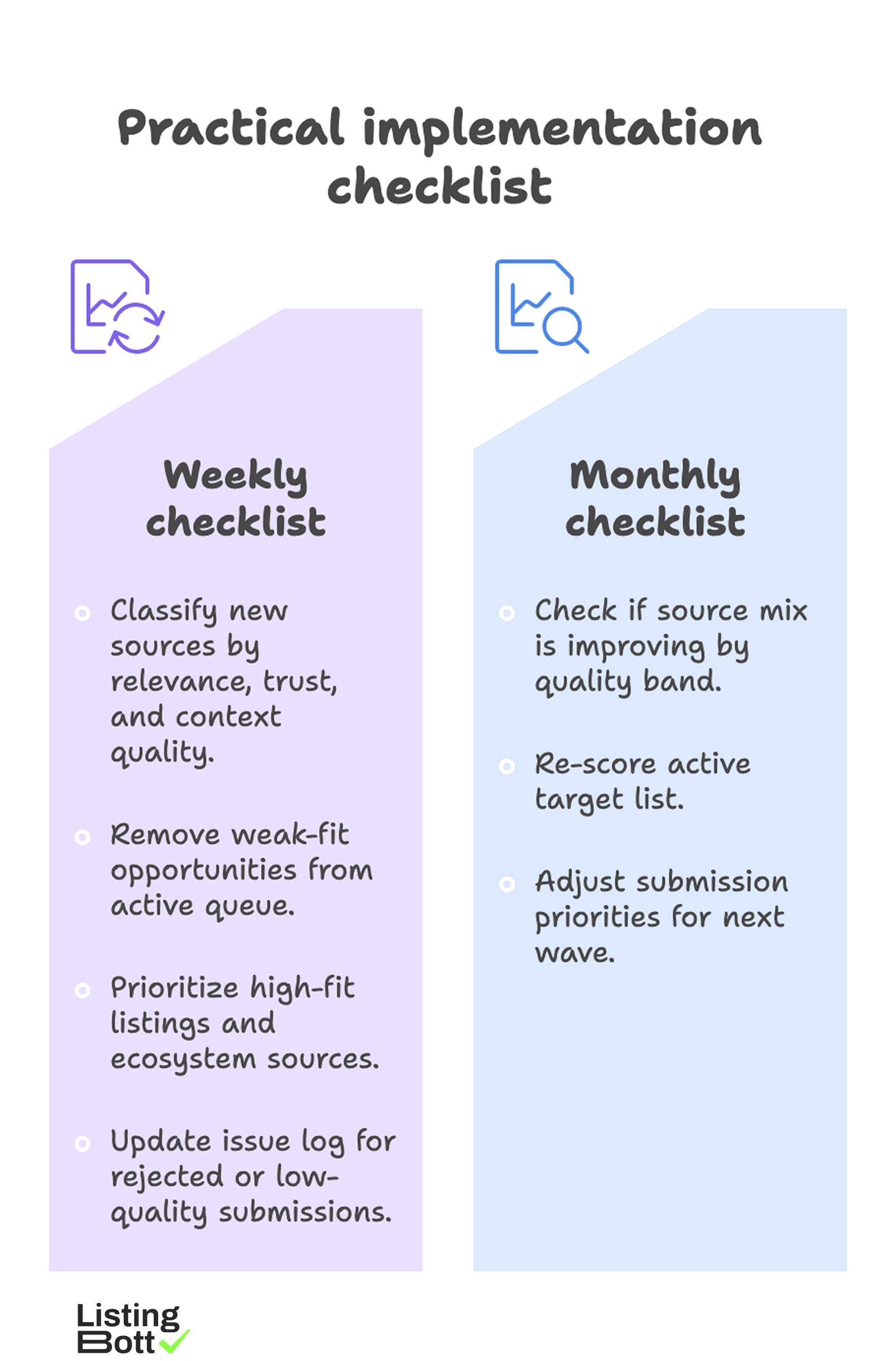

Practical implementation checklist

Weekly checklist

- Pull DR and referring-domain changes from your checker.

- Classify new sources by relevance, trust, and context quality.

- Remove weak-fit opportunities from active queue.

- Prioritize high-fit listings and ecosystem sources.

- Update issue log for rejected or low-quality submissions.
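
The weekly triage steps above can be sketched as a small script. This is a minimal illustration under stated assumptions: the 0-5 scoring scale, the `MIN_SCORE` threshold, and the `.example` URLs are all hypothetical, and your own acceptance criteria should replace them.

```python
from dataclasses import dataclass


@dataclass
class Source:
    url: str
    relevance: int  # 0-5, hypothetical scale
    trust: int      # 0-5, hypothetical scale
    context: int    # 0-5, hypothetical scale


MIN_SCORE = 3  # hypothetical acceptance threshold for every dimension


def triage(sources):
    """Split new sources into (active queue, issue log).

    A source stays in the active queue only if its weakest dimension
    meets the threshold; the queue is ordered by total score.
    """
    active, issue_log = [], []
    for s in sources:
        weakest = min(s.relevance, s.trust, s.context)
        if weakest >= MIN_SCORE:
            active.append(s)
        else:
            issue_log.append((s.url, f"weakest dimension scored {weakest}"))
    active.sort(key=lambda s: s.relevance + s.trust + s.context, reverse=True)
    return active, issue_log


# Placeholder sources, not real directories.
sources = [
    Source("https://directory-a.example", relevance=5, trust=4, context=4),
    Source("https://directory-b.example", relevance=2, trust=5, context=4),
    Source("https://directory-c.example", relevance=4, trust=3, context=3),
]
active, issue_log = triage(sources)
print([s.url for s in active])
print(issue_log)
```

Gating on the weakest dimension, rather than the average, keeps a high trust score from masking a poor topical fit.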

Monthly checklist

- Compare DR trend with commercial page performance.

- Check if source mix is improving by quality band.

- Re-score active target list.

- Adjust submission priorities for next wave.
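
For the source-mix check in the monthly list, a simple ratio of trusted sources to all new sources is often enough to see direction. The band labels and sample data below are assumptions for illustration only.

```python
from collections import Counter


def quality_ratio(bands):
    """Share of sources labeled 'trusted' in a list of quality-band labels."""
    if not bands:
        return 0.0
    return Counter(bands)["trusted"] / len(bands)


# Hypothetical quality bands assigned to new sources in two consecutive months.
last_month = ["trusted", "weak", "weak", "trusted"]
this_month = ["trusted", "trusted", "weak", "trusted", "trusted"]

delta = quality_ratio(this_month) - quality_ratio(last_month)
print(f"quality ratio change: {delta:+.2f}")
```

A positive change suggests the mix is improving; read it alongside commercial page performance, not instead of it.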

Real example listings from your categories dataset

The examples below are sourced from Backlinks for tool our tools - Сategories.tsv (category: JustSaasListings). They illustrate the evaluation workflow and are not blanket endorsements.

| Directory example | DA | Traffic | Link type |
|---|---|---|---|
| https://shipybara.com/ | 41 | 1,000.00 | Nofollow |
| https://openhunts.com/ | 29 | 1,000.00 | Dofollow |
| https://www.findyoursaas.com | 19 | 1,000.00 | Dofollow |
| https://www.go-publicly.com/ | 21 | 1,000.00 | Dofollow |
| https://ramen.tools/ | 53 | 17,700.00 | Dofollow |
| https://peerpush.net/ | 58 | 2,900.00 | Dofollow |
| https://open-launch.com | 38 | 7,400.00 | Nofollow |
| https://stashli.st | 24 | 1,000.00 | Dofollow |

How to use this table:

- score relevance before DA,

- balance quality and maintainability,

- validate profile quality after each wave.
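
"Score relevance before DA" can be expressed as a two-part sort key: relevance first, DA only as a tiebreaker. In the sketch below, the URLs and DA values come from the table above, while the relevance numbers are placeholders, not an assessment of these sites; you would assign your own after reviewing each directory against your ICP.

```python
# (url, DA) pairs copied from four rows of the table above.
LISTINGS = [
    ("https://openhunts.com/", 29),
    ("https://www.findyoursaas.com", 19),
    ("https://ramen.tools/", 53),
    ("https://peerpush.net/", 58),
]

# PLACEHOLDER relevance scores (0-5). These are made-up inputs for the
# example, not judgments about the directories themselves.
RELEVANCE = {
    "https://openhunts.com/": 3,
    "https://www.findyoursaas.com": 3,
    "https://ramen.tools/": 2,
    "https://peerpush.net/": 2,
}


def rank(listings, relevance):
    """Order listings by relevance first, then by DA as a tiebreaker."""
    return sorted(
        listings,
        key=lambda row: (relevance.get(row[0], 0), row[1]),
        reverse=True,
    )


for url, da in rank(LISTINGS, RELEVANCE):
    print(url, da)
```

With these placeholder inputs, a lower-DA listing outranks a higher-DA one whenever its relevance score is higher, which is the ordering the first bullet describes.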

How ListingBott solves this

Many SaaS teams know what to measure but struggle to execute submissions consistently. Common issues include fragmented tracking, inconsistent profile fields, and unclear ownership.

ListingBott provides a one-time-payment workflow covering intake, an approved directory list, a publication process, and a report handoff. It is a tool workflow, not a consulting-call model.

For DR-oriented execution, the value is process reliability: what was submitted, where, and with what quality controls.

If your team is moving from ad hoc tasks to structured operations, use a business directory submission workflow to standardize execution.

What you get

A disciplined DR-checker workflow plus structured submission operations usually produces:

- cleaner source-quality decisions,

- fewer low-value submissions,

- more consistent listing data,

- better visibility into authority-building actions.

Offer terms:

- one-time payment model,

- publication to 100+ directories (per current website language),

- refund possible if process has not started,

- no hidden extra fees (per current FAQ language).

For ongoing maintenance after submissions, pairing with a directory listing management tool helps keep updates controlled and auditable.

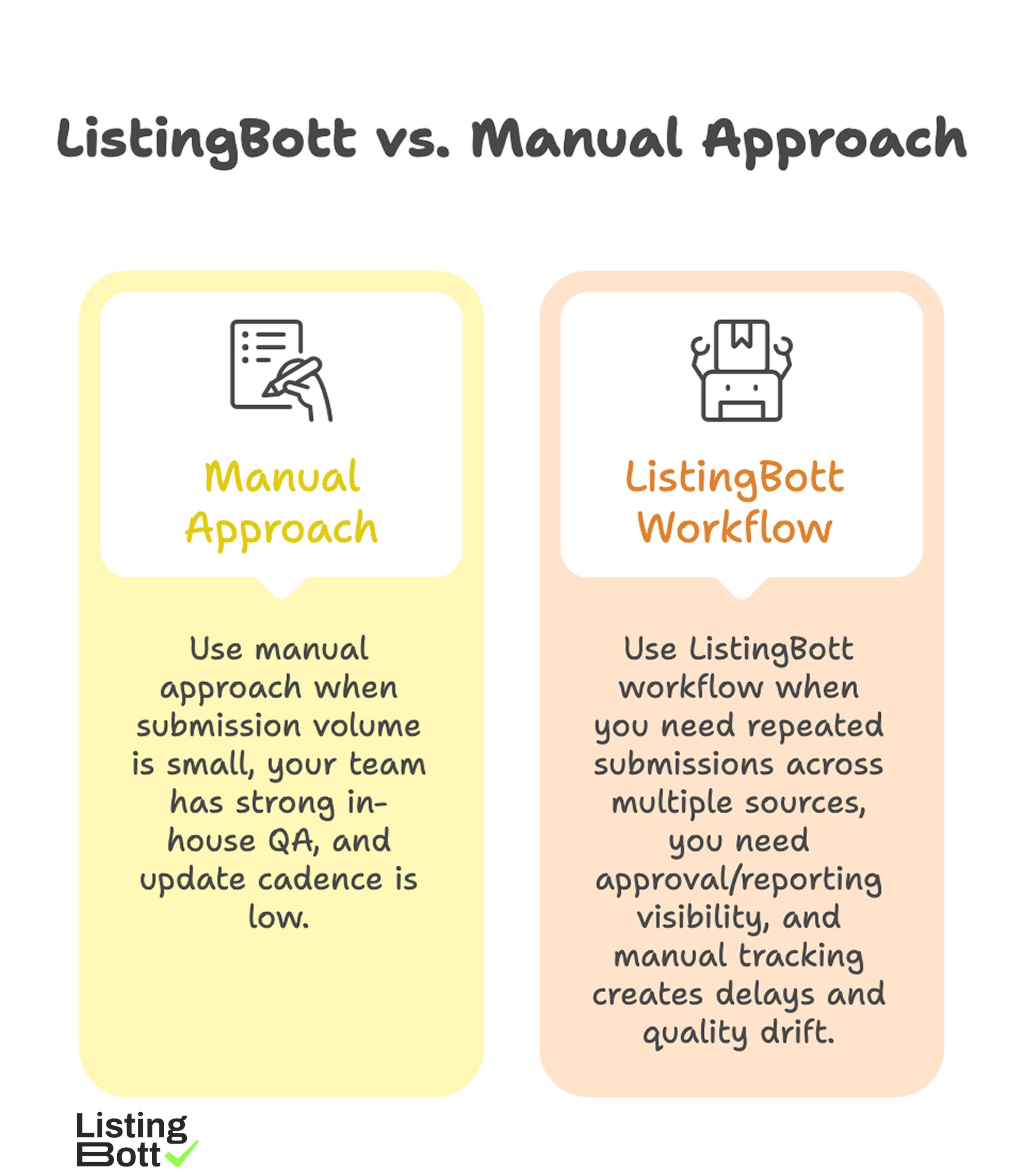

When to use manual vs ListingBott

Use manual approach when:

- submission volume is small,

- your team has strong in-house QA,

- update cadence is low.

Use ListingBott workflow when:

- you need repeated submissions across multiple sources,

- you need approval/reporting visibility,

- manual tracking creates delays and quality drift.

Expectation boundary: no DR workflow can guarantee fixed ranking positions, fixed-date traffic outcomes, or outcomes controlled by third-party platforms.

Any discussion of DR growth to 15 applies only under qualified conditions: a starting DR below 15, an explicit domain growth goal, and a client-approved directory list.
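
The three qualifying conditions can be written as a single check so that the DR 15 target is never discussed without them. The function and parameter names here are hypothetical.

```python
def dr_15_target_is_qualified(
    starting_dr: float,
    has_growth_goal: bool,
    list_approved: bool,
) -> bool:
    """Return True only when all three qualifying conditions hold.

    - starting DR is below 15
    - an explicit domain growth goal exists
    - the client has approved the directory list
    """
    return starting_dr < 15 and has_growth_goal and list_approved


print(dr_15_target_is_qualified(8, True, True))
print(dr_15_target_is_qualified(22, True, True))
```

A passing check means only that the target is in scope for discussion; it does not guarantee the outcome.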

FAQ

Which DR checker should SaaS teams use?

Use a tool your team can operate consistently and pair with source-quality review. The process quality matters more than brand preference.

How often should I check DR?

Weekly for source changes and monthly for trend interpretation against business signals.

Is higher DR always better for SaaS?

Only when source relevance and quality are strong. High metric values from weak-fit sources can be misleading.

Should I ignore nofollow directory sources?

Not always. Some nofollow placements can still support discovery and brand trust in relevant ecosystems.

Can DR alone predict SEO success?

No. DR is one signal and should be evaluated with rankings, traffic quality, and conversion context.