Quick answer

Business listing management works when teams treat listings as governed data, not scattered profile pages. The practical model is an SOP: define canonical fields, control changes, run QA before updates, and review outcomes on a fixed cadence.

Most performance loss in listing operations comes from inconsistency and weak process control, not lack of effort. A reliable SOP gives teams repeatability, faster issue resolution, and clearer measurement.

If your team needs consistency across channels and time, structure business listing management as an operational system with explicit ownership and quality gates.

Problem framing

As companies scale, listing data usually spreads across many contributors: growth, support, operations, franchise managers, agency partners, and legacy vendors. Without controls, each update can create new data drift.

Common breakdowns include:

- conflicting business descriptions across profiles,

- different phone/address formatting by source,

- stale attributes after product or service changes,

- duplicate entries created during expansion,

- no traceable record of who changed what and why.

These breakdowns create two strategic risks:

- trust risk: customers receive inconsistent business information,

- decision risk: teams cannot reliably evaluate which listing work drove improvement.

Failure-mode map for listing operations

| Failure mode | Root cause | Early indicator | Downstream impact |
|---|---|---|---|
| Field inconsistency | No canonical dataset | Same field has multiple active versions | Frequent correction cycles |
| Update collisions | Multiple editors without control policy | Rapid back-and-forth edits | Unstable profile quality |
| Duplicate profile growth | Expansion without dedupe protocol | New listings overlap old records | Conflicting signals and wasted effort |
| Blind execution | No change log or issue log | Team cannot explain recent deltas | Slow debugging and poor decisions |
| Reporting without actions | Metrics reported but no owner decisions | Repeated issues across cycles | Low operational learning |

A strong SOP addresses every failure mode with a defined control.

Canonical data model (minimum fields)

| Field group | Required fields | Quality rule | Update owner |
|---|---|---|---|
| Identity | business name, legal variant policy | One canonical naming standard | Operations owner |
| Contact | phone, primary email, support route | One approved format policy | Support/ops |
| Location | address, service area, timezone | Match source-of-truth location data | Local ops lead |
| Service details | categories, core services, short description | Version-controlled copy rules | Growth/content |
| Media and trust fields | logo, imagery, profile completeness attributes | Use latest approved assets only | Brand/design owner |

This model reduces random edits and makes QA objective.
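One way to make that QA concrete is to hold the canonical fields in a typed record and diff each live listing against it. The sketch below is only an illustration: `CanonicalRecord`, its field names, and `find_drift` are hypothetical and cover a subset of the table above.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class CanonicalRecord:
    """Hypothetical source-of-truth record; field names are illustrative."""
    business_name: str
    phone: str
    primary_email: str
    address: str
    timezone: str
    short_description: str


def find_drift(canonical: CanonicalRecord, listing: dict) -> dict:
    """Return {field: (canonical_value, listing_value)} for every mismatch.

    A field that is missing from the listing is reported with None.
    """
    drift = {}
    for f in fields(canonical):
        expected = getattr(canonical, f.name)
        actual = listing.get(f.name)
        if actual != expected:
            drift[f.name] = (expected, actual)
    return drift
```

An empty result means the listing matches the canonical record on every tracked field; anything else is a concrete, reviewable list of corrections.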

Change-control policy matrix

| Change type | Risk level | Approval required | QA depth | Publish cadence |
|---|---|---|---|---|
| Critical customer data (phone/address/hours) | High | Required | Full validation | Immediate or next cycle |
| Category/service updates | Medium | Required | Standard validation | Planned cycle |
| Copy refinements | Medium | Conditional | Standard validation | Planned cycle |
| Cosmetic formatting updates | Low | Optional | Spot check | Batched |
| Expansion to new directories | High | Required | Full validation + dedupe | New wave only |

Without this matrix, teams treat all edits as equal and lose control of priority.
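The matrix can also live as a small lookup that a publishing script consults before any edit goes out. This is a minimal sketch, not a prescribed implementation: the keys in `POLICIES` and the `can_publish` helper are hypothetical names, and it assumes that "conditional" and "optional" approvals do not block publishing (a team would substitute its own condition).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    risk: str
    approval: str   # "required", "conditional", or "optional"
    qa_depth: str
    cadence: str


# Rows mirror the change-control matrix; the dictionary keys are illustrative.
POLICIES = {
    "critical_customer_data": Policy("high", "required", "full validation", "immediate or next cycle"),
    "category_service_update": Policy("medium", "required", "standard validation", "planned cycle"),
    "copy_refinement": Policy("medium", "conditional", "standard validation", "planned cycle"),
    "cosmetic_formatting": Policy("low", "optional", "spot check", "batched"),
    "new_directory_expansion": Policy("high", "required", "full validation + dedupe", "new wave only"),
}


def can_publish(change_type: str, approved: bool, qa_passed: bool) -> bool:
    """Block a change when QA failed or a required approval is missing."""
    policy = POLICIES[change_type]
    if not qa_passed:
        return False
    # Assumption: only "required" approvals are hard gates in this sketch.
    if policy.approval == "required" and not approved:
        return False
    return True
```

Keeping the policy in data rather than in scattered conditionals means a change to the matrix is a one-line edit that can itself be reviewed.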

Decision framework: what to do first when backlog is large

Use this sequence:

1. Resolve high-impact customer-facing errors.

2. Remove duplicates and profile conflicts.

3. Reconcile canonical fields across priority directories.

4. Apply category/service precision updates.

5. Defer cosmetic tasks to low-load windows.

This prevents low-impact tasks from consuming the team’s correction bandwidth.
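If the backlog is tracked as structured items, the sequence above can be applied mechanically. The sketch below assumes each item carries a hypothetical `kind` label; the labels and the `order_backlog` helper are illustrative, not part of any specific tool.

```python
# Lower number = handled earlier, following the sequence above.
PRIORITY = {
    "customer_facing_error": 1,
    "duplicate_or_conflict": 2,
    "canonical_reconciliation": 3,
    "category_service_precision": 4,
    "cosmetic": 5,
}


def order_backlog(items: list[dict]) -> list[dict]:
    """Sort backlog items by priority tier.

    sorted() is stable, so items in the same tier keep their original order.
    """
    return sorted(items, key=lambda item: PRIORITY[item["kind"]])
```

For example, a cosmetic task filed first and a wrong phone number filed last would come out with the phone fix at the top of the queue.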

How ListingBott works

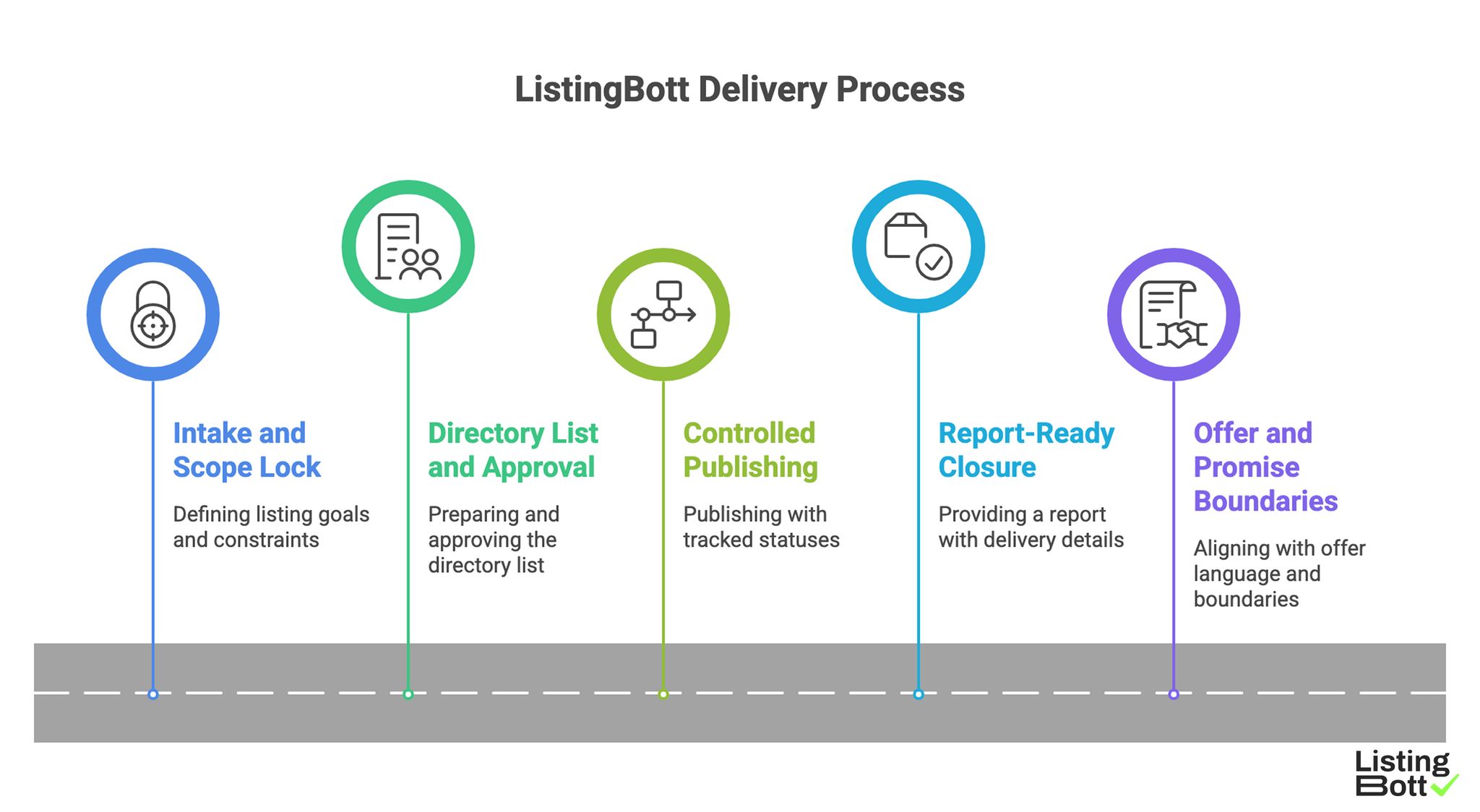

ListingBott provides a structured delivery flow: intake through a client form, directory-list preparation and approval, controlled publishing, and report handoff.

1) Intake and scope lock

The workflow starts with complete intake so listing goals and constraints are defined before execution.

2) Directory list and approval step

A proposed directory list is prepared and approved before full publishing, helping avoid low-fit expansion.

3) Controlled publishing with tracking

Publishing runs with tracked statuses, which improves issue handling and cycle visibility.

4) Report-ready closure

Delivery includes a report with submitted status, pending items, and next recommendations.

5) Offer and promise boundaries

The current offer language covers a one-time payment model, publication to 100+ directories (per current website language), no hidden extra fees (per current FAQ language), and a possible refund if the process has not started.

ListingBott does not promise guaranteed ranking position, guaranteed traffic by a specific date, guaranteed indexing speed, or third-party platform outcomes.

For DR-goal projects, growth to DR 15 applies only in the qualified setup: a starting DR below 15, an explicit domain growth goal, and an approved directory list.

When teams move from a manual process to a systemized workflow, they often evaluate an automated directory submission tool alongside their governance model.

[Figure: ListingBott Delivery Process]

Proof/results

The strongest listing programs measure both process quality and outcome support. If process metrics are unstable, outcome interpretation is usually unreliable.

KPI ladder for business listing management

| KPI layer | Example KPIs | Purpose | Good signal |
|---|---|---|---|
| Data quality | consistency score, mandatory-field completeness | Validate information integrity | Upward trend each cycle |
| Workflow quality | correction SLA, issue age, approval turnaround | Validate operational control | Lower unresolved backlog |
| Delivery quality | accepted updates, dedupe completion rate | Validate execution reliability | Stable cycle completion |
| Outcome support | referral trend, branded query trend, assisted actions | Validate business contribution | Directional improvement over time |

This ladder prevents teams from over-optimizing vanity metrics.
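The data-quality KPIs are easiest to trust when their formulas are written down. The article does not define them, so the sketch below shows one possible definition: consistency as the share of (listing, field) pairs that exactly match the canonical value, and completeness as the share of mandatory fields that are filled. Both function names are hypothetical.

```python
def consistency_score(canonical: dict, listings: list[dict], fields: list[str]) -> float:
    """Share of (listing, field) pairs matching the canonical value, from 0.0 to 1.0."""
    total = len(listings) * len(fields)
    if total == 0:
        raise ValueError("need at least one listing and one field")
    matches = sum(
        1
        for listing in listings
        for name in fields
        if listing.get(name) == canonical[name]
    )
    return matches / total


def completeness(listing: dict, mandatory_fields: list[str]) -> float:
    """Share of mandatory fields that are present and non-empty."""
    if not mandatory_fields:
        raise ValueError("need at least one mandatory field")
    filled = sum(1 for name in mandatory_fields if listing.get(name))
    return filled / len(mandatory_fields)
```

Exact matching is deliberately strict here; a team that tolerates formatting variants would normalize values before comparing.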

Evidence package each cycle should include

Every cycle should ship with:

- canonical data snapshot version,

- change log by field group,

- issue log with severity and owner,

- before/after quality metrics,

- next-cycle decision list.

This package makes each cycle auditable and easier to improve.

Quality thresholds for scale readiness

| Threshold | Minimum standard before scaling | Why it matters |
|---|---|---|
| Data consistency | Sustained high consistency across priority profiles | Prevents scaled inconsistency |
| Correction latency | Issues resolved within agreed SLA window | Protects trust and response speed |
| Duplicate control | New duplicate rate is low and monitored | Reduces signal conflicts |
| Decision clarity | Reports include owner + due-date actions | Keeps optimization active |

Scaling without these thresholds usually multiplies operational noise.
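These thresholds can be turned into an explicit gate that lists what is still blocking a scale-up. The sketch below is an assumption-heavy illustration: the article gives no numeric limits, so every constant is a placeholder to be replaced with the team's agreed values, and `CycleMetrics` and `scale_blockers` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CycleMetrics:
    consistency: float          # 0.0-1.0, share of matching canonical fields
    resolved_within_sla: float  # 0.0-1.0, share of issues closed inside the SLA
    new_duplicate_rate: float   # 0.0-1.0, new duplicates per new listing
    actions_with_owner: float   # 0.0-1.0, report actions with owner + due date


# Placeholder limits: the source gives no numbers, so these are assumptions.
MIN_CONSISTENCY = 0.95
MIN_RESOLVED_WITHIN_SLA = 0.90
MAX_NEW_DUPLICATE_RATE = 0.02
MIN_ACTIONS_WITH_OWNER = 1.0


def scale_blockers(cycles: list[CycleMetrics], required_cycles: int = 3) -> list[str]:
    """Return the thresholds that failed in any of the most recent cycles."""
    if len(cycles) < required_cycles:
        return ["not enough cycle history"]
    recent = cycles[-required_cycles:]
    blockers = []
    if any(c.consistency < MIN_CONSISTENCY for c in recent):
        blockers.append("data consistency")
    if any(c.resolved_within_sla < MIN_RESOLVED_WITHIN_SLA for c in recent):
        blockers.append("correction latency")
    if any(c.new_duplicate_rate > MAX_NEW_DUPLICATE_RATE for c in recent):
        blockers.append("duplicate control")
    if any(c.actions_with_owner < MIN_ACTIONS_WITH_OWNER for c in recent):
        blockers.append("decision clarity")
    return blockers
```

Checking several consecutive cycles rather than only the latest one is what makes the standard "sustained": a single good cycle does not open the gate.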

Interpreting performance without false guarantees

Listing operations can support visibility and conversion pathways, but they do not justify guaranteed ranking outcomes by fixed dates. Teams should evaluate direction, stability, and repeatability rather than chase short-window certainty.

This expectation model aligns operations with realistic market behavior and protects client trust.

Risk-control dashboard for leadership

| Risk area | What leaders should monitor weekly | Escalation trigger |
|---|---|---|
| Data integrity | top inconsistency categories | Repeated critical-field errors |
| Delivery execution | wave completion and issue backlog | Backlog grows 2+ cycles |
| Reporting quality | number of actionable recommendations | Reports with no clear owners |
| Resource load | time spent on recurring corrections | Same root cause repeats monthly |
| Scope governance | out-of-scope requests per cycle | Scope expansion without approval |

Leadership visibility is what keeps listing work strategic instead of reactive.
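An escalation trigger is only useful if it fires the same way every week. As an example, the "Backlog grows 2+ cycles" trigger could be checked as below. This reads the trigger as "the open-issue count rose in each of the last two cycle-to-cycle comparisons", which is an interpretation rather than something the table spells out; `backlog_growing` is a hypothetical helper.

```python
def backlog_growing(backlog_by_cycle: list[int], cycles: int = 2) -> bool:
    """True when the backlog rose in each of the last `cycles` cycle-to-cycle steps.

    `backlog_by_cycle` holds the open-issue count at the end of each cycle,
    oldest first. With too little history the trigger stays silent.
    """
    if len(backlog_by_cycle) < cycles + 1:
        return False
    recent = backlog_by_cycle[-(cycles + 1):]
    return all(later > earlier for earlier, later in zip(recent, recent[1:]))
```

A history of 10, 12, 15 open issues would escalate, while 10, 12, 11 would not, because the last cycle reduced the backlog.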

Implementation checklist

Use this SOP rollout to operationalize business listing management in a repeatable way.

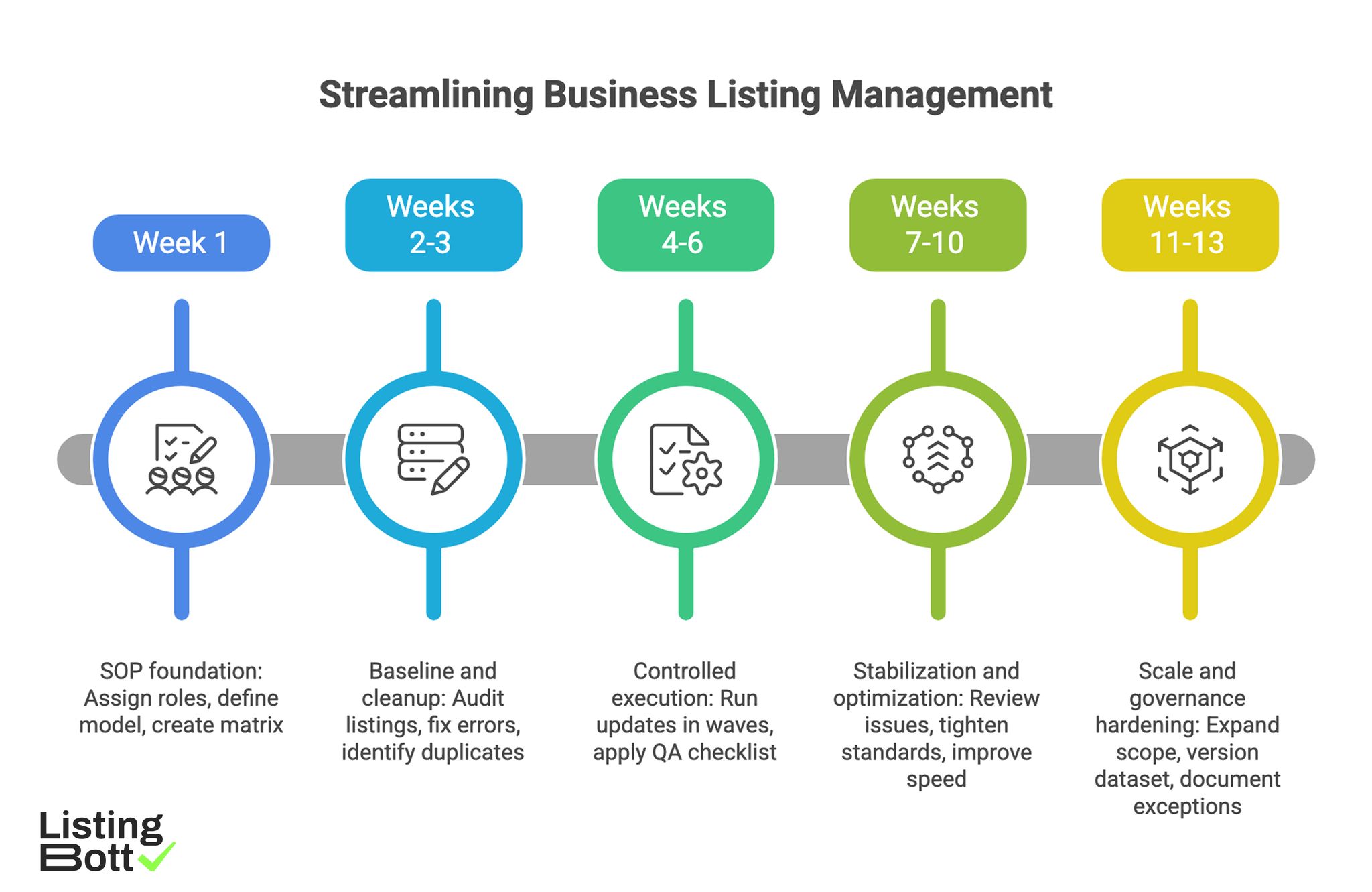

Phase 1: SOP foundation (week 1)

- Assign accountable owner and supporting roles.

- Define canonical field model and source-of-truth doc.

- Create change-control matrix by risk level.

- Establish issue severity taxonomy.

- Define reporting template and ownership format.

Phase 2: Baseline and cleanup (weeks 2-3)

- Audit current listings against canonical dataset.

- Flag and fix critical customer-facing errors first.

- Identify duplicate records and conflict clusters.

- Start change log with editor + reason + timestamp.

- Capture baseline process and outcome KPIs.
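For the change log started in this phase, a fixed entry shape keeps editor, reason, and timestamp from being skipped. The sketch below is one possible shape, not a required schema: `ChangeLogEntry`, its extra fields (`field_group`, `old_value`, `new_value`), and `log_change` are hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class ChangeLogEntry:
    editor: str
    reason: str
    field_group: str   # e.g. "Contact" or "Location" from the canonical model
    old_value: str
    new_value: str
    # Timezone-aware UTC timestamp, filled in automatically at creation time.
    timestamp: datetime = field(default_factory=lambda: datetime.now(timezone.utc))


def log_change(log: list, **details) -> ChangeLogEntry:
    """Append an entry to the log, rejecting blank editor or reason values."""
    entry = ChangeLogEntry(**details)
    if not entry.editor.strip() or not entry.reason.strip():
        raise ValueError("editor and reason are mandatory")
    log.append(entry)
    return entry
```

Rejecting blank reasons at write time is what turns "please log your changes" into an enforced rule.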

Phase 3: Controlled execution (weeks 4-6)

- Run updates in planned waves.

- Apply the QA checklist before publishing each wave.

- Track correction turnaround and unresolved issue age.

- Keep approval records for high-risk updates.

- Close each wave with action-focused report summary.

Phase 4: Stabilization and optimization (weeks 7-10)

- Review recurring issues by root cause.

- Tighten field standards where drift persists.

- Improve approval and escalation speed.

- Adjust workload by impact-weighted priority.

- Remove low-value recurring tasks.

Phase 5: Scale and governance hardening (weeks 11-13)

- Expand scope only after threshold checks pass.

- Keep canonical dataset versioned per cycle.

- Validate that reporting drives owner actions.

- Document SOP exceptions and lessons learned.

- Publish quarterly roadmap based on measured trends.

Weekly operator checklist

- Check critical fields across priority profiles.

- Resolve urgent customer-impacting issues.

- Update change log and issue log.

- Verify no duplicate regressions.

- Confirm owners and due dates for open actions.
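The duplicate check in the weekly list can be partly automated by grouping listings on a normalized key. The sketch below is a rough heuristic under stated assumptions: it treats two listings as likely duplicates when their normalized name and the last 10 phone digits match (a North American-style assumption), and the `id`, `business_name`, and `phone` keys are hypothetical.

```python
import re
from collections import defaultdict


def dedupe_key(listing: dict, phone_digits: int = 10) -> tuple[str, str]:
    """Build a comparison key from a normalized name and trailing phone digits."""
    # Lowercase the name and collapse punctuation/whitespace to single spaces.
    name = re.sub(r"[^a-z0-9]+", " ", listing["business_name"].lower()).strip()
    # Keep digits only; the trailing slice drops a leading country code.
    digits = re.sub(r"\D", "", listing["phone"])
    return name, digits[-phone_digits:]


def find_duplicates(listings: list[dict]) -> list[list]:
    """Return groups of listing ids that share the same dedupe key."""
    groups = defaultdict(list)
    for listing in listings:
        groups[dedupe_key(listing)].append(listing["id"])
    return [ids for ids in groups.values() if len(ids) > 1]
```

Groups returned here are candidates for manual review, not confirmed duplicates; two branches can legitimately share a name and differ only in details this key ignores.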

[Figure: Streamlining Business Listing Management]

Common SOP mistakes and fixes

| Mistake | Operational consequence | Immediate fix |
|---|---|---|
| No change log discipline | Unknown cause of data drift | Enforce mandatory change logging |
| Approval skipped for high-risk edits | Error propagation | Apply risk-based approval gate |
| KPI overload | Team loses focus on priorities | Keep KPI ladder concise and tiered |
| Reporting with no owner actions | Repeated unresolved issues | Require owner + due date in every recommendation |
| Scaling before consistency | Volume amplifies errors | Gate scale with defined thresholds |

FAQ

1) What is the first step in business listing management?

Create a canonical source-of-truth dataset and assign one accountable owner.

2) How do I prevent listing data drift over time?

Use change-control approvals, weekly QA checks, and mandatory change logs.

3) Which metrics matter most at the start?

Track consistency score, correction SLA, issue age, and duplicate rate before expanding outcome metrics.

4) When should teams scale listing operations?

Only after consistency and correction thresholds remain stable across multiple cycles.

5) Can a listing SOP guarantee ranking gains?

No. It improves reliability and decision quality, but fixed-date ranking guarantees are not realistic.

6) How often should SOPs be updated?

Review monthly for operational tuning and quarterly for structural changes.

Final takeaway

Business listing management becomes scalable when it is governed like a process, not treated like scattered profile edits. A clear SOP with change control, QA gates, and KPI discipline turns listing work into a reliable growth-support operation.