Table of Contents

- The STARTUP-6 Scoring Model

- Implementation Checklist

- Startup-specific Risk Controls

- Common Mistakes

- 90-Day Startup Listing Plan

- FAQ


Quick Answer

Startup directory submission services work best when teams prioritize channel quality and lifecycle control, not raw submission volume. In 2026, the strongest results usually come from a focused rollout that combines high-fit startup directories, consistent profile governance, and recurring quality checks.

A practical sequence is:

- build one canonical startup profile baseline,

- score channels before submission,

- launch a controlled first wave,

- run QA and correction cycles,

- expand only when maintenance load stays stable.

This approach prevents the common pattern where startups publish widely but cannot keep listings accurate over time.

Why Startup Submission Strategy Matters in 2026

Early-stage teams use directories for more than backlinks. They use them for discovery, trust validation, launch momentum, and distribution support. Buyers, investors, partners, and talent often encounter startup profiles across multiple ecosystems before they visit the main website.

That changes the quality standard. If listings are inconsistent, outdated, or generic, discovery value drops quickly. Teams usually see four failure patterns:

- unclear product positioning across channels,

- weak destination mapping from listing to landing page,

- duplicate profiles and stale records,

- rising maintenance burden without measurable output.

So submission should be treated as an operating workflow, not as one batch task.

For teams exploring article submission sites, local citation submission, and global channels together, process separation is critical. Each track should have clear goals and quality rules.

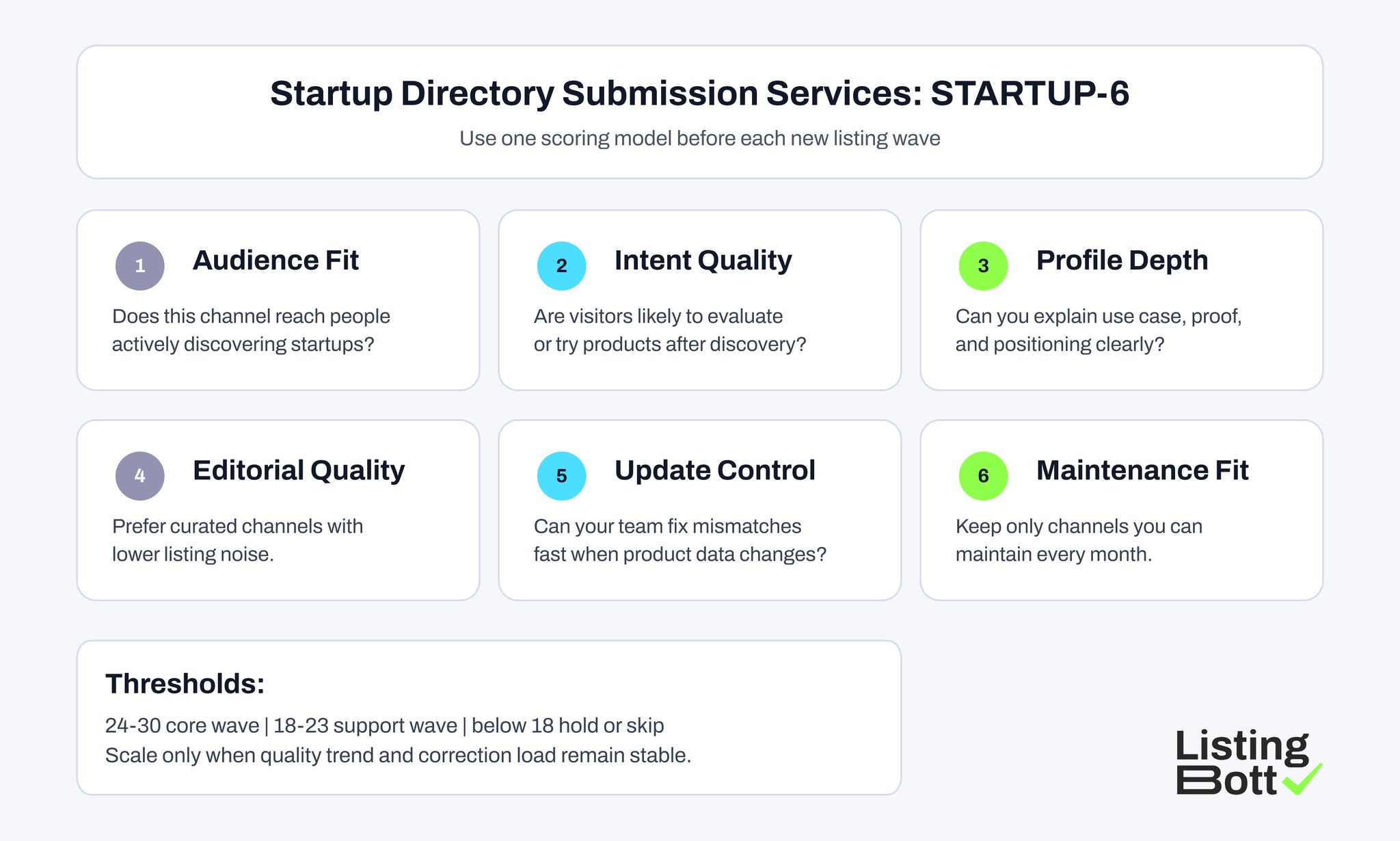

The STARTUP-6 Scoring Model

Use this model to choose startup directory submission services and channel portfolios.

| Factor | Practical question | Why it matters | Score (1-5) |
| --- | --- | --- | --- |
| Startup audience fit | Does the platform reach users who actively discover startup products? | improves relevance | 1-5 |
| Intent quality | Is user intent close to trial, signup, or deeper evaluation? | supports conversion quality | 1-5 |
| Profile depth | Can listings show use case, positioning, and trust proof clearly? | improves qualification | 1-5 |
| Editorial quality | Is the channel curated enough to avoid low-quality noise? | protects context quality | 1-5 |
| Update control | Can corrections and updates be applied quickly? | reduces drift risk | 1-5 |
| Maintenance cost | Can this channel be maintained with current team capacity? | protects scalability | 1-5 |

Threshold guidance:

- 24-30: core wave,

- 18-23: support wave,

- below 18: hold or skip.

This framework helps teams evaluate SEO submission opportunities against consistent criteria instead of assumptions.
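
The scoring and threshold rules above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed tool: the factor keys, the `classify_channel` function, and the example scores are all hypothetical. It also assumes that a higher maintenance-cost score means the channel is easier to maintain, so that higher totals are always better.

```python
# The six STARTUP-6 factors; key names are illustrative assumptions.
FACTORS = (
    "audience_fit",
    "intent_quality",
    "profile_depth",
    "editorial_quality",
    "update_control",
    "maintenance_cost",  # assumed: 5 = easy to maintain, 1 = costly
)


def classify_channel(scores: dict[str, int]) -> tuple[int, str]:
    """Sum six 1-5 factor scores and map the total to a wave."""
    missing = [f for f in FACTORS if f not in scores]
    if missing:
        raise ValueError(f"missing factor scores: {missing}")
    for factor in FACTORS:
        if not 1 <= scores[factor] <= 5:
            raise ValueError(f"{factor} must be between 1 and 5")

    total = sum(scores[f] for f in FACTORS)
    if total >= 24:
        wave = "core"      # 24-30
    elif total >= 18:
        wave = "support"   # 18-23
    else:
        wave = "hold"      # below 18: hold or skip
    return total, wave


# Hypothetical scores for an illustrative channel, not real ratings.
example = {
    "audience_fit": 5,
    "intent_quality": 4,
    "profile_depth": 4,
    "editorial_quality": 4,
    "update_control": 3,
    "maintenance_cost": 4,
}
total, wave = classify_channel(example)
print(total, wave)  # 24 core
```

Because every factor is scored from 1 to 5, totals range from 6 to 30, so the 24 and 18 cutoffs correspond to averaging 4 and 3 per factor.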

Startup Directory Submission Services: STARTUP-6

Best-fit Listing Platforms for Startup Directory Submission Services

This set combines startup-native launch ecosystems and high-intent software discovery channels.

| Platform | URL | Why it is a best fit | Ideal company profile | Submission note |
| --- | --- | --- | --- | --- |
| Product Hunt | https://www.producthunt.com/ | High launch visibility and early adopter discovery behavior | product-led startups launching new tools | prepare launch messaging and proof assets first |
| BetaList | https://betalist.com/ | Early startup exposure channel with discovery-first users | pre-seed and seed startups | keep positioning concise and problem-driven |
| F6S | https://www.f6s.com/ | Startup ecosystem exposure for founders and programs | accelerator-linked and fundraising-stage startups | keep company status and details current |
| Crunchbase | https://www.crunchbase.com/ | Strong entity and company profile visibility | funded startups and B2B products | ensure profile fields remain accurate over time |
| Wellfound | https://wellfound.com/ | Startup ecosystem trust and profile visibility layer | early-stage teams hiring and scaling | align company profile with latest product positioning |
| G2 | https://www.g2.com/ | High-intent software comparison behavior | B2B SaaS and tool startups | complete category mapping and profile depth fields |
| Capterra | https://www.capterra.com/ | Mid-funnel buyer research platform for software evaluation | startups selling workflow software | keep pricing and plan context aligned |
| AlternativeTo | https://alternativeto.net/ | Useful for discovery via comparison intent | mature startups in known categories | keep alternative positioning clean and honest |
| SaaSHub | https://www.saashub.com/ | SaaS-oriented discovery and competitor context | startup SaaS products | optimize tags and use-case descriptions |
| AppSumo Marketplace | https://appsumo.com/marketplace/ | Discovery channel for new software buyers and early adopters | startup tools seeking traction | maintain offer clarity and onboarding path |

How to apply this list:

- select 5-6 channels for the first wave,

- keep 3-4 channels in support queue,

- expand only after quality gates stay stable.

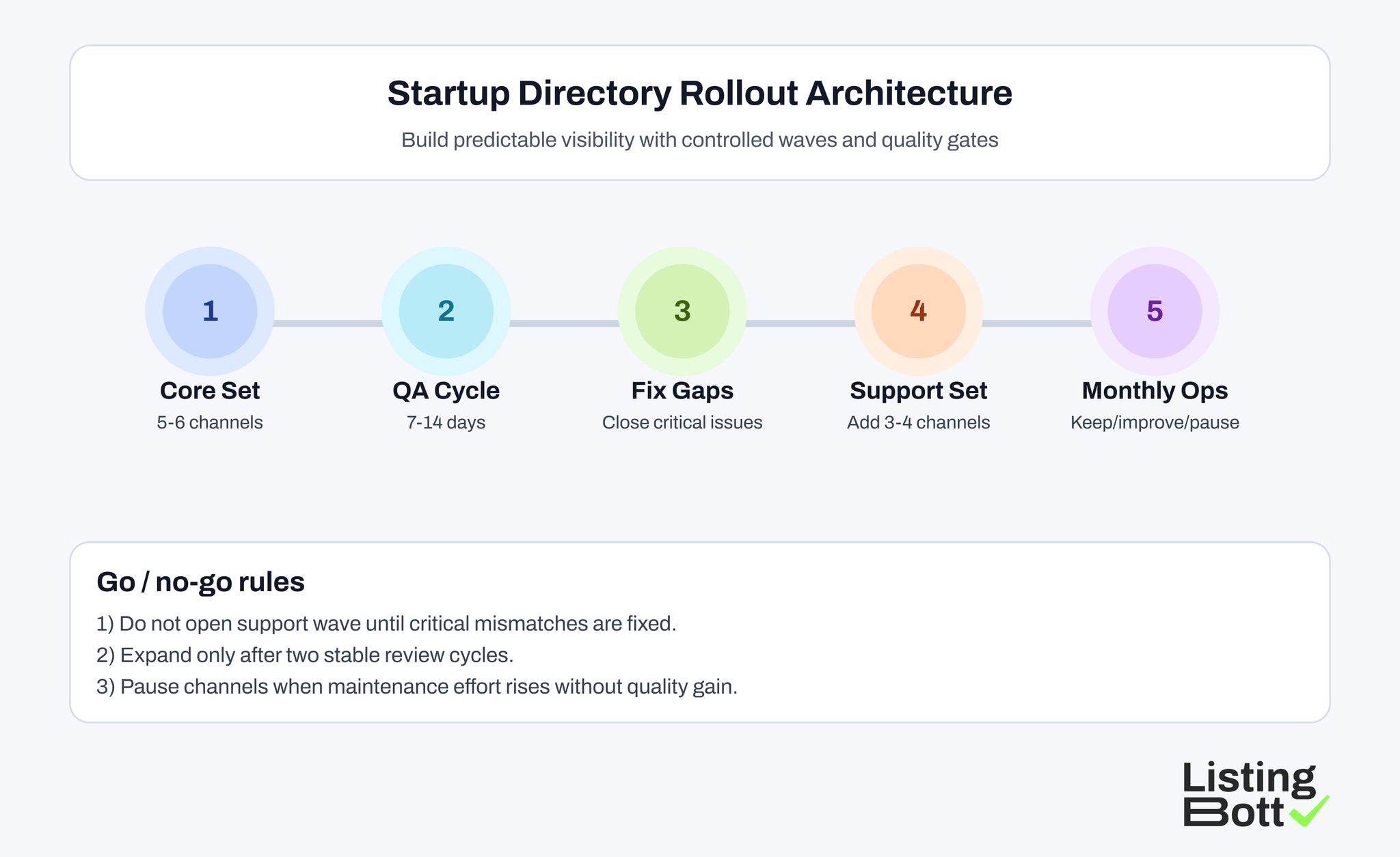

Core Wave Architecture for Startup Teams

A strong first wave is usually structured like this:

- 2 startup-native channels,

- 1 launch/discovery community,

- 2 high-intent software comparison channels.

This gives sufficient visibility diversity without overwhelming small teams.

Practical first-wave checklist:

- finalize canonical profile source,

- map one core message per audience segment,

- assign destination URL per platform,

- assign launch and correction owners,

- set post-launch QA checkpoint for days 7-14.

This is also the point where teams decide whether local directory submission should run in parallel or later. If local intent is important, run it as a separate track with distinct KPIs.

Service Models for Startup Submission Execution

Teams selecting startup directory submission services usually choose one of four models.

| Model | Best for | Main risk | What to validate |
| --- | --- | --- | --- |
| Founder-managed manual process | very small scope launches | inconsistency during product iteration | owner bandwidth and checklist rigor |
| Freelancer-led execution | short launch windows | variable quality standards | QA workflow and correction ownership |
| Agency-managed execution | teams outsourcing throughput | process quality differs by provider | channel scoring logic and reporting clarity |
| Tool-led controlled workflow | teams scaling repeatable submissions | weak outcomes without governance | baseline rules, QA cadence, and accountability |

The best model is usually the one that can maintain listing quality for months, not the one that publishes fastest.

Startup Directory Rollout Architecture

Implementation Checklist

Step 1: define canonical startup profile pack

Include:

- short and long product description,

- category and use-case taxonomy,

- pricing and trial context,

- trust and proof elements,

- destination URL mapping.

Step 2: score candidate channels

Apply STARTUP-6 and classify each platform as core, support, or hold.

Step 3: prepare reusable assets

Before launch, prepare:

- listing copy variants,

- screenshots and logo set,

- proof snippets,

- platform-specific description constraints,

- QA checklist.

Step 4: launch core wave

Publish to core channels and record:

- submission date,

- approval state,

- live profile URL,

- assigned owner.
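
One way to keep the four fields above together is a small record type per submission. The sketch below is only an illustration: the `SubmissionRecord` class, the approval-state labels, and the sample entries (dates, URLs, owners) are assumptions, not part of any platform's API.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class SubmissionRecord:
    """One row of the core-wave submission log."""
    platform: str
    submission_date: date
    approval_state: str               # assumed labels: "pending", "approved", "rejected"
    live_profile_url: Optional[str]   # None until the listing is live
    owner: str


def pending_platforms(records: list[SubmissionRecord]) -> list[str]:
    """Return platforms whose listings are still awaiting approval."""
    return [r.platform for r in records if r.approval_state == "pending"]


# Illustrative entries only; dates, URLs, and owners are placeholders.
log = [
    SubmissionRecord("Product Hunt", date(2026, 1, 12), "approved",
                     "https://example.com/product-hunt-profile", "alex"),
    SubmissionRecord("BetaList", date(2026, 1, 14), "pending", None, "sam"),
]

print(pending_platforms(log))  # ['BetaList']
```

A spreadsheet with the same columns works equally well; the point is that every submission has a date, a state, a live URL, and a named owner before QA starts.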

Step 5: run post-launch QA

Validate:

- profile consistency,

- category and tag alignment,

- URL correctness,

- duplicate risk,

- completeness level.
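
Several of these checks reduce to comparing each live listing with the canonical profile pack, field by field. The following is a minimal sketch under that assumption. The `qa_listing` function, the field names, and the sample data are hypothetical, and duplicate detection is left out because it needs a search across platforms rather than a field comparison.

```python
def qa_listing(canonical: dict[str, str], listing: dict[str, str],
               required_fields: tuple[str, ...]) -> list[str]:
    """Compare a live listing with the canonical profile, field by field."""
    issues = []
    for field in required_fields:
        value = listing.get(field, "")
        if not value:
            issues.append(f"missing: {field}")     # completeness check
        elif value != canonical.get(field):
            issues.append(f"mismatch: {field}")    # consistency check
    return issues


# Hypothetical profile data for illustration.
canonical = {
    "name": "Acme Notes",
    "category": "Productivity",
    "destination_url": "https://example.com/notes",
}
live_listing = {
    "name": "Acme Notes",
    "category": "Note Taking",
    "destination_url": "",
}

issues = qa_listing(canonical, live_listing,
                    ("name", "category", "destination_url"))
print(issues)  # ['mismatch: category', 'missing: destination_url']
```

In practice the expected destination URL often differs per platform, so the canonical values passed in would come from the destination URL mapping rather than from one shared field.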

Step 6: close critical issues first

Do not launch support wave until critical mismatches are resolved.

Step 7: open support wave selectively

Add support channels only when two consecutive review cycles remain stable.
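
The gate can be expressed as a check on the most recent review cycles. This is a sketch only: `can_expand` is a hypothetical helper, and what counts as a "stable" cycle is left to the team's own QA criteria.

```python
def can_expand(cycle_results: list[bool], required_stable: int = 2) -> bool:
    """True when the most recent `required_stable` review cycles were all stable.

    `cycle_results` is ordered oldest to newest; each entry is True if that
    review cycle was judged stable.
    """
    if len(cycle_results) < required_stable:
        return False
    return all(cycle_results[-required_stable:])


print(can_expand([False, True, True]))  # True: last two cycles stable
print(can_expand([True, True, False]))  # False: latest cycle unstable
print(can_expand([True]))               # False: not enough history yet
```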

Step 8: run monthly keep/improve/pause decisions

Review each channel using:

- quality trend,

- correction burden,

- intent quality,

- contribution potential.

This process ensures that teams add image submission sites and international channels only once core execution quality is under control.
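
The monthly decision from Step 8 can be made explicit with a simple rule. The sketch below is one possible rule, not a recommended standard. The `monthly_decision` function, the signal names, and the cutoffs (no weak signals means keep, one or two means improve, three or more means pause) are all assumptions for illustration.

```python
def monthly_decision(signals: dict[str, bool]) -> str:
    """Map review signals to keep / improve / pause.

    `signals` maps each review criterion to True (healthy) or False (weak).
    The cutoffs below are illustrative assumptions.
    """
    weak = [name for name, healthy in signals.items() if not healthy]
    if not weak:
        return "keep"
    if len(weak) <= 2:
        return "improve"
    return "pause"


# Hypothetical review of one channel.
review = {
    "quality_trend": True,
    "correction_burden": False,   # corrections are taking too much effort
    "intent_quality": True,
    "contribution_potential": True,
}
print(monthly_decision(review))  # improve
```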

KPI Board for Startup Listing Operations

Use a KPI board that supports decisions and de-prioritization.

| KPI | Why it matters | Healthy signal | Risk signal |
| --- | --- | --- | --- |
| Listing consistency rate | confirms profile data integrity | 95%+ and stable | recurring mismatch trend |
| Correction closure speed | shows operational discipline | predictable closure cycle | aging backlog |
| Duplicate rate | tracks profile hygiene | low and declining | repeated duplicate creation |
| Channel intent quality | tests traffic relevance | stable high-intent behavior | low-intent visits dominate |
| Maintenance load ratio | checks scalability | controlled effort per cycle | effort rises without quality gain |

This board makes decisions around SEO submission and support channels evidence-based.
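
Two of these KPIs are simple ratios and can be computed directly from QA counts. The following sketch assumes the team records how many listings were checked, how many matched the canonical profile, and how many duplicates were found. The function names are hypothetical, and only the 95% floor comes from the table above. Judging whether a rate is "stable" still requires comparing several cycles.

```python
def consistency_rate(checked: int, consistent: int) -> float:
    """Percentage of checked listings that match the canonical profile."""
    if checked == 0:
        return 0.0
    return 100 * consistent / checked


def duplicate_rate(total_profiles: int, duplicates: int) -> float:
    """Percentage of known profiles that are duplicates."""
    if total_profiles == 0:
        return 0.0
    return 100 * duplicates / total_profiles


def consistency_signal(rate: float, healthy_floor: float = 95.0) -> str:
    """Label a single cycle's consistency rate against the 95% floor."""
    return "healthy" if rate >= healthy_floor else "risk"


# Hypothetical counts from one review cycle.
rate = consistency_rate(checked=20, consistent=19)
print(rate, consistency_signal(rate))              # 95.0 healthy
print(duplicate_rate(total_profiles=20, duplicates=1))  # 5.0
```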

Startup-specific Risk Controls

1) Launch-message drift control

Startups often iterate messaging quickly. Keep listing copy synchronized to avoid conflicting value statements.

2) Destination mapping control

Every channel should map to the most relevant landing page, which is not always the homepage.

3) Fast correction ownership

Assign clear SLAs for profile corrections.

4) Duplicate suppression routine

Run duplicate checks after launch waves and major brand or product naming updates.

5) Expansion gate

Require two stable review cycles before adding more channels.

6) Quarterly channel pruning

Pause channels with high maintenance effort and low contribution quality.

These controls prevent maintenance debt from overtaking distribution value.

When to Add Local and International Tracks

Many startup teams eventually combine startup-native directories with broader distribution paths such as local directory submission and international search engine submission. That expansion can work, but only after the core startup track is stable.

A practical sequencing rule:

- stabilize startup-core channels first,

- add local track only for pages with clear location intent,

- add international track only when localization ownership is defined,

- keep each track measured separately.

Local track use case

Local directory submission is useful when the startup has:

- region-specific landing pages,

- city or country pricing variations,

- sales workflows tied to local availability,

- local proof assets such as regional customers or partners.

Without those signals, local distribution often creates maintenance work with weak intent quality.

International track use case

International search engine submission makes sense when:

- core messaging is already translated or localized,

- product support and onboarding can handle those regions,

- compliance and policy checks are documented,

- correction ownership exists for each market.

If those conditions are not in place, international expansion usually increases inconsistency risk across profile fields, screenshots, and destination mapping.

Split KPI views by track

Avoid one blended dashboard for all channels. Use a split board:

- startup-core: launch momentum and high-intent product discovery,

- local: geo relevance and profile consistency by market,

- international: localization accuracy and correction velocity by country.

This gives clearer keep/improve/pause decisions and reduces false positives from mixed traffic patterns.
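
A split board amounts to grouping channel metrics by track before averaging. The sketch below illustrates this with a single metric. The `split_board` function, the channel names, and the consistency figures are hypothetical.

```python
from collections import defaultdict
from statistics import mean


def split_board(channels: list[dict]) -> dict[str, float]:
    """Average consistency per track instead of one blended figure."""
    by_track = defaultdict(list)
    for channel in channels:
        by_track[channel["track"]].append(channel["consistency"])
    return {track: mean(values) for track, values in by_track.items()}


# Hypothetical channels and consistency percentages.
channels = [
    {"name": "channel-a", "track": "startup-core", "consistency": 96.0},
    {"name": "channel-b", "track": "startup-core", "consistency": 98.0},
    {"name": "channel-c", "track": "local", "consistency": 90.0},
    {"name": "channel-d", "track": "international", "consistency": 80.0},
    {"name": "channel-e", "track": "international", "consistency": 90.0},
]

board = split_board(channels)
print(board)  # {'startup-core': 97.0, 'local': 90.0, 'international': 85.0}
```

In this example a blended average over all five channels would be 90.8, which hides both the strong startup-core track and the weaker international one.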

Keep Expansion Quality-First

A startup can have strong early performance on startup-focused channels and still fail after over-expansion. The safest path is controlled pacing:

- lock process quality in core channels,

- test one additional track at a time,

- run two stable review cycles before expanding again,

- pause low-signal channels quickly.

This approach protects delivery focus while keeping visibility growth sustainable.

Common Mistakes

1) Submission volume without governance

Large coverage with weak QA creates persistent cleanup work.

2) One generic profile for every platform

Intent and audience differ across channels. Generic copy weakens relevance.

3) Mixing all channels into one metric

If local and global tracks are blended, teams lose decision clarity.

4) Ignoring post-launch QA

Quality decays quickly when listings are not reviewed.

5) No channel retirement policy

Low-value channels remain active and consume resources.

6) Over-reliance on free channels

Free channels can still create significant maintenance costs.

7) Reporting only launch counts

Submission count alone does not reflect business contribution.

90-Day Startup Listing Plan

Days 1-30: foundation

- build profile baseline,

- score channels with STARTUP-6,

- launch core wave,

- assign ownership.

Days 31-60: stabilization

- run QA cycles,

- close critical mismatches,

- resolve duplicates,

- improve weak profiles.

Days 61-90: optimization

- open support wave if gates pass,

- pause weak channels,

- refine destination mapping,

- lock monthly review cadence.

By day 90, the goal is a stable and maintainable startup visibility system, not only a larger channel list.

90-Day Startup Listing Operations Plan

Where ListingBott Fits

ListingBott supports structured directory publication and reporting workflows for teams that need repeatable execution.

Typical flow:

- onboarding details are collected,

- listing scope is approved,

- publication is executed,

- reporting is delivered.

Offer alignment:

- one-time payment model,

- publication to 100+ directories,

- no hidden extra fees,

- refund possible if process has not started.

Promise limits:

- no guaranteed ranking position,

- no guaranteed traffic by a specific date,

- no guaranteed indexing speed,

- no guaranteed outcomes controlled by third-party platforms.

Qualified DR (Domain Rating) statement: DR growth to 15 can be promised only when the starting DR is below 15, the selected goal is domain growth, and the approved listing set is in place.

FAQ: Startup Directory Submission Services

How many channels should a startup launch first?

Most teams should start with 5-6 core channels, then expand only after quality and correction metrics stabilize.

Are startup directories better than general software directories?

They serve different functions. Startup-native channels support discovery and momentum, while software directories can support evaluation intent.

Should local citation submission be included in wave one?

Only when local intent is part of the growth model. Otherwise, run it as a separate track after core startup channels stabilize.

Can startup directory submission services guarantee rankings?

No. Listings support visibility and trust signals, but rankings depend on broader SEO and market conditions.

How often should startup listings be reviewed?

Monthly review is a practical baseline, with faster refreshes after major product or positioning updates.