Table of Contents

- Why this Matters in 2026

- Step-by-step Implementation Checklist

- Common Mistakes in Local Citation Building for SaaS

- 90-Day Operating Plan

- FAQ


Quick Answer

Local citation building for SaaS works when teams treat citations as an operating system for trust and entity consistency, not as a one-time link task.

A practical model is:

- define one canonical company profile source,

- publish only to high-fit citation and discovery platforms,

- validate every live profile after launch,

- run monthly maintenance to prevent drift.

Most teams lose value because profiles become inconsistent after product updates, pricing changes, or branding edits. Citation quality, not submission volume, is what protects long-term outcomes.

Why this Matters in 2026

In 2026, SaaS buyers check multiple external sources before they trust a vendor. They compare directories, map ecosystems, review platforms, and brand references. Search systems and AI answer engines also rely on these external references to interpret entity identity.

That means citation work now affects three layers at once:

- trust layer: does your company identity look reliable across sources,

- discovery layer: can buyers find consistent details where they research,

- conversion layer: do listings route users to the right destination pages.

Citation building for SEO is no longer a technical afterthought. It is part of demand capture and qualification. Conflicting citations create friction in both ranking signals and buyer confidence.

What Counts as a Citation for SaaS Teams

Many SaaS operators think local citations apply only to brick-and-mortar businesses. That is incomplete.

For SaaS, citations include any trusted third-party profile that repeats stable brand identity and destination signals, such as:

- company name and legal/brand variants,

- website URL and key landing paths,

- contact/support details,

- category and product descriptors,

- short proof context (awards, ratings, customer context where available).

So local citation building for SaaS is less about street-level map ranking and more about consistent entity signals across high-quality platforms. Hybrid SaaS businesses with regional sales teams or office locations still get direct local benefits too.

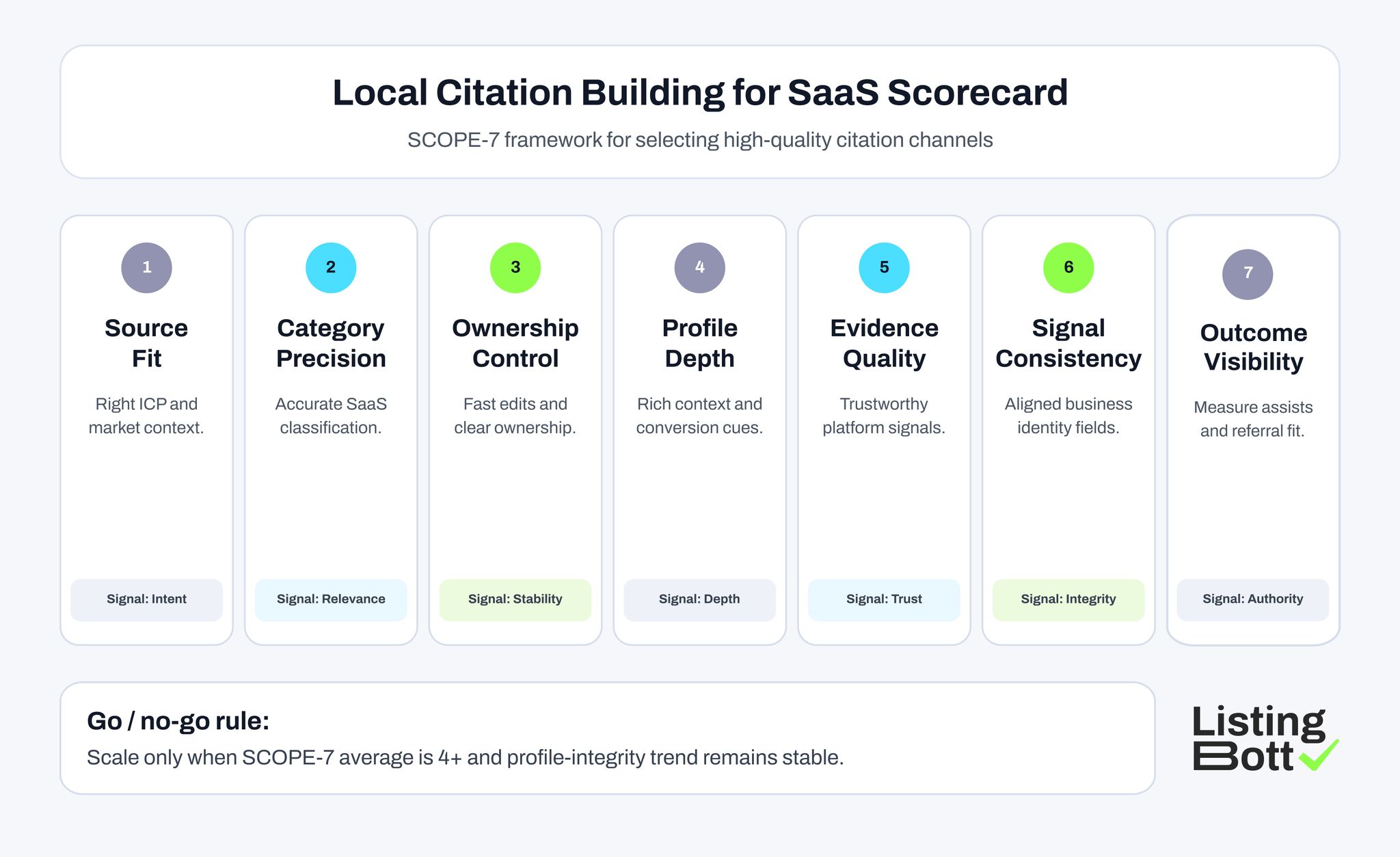

The SCOPE-7 Framework for Local Citation Building Service Quality

Use this framework before you scale any local citation building services workflow.

| Dimension | Core question | Why it matters | Score range |
| --- | --- | --- | --- |
| Source fit | Is this platform relevant to your SaaS ICP? | Filters out low-value submissions | 1-5 |
| Category precision | Can you classify product/use case correctly? | Improves match quality | 1-5 |
| Ownership control | Can your team edit and maintain profile quickly? | Prevents stale data | 1-5 |
| Profile depth | Can you publish meaningful context and proof? | Supports conversion quality | 1-5 |
| Evidence quality | Does platform have trustworthy moderation or structure? | Reduces noise and low-trust exposure | 1-5 |
| Signal consistency | Can core business data stay aligned across channels? | Protects entity integrity | 1-5 |
| Outcome visibility | Can you track referral quality and assist impact? | Enables smart keep/pause decisions | 1-5 |

Scoring rule:

- 28-35: core platform candidates

- 22-27: pilot/test candidates

- below 22: de-prioritize unless strategic reason exists

This approach gives SaaS teams a repeatable selection logic for SEO citation building programs.
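The scoring rule above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed tool: the dimension keys and the function names `scope7_total` and `scope7_tier` are assumptions made for the example. Only the 1-5 range per dimension and the 28/22 thresholds come from the framework.

```python
# Dimension keys are illustrative names for the seven SCOPE-7 rows.
SCOPE7_DIMENSIONS = (
    "source_fit",
    "category_precision",
    "ownership_control",
    "profile_depth",
    "evidence_quality",
    "signal_consistency",
    "outcome_visibility",
)


def scope7_total(scores: dict) -> int:
    """Sum the seven dimension scores, each expected in the 1-5 range."""
    missing = [dim for dim in SCOPE7_DIMENSIONS if dim not in scores]
    if missing:
        raise ValueError(f"missing dimensions: {missing}")
    for dim in SCOPE7_DIMENSIONS:
        if not 1 <= scores[dim] <= 5:
            raise ValueError(f"{dim} must be between 1 and 5")
    return sum(scores[dim] for dim in SCOPE7_DIMENSIONS)


def scope7_tier(total: int) -> str:
    """Map a total (7-35) to the tier named in the scoring rule."""
    if total >= 28:
        return "core"
    if total >= 22:
        return "pilot"
    return "de-prioritize"


# Hypothetical platform that scores 4 on every dimension (total 28).
example_scores = {dim: 4 for dim in SCOPE7_DIMENSIONS}
print(scope7_tier(scope7_total(example_scores)))  # prints: core
```

Validating the range before summing keeps a mistyped score from silently pushing a platform into the wrong tier.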

Local Citation Building for SaaS Scorecard

Best-Fit Listing Platforms for Local Citation Building for SaaS

Use this as a practical shortlist. It is not a submit-everywhere list.

| Platform | URL | Why this is a fit | Best for | Submission note |
| --- | --- | --- | --- | --- |
| Google Business Profile | https://www.google.com/business/ | Strong trust/entity signal and high visibility in branded checks | SaaS with office presence, hybrid local-market motions | Keep legal name, category, and website path exact |
| Bing Places | https://www.bingplaces.com/ | Useful secondary search ecosystem citation source | SaaS teams wanting broader engine consistency | Mirror core business profile fields from canonical source |
| Apple Business Connect | https://businessconnect.apple.com/ | Adds consistency in Apple ecosystem discovery contexts | SaaS brands with iOS-heavy user base or local offices | Ensure address and contact policies stay synchronized |
| Yelp for Business | https://business.yelp.com/ | Trusted local/business citation layer with user-facing profile exposure | B2B and SMB-oriented SaaS serving local operators | Use clear category selection and accurate landing URL |
| Better Business Bureau | https://www.bbb.org/ | High-trust external profile reference for credibility checks | SaaS teams in reputation-sensitive categories | Keep support/contact and business identity current |
| Crunchbase | https://www.crunchbase.com/ | Strong company-entity reference for tech discovery and validation | Startup and growth-stage SaaS with fundraising/partner visibility | Keep funding, category, and company narrative consistent |
| Capterra | https://www.capterra.com/ | Category-led software discovery with structured profile fields | SaaS products with defined use-case segmentation | Maintain feature and positioning updates after releases |
| G2 | https://www.g2.com/ | Evaluation-stage platform where profile depth and trust context matter | Mid-market and enterprise-oriented SaaS | Align messaging with product reality and review context |
| SaaSHub | https://www.saashub.com/ | Useful alternative/discovery environment for tool comparison | Product-led SaaS with comparison intent traffic | Keep listing descriptions and positioning current |

How to use this platform block

Practical wave design:

- Wave 1: 4-5 core platforms (highest SCOPE-7 scores)

- Wave 2: 2-3 support platforms (citation/trust support)

- Wave 3: 1-2 experiment platforms (controlled tests)

This keeps a local citation building service program focused and maintainable.
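One way to turn the wave design into a repeatable step is to rank scored platforms and fill each wave up to a cap. The sketch below assumes waves are filled strictly by SCOPE-7 total, which simplifies the core/support/experiment split described above. The function name `plan_waves`, the caps, and the placeholder platform names are all hypothetical.

```python
def plan_waves(totals, caps=(5, 3, 2), min_total=22):
    """Rank platforms by SCOPE-7 total and fill waves up to each cap.

    totals: mapping of platform name -> SCOPE-7 total.
    caps: maximum platforms per wave (upper ends of 4-5, 2-3, 1-2).
    min_total: platforms below this total are left out (assumed cutoff).
    """
    eligible = {name: t for name, t in totals.items() if t >= min_total}
    # Highest total first; ties broken alphabetically for stable output.
    ranked = sorted(eligible, key=lambda name: (-eligible[name], name))
    waves, start = [], 0
    for cap in caps:
        waves.append(ranked[start:start + cap])
        start += cap
    return waves


# Placeholder names and totals, not real platform scores.
totals = {"A": 33, "B": 31, "C": 30, "D": 29, "E": 28, "F": 25, "G": 24, "H": 20}
for number, wave in enumerate(plan_waves(totals), start=1):
    print(f"Wave {number}: {wave}")
```

In practice a team may still place a lower-scoring platform in Wave 3 deliberately as a controlled test. The ranking is a starting point, not a rule.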

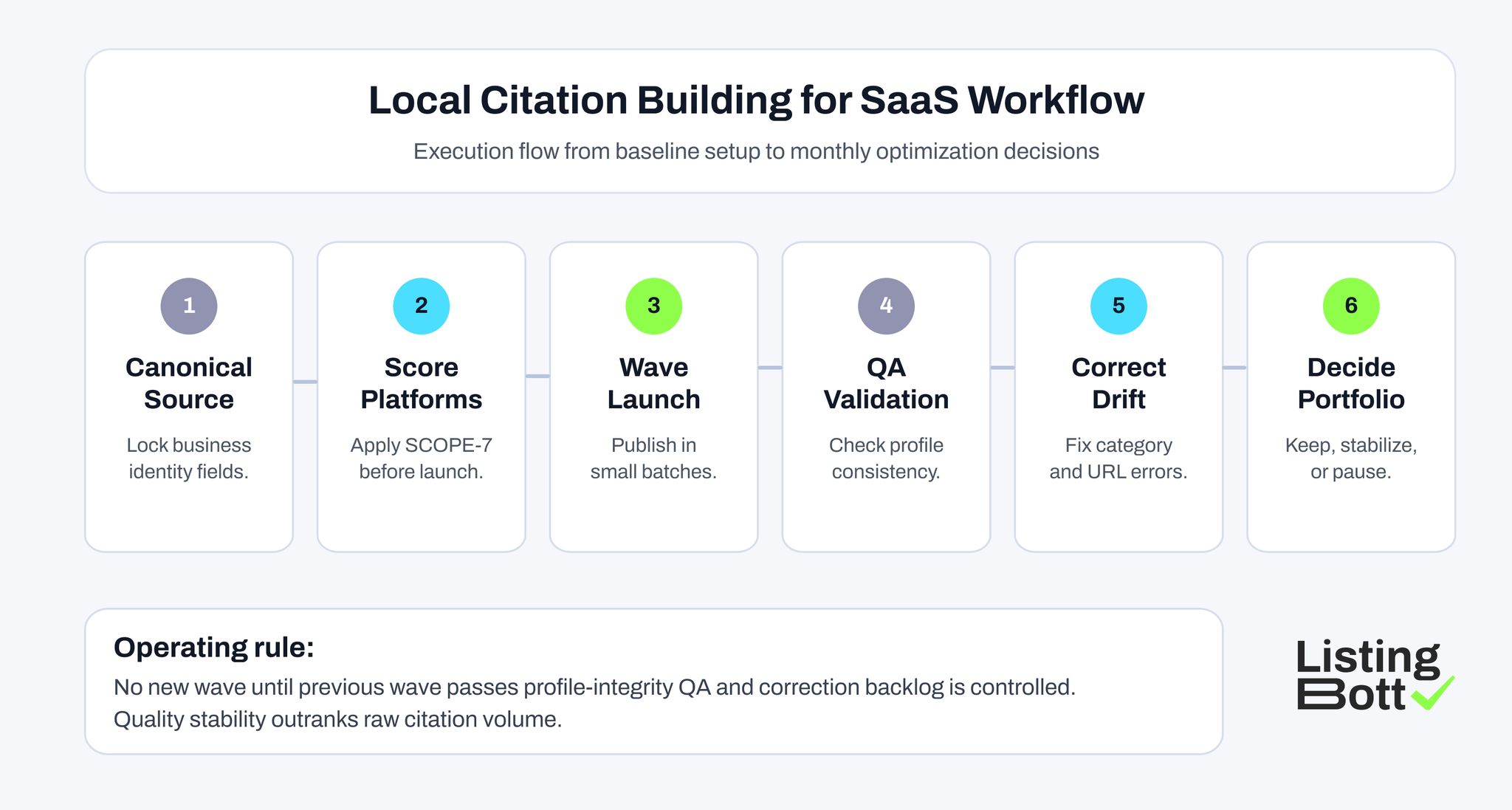

Local Citation Building for SaaS Workflow

Step-by-step Implementation Checklist

Step 1: build a canonical profile source

Before any submission, lock one source file with:

- company name rules (primary + accepted variants),

- website URL standards and UTM policy,

- short and long descriptions,

- category mapping by platform,

- support/contact fields,

- approved media/proof snippets.

Without this source, teams create drift immediately.
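As a rough sketch, the canonical source can live in a small typed structure rather than a loose spreadsheet. Everything below is an assumption for illustration: the class name `CanonicalProfile`, its field names, and the example company. UTM policy and media snippets are left out to keep the example short.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class CanonicalProfile:
    """Single source of truth for citation submissions (illustrative)."""

    primary_name: str
    accepted_variants: tuple
    website_url: str
    short_description: str
    long_description: str
    support_email: str
    # Platform name -> category label approved for that platform.
    category_by_platform: dict = field(default_factory=dict)

    def is_approved_name(self, name: str) -> bool:
        """True if the name is the primary name or an accepted variant."""
        return name == self.primary_name or name in self.accepted_variants

    def category_for(self, platform: str) -> str:
        """Return the mapped category, failing loudly if none is defined."""
        if platform not in self.category_by_platform:
            raise KeyError(f"no category mapped for {platform!r}")
        return self.category_by_platform[platform]


# Hypothetical company data used only to show the shape of the record.
profile = CanonicalProfile(
    primary_name="Example SaaS Inc.",
    accepted_variants=("Example SaaS",),
    website_url="https://example.com",
    short_description="Project tracking for small teams.",
    long_description="Example SaaS helps small teams plan and track work.",
    support_email="support@example.com",
    category_by_platform={"directory-a": "Project Management"},
)
print(profile.is_approved_name("Example SaaS"))
```

Marking the dataclass as frozen means fields cannot be reassigned by accident, which fits the idea of locking the source before any submission.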

Step 2: assign citation roles

Define ownership up front:

- profile owner (data quality),

- submission owner (execution),

- QA owner (post-publish checks),

- analytics owner (outcome tracking).

Local citation building services fail when ownership is shared vaguely and no one closes correction loops.

Step 3: score candidate platforms

Use SCOPE-7 and only advance platforms that meet threshold rules. Do not approve channels because they appear on generic lists.

Step 4: submit in controlled waves

Batch submissions by wave and log:

- submission date,

- status,

- published profile URL,

- rejected fields,

- required corrections.
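The log fields listed above map naturally onto one record per submission. The following is a minimal sketch: the `SubmissionRecord` class, the status values, and the sample entries are assumptions, not a required schema.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List, Optional


@dataclass
class SubmissionRecord:
    """One row in the submission log (illustrative field names)."""

    platform: str
    wave: int
    submitted_on: date
    status: str  # assumed values: "pending", "published", "rejected"
    profile_url: Optional[str] = None
    rejected_fields: List[str] = field(default_factory=list)
    required_corrections: List[str] = field(default_factory=list)


def open_corrections(log):
    """Return the records that still have corrections to close."""
    return [record for record in log if record.required_corrections]


# Hypothetical entries for two placeholder platforms.
log = [
    SubmissionRecord("directory-a", 1, date(2026, 1, 12), "published",
                     profile_url="https://directory-a.example/acme"),
    SubmissionRecord("directory-b", 1, date(2026, 1, 12), "rejected",
                     rejected_fields=["category"],
                     required_corrections=["pick an allowed category"]),
]
for record in open_corrections(log):
    print(record.platform, record.required_corrections)
```

Keeping rejected fields and required corrections on the same record makes it easier to spot repeated root causes later.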

Step 5: run launch QA within 72 hours

Check every live profile for:

- business identity consistency,

- correct landing URLs,

- category accuracy,

- duplicate profile risk,

- outdated copy blocks.
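Most of the launch QA list can be expressed as a field-by-field comparison between the canonical record and what the platform actually shows. The sketch below assumes both are plain dictionaries with hypothetical keys. Duplicate profile risk is not covered, since it usually requires searching the platform itself.

```python
def qa_issues(canonical: dict, live: dict) -> list:
    """Compare a live profile against canonical data and list mismatches."""
    issues = []
    if live.get("name") not in canonical["approved_names"]:
        issues.append("name: not an approved variant")
    if live.get("url") != canonical["url"]:
        issues.append("url: differs from canonical landing URL")
    if live.get("category") != canonical["category"]:
        issues.append("category: differs from mapped category")
    if live.get("description") not in canonical["approved_descriptions"]:
        issues.append("description: outdated or unapproved copy")
    return issues


# Hypothetical canonical data and a live profile with two problems.
canonical = {
    "approved_names": {"Example SaaS Inc.", "Example SaaS"},
    "url": "https://example.com/pricing",
    "category": "Project Management",
    "approved_descriptions": {"Project tracking for small teams."},
}
live = {
    "name": "Example SaaS",
    "url": "https://example.com/old-pricing",
    "category": "Project Management",
    "description": "Task lists for freelancers.",
}
for issue in qa_issues(canonical, live):
    print(issue)
```

An empty result means the profile passed the automated part of the check. The duplicate search still needs a manual pass.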

Step 6: stabilize and maintain monthly

A stable monthly cycle should include:

- integrity checks,

- correction queue cleanup,

- profile refresh for product changes,

- keep/pause decisions by measured value.

This is where local SEO citation building becomes a durable growth asset rather than a noisy one-off project.

Pre-launch data hygiene check

Before Wave 1 starts, run one strict hygiene pass:

- remove duplicate company-name variants that are not approved,

- normalize destination URLs to one canonical path pattern,

- verify legal and brand naming rules by platform,

- confirm support email and contact references are current,

- ensure category labels match actual product positioning.

This single pass reduces rejection loops and shortens correction cycles after publication.
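The URL normalization item in the hygiene pass is easy to automate. The sketch below uses Python's standard `urllib.parse` and applies one assumed policy: force `https`, drop a leading `www.`, drop the port, query string, and fragment, and strip trailing slashes. Your own canonical pattern may differ, so treat these rules as placeholders.

```python
from urllib.parse import urlsplit, urlunsplit


def normalize_url(url: str) -> str:
    """Rewrite a destination URL into one assumed canonical pattern."""
    parts = urlsplit(url.strip())
    host = parts.hostname  # already lowercased, port excluded
    if not host:
        raise ValueError(f"URL needs a scheme and host: {url!r}")
    if host.startswith("www."):
        host = host[len("www."):]
    path = parts.path.rstrip("/")
    # Query and fragment are dropped; tracking parameters are added later
    # according to the UTM policy, not stored in the canonical URL.
    return urlunsplit(("https", host, path, "", ""))


print(normalize_url("http://WWW.Example.com/pricing/?utm_source=directory"))
# prints: https://example.com/pricing
```

Running every stored destination URL through the same function before Wave 1 surfaces variants that would otherwise look like separate destinations.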

Decision Model: Keep, Stabilize, or De-prioritize

After 45-60 days, classify each platform:

Keep

Use when platform shows:

- acceptable data stability,

- strong referral quality,

- measurable assist value,

- manageable maintenance effort.

Stabilize

Use when platform is strategically relevant but operationally weak:

- repeated category mismatch,

- recurring profile edits,

- low-quality referral traffic with improvement potential.

Action: keep in portfolio but run targeted fixes for one cycle.

De-prioritize

Use when platform creates effort without quality contribution:

- high maintenance burden,

- weak buyer intent,

- no measurable assist signal across cycles.

A mature SEO citation building stack always removes low-yield channels.
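The three outcomes can be written as a small decision function. This is one possible reading of the criteria above, with assumed boolean inputs: a platform is kept only when all four "Keep" conditions hold, stabilized when it falls short but is strategically relevant, and de-prioritized otherwise. The class and field names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PlatformReview:
    """Review inputs gathered after 45-60 days (illustrative fields)."""

    data_stable: bool
    referral_quality_strong: bool
    assist_measurable: bool
    maintenance_manageable: bool
    strategically_relevant: bool


def classify(review: PlatformReview) -> str:
    """Return 'keep', 'stabilize', or 'de-prioritize' for one platform."""
    meets_keep_criteria = (
        review.data_stable
        and review.referral_quality_strong
        and review.assist_measurable
        and review.maintenance_manageable
    )
    if meets_keep_criteria:
        return "keep"
    if review.strategically_relevant:
        return "stabilize"
    return "de-prioritize"


# A relevant platform with weak referral quality is fixed, not dropped.
print(classify(PlatformReview(True, False, True, True, True)))  # prints: stabilize
```

Real reviews will rarely reduce to clean booleans, so the function is best used to make the default outcome explicit and leave room for a documented override.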

Metrics that Make Citation Programs Accountable

| KPI | What to monitor | Healthy range | Warning sign |
| --- | --- | --- | --- |
| Profile integrity rate | % profiles with no critical inconsistencies | 95%+ on core platforms | recurring mismatch trend |
| Correction cycle time | days to close citation issues | stable or improving | growing queue age |
| Referral quality score | engagement quality of listing traffic | steady depth and relevance | high bounce + low intent |
| Assisted conversion share | influence on conversion paths | visible contribution trend | flat over multiple cycles |
| Maintenance efficiency | effort per maintained platform | predictable monthly load | effort spikes with low value |

This KPI board prevents vanity metrics like raw listing counts from guiding decisions.
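Two of these KPIs are simple enough to compute directly from the QA and correction records. The sketch below assumes a mapping of platform to count of critical issues and a list of opened/closed date pairs. Both input shapes and both function names are hypothetical.

```python
from datetime import date


def profile_integrity_rate(critical_issues: dict) -> float:
    """Percent of profiles with zero critical inconsistencies."""
    if not critical_issues:
        raise ValueError("no profiles to evaluate")
    clean = sum(1 for count in critical_issues.values() if count == 0)
    return 100 * clean / len(critical_issues)


def mean_correction_days(closed_issues: list) -> float:
    """Average days between opening and closing a citation issue."""
    if not closed_issues:
        raise ValueError("no closed issues to evaluate")
    total_days = sum((closed - opened).days for opened, closed in closed_issues)
    return total_days / len(closed_issues)


# Placeholder data: four profiles, one with a critical mismatch.
issues_by_platform = {"A": 0, "B": 0, "C": 1, "D": 0}
closed = [
    (date(2026, 1, 1), date(2026, 1, 4)),
    (date(2026, 1, 10), date(2026, 1, 11)),
]
print(profile_integrity_rate(issues_by_platform))  # prints: 75.0
print(mean_correction_days(closed))  # prints: 2.0
```

A rate of 75.0 would fall short of the 95%+ range suggested for core platforms, which is the kind of signal the KPI board is meant to surface.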

Common Mistakes in Local Citation Building for SaaS

Mistake 1: treating citations as a one-time launch task

Fix:

- run monthly operating cycles,

- assign explicit owners and SLAs.

Mistake 2: using one generic profile copy everywhere

Fix:

- keep canonical core data,

- adjust category and context language by platform.

Mistake 3: overexpanding from generic lists

Fix:

- score each platform first,

- cap each wave before expansion.

Mistake 4: no correction ledger

Fix:

- track every rejection and mismatch,

- document root cause patterns so they do not repeat.

Mistake 5: no destination URL logic

Fix:

- map platform intent to correct page destination,

- keep links current after pricing/product updates.

How SaaS Teams should Choose Local Citation Building Services

When evaluating local citation building services, ask these practical questions:

- Do they work from a canonical data baseline, or submit from ad-hoc inputs?

- Is there a transparent platform-selection framework, or only mass-volume promises?

- Are post-launch QA checks included with correction cycles?

- Can they state clearly what they do not guarantee?

- Will they provide reporting that supports keep/pause decisions?

If a provider cannot answer these clearly, quality risk is high.

Practical Handoff Rules between Marketing and Operations

Citation quality often drops when teams split responsibility between growth and operations without a clear handoff rule. A simple model works better:

- growth team defines channel priorities and page mapping,

- operations team executes submissions and correction cycles,

- one QA owner signs off each wave before expansion.

This avoids repeated loops where marketing asks for more channels while unresolved data issues remain in the current set. The goal is to keep output quality stable while still moving fast enough for launch timelines.

Where ListingBott Fits

ListingBott is a workflow tool for structured directory and citation execution.

Typical process:

- submit onboarding form,

- review and approve selected listing set,

- publication runs,

- receive a report with outcomes and next actions.

Offer alignment:

- one-time payment model,

- publication to 100+ directories,

- no hidden extra fees,

- refund possible if process has not started.

Promise limits:

- no guaranteed ranking position,

- no guaranteed traffic by a specific date,

- no guaranteed indexing speed,

- no guaranteed outcomes controlled by third-party platforms.

Qualified DR statement: DR growth to 15 is promised only when starting DR is below 15, the selected goal is domain growth, and the approved directory list is in place.

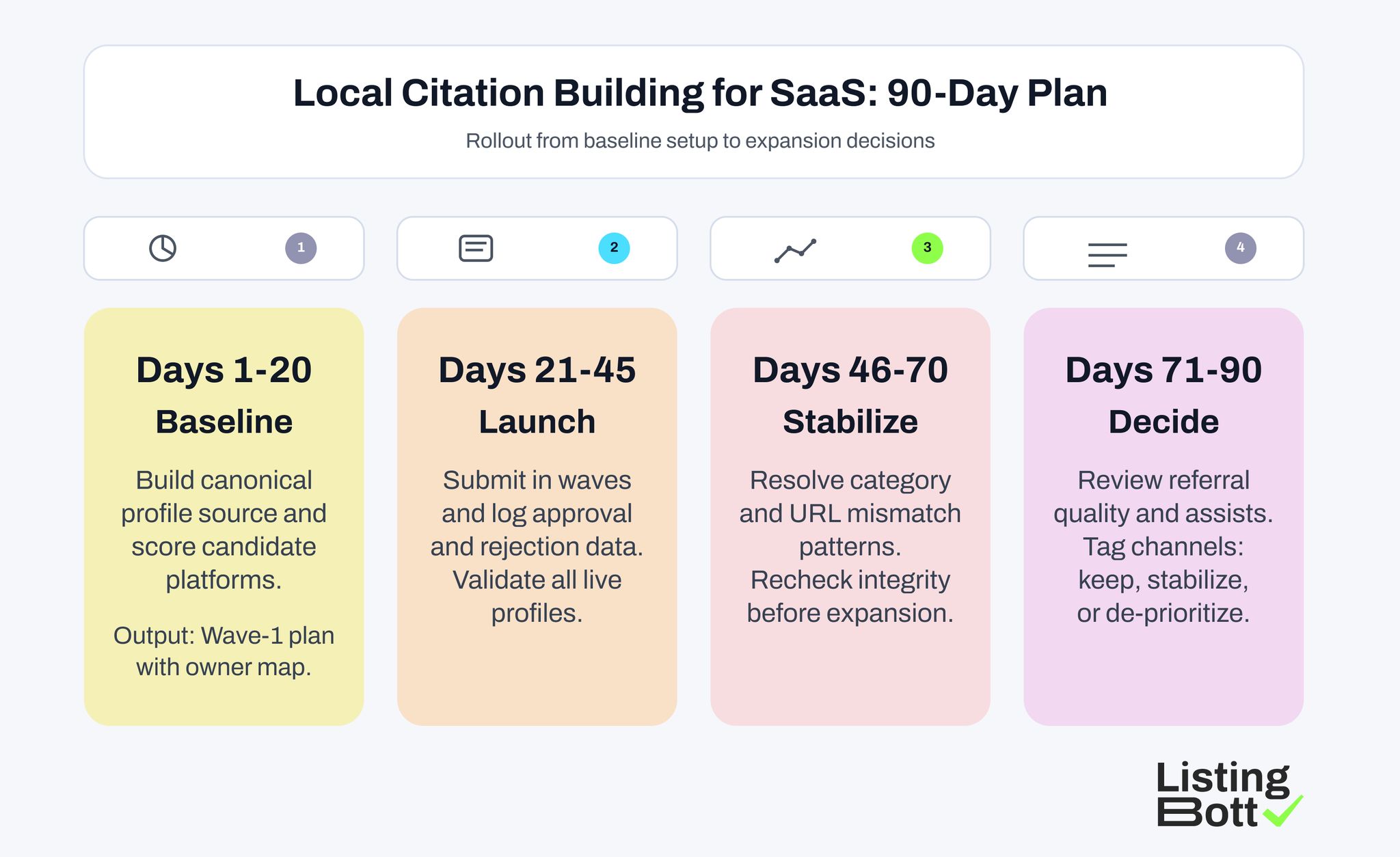

90-Day Operating Plan

Local Citation Building for SaaS: 90-Day Plan

Days 1-20: foundation and prioritization

- finalize canonical profile source,

- score and shortlist first-wave platforms,

- define ownership and QA checklist.

Days 21-45: launch and validation

- publish first wave,

- verify profile integrity,

- resolve initial mismatch issues.

Days 46-70: stabilization and correction control

- run correction sprint,

- refresh weak profiles,

- align destination URLs with intent.

Days 71-90: performance and expansion decisions

- review KPI board,

- classify platforms (keep/stabilize/de-prioritize),

- open next wave only if quality remains stable.
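For teams that track the plan in a script or dashboard, the four phases can be encoded as day ranges. This is a small sketch with an assumed function name, `phase_for_day`. The boundaries and phase names are taken from the plan above.

```python
# (first day, last day, phase name) for each block of the 90-day plan.
PHASES = (
    (1, 20, "foundation and prioritization"),
    (21, 45, "launch and validation"),
    (46, 70, "stabilization and correction control"),
    (71, 90, "performance and expansion decisions"),
)


def phase_for_day(day: int) -> str:
    """Return the plan phase that a given program day falls into."""
    for first, last, name in PHASES:
        if first <= day <= last:
            return name
    raise ValueError("day must be between 1 and 90")


print(phase_for_day(46))  # prints: stabilization and correction control
```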

FAQ: Local Citation Building for SaaS

What is local citation building for SaaS in simple terms?

It is the process of publishing and maintaining consistent SaaS business identity across trusted external platforms so discovery, trust, and entity clarity stay strong.

Is local citation building still useful for SaaS without physical branches?

Yes. Even fully digital SaaS brands benefit from consistent external references on trustworthy platforms used during evaluation and credibility checks.

What is the difference between local citation building and link building?

Citation work prioritizes consistent business identity and structured profile quality. Link building usually focuses on acquiring backlinks from broader content and authority sources.

How many platforms should we start with?

Most SaaS teams should start with 6-10 high-fit platforms in controlled waves, then expand based on measured quality and maintenance capacity.

Should we use every citation list we find online?

No. Use lists for discovery only, then score platforms by fit, quality, and maintainability before submission.