Quick answer

Local business directory submission in Ohio should be managed like an operational risk portfolio, not a simple submission checklist. Different rollout waves carry different correction risk, and unmanaged risk is the main reason expansion slows down later.

A practical Ohio sequence is:

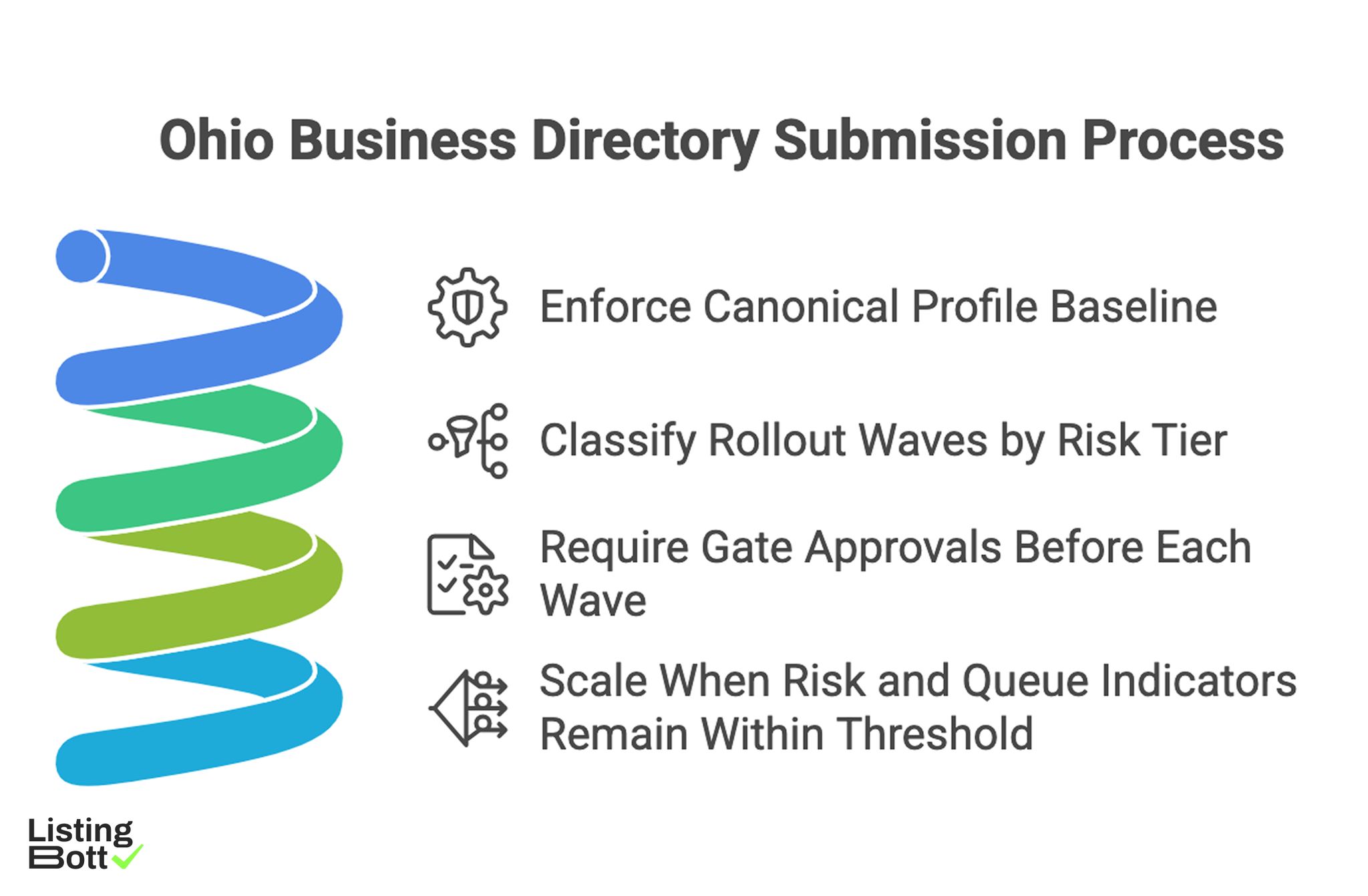

Ohio Business Directory Submission Process

- enforce one canonical profile baseline,

- classify rollout waves by risk tier,

- require gate approvals before each wave,

- scale only when risk and queue indicators remain within threshold.

For broader U.S. planning, see Local business directory submission USA.

Methodology

This page uses a risk-portfolio method that treats each execution wave as a unit with measurable risk and control requirements.

The PORT framework (Prioritization, Ownership, Risk scoring, Throughput)

| Component | Weight | Purpose |

|---|---|---|

| Prioritization quality | 25 | ensures wave order follows readiness and business value |

| Ownership coverage | 25 | keeps gate and correction decisions accountable |

| Risk scoring discipline | 30 | quantifies expansion risk before launch |

| Throughput stability | 20 | keeps correction and reporting capacity aligned with volume |

How to apply PORT:

- score each component from 1-5 every two weeks,

- hold expansion if Risk scoring or Throughput stability falls below 3,

- reopen expansion only after two stable cycles.

This model avoids growth driven by output pressure alone.
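The PORT rules above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the weights and the floor of 3 come from the table and list above, while the function names, the dictionary keys, and the reading of "two stable cycles" (the two most recent cycles must both clear the floor once a hold has occurred) are assumptions made for the example.

```python
from typing import Dict, List

# Weights from the PORT table (they sum to 100).
PORT_WEIGHTS = {
    "prioritization": 25,
    "ownership": 25,
    "risk_scoring": 30,
    "throughput": 20,
}

# Components that trigger a hold when they fall below the floor.
HOLD_COMPONENTS = ("risk_scoring", "throughput")
HOLD_FLOOR = 3
STABLE_CYCLES_TO_REOPEN = 2


def weighted_port_score(scores: Dict[str, int]) -> float:
    """Weighted average of the 1-5 component scores (result is also 1-5)."""
    for name in PORT_WEIGHTS:
        if not 1 <= scores[name] <= 5:
            raise ValueError(f"{name} must be scored from 1 to 5")
    total_weight = sum(PORT_WEIGHTS.values())
    weighted_sum = sum(PORT_WEIGHTS[n] * scores[n] for n in PORT_WEIGHTS)
    return weighted_sum / total_weight


def cycle_is_stable(scores: Dict[str, int]) -> bool:
    """A cycle is stable when neither hold component is below the floor."""
    return all(scores[name] >= HOLD_FLOOR for name in HOLD_COMPONENTS)


def expansion_allowed(history: List[Dict[str, int]]) -> bool:
    """history holds one scorecard per two-week cycle, oldest first.

    ASSUMPTION: after any unstable cycle, expansion reopens only once the
    two most recent cycles are both stable.
    """
    if not history:
        return False
    trailing_stable = 0
    for scores in reversed(history):
        if not cycle_is_stable(scores):
            break
        trailing_stable += 1
    never_held = trailing_stable == len(history)
    return never_held or trailing_stable >= STABLE_CYCLES_TO_REOPEN


weak = {"prioritization": 4, "ownership": 3, "risk_scoring": 2, "throughput": 4}
print(weighted_port_score(weak))  # 3.15
print(expansion_allowed([weak]))  # False: risk_scoring is below 3
```

Note that the weighted total is informational in this sketch. The hold decision depends only on the two named components, so a scorecard with a healthy average can still block expansion.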

Ohio wave risk classes

| Wave class | Typical execution profile | Primary target | Risk signature | Launch condition |

|---|---|---|---|---|

| Class A wave | high-activity rollout zones | establish strict baseline | fast volume + correction bottlenecks | critical issue SLA stable |

| Class B wave | mixed-intensity zones | replicate baseline controls | ownership handoff variance | owner map verified |

| Class C wave | distributed coverage | maintain closure velocity while scaling | delayed issue aging control | closure trend on target |

| Class D wave | long-tail support zones | expand breadth with low debt | hidden backlog growth | backlog pressure below threshold |

Risk-score matrix

| Factor | Low risk (1) | Medium risk (3) | High risk (5) |

|---|---|---|---|

| Data integrity risk | no baseline conflicts in audits | occasional baseline drift | frequent conflicting profile sources |

| Correction risk | SLA stable and queue age low | intermittent SLA misses | recurring high-severity aging |

| Governance risk | all gate artifacts complete | partial documentation gaps | missing gate artifacts for active waves |

| Capacity risk | team load within plan | periodic overload windows | sustained overload and backlog growth |

| Reporting risk | fresh wave-level dashboards | occasional reporting lag | stale dashboards block decisions |

Use weighted score totals to determine whether a wave can launch.

Decision thresholds

| Total weighted risk score | Decision | Required action |

|---|---|---|

| <= 2.2 | Launch | proceed with planned wave |

| 2.3 - 3.2 | Conditional launch | limited scope + additional monitoring |

| >= 3.3 | Hold | run mitigation sprint before launch |

This threshold system creates a repeatable go/no-go mechanism.
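As a rough sketch, the go/no-go mechanism could be expressed like this. The thresholds come from the table above. The factor weights are an assumption: this page does not state them, so the example uses equal weights as a placeholder. The function names and dictionary keys are also hypothetical. The score is rounded to one decimal so that every value falls into exactly one of the three bands.

```python
from typing import Dict

# ASSUMPTION: equal weights. Replace with your own factor weights.
FACTOR_WEIGHTS = {
    "data_integrity": 1,
    "correction": 1,
    "governance": 1,
    "capacity": 1,
    "reporting": 1,
}


def weighted_risk_score(scores: Dict[str, int]) -> float:
    """Weighted average of 1-5 factor scores, rounded to one decimal."""
    for name in FACTOR_WEIGHTS:
        if not 1 <= scores[name] <= 5:
            raise ValueError(f"{name} must be scored from 1 to 5")
    weighted_sum = sum(FACTOR_WEIGHTS[n] * scores[n] for n in FACTOR_WEIGHTS)
    return round(weighted_sum / sum(FACTOR_WEIGHTS.values()), 1)


def launch_decision(score: float) -> str:
    """Map a rounded risk score to the decision bands in the table."""
    if score <= 2.2:
        return "launch"
    if score <= 3.2:
        return "conditional launch"
    return "hold"


wave = {
    "data_integrity": 3,
    "correction": 3,
    "governance": 3,
    "capacity": 3,
    "reporting": 1,
}
score = weighted_risk_score(wave)
print(score, launch_decision(score))  # 2.6 conditional launch
```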

Control stack by workstream

| Workstream | Control requirement | Failure mode | Countermeasure |

|---|---|---|---|

| Data governance | one-source profile policy with audits | conflicting listings across waves | baseline audit before every wave |

| Scope governance | signed inclusion/exclusion set per wave | scope drift after launch | lock scope at gate approval |

| Correction operations | SLA policy + issue-owner matrix | unresolved critical queue during scale | escalation clock and weekly review |

| Reporting operations | weekly wave-level dashboard refresh | delayed risk visibility | fixed reporting cadence |

| Expansion governance | threshold-based launch decisions | calendar-driven wave rollout | PORT scorecard approval step |

Queue taxonomy for risk control

| Queue lane | Entry criteria | Priority logic | Exit target |

|---|---|---|---|

| Lane 1: routine fixes | low-impact profile inconsistencies | process in batch cadence | closed in standard cycle |

| Lane 2: active risk fixes | repeated mismatches in active wave | prioritize over new submissions | closed within weekly SLA |

| Lane 3: blocker fixes | cross-wave integrity breakdown | expansion freeze until resolved | closed before next gate decision |

Queue lanes make escalation explicit and measurable.
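One way to make the lanes operational is a small triage routine, sketched below. The `Issue` fields and the threshold for a "repeated" mismatch (two or more occurrences) are assumptions for illustration; the lane order and the expansion freeze on open lane 3 issues follow the table above.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Issue:
    # All field names are hypothetical; adapt them to your tracker.
    issue_id: str
    cross_wave: bool        # integrity breakdown spanning several waves
    in_active_wave: bool    # affects a wave that is currently running
    repeat_count: int       # how often the same mismatch has recurred
    is_open: bool = True


def assign_lane(issue: Issue) -> int:
    """Return 3 (blocker), 2 (active risk), or 1 (routine)."""
    if issue.cross_wave:
        return 3
    # ASSUMPTION: "repeated" means the mismatch occurred at least twice.
    if issue.in_active_wave and issue.repeat_count >= 2:
        return 2
    return 1


def expansion_frozen(issues: List[Issue]) -> bool:
    """Expansion stays frozen while any lane 3 issue is open."""
    return any(i.is_open and assign_lane(i) == 3 for i in issues)


def work_order(issues: List[Issue]) -> List[Issue]:
    """Open issues sorted so higher lanes are worked first."""
    open_issues = [i for i in issues if i.is_open]
    return sorted(open_issues, key=assign_lane, reverse=True)


queue = [
    Issue("A-1", cross_wave=False, in_active_wave=True, repeat_count=1),
    Issue("A-2", cross_wave=False, in_active_wave=True, repeat_count=3),
    Issue("A-3", cross_wave=True, in_active_wave=True, repeat_count=1),
]
print([i.issue_id for i in work_order(queue)])  # ['A-3', 'A-2', 'A-1']
print(expansion_frozen(queue))                  # True
```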

94-day Ohio rollout plan

| Phase | Days | Focus | Exit criteria |

|---|---|---|---|

| Governance setup | 1-16 | baseline policy, owner matrix, risk scorecard | governance package approved |

| Class A launch | 17-38 | execute first wave with full QA and SLA instrumentation | integrity and closure trend stable |

| Risk mitigation sprint | 39-62 | reduce lane 2/3 queue pressure and reopen rate | risk score back in launch range |

| Controlled class B-D expansion | 63-94 | add waves through threshold approvals | no post-launch KPI regression |

Skipping mitigation usually leads to hidden debt before later waves.

Pre-wave checklist

| Checkpoint | Validation step | Pass condition |

|---|---|---|

| Canonical baseline | random audit across active wave scope | no conflicting source edits |

| Ownership readiness | gate owner + escalation owner assigned | full ownership matrix active |

| SLA stability | high-severity closure trend review | SLA in range for two cycles |

| Reporting freshness | wave-level dashboard timestamp check | no stale KPI panels |

| Artifact completeness | gate packet contains required files | no missing approvals |
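The checklist can also be evaluated mechanically. The sketch below assumes each checkpoint has already been reduced to a pass/fail value by whoever ran the validation step; the key names and the `gate_blockers` helper are hypothetical. A missing result is treated as a failure so that an unchecked item can never pass the gate by omission.

```python
from typing import Dict, List

# Checkpoints from the pre-wave checklist, in table order.
CHECKPOINTS = [
    "canonical_baseline",
    "ownership_readiness",
    "sla_stability",
    "reporting_freshness",
    "artifact_completeness",
]


def gate_blockers(results: Dict[str, bool]) -> List[str]:
    """Return the checkpoints blocking the wave; an empty list means pass."""
    return [name for name in CHECKPOINTS if not results.get(name, False)]


results = {
    "canonical_baseline": True,
    "ownership_readiness": True,
    "sla_stability": False,       # e.g. SLA in range for only one cycle
    "reporting_freshness": True,
    # "artifact_completeness" was never checked, so it counts as failed
}
print(gate_blockers(results))  # ['sla_stability', 'artifact_completeness']
```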

Comparison table

| Execution model | Best for | Benefit | Tradeoff | Ohio suitability |

|---|---|---|---|---|

| Uniform statewide rollout | very small pilots | fast startup | high risk under mixed wave conditions | Low |

| Manual segmented process | limited-scope teams | flexible local adjustments | poor repeatability and high coordination cost | Medium-low |

| Managed risk-governed execution | teams needing controlled speed | clear process with lower internal load | depends on provider transparency | Strong |

| Hybrid governance model | teams with internal QA leadership | strong control plus scalable throughput | requires strict role accountability | Very strong |

Model selection by maturity

| Team profile | Recommended model | Why |

|---|---|---|

| Low internal operations capacity | Managed risk-governed execution | retains controls without high internal burden |

| Moderate maturity with growth pressure | Hybrid governance | supports scale with explicit oversight |

| High process maturity | Hybrid or software-led | enables detailed internal optimization |

| Persistent queue instability | Managed pilot + reset | restores stable baseline first |

Weekly KPI board

| KPI | Operational meaning | Expansion stop trigger |

|---|---|---|

| Wave integrity pass rate | quality stability by active wave | sustained decline in any active wave |

| High-severity closure velocity | correction responsiveness | critical issues aging beyond SLA |

| Queue reopen ratio | fix durability | two-cycle increase trend |

| Backlog pressure score | debt accumulation | consecutive weekly rise |

| BOFU progression actions | business outcome linkage | informational sessions with weak progression |

KPI governance keeps expansion decisions evidence-based.
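Two of the stop triggers above are trend-based and easy to check in code. The following sketch assumes that a "two-cycle increase trend" and a "consecutive weekly rise" both mean two increases in a row, which needs at least three data points. That reading, and the function names, are assumptions for the example rather than definitions from this page.

```python
from typing import List, Sequence


def rising_for(values: Sequence[float], steps: int) -> bool:
    """True if each of the last `steps` readings exceeds the one before it."""
    if len(values) < steps + 1:
        return False  # not enough history to call it a trend
    tail = values[-(steps + 1):]
    return all(later > earlier for earlier, later in zip(tail, tail[1:]))


def stop_triggers(
    reopen_ratio: Sequence[float],
    backlog_pressure: Sequence[float],
) -> List[str]:
    """Return the names of trend-based KPIs that should stop expansion."""
    fired = []
    if rising_for(reopen_ratio, steps=2):
        fired.append("queue_reopen_ratio")
    if rising_for(backlog_pressure, steps=2):
        fired.append("backlog_pressure_score")
    return fired


# Readings are ordered oldest to newest.
print(stop_triggers([0.10, 0.12, 0.15], [40, 38, 41]))  # ['queue_reopen_ratio']
```

The remaining triggers (integrity pass rate, closure velocity, BOFU progression) depend on thresholds this page does not quantify, so they are left out of the sketch.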

Best by use case

1) Single-location operator

Best fit: managed risk-governed execution with clear queue reporting.

Reason: quality controls stay visible with low operational overhead.

2) Multi-location organization

Best fit: hybrid governance with wave-level gate approvals.

Reason: scaling remains controlled and ownership remains explicit.

3) Product-led SaaS team

Best fit: phased rollout controlled by risk-score thresholds.

Reason: threshold-based expansion reduces queue debt accumulation.

4) Agency delivery teams

Best fit: standardized queue taxonomy and escalation protocol.

Reason: repeatable execution patterns reduce delivery variance.

5) Governance-heavy environments

Best fit: approval-first operations with full gate evidence.

Reason: traceable decision records improve predictability and auditability.

For benchmark references, evaluate execution transparency and governance depth via best directory listing services and listing management software vs service.

Where ListingBott fits in Ohio execution

What ListingBott does

ListingBott is a workflow-based directory submission tool for teams that need structured execution, approval checkpoints, and visible status reporting.

How ListingBott works

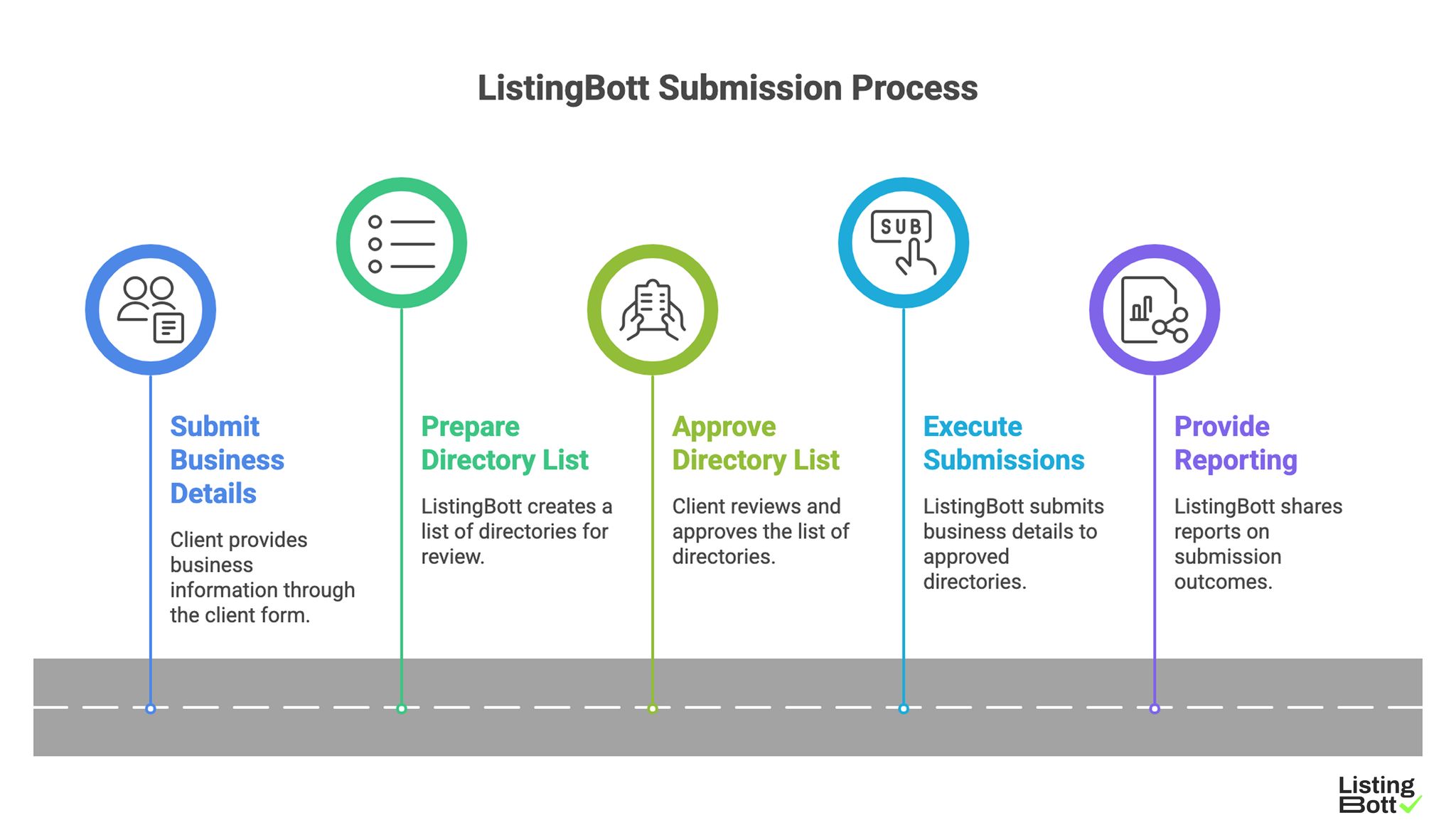

ListingBott Submission Process

- You submit business details through the client form.

- ListingBott prepares a list of directories for approved scope review.

- You approve the list before launch.

- ListingBott executes submissions based on approved scope.

- ListingBott provides reporting for completed and pending outcomes.

Key features and practical value

- Intake validation: reduces preventable profile-data issues before launch.

- Approval checkpoint: aligns scope and expectations prior to execution.

- Workflow transparency: supports ownership and escalation control.

- Reporting handoff: supports evidence-based next-wave decisions.

Teams that prioritize workflow reliability generally preserve stronger long-term execution quality than teams focused only on output volume.

Expected outcomes and limits

Expected outcomes:

- structured submission execution,

- clear wave-level visibility,

- repeatable process for additional expansions.

Limits to keep explicit:

- no guaranteed ranking position,

- no guaranteed traffic by a specific date,

- no guaranteed indexing speed,

- no guaranteed outcomes controlled by third-party platforms.

The DR commitment is conditional. A promise to reach DR 15 can apply only when all three conditions hold: the starting DR is below 15, the client explicitly selects domain growth, and the directory list is approved before the process launches. Refunds may apply if the process has not started, and the public offer states that there are no hidden extra fees.

Risks/limits

Common failure patterns

- Launching new waves without complete gate artifacts.

- Running multiple profile baselines in active execution.

- Expanding while lane 3 queue issues remain open.

- Tracking output only without risk-score controls.

- Escalating issues without clear owner accountability.

Practical limits

- Directory submission supports discoverability and consistency, but does not replace broader SEO systems.

- Timing and impact vary by category, competition, and third-party platform behavior.

- Expansion without risk controls can create compounding correction debt.

Minimum control layer

- wave-based gate approvals,

- SLA-bound correction ownership,

- weekly risk and KPI review,

- required artifacts for every expansion step.

FAQ

Why use a risk model in Ohio?

Because expansion reliability depends on measured risk and queue stability, not raw submission output.

Should all waves launch at the same time?

Usually no. Launch sequentially, stabilize metrics, then expand.

Which KPI should block expansion first?

Use high-severity closure velocity together with wave integrity pass rate.

Can directory submission guarantee rankings?

No. It supports consistency and discoverability, but rankings depend on external factors.

Is DR growth guaranteed for every project?

No. DR commitments are conditional and apply only to qualified setups.

What is the minimum governance stack?

Canonical data control, gate ownership, correction SLA, and recurring wave-level KPI reviews.