Quick answer

Control local business directory submission in Nevada through decision cadence, not submission volume alone. Teams lose consistency when launch timing, correction capacity, and approval latency move at different speeds.

Nevada Local Business Directory Submission Rollout Plan

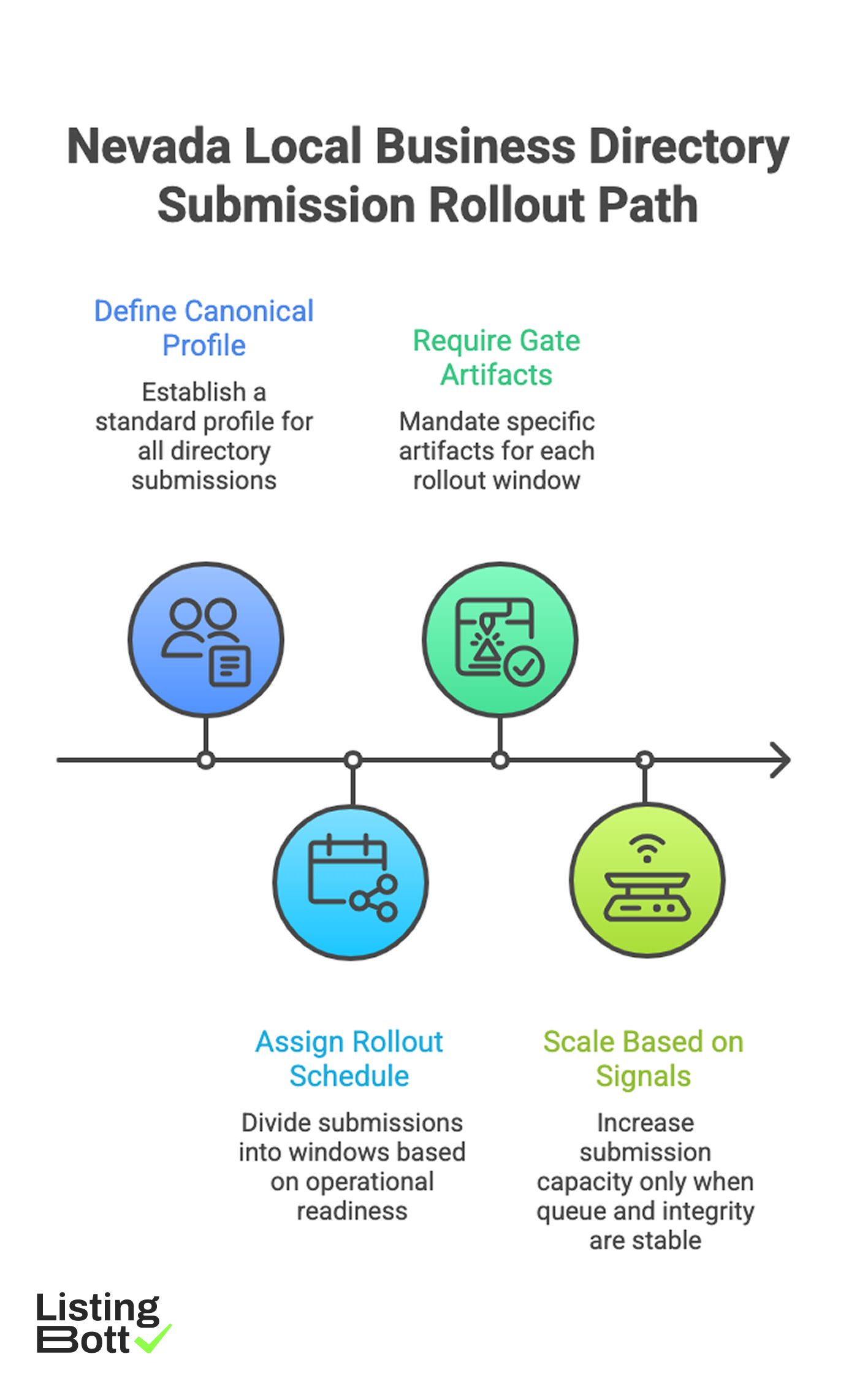

A practical Nevada operating path:

- define one canonical profile baseline,

- assign rollout windows by operational readiness,

- require explicit gate artifacts for every window,

- scale only when queue pressure and integrity signals stay in range.

For broader U.S. planning, see Local business directory submission USA.


Methodology

This page uses a clock-based model designed to stabilize operations under shifting wave pressure.

The CLOCK framework (Cadence, Latency, Ownership, Coverage, KPI loops)

| Dimension | Weight | Control objective |

|---|---|---|

| Cadence discipline | 20 | keep launch, review, and correction cycles synchronized |

| Latency control | 20 | reduce decision lag between issue detection and action |

| Ownership clarity | 20 | assign accountable owners for gates and escalations |

| Coverage sequencing | 20 | open rollout windows by readiness, not urgency |

| KPI loop quality | 20 | use up-to-date metrics for expansion decisions |

How CLOCK operates:

- score each dimension from 1-5 every two weeks,

- pause new rollout windows if Latency control or KPI loop quality drops below 3,

- reopen expansion after two stable cycles with no high-severity queue drift.
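
The CLOCK rules above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed implementation: the source does not say how the weights combine, so the composite formula (weight times score divided by 5), the `Cycle` record, and all function names are assumptions made for the example. A cycle counts as "stable" here when neither pause dimension is below 3 and no high-severity queue drift was recorded.

```python
from dataclasses import dataclass

# Weights from the CLOCK table: five dimensions, 20 points each.
WEIGHTS = {
    "cadence": 20,
    "latency": 20,
    "ownership": 20,
    "coverage": 20,
    "kpi_loop": 20,
}
# Dimensions whose score alone can pause new rollout windows.
PAUSE_DIMENSIONS = ("latency", "kpi_loop")
PAUSE_THRESHOLD = 3


@dataclass
class Cycle:
    """One biweekly scoring cycle (hypothetical record shape)."""
    scores: dict[str, int]
    high_severity_drift: bool = False


def composite_score(scores: dict[str, int]) -> float:
    """Scale each 1-5 score by its weight; all 5s gives 100 (assumed formula)."""
    for name in WEIGHTS:
        if not 1 <= scores[name] <= 5:
            raise ValueError(f"{name} must be scored 1-5")
    return sum(WEIGHTS[name] * scores[name] / 5 for name in WEIGHTS)


def should_pause(scores: dict[str, int]) -> bool:
    """Pause new windows if Latency control or KPI loop quality is below 3."""
    return any(scores[name] < PAUSE_THRESHOLD for name in PAUSE_DIMENSIONS)


def can_reopen(history: list[Cycle]) -> bool:
    """Reopen only when the two most recent cycles are both stable."""
    if len(history) < 2:
        return False
    return all(
        not should_pause(c.scores) and not c.high_severity_drift
        for c in history[-2:]
    )
```

Keeping the pause rule separate from the composite score reflects the text: a high overall score does not override a weak Latency control or KPI loop quality reading.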

Nevada launch windows

| Window | Launch order | Objective | Typical instability pattern | Open condition |

|---|---|---|---|---|

| Window 1 | 1 | establish baseline governance and data quality | throughput accelerates before corrections stabilize | high-severity SLA stable |

| Window 2 | 2 | expand with controlled owner handoffs | decision lag on approvals | gate owner map current |

| Window 3 | 3 | scale distributed execution with queue discipline | backlog aging hidden by aggregate totals | queue-age trend in range |

| Window 4 | 4 | controlled long-tail extension | reopened issues re-enter queue repeatedly | reopen trend normalized |

Decision clock table

| Clock event | Frequency | Input required | Output decision |

|---|---|---|---|

| Daily queue check | daily | queue-age deltas and blocker count | assign urgent corrections |

| Weekly quality review | weekly | integrity pass rate and reopen trend | maintain, tighten, or freeze wave |

| Biweekly launch board | biweekly | readiness score + gate artifact pack | approve next window or hold |

| Monthly system review | monthly | cost-quality trend + BOFU progression | rebalance capacity and priorities |

A decision clock prevents reactive expansion caused by stale metrics.
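
As a rough sketch, the decision clock can be expressed as a lookup of which events fall due on a given program day. The period lengths are assumptions: the table only names the frequencies, so "monthly" is treated as 28 days here, and each event is assumed to land on the last day of its period. `CLOCK_EVENTS` and `events_due` are illustrative names.

```python
# Event periods in days. Weekly, biweekly, and monthly lengths are assumed;
# the table gives only the frequency labels.
CLOCK_EVENTS = {
    "Daily queue check": 1,
    "Weekly quality review": 7,
    "Biweekly launch board": 14,
    "Monthly system review": 28,
}


def events_due(day: int) -> list[str]:
    """Return the clock events due on a 1-based program day."""
    if day < 1:
        raise ValueError("day must be 1 or greater")
    return [name for name, period in CLOCK_EVENTS.items() if day % period == 0]
```

For example, `events_due(14)` returns the daily check, the weekly review, and the biweekly launch board, which is the day a window approval could first be considered under these assumptions.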

Latency budget model

| Decision step | Target latency | If exceeded | Mitigation action |

|---|---|---|---|

| Issue classification | < 24h | priority queue congestion | triage owner escalation |

| Gate approval | < 48h | stalled expansion planning | decision-board intervention |

| Critical correction assignment | < 12h | SLA breach risk | emergency owner reassignment |

| KPI dashboard refresh | weekly max | blind decision-making | reporting lock before expansion |

Latency budgets keep governance responsive as operations scale.
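
One way to check observed decision times against the budgets in the table is shown below. This is a sketch under stated assumptions: the step keys and the `breaches` function are hypothetical names, "weekly max" is read as 7 days inclusive, and the "<" targets are treated as strict upper bounds.

```python
from datetime import timedelta

# Budget and mitigation per decision step, taken from the latency table.
BUDGETS = {
    "issue_classification": (timedelta(hours=24), "triage owner escalation"),
    "gate_approval": (timedelta(hours=48), "decision-board intervention"),
    "critical_correction_assignment": (
        timedelta(hours=12),
        "emergency owner reassignment",
    ),
    "kpi_dashboard_refresh": (timedelta(days=7), "reporting lock before expansion"),
}
# "weekly max" allows exactly 7 days; the "<" targets do not allow equality.
INCLUSIVE_STEPS = {"kpi_dashboard_refresh"}


def breaches(observed: dict[str, timedelta]) -> dict[str, str]:
    """Map each step that exceeded its budget to its mitigation action."""
    result = {}
    for step, elapsed in observed.items():
        limit, mitigation = BUDGETS[step]
        within = elapsed <= limit if step in INCLUSIVE_STEPS else elapsed < limit
        if not within:
            result[step] = mitigation
    return result
```

An empty result means every measured step stayed within budget, which is the pass condition the readiness checklist uses for latency health.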

Queue architecture

| Queue lane | Entry trigger | Priority rule | Exit criteria |

|---|---|---|---|

| Lane R (routine) | low-impact formatting issues | batch processing | closed in standard cycle |

| Lane C (critical) | repeated mismatch in active window | prioritized before new submissions | closed within weekly SLA |

| Lane B (blocker) | cross-window integrity failure | pause expansion and resolve first | no blocker carryover to next gate |

Queue separation clarifies what must be solved before scale continues.
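
The lane rules can be illustrated with a small routing function. The `Issue` fields and function names are hypothetical, and sending every issue that matches neither trigger to Lane R is a simplifying assumption; the table itself reserves Lane R for low-impact formatting issues.

```python
from dataclasses import dataclass
from enum import Enum


class Lane(Enum):
    ROUTINE = "R"
    CRITICAL = "C"
    BLOCKER = "B"


@dataclass
class Issue:
    """Hypothetical issue record carrying the two entry triggers."""
    cross_window_integrity_failure: bool = False
    repeated_mismatch_in_active_window: bool = False


def route(issue: Issue) -> Lane:
    """Pick the most severe lane whose entry trigger matches."""
    if issue.cross_window_integrity_failure:
        return Lane.BLOCKER
    if issue.repeated_mismatch_in_active_window:
        return Lane.CRITICAL
    return Lane.ROUTINE  # assumed default for everything else


def expansion_allowed(open_issues: list[Issue]) -> bool:
    """Lane B exit criteria: no blocker may carry over to the next gate."""
    return all(route(issue) is not Lane.BLOCKER for issue in open_issues)
```

Checking the blocker trigger first means an issue that satisfies both triggers is never downgraded to Lane C.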

Gate artifact stack

| Gate | Trigger moment | Required artifact | Hard stop signal |

|---|---|---|---|

| Gate 1: baseline | before first rollout window | canonical data policy + ownership matrix | conflicting source rules |

| Gate 2: scope | before each new window | approved inclusion/exclusion package | unapproved scope edits |

| Gate 3: quality | after first batch in window | integrity report + queue lane status | blocker lane above threshold |

| Gate 4: capacity | before opening next window | closure velocity trend + latency budget review | decision lag or closure degradation |

84-day clock schedule

| Phase | Days | Focus | Exit criteria |

|---|---|---|---|

| Setup cycle | 1-14 | policy lock, owner mapping, clock setup | governance package approved |

| Window 1 cycle | 15-34 | launch first window with strict queue tracking | integrity + SLA trend stable |

| Stabilization cycle | 35-56 | reduce blocker carryover and reopen growth | blocker lane normalized |

| Expansion cycle | 57-84 | launch windows 2-4 via gate approvals | no KPI regression after each window |
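
For tooling that needs to know which phase a given day belongs to, the schedule can be encoded directly. This is a minimal sketch; `PHASES`, `phase_for_day`, and `is_contiguous` are illustrative names, and the day ranges are copied from the table.

```python
# (phase name, first day, last day), inclusive, from the 84-day schedule.
PHASES = [
    ("Setup cycle", 1, 14),
    ("Window 1 cycle", 15, 34),
    ("Stabilization cycle", 35, 56),
    ("Expansion cycle", 57, 84),
]


def phase_for_day(day: int) -> str:
    """Return the phase that contains a 1-based program day."""
    for name, start, end in PHASES:
        if start <= day <= end:
            return name
    raise ValueError(f"day {day} is outside the 84-day schedule")


def is_contiguous(phases: list[tuple[str, int, int]]) -> bool:
    """Check that each phase starts the day after the previous one ends."""
    return all(
        nxt[1] == prev[2] + 1 for prev, nxt in zip(phases, phases[1:])
    )
```

The contiguity check is a cheap guard if the day ranges are later adjusted, since a gap or overlap would leave some days unowned or double-counted.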

Readiness checklist before each window

| Checkpoint | Verification method | Pass criteria |

|---|---|---|

| Canonical baseline compliance | random record audit | no conflicting edits |

| Gate ownership coverage | gate and escalation owners assigned | complete owner map |

| Latency budget health | decision-time audit | all steps within budget |

| Queue stability | blocker and critical lane trend review | no upward risk trend |

| Artifact completeness | gate packet validation | all required documents present |
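
The checklist reads naturally as an all-or-nothing gate. The sketch below assumes each checkpoint has already been reduced to a pass/fail result by its verification method; the checkpoint keys and `window_ready` are hypothetical names, and a missing result is treated as a failure.

```python
# One key per checkpoint in the readiness table.
CHECKPOINTS = (
    "canonical_baseline_compliance",
    "gate_ownership_coverage",
    "latency_budget_health",
    "queue_stability",
    "artifact_completeness",
)


def window_ready(results: dict[str, bool]) -> tuple[bool, list[str]]:
    """Return (ready, failed checkpoints); unreported checkpoints count as failed."""
    failed = [name for name in CHECKPOINTS if not results.get(name, False)]
    return (not failed, failed)
```

Returning the failed checkpoints alongside the verdict gives the launch board a concrete list to assign, rather than a bare "hold".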

Comparison table

| Execution style | Best fit | Advantage | Limitation | Nevada fit |

|---|---|---|---|---|

| Fixed-speed statewide rollout | short test cycles | easy startup | fragile under volatility | Low |

| Manual segmented execution | small teams with narrow scope | local flexibility | inconsistent governance quality | Medium-low |

| Managed clock-based execution | teams needing stable scale pace | structured cadence and lower internal burden | relies on process transparency | Strong |

| Hybrid governance execution | teams with in-house QA leadership | strongest control with scalable throughput | requires strict ownership discipline | Very strong |

Selection guidance by maturity

| Operating maturity signal | Recommended model | Why |

|---|---|---|

| Limited internal ops capacity | Managed clock-based execution | keeps control quality with lower overhead |

| Moderate maturity with scale pressure | Hybrid governance | balances speed and internal oversight |

| High maturity with robust SOP | Hybrid or software-led | allows advanced internal control tuning |

| Recurring blocker accumulation | Managed pilot + control reset | restores stable baseline before expansion |

KPI loop for weekly decisions

| KPI | Decision role | Expansion hold signal |

|---|---|---|

| Window integrity pass rate | validates quality stability | sustained decline in active window |

| Critical closure velocity | tracks correction performance | SLA breaches across two cycles |

| Reopen trend | measures fix durability | persistent upward trajectory |

| Blocker carryover count | indicates unresolved systemic issues | blocker lane not cleared before gate |

| BOFU progression actions | validates commercial relevance | informational engagement with weak progression |
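
The hold signals in this table can be approximated with simple trend checks. The thresholds are assumptions, since the source does not quantify "sustained" or "persistent": here a trend means three consecutive readings moving strictly in one direction. `WeeklyKpis` and `hold_signals` are illustrative names, and the BOFU progression signal is left out because it is qualitative.

```python
from dataclasses import dataclass


def _monotonic(values: list[float], n: int, rising: bool) -> bool:
    """True if the last n readings move strictly in one direction."""
    tail = values[-n:]
    if len(tail) < n:
        return False
    pairs = list(zip(tail, tail[1:]))
    if rising:
        return all(b > a for a, b in pairs)
    return all(b < a for a, b in pairs)


@dataclass
class WeeklyKpis:
    """Hypothetical KPI snapshot; every list is ordered oldest first."""
    integrity_pass_rate: list[float]
    sla_breached: list[bool]  # one flag per cycle
    reopen_count: list[int]
    blocker_carryover: int


def hold_signals(k: WeeklyKpis, trend_len: int = 3) -> list[str]:
    """List the expansion hold signals that are currently active."""
    signals = []
    if _monotonic(k.integrity_pass_rate, trend_len, rising=False):
        signals.append("integrity pass rate declining")
    if len(k.sla_breached) >= 2 and all(k.sla_breached[-2:]):
        signals.append("SLA breaches across two cycles")
    if _monotonic(k.reopen_count, trend_len, rising=True):
        signals.append("reopen trend rising")
    if k.blocker_carryover > 0:
        signals.append("blocker carryover before gate")
    return signals
```

An empty list means none of the quantified hold signals is active; any entry is a reason to hold the next window until the weekly review clears it.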

Best by use case

1) Single-location operator

Best fit: managed clock-based execution with clear queue visibility.

Reason: minimizes operational overhead while preserving control clarity.

2) Multi-location operator

Best fit: hybrid governance with window-by-window gate checks.

Reason: scale remains controlled and decision latency stays measurable.

3) Product-led SaaS team

Best fit: phased expansion with latency budgets and KPI gates.

Reason: prevents overexpansion when signals become stale.

4) Agency portfolio management

Best fit: standardized queue lanes and board-driven decision cadence.

Reason: repeatable governance reduces delivery variance.

5) Governance-sensitive programs

Best fit: approval-first workflow with full artifact discipline.

Reason: traceable decisions improve auditability and reliability.

For benchmark references, compare execution transparency and governance depth on best directory listing services and listing management software vs service.

Where ListingBott fits in Nevada execution

What ListingBott does

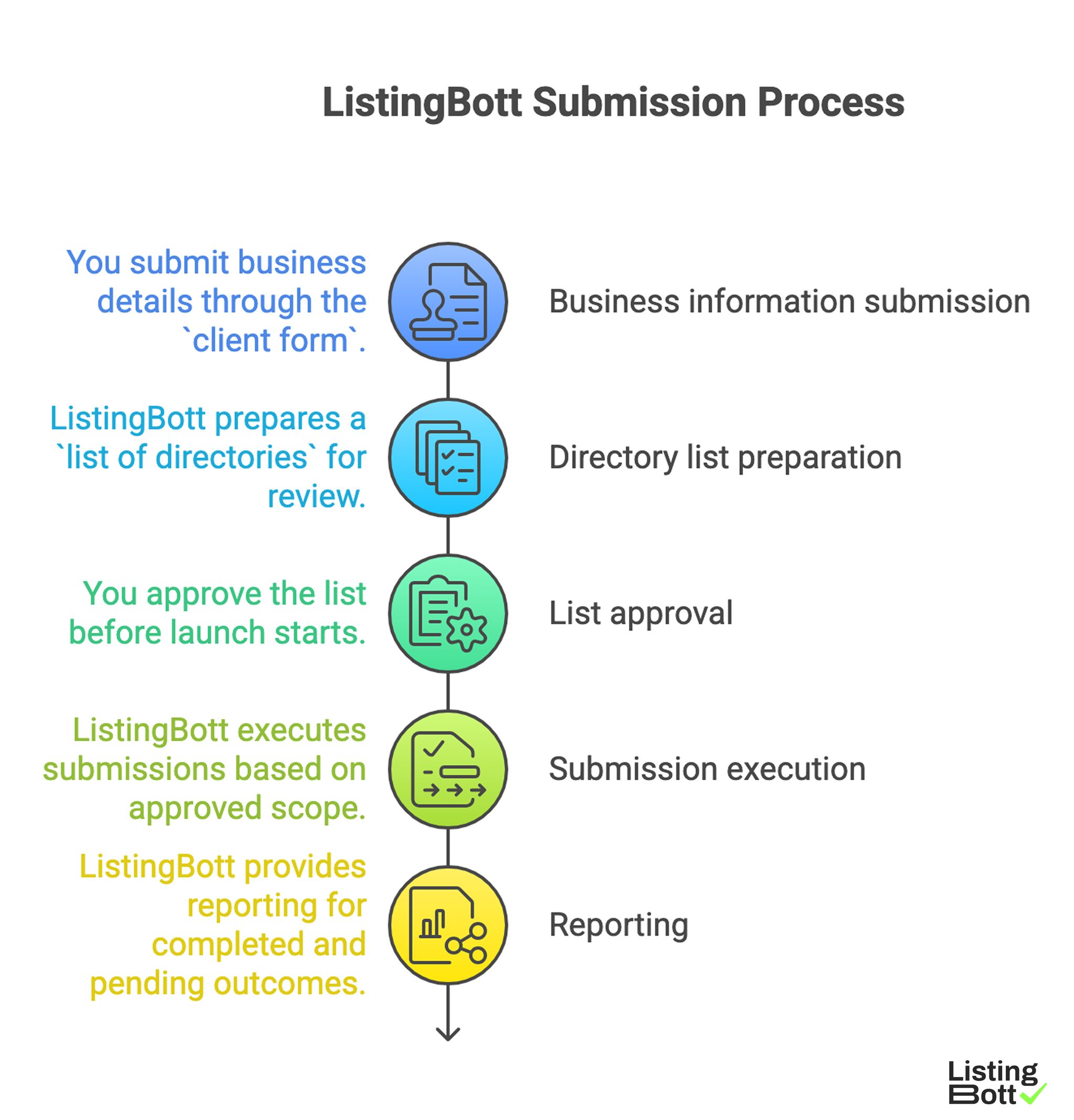

ListingBott is a workflow-based directory submission tool for teams that need structured execution, approval checkpoints, and status transparency.

How ListingBott works

ListingBott Submission Process

- You submit business details through the client form.

- ListingBott prepares a list of directories for review.

- You approve the list before launch starts.

- ListingBott executes submissions based on approved scope.

- ListingBott provides reporting for completed and pending outcomes.

Key features and practical value

- Intake validation: prevents avoidable profile-data issues before launch.

- Approval checkpoint: aligns scope and expectations before execution.

- Workflow transparency: supports accountability and escalation control.

- Reporting handoff: enables evidence-based launch decisions for next windows.

Teams that prioritize workflow reliability generally maintain better long-term execution quality than teams focused only on submission volume.

Expected outcomes and limits

Expected outcomes:

- structured submission execution,

- clear wave-level operational visibility,

- repeatable framework for next expansion windows.

Limits to keep explicit:

- no guaranteed ranking position,

- no guaranteed traffic by a specific date,

- no guaranteed indexing speed,

- no guaranteed outcomes controlled by third-party platforms.

The DR commitment is conditional. A promise to reach DR 15 can apply only when the starting DR is below 15, the client explicitly selects domain growth, and the directory list is approved before the process launches. Refunds may apply if the process has not started, and the public offer states that there are no hidden extra fees.

Risks/limits

Common failure patterns

- Opening new windows without complete gate artifacts.

- Expanding while blocker-lane issues remain unresolved.

- Running mixed profile baselines during active operations.

- Optimizing for output totals while ignoring latency budgets.

- Allowing escalation without clear owner accountability.

Practical limits

- Directory submission supports discoverability and consistency, but does not replace broader SEO systems.

- Timing and impact vary by competition, category, and third-party platform behavior.

- Expansion without decision-cadence controls can create compounding correction debt.

Minimum control layer

- window-based gate approvals,

- SLA-bound correction ownership,

- weekly KPI and queue reviews,

- mandatory gate artifacts before every expansion decision.

FAQ

Why use a decision-cadence model in Nevada?

Because rollout stability depends on synchronized decisions, queue control, and fresh KPI signals.

Should all windows launch together?

Usually no. Launch sequentially, validate stability, then expand.

Which KPI should block expansion first?

Use critical closure velocity together with window integrity pass rate.

Can directory submission guarantee rankings?

No. It supports consistency and discoverability, but rankings depend on external factors.

Is DR growth guaranteed for every project?

No. DR commitments are conditional and apply only to qualified setups.

What is the minimum governance stack?

Canonical data control, gate ownership, correction SLA, and recurring window-level KPI reviews.