Quick answer

The best directory listing service is not the one with the biggest directory count. It is the one that consistently matches your business category, maintains data quality, and provides transparent workflow and reporting.

For most teams, the safest selection method is: define your goal, score providers with a fixed rubric, run a controlled first wave, and expand only when quality and correction metrics hold.

If you need a structured starting point, begin with a clear business directory submission workflow before scaling volume.

Methodology

This comparison uses a practical operator-first framework instead of marketing claims.

Evaluation criteria

| Criterion | Weight | Why it matters |
|---|---|---|
| Relevance matching | 25 | Ensures submissions align with business category and audience intent |
| Data consistency controls | 20 | Reduces NAP/profile drift across listings |
| Directory quality filtering | 20 | Avoids low-signal directories that add maintenance burden |
| Workflow transparency | 15 | Clarifies intake, approval, submission, and reporting steps |
| Correction handling | 10 | Measures how well errors and rejections are resolved |
| Ongoing maintainability | 10 | Determines if the process scales without quality decay |

Scoring approach

- Score each provider from 1-5 on every criterion.

- Multiply each score by its weight, sum the results, and divide by 5 to get a weighted total out of 100 (the weights sum to 100).

- Prioritize providers with strong quality controls over high-volume promises.
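The scoring steps above can be sketched in a few lines of Python. This is a minimal sketch, not a definitive implementation: the weights come from the evaluation criteria table, while the dictionary keys, the `weighted_total` function name, the divide-by-5 normalization, and the sample provider are all assumptions made for illustration.

```python
# Weights copied from the evaluation criteria table; they sum to 100.
WEIGHTS = {
    "relevance": 25,
    "consistency": 20,
    "quality_filter": 20,
    "transparency": 15,
    "corrections": 10,
    "maintainability": 10,
}

MAX_SCORE = 5  # each criterion is scored from 1 to 5


def weighted_total(scores):
    """Return a weighted total out of 100 for one provider.

    Assumption: the raw sum of weight * score is divided by MAX_SCORE,
    so a provider scoring 5 on every criterion gets exactly 100.
    """
    missing = sorted(set(WEIGHTS) - set(scores))
    if missing:
        raise ValueError(f"missing scores for: {missing}")
    for name in WEIGHTS:
        if not 1 <= scores[name] <= MAX_SCORE:
            raise ValueError(f"{name} must be between 1 and {MAX_SCORE}")
    raw = sum(WEIGHTS[name] * scores[name] for name in WEIGHTS)
    return raw / MAX_SCORE


# Hypothetical provider used only to show the arithmetic.
provider_x = {
    "relevance": 4,
    "consistency": 3,
    "quality_filter": 5,
    "transparency": 4,
    "corrections": 2,
    "maintainability": 3,
}
print(weighted_total(provider_x))  # 74.0
```

Keeping the weights in one place makes it easy to rescore every provider if the team later decides to reweight a criterion.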

Baseline exclusion rules

Exclude providers when they:

- cannot explain their directory selection logic,

- cannot show a correction process,

- rely on one-size-fits-all submission bundles,

- cannot provide a clear reporting handoff.
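One way to apply these exclusion rules consistently is to record each answer as a yes/no field and list the reasons a provider fails. The sketch below is illustrative only: the `ProviderEvidence` class, its field names, and the sample vendor are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ProviderEvidence:
    """Yes/no evidence gathered during evaluation (hypothetical fields)."""
    name: str
    explains_selection_logic: bool
    shows_correction_process: bool
    tailors_directory_list: bool  # False means one-size-fits-all bundles
    provides_reporting_handoff: bool


def exclusion_reasons(provider):
    """Return the baseline exclusion rules this provider triggers."""
    checks = [
        (provider.explains_selection_logic,
         "cannot explain directory selection logic"),
        (provider.shows_correction_process,
         "cannot show correction process"),
        (provider.tailors_directory_list,
         "relies on one-size-fits-all submission bundles"),
        (provider.provides_reporting_handoff,
         "cannot provide clear reporting handoff"),
    ]
    return [reason for passed, reason in checks if not passed]


# Hypothetical vendor: fails two of the four rules.
vendor = ProviderEvidence("Vendor Y", True, False, True, False)
reasons = exclusion_reasons(vendor)
print(reasons)
```

An empty list means the provider passes the baseline and can move on to weighted scoring.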

Comparison table

| Service model | Best for | Strengths | Tradeoffs | Typical fit |
|---|---|---|---|---|
| Manual agency-style submission | Low volume, highly custom campaigns | High flexibility per listing | Slow throughput, higher coordination cost | Small teams with niche requirements |
| Productized workflow submission | Repeatable campaigns with quality controls | Better consistency, clearer operating flow | Requires disciplined intake and approval | Growth teams scaling listings |
| Pure bulk automation | Fast high-volume distribution | Speed and broad initial coverage | Risk of weaker quality control if unmanaged | Early visibility tests with strict QA layer |
| Local-specialized listing management | Multi-location and local discovery | Strong local consistency processes | Can be narrow outside local use cases | Local/regional operators |
| Hybrid automation + human QA | Balanced speed and quality | Better correction handling and governance | Needs clear ownership and review cadence | Teams with sustained listing operations |

Example weighted scorecard template

| Option | Relevance | Consistency | Quality filter | Transparency | Corrections | Maintainability | Weighted total (/100) |
|---|---|---|---|---|---|---|---|
| Option A | 4 | 5 | 4 | 4 | 4 | 4 | 84 |
| Option B | 3 | 3 | 2 | 3 | 2 | 3 | 54 |
| Option C | 5 | 4 | 4 | 5 | 4 | 4 | 88 |

Use this template with your own evidence from demos, trial cycles, and reporting samples.

Selection checklist before you buy

Use this checklist during vendor evaluation to reduce rework after onboarding.

| Check | What to ask | Minimum acceptable answer |
|---|---|---|
| Directory curation logic | How are directories selected and excluded? | Clear quality filters, category logic, and exclusion rules |
| Data governance | How is profile consistency maintained across submissions? | Canonical profile model with validation before publish |
| Ownership model | Who is responsible for edits, approvals, and corrections? | Named owner and review workflow |
| Correction process | How are rejection/fix loops handled? | Transparent correction cycle and status tracking |
| Reporting quality | What gets reported and how often? | Action-level reporting tied to outcomes |

If a provider cannot answer these questions clearly, it is usually a sign of operational risk.

90-day implementation model

Even strong providers fail when rollout is rushed. Use a staged deployment:

| Phase | Window | Primary focus | Gate to proceed |
|---|---|---|---|
| Foundation | Days 1-14 | Data readiness, category mapping, provider setup | Canonical profile approved |
| Controlled rollout | Days 15-40 | Tier-1 submissions and QA checks | Error rate stable |
| Stabilization | Days 41-65 | Corrections, profile improvements, process cleanup | Backlog under control |
| Expansion | Days 66-90 | Additional directories and use-case scaling | Quality holds after expansion |

This model helps teams avoid the common failure pattern: high initial volume followed by cleanup debt.
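The gating logic of the staged model can be expressed as a small check: a rollout stays in the first phase whose gate has not been passed, even if later gates look fine. The sketch below is an illustration under that assumption. The phase names and gates come from the table, while the `current_phase` function and the `"Complete"` label are hypothetical.

```python
# Phases and their exit gates, in order, as listed in the 90-day model.
PHASES = [
    ("Foundation", "Canonical profile approved"),
    ("Controlled rollout", "Error rate stable"),
    ("Stabilization", "Backlog under control"),
    ("Expansion", "Quality holds after expansion"),
]


def current_phase(gates_passed):
    """Return the first phase whose gate is not yet passed.

    Gates are strictly sequential: a later gate does not count
    until every earlier gate has been passed.
    """
    for phase, gate in PHASES:
        if gate not in gates_passed:
            return phase
    return "Complete"  # hypothetical label for a finished rollout


print(current_phase({"Canonical profile approved"}))  # Controlled rollout
```

Treating gates as strictly sequential is a design choice that mirrors the intent of the model: expansion should never start while an earlier quality gate is still open.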

What to measure in the first 90 days

Track outcome-linked metrics instead of activity-only counts.

| KPI | Why it matters | Bad signal |
|---|---|---|
| Profile completeness rate | Shows quality of execution | Large share of thin/incomplete listings |
| Correction turnaround time | Reflects process reliability | Slow, unresolved correction cycles |
| High-fit directory share | Validates relevance discipline | Expansion into low-fit categories |
| Internal click-through to bottom-of-funnel (BOFU) pages | Shows commercial path performance | Informational traffic with no progression |
| Assisted conversion trend | Measures contribution to pipeline | Flat or declining despite more submissions |

A service can look productive on volume metrics while still underperforming on business contribution.
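Three of these KPIs can be computed directly from a listing-level export. The following is a rough sketch under stated assumptions: the `Listing` record, its fields, the sample data, and the exact definitions (completeness as the share of listings with every required field filled, turnaround as the median number of days across resolved corrections) are all illustrative choices, not a standard.

```python
from dataclasses import dataclass
from statistics import median
from typing import Optional


@dataclass
class Listing:
    """One submitted listing (hypothetical export format)."""
    directory: str
    required_fields: int
    filled_fields: int
    high_fit: bool
    correction_days: Optional[float]  # None if no correction was resolved


def kpi_summary(listings):
    """Return completeness rate, high-fit share, and median turnaround."""
    total = len(listings)
    if total == 0:
        raise ValueError("no listings to summarize")
    complete = sum(1 for l in listings if l.filled_fields >= l.required_fields)
    high_fit = sum(1 for l in listings if l.high_fit)
    resolved = [l.correction_days for l in listings
                if l.correction_days is not None]
    return {
        "profile_completeness_rate": complete / total,
        "high_fit_directory_share": high_fit / total,
        # None when no correction has been resolved yet.
        "median_correction_days": median(resolved) if resolved else None,
    }


# Made-up sample data for illustration only.
sample = [
    Listing("dir-a", 10, 10, True, 2.0),
    Listing("dir-b", 10, 7, True, 5.0),
    Listing("dir-c", 10, 10, False, None),
    Listing("dir-d", 10, 10, True, 3.0),
]
summary = kpi_summary(sample)
print(summary)
```

Click-through and assisted-conversion metrics are left out here because they depend on analytics data that a listing export would not contain.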

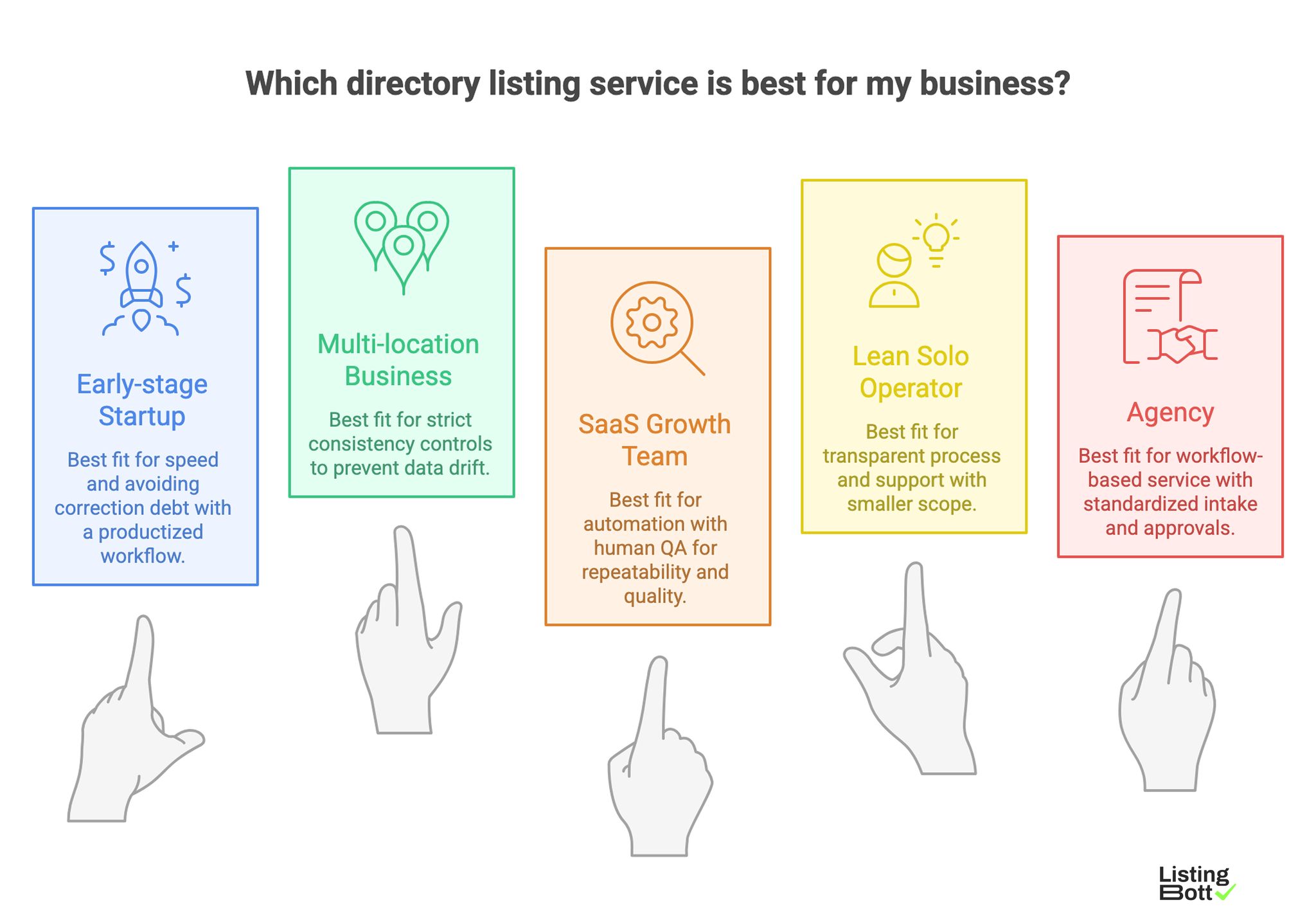

Best by use case

Best Listing Directory For Your Business

1) Early-stage startup

Best fit: productized workflow with controlled wave submission.

Reason: startup teams need speed, but also need to avoid correction debt from low-quality listings.

2) Multi-location local business

Best fit: listing management model with strict consistency controls.

Reason: local data drift creates costly trust and visibility issues across locations.

3) SaaS growth team

Best fit: hybrid model (automation + human QA).

Reason: repeatability matters, but category relevance and profile quality still require review.

4) Lean solo operator

Best fit: smaller-scope service with transparent process and support.

Reason: bandwidth is limited, so clarity and maintainability matter more than max volume.

5) Agency handling multiple clients

Best fit: workflow-based service with standardized intake, approvals, and reporting.

Reason: agencies need predictable operations and handoff discipline across client accounts.

For teams comparing options in this segment, using automated directory submissions with a review layer usually performs better than unmanaged bulk posting.

What makes AI-citation performance better

If your goal includes AI-answer visibility, prioritize providers that support citation-friendly content patterns:

- clear definitions and answer-first summaries,

- transparent methodology statements,

- scannable comparison tables,

- caveats and limits written in plain language,

- consistent update cadence.

These patterns improve the chance that your page is referenced as a clear source in AI-generated summaries.

Where ListingBott fits in this decision

If you shortlist productized providers, the sections below describe the model ListingBott follows, so you can compare it against alternatives on equal terms.

What ListingBott does

ListingBott is a tool-driven submission workflow for businesses that want structured directory execution instead of ad hoc manual posting. The offer is positioned as a one-time payment model with publication to 100+ directories, based on current website language.

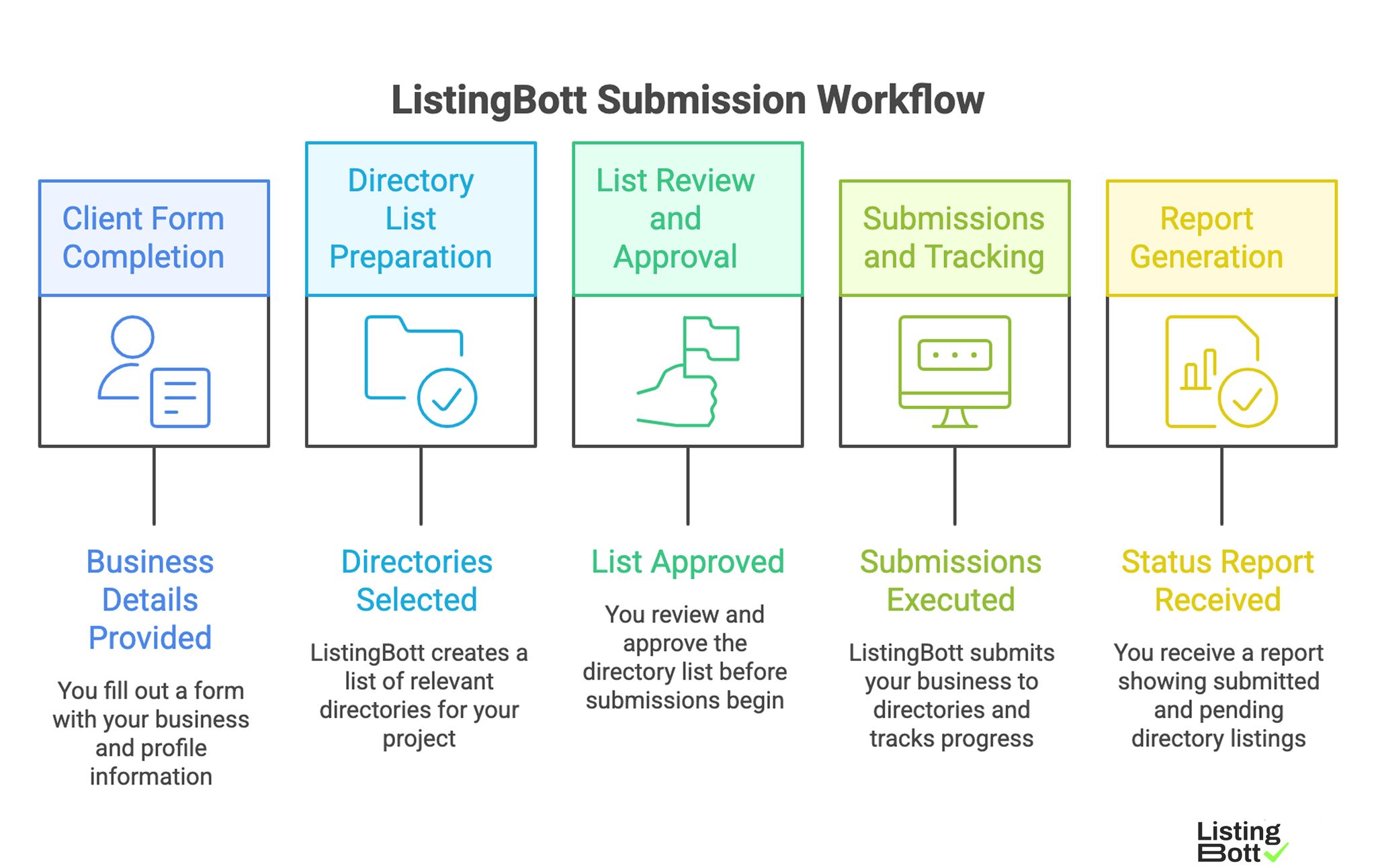

How ListingBott works

ListingBott Submission Workflow

- You complete a client form with business/profile details.

- ListingBott prepares a list of directories for your project.

- You review and approve that list before publishing starts.

- Submissions are executed and tracked.

- You receive a report showing submitted and pending status.

This is why teams evaluating options often compare providers by submission workflow clarity before comparing pure volume claims.

Key features and what they mean in operations

- Intake gating: incomplete data is resolved before publish, reducing downstream correction loops.

- Approval before publish: directory scope is confirmed in advance, so expectations are aligned.

- Status visibility: progress is communicated through clear stage updates and report handoff.

- Structured delivery: useful when internal teams need repeatable execution, not one-off submissions.

Expected results and limits

What you can reasonably expect:

- directory submission execution within agreed scope,

- transparent status communication,

- final reporting on delivered/pending items.

What no provider can safely guarantee:

- exact ranking position,

- specific traffic by a fixed date,

- indexing speed or third-party platform outcomes.

For projects focused on Domain Rating (DR), any promise must be conditional. ListingBott can promise growth to DR 15 only when all of the following conditions are met: the starting DR is below 15, the explicit goal is set to domain growth, and the directory list is client-approved. The refund policy remains scope-based and includes refund eligibility when the process has not started. Commercial terms should also be explicit up front, including no hidden extra fees.
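The conditional nature of the DR commitment can be made explicit as a simple all-conditions check. This is only a sketch of the three conditions stated above. The function name, parameter names, and the `"domain_growth"` goal label are hypothetical and do not describe any real ListingBott interface.

```python
DR_TARGET = 15  # target named in the conditions above


def dr_commitment_applies(starting_dr, goal, directory_list_approved):
    """Return True only when all three stated conditions hold."""
    return (
        starting_dr < DR_TARGET
        and goal == "domain_growth"  # hypothetical label for the goal
        and directory_list_approved
    )


print(dr_commitment_applies(8, "domain_growth", True))   # True
print(dr_commitment_applies(22, "domain_growth", True))  # False
```

Because every condition must hold, a project that already starts at or above DR 15, or that has a different primary goal, falls outside the commitment.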

Risks/limits

Common selection mistakes

- Choosing by directory count alone.

- Ignoring correction process and ownership.

- Not validating directory quality standards.

- Skipping first-wave QA before expansion.

Practical limits to acknowledge

- No directory service can guarantee exact ranking position.

- No service can guarantee traffic by a specific date.

- Outcomes influenced by third-party platforms cannot be guaranteed.

- Authority metrics should be treated as directional, not deterministic.

Risk controls to require

- explicit intake + approval process,

- directory filtering criteria,

- correction SLA or correction workflow,

- reporting that maps actions to outcomes.

FAQ

What makes a directory listing service “best” for SEO?

Process quality, category fit, and consistency controls are usually stronger predictors than raw volume.

Is bulk submission always a bad choice?

No. It can be useful in controlled campaigns, but should include QA gates and correction handling.

Should local businesses use the same service model as SaaS products?

Usually no. Local businesses need stricter consistency and location-level governance.

How many directories should a first wave include?

Use a limited, high-fit set first. Expand only after quality checks pass.

Can directory services guarantee DR growth?

No blanket guarantee is valid for every project. DR commitments should be conditional and tied to starting DR, campaign goal, and approved directory scope.

How do I compare providers quickly but safely?

Use a weighted rubric, request process transparency, and test one controlled cycle before scaling.