Quick answer

Automation improves listing operations when it removes repetitive manual work without removing quality controls. The best programs automate stable tasks, keep risk-sensitive tasks under human review, and use clear escalation rules when exceptions appear.

Teams commonly fall into one of two traps: they automate too little and stay slow, or automate too much and create avoidable quality issues. The practical goal is a hybrid operating model with explicit boundaries.

For teams building a repeatable online listing management process, the highest-leverage decision is defining the automation boundary clearly.


Problem framing

Listing workflows include both predictable and judgment-heavy tasks. Problems start when teams do not separate those task types.

Typical symptoms of weak automation design:

- repetitive tasks remain manual and consume team capacity,

- critical profile updates are automated without validation,

- exception handling is undefined,

- no owner is assigned for post-automation QA,

- reports show throughput but not quality impact.

These issues create a false sense of efficiency. Velocity rises, but reliability declines.

Automation failure patterns

| Failure pattern | Why it happens | Operational impact |
|---|---|---|
| Full automation of high-risk edits | Team optimizes for speed only | Critical data errors scale quickly |
| No exception queue | Workflow design ignores edge cases | Issues remain unresolved longer |
| No QA gate after automated runs | Output assumed correct by default | Quality drift appears late |
| Manual review with no rubric | Review is subjective and inconsistent | High variance across reviewers |
| Reporting without quality metrics | Focus on completion count | Weak decision-making for next cycle |

Automation is beneficial only when paired with control logic.

Task classification model: automate vs manual

| Task type | Recommended mode | Reason |
|---|---|---|
| Structured data sync (stable fields) | Automate | High repeatability and low ambiguity |
| Routine status tracking | Automate | Reduces manual overhead and lag |
| Critical field changes (phone, address, hours) | Human review required | High customer and trust impact |
| Category/service intent changes | Human review required | Requires context judgment |
| Duplicate conflict resolution | Human-led with tooling support | Needs investigative decisions |
| Report packaging | Partially automate | Keep manual recommendation layer |

This model gives teams a practical default and reduces decision friction.
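As an illustration, the classification table can be encoded as a default routing map. The sketch below is hypothetical: the task-type keys are invented names for the table rows, and two rules are assumptions rather than part of the model above: any edit that touches a critical field is escalated to review, and unknown task types fall back to review instead of automation.

```python
from enum import Enum
from typing import Optional, Set


class Mode(Enum):
    AUTOMATE = "automate"
    HUMAN_REVIEW = "human review required"
    HUMAN_LED = "human-led with tooling support"
    PARTIAL = "partially automate"


# Hypothetical task-type keys, one per row of the classification table.
DEFAULT_MODES = {
    "structured_data_sync": Mode.AUTOMATE,
    "status_tracking": Mode.AUTOMATE,
    "critical_field_change": Mode.HUMAN_REVIEW,
    "category_change": Mode.HUMAN_REVIEW,
    "duplicate_resolution": Mode.HUMAN_LED,
    "report_packaging": Mode.PARTIAL,
}

# High-impact fields named in the table.
CRITICAL_FIELDS = {"phone", "address", "hours"}


def route_task(task_type: str, changed_fields: Optional[Set[str]] = None) -> Mode:
    """Return the handling mode for a task.

    Assumptions: an edit touching any critical field is escalated to review,
    and unknown task types default to review rather than automation.
    """
    if changed_fields and changed_fields & CRITICAL_FIELDS:
        return Mode.HUMAN_REVIEW
    return DEFAULT_MODES.get(task_type, Mode.HUMAN_REVIEW)
```

Defaulting unknown work to review keeps new task types on the manual side of the boundary until someone classifies them explicitly.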

Decision matrix for automation boundaries

| Decision factor | Signal for more automation | Signal for more manual control |
|---|---|---|
| Data stability | Low variance across cycles | Frequent field changes |
| Error tolerance | Low business impact of mistakes | High customer-impact risk |
| Team maturity | Strong QA and ownership discipline | Unclear ownership or weak QA |
| Exception volume | Low and predictable | High or unpredictable |
| Compliance sensitivity | Low | Medium to high |

Use this matrix before expanding automation scope.
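One way to apply the matrix consistently is to record each factor as a yes/no signal and use a fixed scoring rule. The rule below is an assumption for illustration only: a high-impact error profile vetoes automation outright, and otherwise at least four of the five factors (a hypothetical `min_signals` threshold) must point toward automation.

```python
from dataclasses import dataclass, fields


@dataclass
class BoundaryAssessment:
    # Each flag is True when the factor shows the "more automation" signal.
    stable_data: bool             # low variance across cycles
    low_error_impact: bool        # low business impact of mistakes
    mature_team: bool             # strong QA and ownership discipline
    predictable_exceptions: bool  # low and predictable exception volume
    low_compliance_risk: bool     # low compliance sensitivity


def recommend(a: BoundaryAssessment, min_signals: int = 4) -> str:
    """Hypothetical scoring rule: high error impact vetoes automation;
    otherwise require at least `min_signals` of the five factors."""
    if not a.low_error_impact:
        return "more manual control"
    score = sum(getattr(a, f.name) for f in fields(a))
    return "more automation" if score >= min_signals else "more manual control"
```

The veto reflects the table's emphasis on customer-impact risk; teams with different risk appetites would tune the threshold rather than the factors.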

Manual review rubric (minimum)

| Review checkpoint | Pass condition |
|---|---|
| Critical-field validation | No mismatch in high-impact fields |
| Category/service accuracy | Classification reflects actual offer |
| Duplicate conflict status | No unresolved high-severity conflicts |
| Exception resolution | Every exception has owner + due date |
| Report actionability | Recommendations include accountable owners |

A lightweight rubric is better than unstructured review discussions.
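The five checkpoints translate directly into pass/fail checks. In this sketch the record shapes (`ReviewInput`, `ExceptionItem`, `Recommendation`) are hypothetical; category accuracy stays a reviewer judgment recorded as a flag, because the table marks it as context-dependent.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Dict, List, Optional


@dataclass
class ExceptionItem:
    description: str
    owner: Optional[str] = None
    due: Optional[date] = None


@dataclass
class Recommendation:
    text: str
    owner: Optional[str] = None


@dataclass
class ReviewInput:
    expected_fields: Dict[str, str]   # source-of-truth values for high-impact fields
    published_fields: Dict[str, str]  # values observed on the listing after the run
    category_matches_offer: bool      # reviewer judgment, recorded as a flag
    open_high_severity_conflicts: int
    exceptions: List[ExceptionItem] = field(default_factory=list)
    recommendations: List[Recommendation] = field(default_factory=list)


def evaluate(r: ReviewInput) -> Dict[str, bool]:
    """Return pass/fail for each of the five minimum rubric checkpoints."""
    return {
        "critical_field_validation": all(
            r.published_fields.get(name) == value
            for name, value in r.expected_fields.items()
        ),
        "category_accuracy": r.category_matches_offer,
        "duplicate_conflicts": r.open_high_severity_conflicts == 0,
        "exception_resolution": all(
            bool(e.owner) and e.due is not None for e in r.exceptions
        ),
        "report_actionability": all(bool(rec.owner) for rec in r.recommendations),
    }


def passes(r: ReviewInput) -> bool:
    # A review passes only when every checkpoint passes.
    return all(evaluate(r).values())
```

Returning per-checkpoint results, not just a single verdict, shows reviewers exactly which condition failed.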

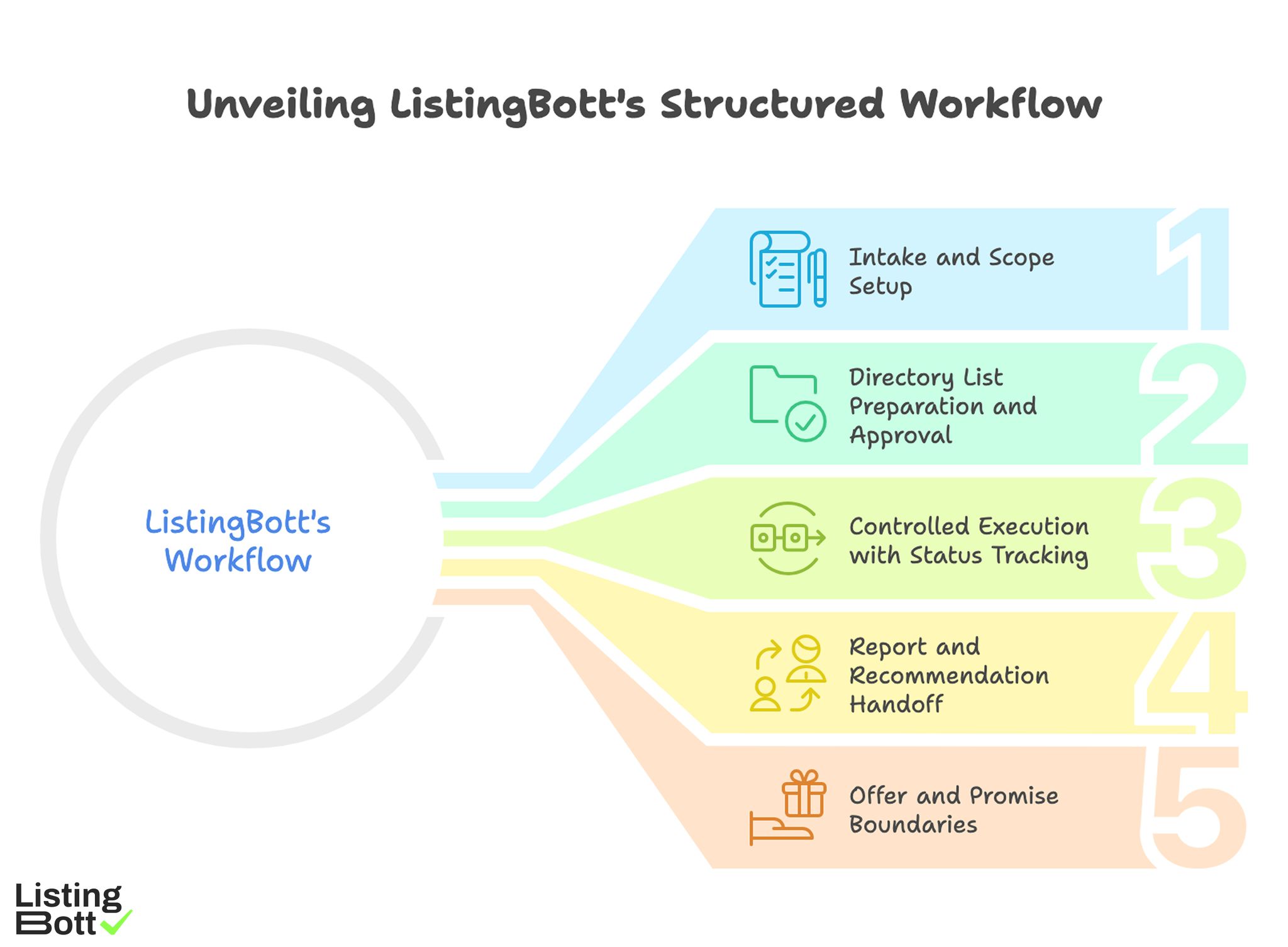

How ListingBott works

ListingBott uses a structured workflow: client-form intake, directory-list preparation and approval, publishing, and report handoff. The process is designed to keep execution traceable and decisions clear.

1) Intake and scope setup

Execution starts with a complete intake, so scope and objectives are clear before any workflow actions begin.

2) Directory list preparation and approval

A proposed directory list is prepared and approved before broad publishing, helping keep relevance and quality aligned.

3) Controlled execution with status tracking

Publishing runs with tracked status transitions, improving visibility into pending and blocked items.
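Tracked status transitions are commonly modeled as a small state machine that rejects undefined moves. The states and transitions below are hypothetical, chosen for illustration; they are not ListingBott's documented statuses.

```python
from collections import Counter
from typing import Dict, Iterable, List, Set

# Hypothetical status model for illustration only; it is not
# ListingBott's documented set of states.
ALLOWED: Dict[str, Set[str]] = {
    "queued": {"submitted", "blocked"},
    "submitted": {"pending", "published", "blocked"},
    "pending": {"published", "blocked"},
    "blocked": {"queued"},   # an unblocked item re-enters the queue
    "published": set(),      # terminal state
}


class Submission:
    def __init__(self, directory: str) -> None:
        self.directory = directory
        self.status = "queued"
        self.history: List[str] = ["queued"]

    def move_to(self, new_status: str) -> None:
        # Reject transitions outside the table so every change stays traceable.
        if new_status not in ALLOWED[self.status]:
            raise ValueError(
                f"{self.directory}: {self.status} -> {new_status} is not allowed"
            )
        self.status = new_status
        self.history.append(new_status)


def status_summary(items: Iterable[Submission]) -> Counter:
    """Count items per status, e.g. to surface pending and blocked work."""
    return Counter(item.status for item in items)
```

Keeping a per-item history makes it possible to audit how an item reached its current state, not just where it is now.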

4) Report and recommendation handoff

Each cycle closes with a report that covers submission status, pending items, and next-step recommendations.

5) Offer and promise boundaries

The current offer includes a one-time payment model, publication to 100+ directories (per current website language), no hidden extra fees (per current FAQ language), and a refund option if the process has not started.

ListingBott does not promise guaranteed ranking positions, guaranteed traffic by a specific date, guaranteed indexing speed, or outcomes controlled by third-party platforms.

For DR-goal projects, DR growth to 15 applies only to the qualified setup: a starting DR below 15, an explicit domain growth goal, and an approved directory list.

Teams implementing this model typically pair automation rules with human review safeguards.


Proof/results

Automation quality should be evaluated by both throughput and error behavior. Throughput alone can hide process deterioration.

Automation health scorecard

| Metric group | Example metrics | Review cadence | Why it matters |
|---|---|---|---|
| Throughput | tasks completed per cycle | Weekly | Measures processing capacity |
| Quality | critical-field error rate, recurrence rate | Weekly | Measures reliability |
| Exception handling | exception closure SLA, backlog age | Weekly | Measures control responsiveness |
| Review effectiveness | manual-review override rate | Weekly | Measures rubric quality |
| Outcome direction | referral trend, assisted actions | Monthly | Measures business support |

This scorecard helps teams find the right automation balance instead of chasing volume.
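The weekly metric groups reduce to a handful of ratios and ages. This sketch assumes hypothetical per-cycle counters (`CycleData`) and defines two metrics in one plausible way: recurrence rate as the share of critical-field errors already seen in a prior cycle, and override rate as the share of reviews in which the reviewer changed the automated output. The monthly outcome metrics are left out because they depend on external analytics.

```python
from dataclasses import dataclass
from datetime import date
from typing import Dict, List, Optional


@dataclass
class ExceptionRecord:
    opened: date
    closed: Optional[date] = None


@dataclass
class CycleData:
    tasks_completed: int
    critical_fields_checked: int
    critical_field_errors: int
    repeat_errors: int   # errors of a kind already seen in a previous cycle
    reviews: int
    overrides: int       # reviews where the reviewer changed the automated output
    exceptions: List[ExceptionRecord]


def ratio(numerator: int, denominator: int) -> float:
    # Avoid division by zero when a cycle has no data for a metric.
    return numerator / denominator if denominator else 0.0


def scorecard(c: CycleData, today: date, sla_days: int) -> Dict[str, float]:
    open_ages = [(today - e.opened).days for e in c.exceptions if e.closed is None]
    closed = [e for e in c.exceptions if e.closed is not None]
    within_sla = [e for e in closed if (e.closed - e.opened).days <= sla_days]
    return {
        "throughput": c.tasks_completed,
        "critical_field_error_rate": ratio(c.critical_field_errors, c.critical_fields_checked),
        "recurrence_rate": ratio(c.repeat_errors, c.critical_field_errors),
        "sla_closure_rate": ratio(len(within_sla), len(closed)),
        "max_backlog_age_days": max(open_ages, default=0),
        "override_rate": ratio(c.overrides, c.reviews),
    }
```

Reporting throughput next to the error and override rates in one structure makes it harder to present volume without its quality context.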

30-60-90 automation maturity model

| Period | Primary objective | Expected signal |
|---|---|---|
| Days 1-30 | Stabilize task classification and review rubric | Fewer uncontrolled exceptions |
| Days 31-60 | Improve exception handling speed | Lower backlog and faster closure |
| Days 61-90 | Expand safe automation scope | Higher throughput without quality decline |

If quality declines during expansion, reduce scope and reinforce manual gates.
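Reducing scope is easiest when every expansion is recorded as a new version of the automated task set. The sketch below is hypothetical: `AutomationScope` keeps a history of scopes and reverts to the previous one when the observed error rate exceeds the baseline by more than an assumed `tolerance`.

```python
from dataclasses import dataclass, field
from typing import FrozenSet, List, Set


@dataclass
class AutomationScope:
    # Each entry is one version of the set of automated task types;
    # the first entry is the empty (fully manual) scope.
    history: List[FrozenSet[str]] = field(default_factory=lambda: [frozenset()])

    @property
    def current(self) -> FrozenSet[str]:
        return self.history[-1]

    def expand(self, new_tasks: Set[str]) -> None:
        # Record the expanded scope as a new version instead of mutating in place.
        self.history.append(self.current | frozenset(new_tasks))

    def review_cycle(
        self, baseline_error_rate: float, observed_error_rate: float, tolerance: float = 0.0
    ) -> str:
        # Quality declined beyond tolerance: revert to the previous stable scope.
        if observed_error_rate > baseline_error_rate + tolerance and len(self.history) > 1:
            self.history.pop()
            return "rolled_back"
        return "kept"
```

Because the rollback target is simply the previous version, the rollback criteria can be defined before an expansion starts.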

Reporting model for automation programs

A useful automation report should show:

- which tasks were automated,

- which tasks required manual intervention,

- what exceptions were unresolved,

- what rule adjustments are recommended.

Without these sections, teams cannot improve automation logic systematically.
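A simple safeguard is to render all four sections every time, printing "(none)" for an empty one so that a missing section is visible rather than silently dropped. The `AutomationReport` structure and its plain-text output format are illustrative assumptions.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class AutomationReport:
    automated: List[str] = field(default_factory=list)
    manual_interventions: List[str] = field(default_factory=list)
    unresolved_exceptions: List[str] = field(default_factory=list)
    rule_adjustments: List[str] = field(default_factory=list)

    def render(self) -> str:
        sections = [
            ("Automated tasks", self.automated),
            ("Manual interventions", self.manual_interventions),
            ("Unresolved exceptions", self.unresolved_exceptions),
            ("Recommended rule adjustments", self.rule_adjustments),
        ]
        lines: List[str] = []
        for title, items in sections:
            lines.append(f"{title}:")
            # Always emit every section so empty ones are explicit, not omitted.
            if items:
                lines.extend(f"  - {item}" for item in items)
            else:
                lines.append("  (none)")
        return "\n".join(lines)
```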

Risk controls for automated listing operations

| Risk | Early signal | Control action |
|---|---|---|
| Over-automation | quality errors increase after automation expansion | roll back scope to prior stable set |
| Under-automation | manual queue grows despite stable task patterns | automate repeatable low-risk tasks |
| Review inconsistency | high variance between reviewers | standardize rubric and reviewer training |
| Exception stagnation | backlog age exceeds SLA | escalate and assign dedicated owner |
| Reporting blind spots | high throughput with unclear quality trend | add mandatory quality metrics block |

These controls keep automation from becoming a hidden risk source.
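The early signals in the table can be checked mechanically each cycle. In this sketch the `Signals` fields are hypothetical, "high variance between reviewers" is approximated as the population standard deviation of per-reviewer pass rates above an assumed threshold of 0.1, and an "unclear quality trend" is approximated as a report with no quality metric.

```python
from dataclasses import dataclass
from statistics import pstdev
from typing import Dict, List, Optional

# Control actions copied from the risk table.
CONTROL_ACTIONS = {
    "over_automation": "roll back scope to prior stable set",
    "under_automation": "automate repeatable low-risk tasks",
    "review_inconsistency": "standardize rubric and reviewer training",
    "exception_stagnation": "escalate and assign dedicated owner",
    "reporting_blind_spots": "add mandatory quality metrics block",
}


@dataclass
class Signals:
    error_rate_before: float          # before the latest automation expansion
    error_rate_after: float
    manual_queue_prev: int
    manual_queue_now: int
    task_patterns_stable: bool
    reviewer_pass_rates: Dict[str, float]
    max_backlog_age_days: int
    sla_days: int
    throughput_prev: int
    throughput_now: int
    quality_metric: Optional[float]   # None when the report has no quality block


def triggered_controls(s: Signals, max_reviewer_spread: float = 0.1) -> List[str]:
    """Return the control actions whose early signal is present.

    The reviewer-spread threshold is an illustrative assumption.
    """
    actions = []
    if s.error_rate_after > s.error_rate_before:
        actions.append(CONTROL_ACTIONS["over_automation"])
    if s.task_patterns_stable and s.manual_queue_now > s.manual_queue_prev:
        actions.append(CONTROL_ACTIONS["under_automation"])
    rates = list(s.reviewer_pass_rates.values())
    if len(rates) > 1 and pstdev(rates) > max_reviewer_spread:
        actions.append(CONTROL_ACTIONS["review_inconsistency"])
    if s.max_backlog_age_days > s.sla_days:
        actions.append(CONTROL_ACTIONS["exception_stagnation"])
    if s.throughput_now > s.throughput_prev and s.quality_metric is None:
        actions.append(CONTROL_ACTIONS["reporting_blind_spots"])
    return actions
```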

Expectation discipline for automation initiatives

Automation can improve consistency and efficiency, but it should not be framed as a guarantee of ranking outcomes by specific dates. Strong teams communicate automation as an execution-quality lever and track directional performance trends over time.

This avoids expectation mismatches and improves long-term planning.

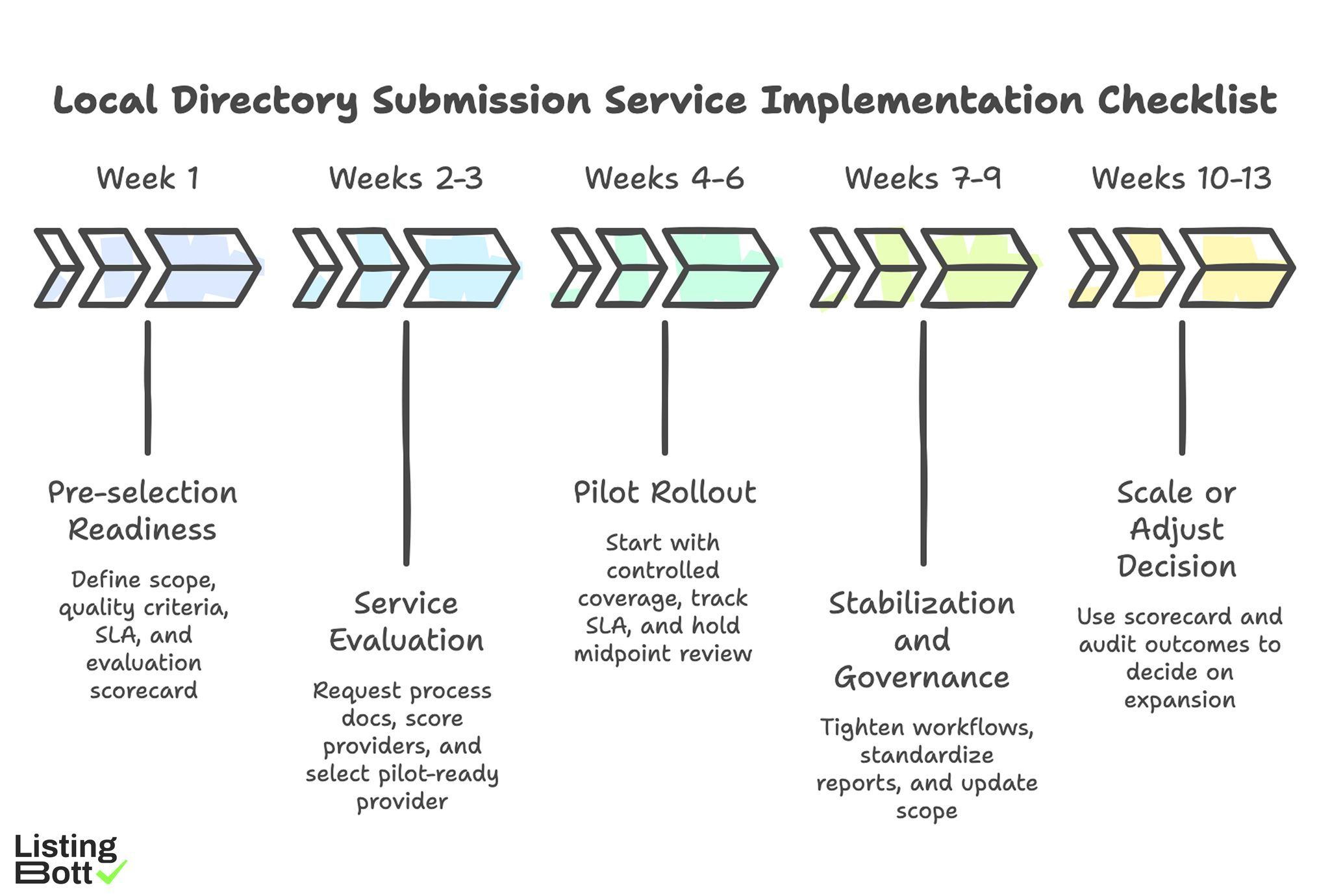

Implementation checklist

Use this checklist to deploy automation safely in listing workflows.

Phase 1: Automation design (week 1)

- Classify tasks by risk and repeatability.

- Define manual-review checkpoints.

- Set exception severity and SLA rules.

- Assign owners for automation and QA.

- Build baseline quality and throughput metrics.
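The Phase 1 rules for severity, SLA, and ownership can be enforced at the point where an exception enters the queue. The SLA values per severity below are placeholders, not recommendations; in practice they would be adjusted to observed load. Each item must have an owner, and its due date is derived from its severity.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List

# Hypothetical SLA targets in days per severity; adjust them to observed load.
SLA_DAYS = {"high": 2, "medium": 5, "low": 10}
SEVERITY_RANK = {"high": 0, "medium": 1, "low": 2}


@dataclass
class QueueItem:
    listing: str
    severity: str
    owner: str
    opened: date

    def __post_init__(self) -> None:
        # Enforce the rule that every exception has an owner and a known severity.
        if not self.owner:
            raise ValueError(f"{self.listing}: exception needs an owner")
        if self.severity not in SLA_DAYS:
            raise ValueError(f"{self.listing}: unknown severity {self.severity!r}")

    @property
    def due(self) -> date:
        # Due date derived from the severity SLA.
        return self.opened + timedelta(days=SLA_DAYS[self.severity])


def triage(queue: List[QueueItem]) -> List[QueueItem]:
    """Highest severity first; within a severity, earliest due date first."""
    return sorted(queue, key=lambda i: (SEVERITY_RANK[i.severity], i.due))


def overdue(queue: List[QueueItem], today: date) -> List[QueueItem]:
    return [i for i in queue if today > i.due]
```

Rejecting ownerless items at creation time prevents the "no exception owner" mistake instead of detecting it later in review.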

Phase 2: Controlled rollout (weeks 2-3)

- Automate low-risk stable tasks first.

- Keep critical fields under manual review.

- Start exception queue with owner + due date.

- Validate output quality after each run.

- Document rule changes in change log.

Phase 3: Stabilization (weeks 4-6)

- Track override rates and recurring exceptions.

- Tighten task classification rules.

- Improve reviewer consistency with rubric checks.

- Adjust SLA targets based on observed load.

- Publish weekly automation health summaries.

Phase 4: Measured expansion (weeks 7-9)

- Expand automation only for stable task classes.

- Revalidate quality thresholds before each expansion.

- Keep rollback criteria pre-defined.

- Audit exception root causes monthly.

- Update SOP for new automation boundaries.

Phase 5: Scaled governance (weeks 10-13)

- Maintain ongoing throughput + quality reporting.

- Keep manual controls for high-risk tasks.

- Review automation scope quarterly.

- Retire unstable rules quickly.

- Publish next-quarter optimization roadmap.

Weekly operator checklist

- Review automated run outputs.

- Validate critical fields manually.

- Triage exception backlog by severity.

- Confirm owner and due date for open issues.

- Approve next scope changes only if quality gates pass.


Common mistakes and fixes

| Mistake | Effect | Fix |
|---|---|---|
| Automating critical edits too early | High-impact data errors | keep critical fields manual |
| No exception owner | unresolved backlog grows | assign accountable owner per queue |
| No review rubric | inconsistent QA outcomes | standardize review criteria |
| Throughput-only reporting | hidden quality decay | add quality and exception metrics |
| Expanding without gates | instability at scale | enforce pre-expansion thresholds |

FAQ

1) What should be automated first in listing workflows?

Start with low-risk, repeatable tasks that have clear structured inputs.

2) What should remain manual for now?

Critical customer-facing data changes and high-context classification decisions.

3) How do we know if automation is hurting quality?

Track critical-field error rate, exception backlog age, and manual override trends.

4) How often should automation rules be reviewed?

Review weekly during rollout and at least monthly after stabilization.

5) Can automation guarantee SEO outcomes?

No. It can improve process quality and efficiency, but it cannot guarantee ranking outcomes by a fixed date.

6) When should automation scope be expanded?

Only after quality, SLA, and review consistency metrics remain stable across cycles.

Final takeaway

Online listing management automation works when teams automate stable tasks and keep high-risk decisions under human control. The winning model is not maximum automation; it is controlled automation with measurable quality protection.