Quick answer

Local SEO directory submission works best when teams focus on consistency and relevance before scale. A practical implementation checklist should cover data integrity, local category fit, submission sequencing, and post-submission monitoring.

The fastest way to lose performance is to push volume without quality controls. The fastest way to improve reliability is to run a repeatable checklist with clear pass/fail gates.

A strong local SEO directory submission process starts with disciplined preparation and continues through monitoring, not just first-wave publishing.


Problem framing

Local SEO submission programs often fail for operational reasons, not strategic reasons. Teams know where they want to appear, but execution quality breaks down in the details.

Typical operational issues:

- business data inconsistencies across profiles,

- low-fit directory selection for local intent,

- duplicate listing conflicts,

- unclear ownership of issue resolution,

- no structured reporting cadence after publishing.

These issues reduce trust signals and make performance attribution harder. In local SEO, small inconsistencies can have disproportionate effects over time.

Local SEO checklist failure modes

| Failure mode | Why it appears | Practical impact |
|---|---|---|
| Inconsistent NAP and core profile fields | Multiple sources used during submission | Data conflicts and correction backlog |
| Category misalignment | Generic categories chosen too quickly | Lower local-intent fit |
| Weak directory relevance filter | Quantity prioritized over fit | Lower signal quality and more maintenance load |
| No duplicate governance | Existing records not reconciled | Conflicting profiles |
| No post-launch checks | Teams assume completion equals quality | Drift and unresolved issues persist |

A checklist should prevent these five failure modes by design.
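The first failure mode, inconsistent NAP data, is usually caught by comparing every listing against one canonical record. The sketch below is a minimal, hypothetical illustration (the function names and the normalization rules are assumptions, not a standard): it strips cosmetic differences such as case, punctuation, and phone formatting, then reports which critical fields still disagree.

```python
import re

# Assumption: name, address, and phone are the critical identity fields.
CRITICAL_FIELDS = ("name", "address", "phone")


def normalize(field: str, value: str) -> str:
    """Normalize a value so cosmetic differences are not counted as mismatches."""
    if field == "phone":
        # Keep digits only: "(555) 010-2000" and "555.010.2000" compare equal.
        return re.sub(r"\D", "", value)
    # Lowercase, drop punctuation, and collapse whitespace.
    text = re.sub(r"[^\w\s]", "", value.lower())
    return " ".join(text.split())


def critical_mismatches(canonical: dict, listing: dict) -> list[str]:
    """Return the critical fields where a listing disagrees with the canonical record."""
    return [
        f for f in CRITICAL_FIELDS
        # A field missing from the listing counts as a mismatch.
        if normalize(f, listing.get(f, "")) != normalize(f, canonical[f])
    ]


canonical = {"name": "Acme Plumbing", "address": "12 Main St.", "phone": "(555) 010-2000"}
listing = {"name": "ACME Plumbing", "address": "12 Main St", "phone": "555-010-2001"}
print(critical_mismatches(canonical, listing))  # ['phone']
```

This normalization deliberately stays simple; it would still flag "St" versus "Street" as a mismatch, which many teams would rather review by hand than auto-accept.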

Directory prioritization by local intent

| Priority tier | Directory type | Inclusion logic |
|---|---|---|
| Tier 1 | Core local trust channels | Always include when profile readiness passes |
| Tier 2 | Industry-aligned local directories | Include when service/category fit is verified |
| Tier 3 | Regional ecosystem channels | Include after Tier 1/2 quality is stable |
| Tier 4 | Low-trust or low-fit channels | Exclude by default unless specific rationale exists |

This structure reduces low-signal expansion and keeps local relevance central.
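The tier policy can be written as a single inclusion rule so that every directory decision is made the same way. This is a hypothetical sketch: the `Directory` fields are illustrative, and it assumes that nothing, regardless of tier, is submitted before the profile passes readiness checks.

```python
from dataclasses import dataclass


@dataclass
class Directory:
    name: str
    tier: int                      # 1-4, per the tier table
    category_fit_verified: bool    # Tier 2 condition
    exception_rationale: str = ""  # Tier 4 override must be documented


def should_include(d: Directory, profile_ready: bool, tiers_1_2_stable: bool) -> bool:
    """Apply the tiered inclusion policy to one candidate directory."""
    # Assumption: no tier is submitted before profile readiness passes.
    if not profile_ready:
        return False
    if d.tier == 1:
        return True
    if d.tier == 2:
        return d.category_fit_verified
    if d.tier == 3:
        return tiers_1_2_stable
    # Tier 4 (or an unknown tier) is excluded unless a rationale is on record.
    return bool(d.exception_rationale.strip())
```

Keeping the Tier 4 rationale as a stored string, rather than a yes/no flag, leaves an audit trail for the "specific rationale" the policy requires.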

Local SEO quality controls that matter most

| Control | What to validate | Pass condition |
|---|---|---|
| Identity consistency | name, address, phone, hours | Zero critical mismatches |
| Category precision | category/service mapping | Category set matches local intent |
| Profile completeness | core attributes, descriptions, assets | Meets predefined completeness standard |
| Duplicate handling | detection and reconciliation | No unresolved high-severity duplicates |
| Issue response | SLA and owner assignment | Every critical issue has owner and due date |

When teams skip these controls, short-term speed usually creates long-term rework.
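For the duplicate-handling control, a cheap first pass is to group listings that share a directory and a normalized phone number. The sketch below assumes that heuristic and uses hypothetical field names (`id`, `directory`, `phone`); it only surfaces candidates, and reconciliation still needs a human check of name and address.

```python
import re
from collections import defaultdict


def duplicate_groups(listings: list[dict]) -> list[list[str]]:
    """Group listing IDs that share a directory and a normalized phone number."""
    groups = defaultdict(list)
    for item in listings:
        phone = re.sub(r"\D", "", item["phone"])  # digits only
        groups[(item["directory"], phone)].append(item["id"])
    # Only keys with more than one listing are potential duplicates.
    return [ids for ids in groups.values() if len(ids) > 1]


listings = [
    {"id": "a", "directory": "dirA", "phone": "555-0100"},
    {"id": "b", "directory": "dirA", "phone": "(555) 0100"},
    {"id": "c", "directory": "dirB", "phone": "555-0100"},
]
print(duplicate_groups(listings))  # [['a', 'b']]
```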

Decision framework: submit now or fix first

Use this order:

- Fix critical profile inconsistencies.

- Resolve duplicate conflicts.

- Validate category and service mapping.

- Confirm issue-response ownership and SLA.

- Submit next wave only after all four checks pass.

This gate-first model keeps local SEO execution stable during scale.
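Because the gates are ordered, the decision can be expressed as "return the first gate that fails". A minimal sketch, assuming the check results are tracked as booleans under illustrative key names; a check that was never recorded is treated as failed, so a wave cannot slip through by omission.

```python
# Ordered gates from the decision framework; key names are illustrative.
GATES = [
    ("no_critical_inconsistencies", "Fix critical profile inconsistencies"),
    ("duplicates_resolved", "Resolve duplicate conflicts"),
    ("categories_validated", "Validate category and service mapping"),
    ("sla_owner_confirmed", "Confirm issue-response ownership and SLA"),
]


def next_wave_decision(checks: dict[str, bool]) -> str:
    """Return 'submit' if every gate passes, otherwise the first blocking action."""
    for key, action in GATES:
        # A missing check counts as failed: gates must be explicitly passed.
        if not checks.get(key, False):
            return f"hold: {action}"
    return "submit"


print(next_wave_decision({"no_critical_inconsistencies": True}))
# hold: Resolve duplicate conflicts
```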

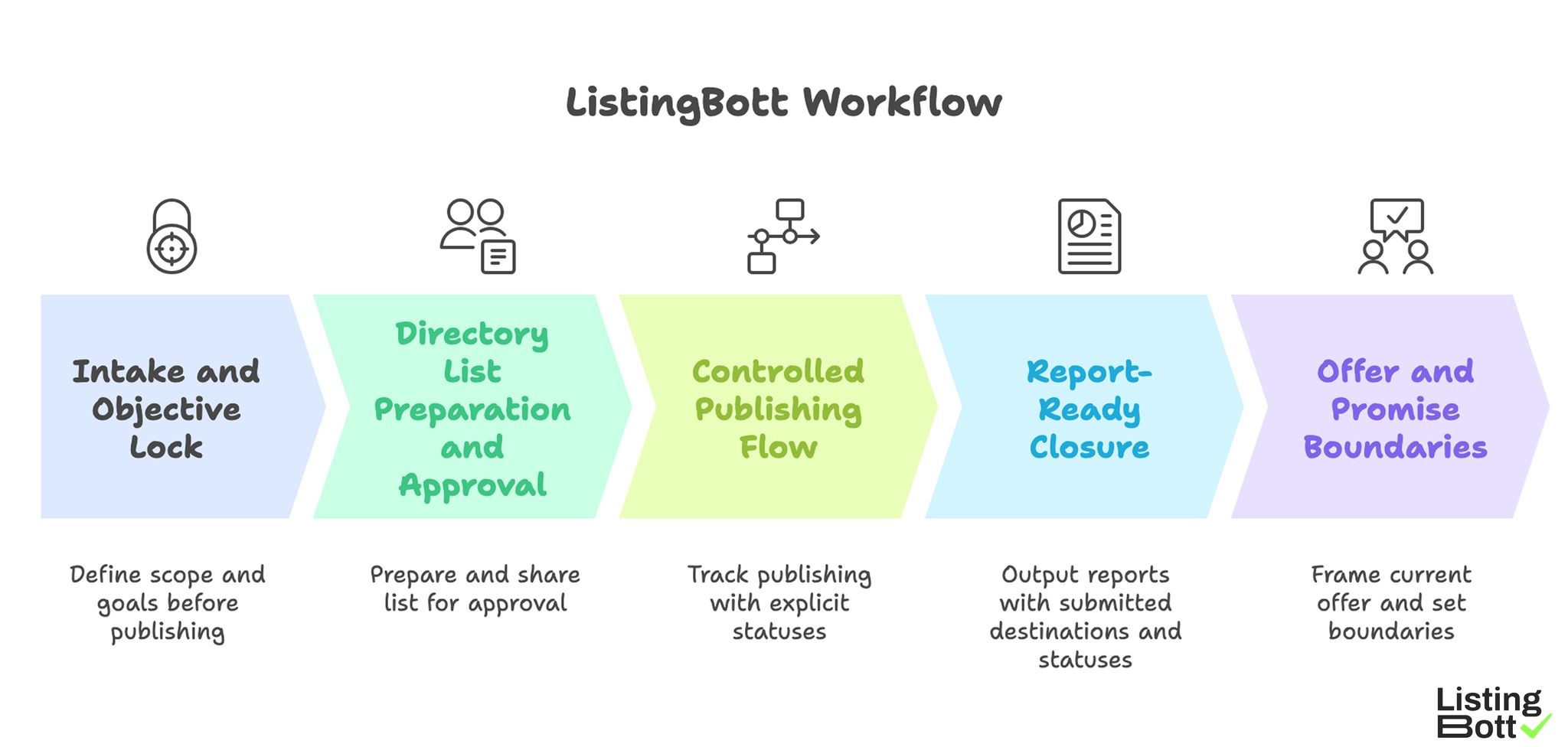

How ListingBott works

ListingBott uses a structured process: client-form intake, directory-list preparation and approval, publishing, and report handoff. The workflow is designed to keep scope and status visible through the cycle.

1) Intake and objective lock

Execution starts with a complete intake that defines scope and goals before publishing begins.

2) Directory list preparation and approval

A proposed list is prepared and shared for approval before broad publishing. This supports relevance and expectation alignment.

3) Controlled publishing flow

Publishing is tracked with explicit statuses so teams can diagnose and resolve issues reliably.

4) Report-ready closure

Each cycle ends with report output: submitted destinations, current statuses, pending items, and recommended next actions.

5) Offer and promise boundaries

Current offer framing includes a one-time payment model, publication to 100+ directories (per current website language), no hidden extra fees (per current FAQ language), and a possible refund if the process has not started.

ListingBott does not promise guaranteed ranking positions, guaranteed traffic by a specific date, guaranteed indexing speed, or outcomes controlled by third-party platforms.

For DR-goal projects, DR growth to 15 applies only in the qualified setup: a starting DR below 15, an explicit domain growth goal, and an approved directory list.

Teams that run repeated local cycles can combine this process with broader local listing management governance.

ListingBott Workflow

Proof/results

Local SEO submission quality should be measured with operational and directional metrics together. Operational metrics reveal execution reliability; directional metrics show whether the program is supporting business outcomes.

Local SEO submission scorecard

| Metric group | Example metrics | Review cadence | Why it matters |
|---|---|---|---|
| Data quality | profile consistency score, critical-field error rate | Weekly | Indicates trust-signal health |
| Process quality | issue closure SLA, unresolved issue age | Weekly | Indicates operational reliability |
| Coverage quality | accepted high-fit listing share | Per wave | Indicates relevance discipline |
| Reporting quality | recommendation action rate | Per cycle | Indicates decision-loop strength |
| Outcome direction | referral trend, assisted actions | Monthly | Indicates business support trend |

This scorecard is more useful than raw submission count.
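The two data-quality metrics can be computed from the same per-profile audit. The definitions below are one reasonable reading, not a standard: the consistency score is the share of priority profiles with zero critical mismatches, and the critical-field error rate is mismatched critical fields divided by all critical fields checked (three per profile is an assumption).

```python
def data_quality_metrics(mismatches_per_profile: list[int], critical_fields: int = 3) -> dict:
    """Compute two data-quality scorecard metrics from per-profile mismatch counts.

    mismatches_per_profile[i] is the number of critical fields (e.g. name,
    address, phone) on priority profile i that disagree with canonical data.
    """
    if not mismatches_per_profile:
        raise ValueError("no profiles audited")
    profiles = len(mismatches_per_profile)
    clean = sum(1 for n in mismatches_per_profile if n == 0)
    return {
        # Share of profiles with zero critical mismatches.
        "consistency_score": clean / profiles,
        # Mismatched critical fields out of all critical fields checked.
        "critical_field_error_rate": sum(mismatches_per_profile) / (profiles * critical_fields),
    }


print(data_quality_metrics([0, 0, 1, 2]))
# {'consistency_score': 0.5, 'critical_field_error_rate': 0.25}
```

Tracking both numbers matters: one badly broken profile and several slightly wrong ones can produce the same error rate but very different consistency scores.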

Reporting checklist for local SEO decisions

Each cycle report should answer:

- What changed in this cycle?

- Which critical issues remain open?

- Which issues repeated from the previous cycle?

- What decisions are required now?

- Who owns each next action and by when?

If reports cannot answer these, process quality is still underdeveloped.
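One way to enforce these questions is to give the report a fixed structure and check it before it is circulated. This is a hypothetical sketch: the field names are illustrative, and it distinguishes "not answered" (`None`) from "answered: nothing to report" (an empty list), so a cycle with zero open issues still passes.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional

# The four questions answered by lists in each cycle report.
REQUIRED_ANSWERS = ("changes", "open_critical_issues", "repeated_issues", "decisions_required")


@dataclass
class Action:
    description: str
    owner: Optional[str] = None
    due: Optional[date] = None


@dataclass
class CycleReport:
    # None means "not answered"; an empty list means "answered: nothing to report".
    changes: Optional[list] = None
    open_critical_issues: Optional[list] = None
    repeated_issues: Optional[list] = None
    decisions_required: Optional[list] = None
    actions: list = field(default_factory=list)


def report_gaps(report: CycleReport) -> list[str]:
    """List the checklist questions a cycle report fails to answer."""
    gaps = [f"unanswered: {name}" for name in REQUIRED_ANSWERS if getattr(report, name) is None]
    # Fifth question: every next action needs an owner and a due date.
    gaps += [
        f"missing owner/due date: {a.description}"
        for a in report.actions
        if not a.owner or a.due is None
    ]
    return gaps
```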

Quality thresholds before scaling

| Threshold | Minimum standard |
|---|---|
| Data consistency | Stable high consistency across priority profiles |
| SLA stability | Critical issues resolved within defined window |
| Duplicate control | Low unresolved duplicate conflict rate |
| Recommendation execution | High follow-through on action items |
| Capacity readiness | Team can absorb next-wave exception volume |

Scale should be gated by quality readiness, not timeline pressure.
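The thresholds above are qualitative, so teams have to pick numbers before they can gate on them. The sketch below uses placeholder values that are purely hypothetical, and reads "consistently" as "passed in each of the last three weekly reviews"; capacity readiness stays a human judgment passed in as a flag.

```python
import operator

# Hypothetical numeric stand-ins for the qualitative thresholds; tune per team.
THRESHOLDS = {
    "consistency_score": (operator.ge, 0.95),          # data consistency
    "critical_sla_met_rate": (operator.ge, 0.90),      # SLA stability
    "unresolved_duplicate_rate": (operator.le, 0.02),  # duplicate control
    "action_follow_through": (operator.ge, 0.80),      # recommendation execution
}


def ready_to_scale(weekly_metrics: list[dict], capacity_ok: bool, weeks_required: int = 3) -> bool:
    """True only if every threshold passed in each of the last `weeks_required` weeks."""
    # Capacity readiness is a judgment call, so it is passed in as a flag.
    if not capacity_ok or len(weekly_metrics) < weeks_required:
        return False
    return all(
        compare(week[metric], limit)
        for week in weekly_metrics[-weeks_required:]
        for metric, (compare, limit) in THRESHOLDS.items()
    )
```

Requiring a run of passing weeks, rather than one good week, is what separates "quality readiness" from a lucky snapshot.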

Local SEO risk controls

| Risk | Early signal | Control |
|---|---|---|
| Over-expansion | More Tier 3/4 additions without evidence | Enforce tiered inclusion policy |
| Recurring inconsistencies | Same critical errors reappear | Root-cause review and template updates |
| Slow issue closure | Pending high-severity issues increase | SLA escalation protocol |
| Weak reporting discipline | Reports lack owner actions | Mandatory action-owner section |
| Decision drift | Next-wave scope changes without rationale | Approval checkpoint before each wave |

These controls reduce volatility and improve cycle predictability.
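The "same critical errors reappear" signal is easy to detect mechanically if each cycle records its critical errors as keys. A minimal sketch, assuming a hypothetical `"directory:field"` key format: any key seen in at least two distinct cycles becomes a candidate for root-cause review.

```python
from collections import Counter


def recurring_errors(cycles: list[set[str]], min_cycles: int = 2) -> list[str]:
    """Return error keys seen in at least `min_cycles` distinct cycles."""
    counts = Counter()
    for errors in cycles:
        # set(): count each error at most once per cycle.
        counts.update(set(errors))
    return sorted(key for key, n in counts.items() if n >= min_cycles)


cycles = [{"dirA:phone", "dirB:hours"}, {"dirA:phone"}, {"dirC:name"}]
print(recurring_errors(cycles))  # ['dirA:phone']
```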

Expectation discipline in local SEO

Local directory submission can strengthen consistency and support visibility trends, but it should not be framed as fixed-date ranking guarantees. Reliable teams optimize for process quality and directional progress over repeated cycles.

This mindset keeps planning realistic and improves trust.

Implementation checklist

Use this implementation checklist to run local SEO directory submissions with fewer errors and clearer decisions.

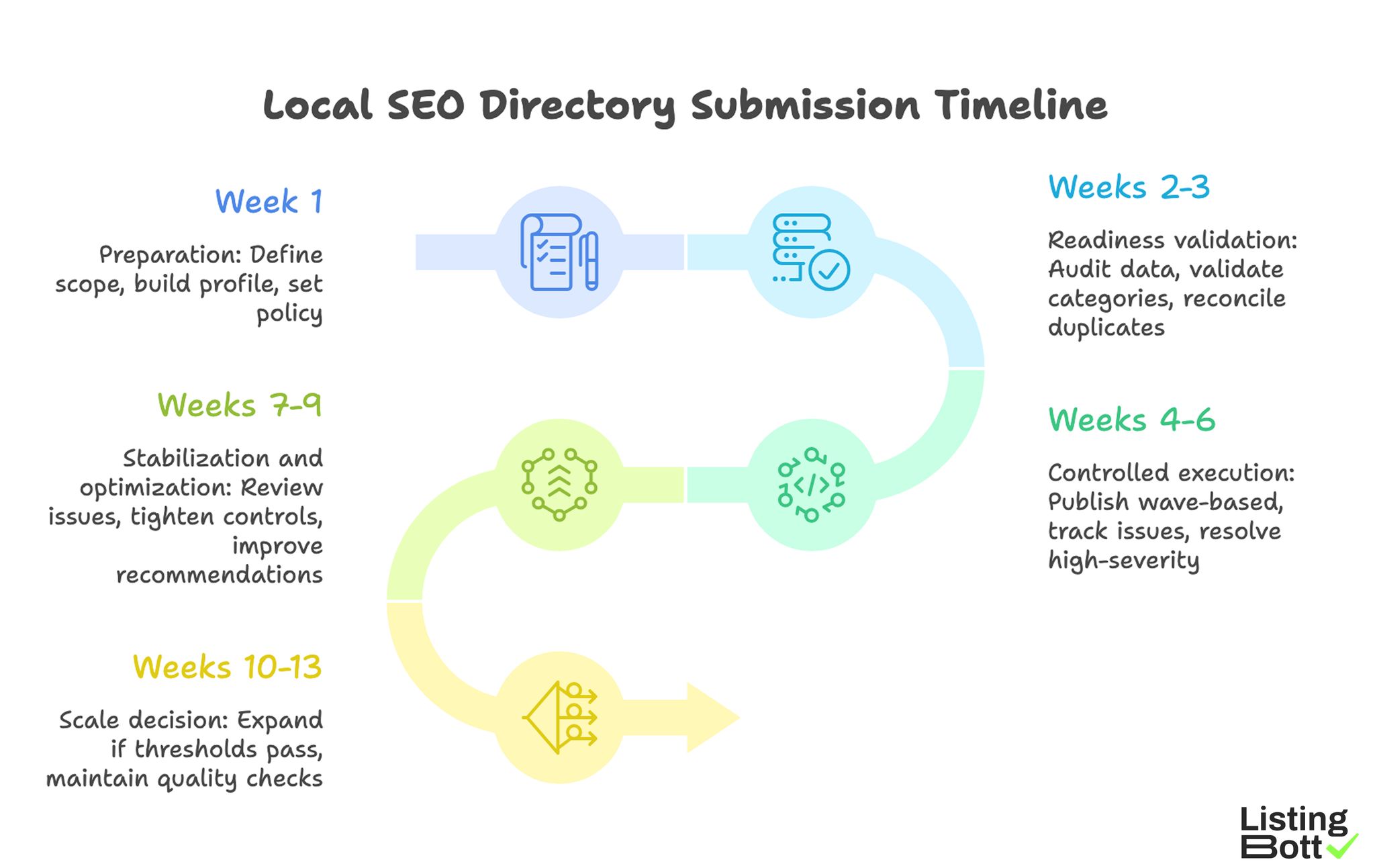

Phase 1: Preparation (week 1)

- Define local scope and target geography.

- Build canonical profile data sheet.

- Set directory tier policy and exclusions.

- Define SLA and issue severity rules.

- Assign owner for cycle governance.

Phase 2: Readiness validation (weeks 2-3)

- Run full data consistency audit.

- Validate category/service mapping.

- Screen and reconcile known duplicates.

- Confirm report template and decision fields.

- Approve first controlled wave.

Phase 3: Controlled execution (weeks 4-6)

- Publish with wave-based sequencing.

- Track status and issues daily during active windows.

- Resolve high-severity items first.

- Update checklist pass/fail records.

- Close wave with recommendation summary.

Phase 4: Stabilization and optimization (weeks 7-9)

- Review recurring issue categories.

- Tighten template and QA controls.

- Reduce low-fit inclusion drift.

- Improve recommendation follow-through.

- Revalidate scale readiness thresholds.

Phase 5: Scale decision (weeks 10-13)

- Expand only if thresholds pass consistently.

- Keep weekly quality checks mandatory.

- Preserve approval checkpoint before each wave.

- Document lessons and SOP updates.

- Set next-quarter local SEO submission plan.

Weekly operator checklist

- Verify critical fields across priority listings.

- Review open issues by severity and SLA age.

- Confirm owner/due-date on next actions.

- Check duplicate conflict status.

- Approve or hold next-wave scope based on gates.
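The "review open issues by severity and SLA age" step can be reduced to a sorted list of overdue items. This sketch is hypothetical throughout: the severity names and SLA windows are placeholders for whatever the team defined in Phase 1, and issues are plain dicts with `id`, `severity`, and `opened` keys.

```python
from datetime import date

# Hypothetical SLA windows in days per severity level.
SLA_DAYS = {"critical": 2, "high": 5, "medium": 10, "low": 20}
SEVERITY_ORDER = ["critical", "high", "medium", "low"]


def overdue_issues(issues: list[dict], today: date) -> list[dict]:
    """Return open issues past their SLA, most severe first, then oldest first."""
    overdue = []
    for issue in issues:
        age = (today - issue["opened"]).days
        if age > SLA_DAYS[issue["severity"]]:
            overdue.append({**issue, "age_days": age})
    overdue.sort(key=lambda i: (SEVERITY_ORDER.index(i["severity"]), -i["age_days"]))
    return overdue


issues = [
    {"id": 1, "severity": "low", "opened": date(2025, 2, 1)},
    {"id": 2, "severity": "critical", "opened": date(2025, 3, 9)},
    {"id": 3, "severity": "critical", "opened": date(2025, 3, 1)},
]
print([i["id"] for i in overdue_issues(issues, date(2025, 3, 10))])  # [3, 1]
```

Sorting by severity before age keeps a fresh critical breach above a stale low-severity one, which matches the "resolve high-severity items first" rule.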

Local SEO Directory Submission Timeline

Common implementation mistakes and fixes

| Mistake | Effect | Fix |
|---|---|---|
| Rushing submission without readiness checks | High correction load | Enforce pre-wave checklist gate |
| No tier strategy | Low-fit coverage creep | Use intent-based tier policy |
| Ignoring duplicate risk | Conflicting local signals | Run recurring duplicate audit |
| Weak reporting format | Slow decisions | Require owner-action recommendations |
| Scaling on schedule, not quality | Error amplification | Gate scale with quality thresholds |

FAQ

1) What is the first step in local SEO directory submission?

Create a canonical profile dataset and define your tiered directory policy before publishing.

2) How many directories should we start with?

Start with a controlled high-fit set and expand only after quality thresholds stabilize.

3) Which metric is most useful in early cycles?

Consistency and issue-closure metrics are the strongest early indicators.

4) How often should local SEO submission reports be reviewed?

Weekly for operations and monthly for strategic decisions.

5) Can local SEO directory submissions guarantee rankings?

No. They support consistency and directional visibility, but fixed-date guarantees are not reliable.

6) When should teams scale their submission program?

Only after data quality, SLA, and recommendation execution are consistently stable.

Final takeaway

Local SEO directory submission is most effective when run as a checklist-driven operations process. Teams that enforce readiness gates, tiered coverage logic, and action-oriented reporting typically achieve better reliability and clearer long-term outcomes.