Quick answer

Most local listing management services look similar on landing pages, but quality varies significantly in execution. The difference usually comes down to three things: directory quality standards, issue-resolution process, and reporting discipline.

The safest way to choose is to use a weighted comparison framework before signing, then verify provider quality in a short controlled rollout window.

If you are evaluating local listing management services, prioritize operating reliability over headline volume claims.


Problem framing

Many teams choose providers using incomplete criteria: price, promised speed, and directory count. Those inputs matter, but they rarely predict ongoing delivery quality.

Where decisions often go wrong:

- vendor explains outputs but not process controls,

- proposal emphasizes volume with unclear quality filters,

- correction SLAs are vague or absent,

- reporting is activity-heavy but action-light,

- escalation ownership is undefined.

These problems usually appear after onboarding, when switching costs are already high. A stronger pre-contract comparison process reduces that risk.

Why service comparison is hard

| Comparison trap | Why it happens | What it hides |
|---|---|---|
| Same-looking feature lists | Vendors mirror competitor language | Differences in execution quality |
| Price-first evaluation | Budget pressure and procurement shortcuts | Total cost of correction and rework |
| Generic case claims | Marketing-led narratives | Relevance to your team’s real workflow |
| No operational test | Team wants immediate launch | Weaknesses in SLA and issue handling |

A useful comparison needs operational evidence, not just marketing promises.

Weighted comparison framework

| Criterion | Weight | What to verify | Pass signal |
|---|---|---|---|
| Selection quality | 20% | How directories are included/excluded | Clear fit-based criteria and exclusions |
| QA process strength | 20% | Pre-publish checks and correction handling | Documented QA checklist + owner flow |
| SLA and escalation clarity | 20% | Response windows and escalation path | Specific SLAs and named escalation stages |
| Reporting usefulness | 20% | Whether reports include next actions | Action-oriented reports with owner/due date |
| Workflow transparency | 10% | Stage visibility from intake to monitoring | Status model visible by stage |
| Pricing clarity | 10% | Hidden fees and scope boundaries | Transparent scope and add-on conditions |

A provider can score well overall even with one weaker area, but low scores in QA or SLA should usually block selection.
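The weighting and the QA/SLA blocking rule can be expressed as a small scoring helper. This is a minimal sketch, not a prescribed tool: the weights come from the table above, while the 1-5 scoring scale, the blocking threshold of 3, and all names (`WEIGHTS`, `score_vendor`, the criterion keys) are assumptions made for illustration.

```python
# Weights taken from the framework table; they sum to 1.0.
WEIGHTS = {
    "selection_quality": 0.20,
    "qa_process": 0.20,
    "sla_escalation": 0.20,
    "reporting": 0.20,
    "workflow_transparency": 0.10,
    "pricing_clarity": 0.10,
}

# Assumption: each criterion is scored 1-5, and a score below 3 on
# QA or SLA blocks selection regardless of the weighted total.
BLOCKING_CRITERIA = ("qa_process", "sla_escalation")
MIN_BLOCKING_SCORE = 3


def score_vendor(scores: dict[str, int]) -> tuple[float, bool]:
    """Return (weighted score, blocked) for one vendor."""
    missing = set(WEIGHTS) - set(scores)
    if missing:
        raise ValueError(f"missing criteria: {sorted(missing)}")
    total = sum(WEIGHTS[name] * scores[name] for name in WEIGHTS)
    blocked = any(scores[name] < MIN_BLOCKING_SCORE for name in BLOCKING_CRITERIA)
    return round(total, 2), blocked


# Hypothetical vendors: B has the higher total but a weak QA score.
vendor_a = {"selection_quality": 4, "qa_process": 4, "sla_escalation": 4,
            "reporting": 4, "workflow_transparency": 4, "pricing_clarity": 3}
vendor_b = {"selection_quality": 5, "qa_process": 2, "sla_escalation": 5,
            "reporting": 5, "workflow_transparency": 5, "pricing_clarity": 5}

for name, scores in (("A", vendor_a), ("B", vendor_b)):
    total, blocked = score_vendor(scores)
    print(name, total, "BLOCKED" if blocked else "ok")
```

Keeping the block flag separate from the total makes the trade-off visible: a vendor can outscore its competitors overall and still be excluded for a weak QA or SLA result.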

Service-tier comparison table

| Service tier | Typical strengths | Typical risks | Best-fit buyer |
|---|---|---|---|
| Budget/entry packages | Fast start, lower upfront cost | Lower process depth, limited customization | Small pilot with strict scope |
| Mid-market managed services | Better structure and reporting | Quality varies by provider discipline | Teams needing consistent operations |
| High-touch enterprise-style service | Strong governance potential | Higher cost and longer setup cycles | Multi-location orgs with compliance load |
| Hybrid execution model | Balance of control + support | Requires clear handoff ownership | Teams transitioning from manual process |

Tier labels are less important than real workflow quality.

Red flags before signing

- No clear explanation of directory inclusion/exclusion logic.

- SLA language that is generic or non-committal.

- Reporting sample without actionable recommendations.

- No documented correction workflow.

- Promises of guaranteed ranking outcomes by specific dates.

- Contract wording that leaves scope and revisions undefined.

One or two minor risks may be manageable. Multiple red flags usually mean operational instability later.
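A red-flag screen like this can be recorded as a simple count during vendor review. The sketch below is illustrative only: the flag identifiers are hypothetical labels for the six items listed above, and the cutoff of two tolerated flags is an assumption drawn from the "one or two" wording, not a fixed rule.

```python
# Hypothetical identifiers for the six red flags listed above.
RED_FLAGS = {
    "no_directory_selection_logic",
    "generic_sla_language",
    "report_sample_not_actionable",
    "no_correction_workflow",
    "guaranteed_rankings_by_date",
    "undefined_scope_and_revisions",
}

# Assumption: up to two flags may be manageable with mitigation.
MAX_TOLERATED_FLAGS = 2


def screen_vendor(observed: set[str]) -> str:
    """Classify a vendor by the number of red flags observed."""
    unknown = observed - RED_FLAGS
    if unknown:
        raise ValueError(f"unknown flags: {sorted(unknown)}")
    if not observed:
        return "proceed"
    if len(observed) <= MAX_TOLERATED_FLAGS:
        return "proceed_with_mitigation"
    return "eliminate"


print(screen_vendor({"generic_sla_language"}))
print(screen_vendor({"generic_sla_language", "no_correction_workflow",
                     "guaranteed_rankings_by_date"}))
```

A count treats every flag as equally serious. Teams that consider one flag disqualifying on its own (for example, guaranteed rankings by date) could add it as a separate hard stop.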

How ListingBott works

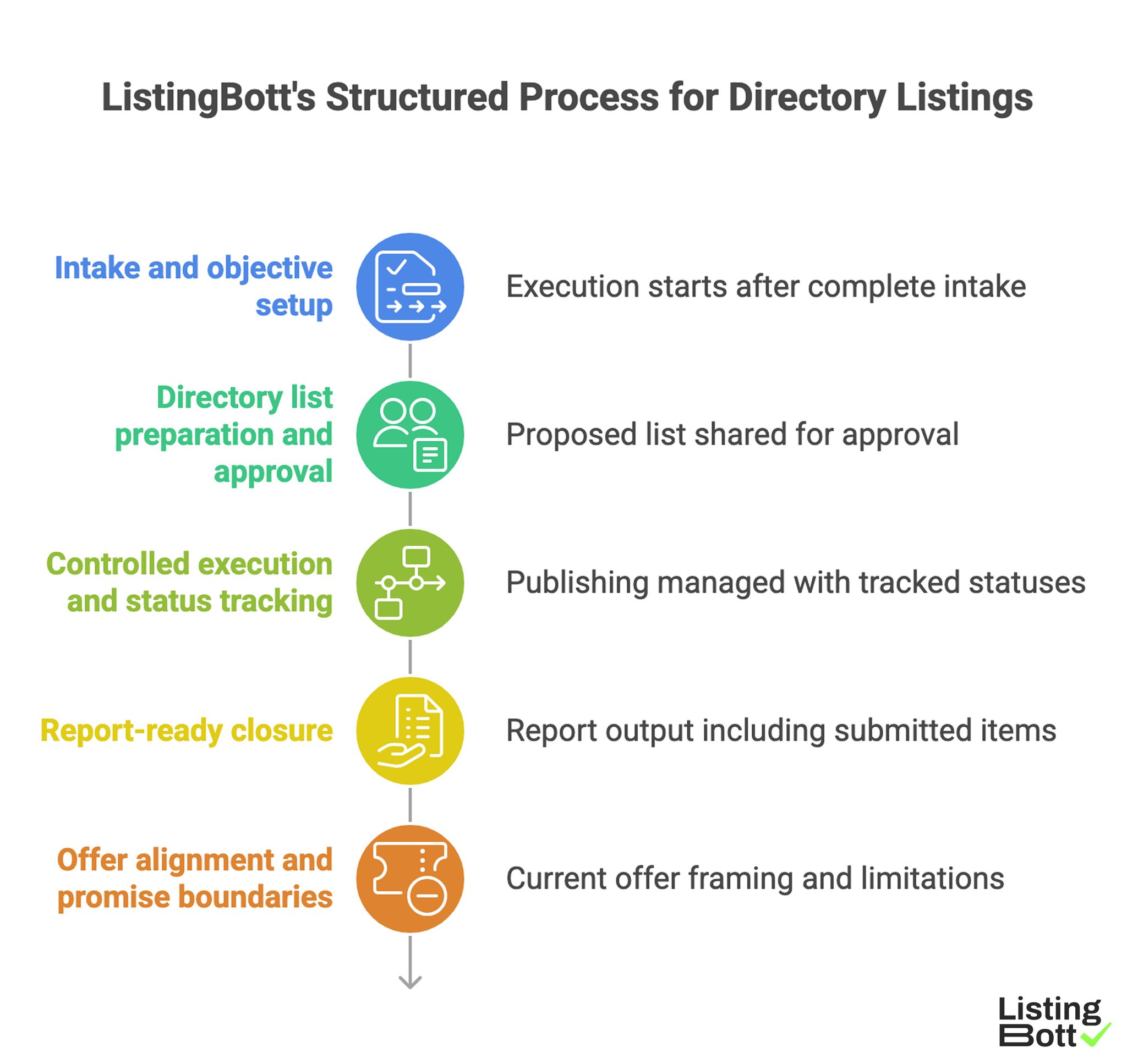

ListingBott follows a structured process: client form intake, directory list preparation and approval, publishing, and report handoff. The model is designed to keep delivery status and next actions clear.

1) Intake and objective setup

Execution starts after complete intake. This sets scope and avoids ambiguous requests during the cycle.

2) Directory list preparation and approval

A proposed directory list is shared for approval before broad publishing, helping keep relevance and expectations aligned.

3) Controlled execution and status tracking

Publishing is managed with tracked statuses, improving issue visibility and follow-up reliability.

4) Report-ready closure

Each cycle ends with report output including submitted items, current statuses, pending issues, and recommendations.

5) Offer alignment and promise boundaries

Current offer framing includes a one-time payment model, publication to 100+ directories (per current website language), no hidden extra fees (per current FAQ language), and a possible refund if the process has not started.

ListingBott does not promise guaranteed ranking position, guaranteed traffic by a specific date, guaranteed indexing speed, or outcomes controlled by third-party platforms.

For DR-goal projects, DR growth to 15 applies only in the qualified setup: a starting DR below 15, an explicit domain growth goal, and an approved directory list.

Teams comparing service options can map this process to their own wider workflow for submitting a local business to directories, with clearer controls.


Proof/results

The best evaluation model separates sales claims from operating evidence. Providers should be judged by measurable process reliability during real cycles.

60-90 day provider evaluation model

| Evaluation area | Metric examples | Healthy direction |
|---|---|---|
| Delivery reliability | wave completion vs plan, unresolved issue age | More predictable completion over time |
| Quality consistency | correction recurrence rate, profile consistency score | Fewer repeated corrections |
| SLA performance | response time compliance, escalation closure rate | Higher SLA adherence |
| Reporting usefulness | action implementation rate, decision latency | Faster actionable decisions |
| Outcome support | referral trend, assisted business actions | Directional improvement over cycles |

This model gives a practical basis for renewal decisions.
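Several of the metrics in the table can be derived from a plain issue log kept during the pilot. The following is a rough sketch under stated assumptions: the `Issue` record, its field names, and the exact metric definitions (for instance, counting an issue with no response as an SLA miss) are hypothetical choices, not a standard.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class Issue:
    opened: datetime
    first_response: Optional[datetime]  # None if the vendor has not responded
    resolved: Optional[datetime]        # None if still open
    is_repeat: bool                     # same correction needed again after a fix


def pilot_metrics(issues: list[Issue], now: datetime,
                  response_sla: timedelta) -> dict[str, float]:
    """Compute three of the example metrics from an issue log."""
    if not issues:
        raise ValueError("no issues recorded")
    # Assumption: an issue with no response yet counts as an SLA miss.
    on_time = sum(
        1 for issue in issues
        if issue.first_response is not None
        and issue.first_response - issue.opened <= response_sla
    )
    open_ages = [(now - issue.opened).days for issue in issues
                 if issue.resolved is None]
    repeats = sum(1 for issue in issues if issue.is_repeat)
    return {
        "sla_response_compliance": on_time / len(issues),
        "oldest_open_issue_days": float(max(open_ages, default=0)),
        "correction_recurrence_rate": repeats / len(issues),
    }
```

Tracked weekly, these three numbers show the direction of travel the table calls "healthy": compliance rising, the oldest open issue getting younger, and recurrence falling.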

Contract-control checklist

| Contract area | What to include | Why it matters |
|---|---|---|
| Scope definition | Included deliverables and exclusions | Prevents surprise gaps |
| SLA terms | Response and resolution windows | Protects operational predictability |
| Revision policy | Number/type of included corrections | Controls rework expectations |
| Reporting standard | Required sections and cadence | Ensures decision-quality output |
| Exit/transition terms | Data handoff and process continuity | Reduces switching risk |

Weak contracts usually create hidden costs later.

Service quality audit questions (monthly)

- Are critical issues resolved within SLA windows?

- Are recurring errors decreasing rather than repeating?

- Do reports include owners and due dates?

- Are scope exceptions staying under control?

- Is decision quality improving cycle to cycle?

If the answer is "no" to most of these, provider quality likely needs intervention.
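The monthly audit can be logged as a set of yes/no answers and checked against the "most answers are no" rule. This is a minimal sketch: the check names are hypothetical, each is phrased so that `True` means the healthy answer, and "most" is interpreted here as more than half, which is an assumption.

```python
# Hypothetical check names; True always means the healthy answer.
AUDIT_CHECKS = (
    "critical_issues_resolved_within_sla",
    "recurring_errors_decreasing",
    "reports_include_owners_and_due_dates",
    "scope_exceptions_under_control",
    "decision_quality_improving",
)


def needs_intervention(answers: dict[str, bool]) -> bool:
    """Return True when more than half of the audit checks fail."""
    missing = set(AUDIT_CHECKS) - set(answers)
    if missing:
        raise ValueError(f"unanswered checks: {sorted(missing)}")
    failed = sum(1 for check in AUDIT_CHECKS if not answers[check])
    return failed > len(AUDIT_CHECKS) / 2


# Example month: three of five checks fail.
march = {
    "critical_issues_resolved_within_sla": False,
    "recurring_errors_decreasing": False,
    "reports_include_owners_and_due_dates": True,
    "scope_exceptions_under_control": True,
    "decision_quality_improving": False,
}
print(needs_intervention(march))
```

Storing each month's answers also gives a trend line, which is often more informative than any single audit result.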

Renewal decision rubric

| Renewal signal | Keep | Renegotiate | Replace |
|---|---|---|---|
| SLA adherence | High | Medium | Low |
| Quality trend | Improving | Flat | Declining |
| Reporting value | Actionable | Mixed | Low utility |
| Operational load on your team | Stable | Rising | Unsustainable |
| Contract fit | Clear and fair | Needs updates | Misaligned |

This rubric helps teams avoid emotional or ad hoc renewal decisions.
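One way to apply the rubric consistently is to map each signal rating to its column and tally the results. The sketch below is an assumption-laden illustration: the rating labels mirror the table, but the aggregation rule (the column with the most signals wins, and ties go to the more severe option) is one possible convention, not something the rubric itself specifies.

```python
from collections import Counter

# Rating-to-column mapping taken from the rubric table.
RUBRIC = {
    "sla_adherence": {"high": "keep", "medium": "renegotiate", "low": "replace"},
    "quality_trend": {"improving": "keep", "flat": "renegotiate", "declining": "replace"},
    "reporting_value": {"actionable": "keep", "mixed": "renegotiate", "low utility": "replace"},
    "operational_load": {"stable": "keep", "rising": "renegotiate", "unsustainable": "replace"},
    "contract_fit": {"clear and fair": "keep", "needs updates": "renegotiate", "misaligned": "replace"},
}

# Used only to break ties toward the more cautious outcome (an assumption).
SEVERITY = {"keep": 0, "renegotiate": 1, "replace": 2}


def renewal_decision(signals: dict[str, str]) -> str:
    """Tally rubric columns; a missing or unknown rating raises KeyError."""
    votes = Counter(RUBRIC[name][signals[name].lower()] for name in RUBRIC)
    return max(votes, key=lambda decision: (votes[decision], SEVERITY[decision]))


# Example review: two "keep" signals and three "renegotiate" signals.
review = {
    "sla_adherence": "High",
    "quality_trend": "Flat",
    "reporting_value": "Mixed",
    "operational_load": "Stable",
    "contract_fit": "Needs updates",
}
print(renewal_decision(review))
```

A simple tally weights all five signals equally. A team that treats low SLA adherence as decisive on its own could check that signal first and only fall back to the tally otherwise.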

Interpreting outcomes without overpromising

Service quality can improve consistency and support visibility outcomes, but no responsible vendor should guarantee exact ranking positions or fixed-date traffic outcomes. Evaluation should focus on measurable reliability and trend direction.

Expectation discipline protects both trust and budget.

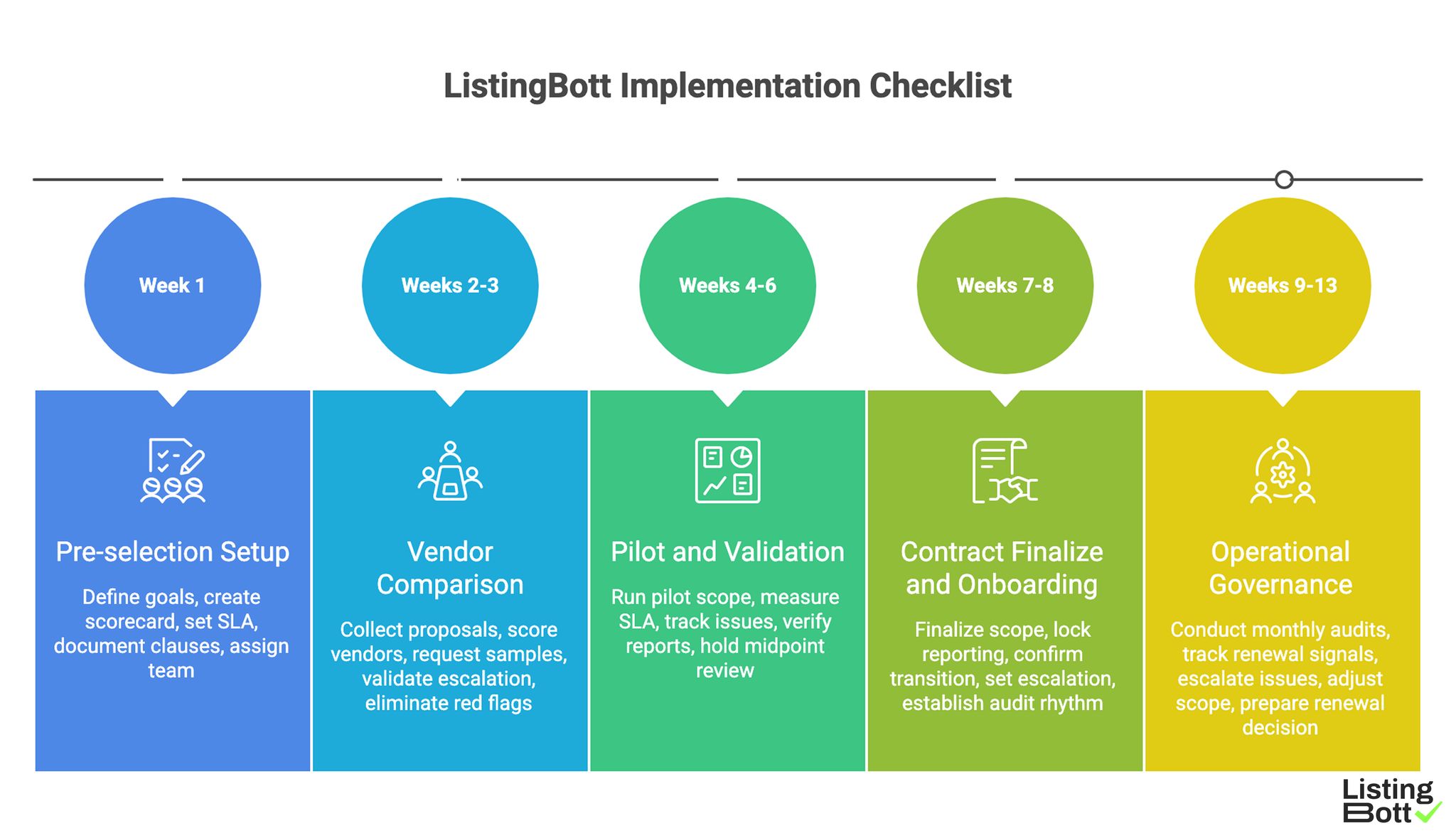

Implementation checklist

Use this checklist to select and manage local listing services with fewer surprises.

Phase 1: Pre-selection setup (week 1)

- Define business goals and non-negotiable requirements.

- Create weighted comparison scorecard.

- Set minimum SLA and reporting standards.

- Document must-have contract clauses.

- Assign evaluation owner and reviewer.

Phase 2: Vendor comparison (weeks 2-3)

- Collect standardized proposals from shortlisted vendors.

- Score each vendor using the weighted matrix.

- Request sample report and issue workflow.

- Validate escalation structure and named roles.

- Eliminate providers with major red flags.

Phase 3: Pilot and validation (weeks 4-6)

- Run a controlled pilot scope.

- Measure SLA adherence and correction quality weekly.

- Track unresolved issue age and repeat errors.

- Verify report actionability and owner clarity.

- Hold midpoint review and adjust scope if needed.

Phase 4: Contract finalization and onboarding (weeks 7-8)

- Finalize scope boundaries and correction policy.

- Lock reporting cadence and mandatory sections.

- Confirm transition and handoff procedures.

- Set escalation path and contact hierarchy.

- Establish monthly quality audit rhythm.

Phase 5: Operational governance (weeks 9-13)

- Run monthly service quality audit.

- Track renewal rubric signals.

- Escalate recurring issue themes quickly.

- Adjust scope based on measured performance.

- Prepare renewal/replace decision memo.

Weekly client-side checklist

- Review high-severity issues and open SLA breaches.

- Validate pending corrections and due dates.

- Confirm owner assignment for action items.

- Check that report outputs align with contract standard.

- Update risk log for recurring failures.


Common service-buying mistakes and fixes

| Mistake | Effect | Fix |
|---|---|---|
| Buying on price only | Hidden correction and coordination costs | Use total operating cost and SLA-weighted scoring |
| No pilot phase | Problems discovered too late | Run controlled validation cycle |
| Weak contract terms | Scope and support ambiguity | Add explicit scope/SLA/revision clauses |
| Ignoring reporting quality | Slow decisions and repeated issues | Require action-oriented reporting template |
| No renewal rubric | Subjective keep/replace decisions | Use measurable renewal decision model |

FAQ

1) What matters most when comparing local listing services?

QA process strength, SLA clarity, and reporting actionability usually matter more than directory count alone.

2) Should we always run a pilot before a full contract?

Yes, when possible. A controlled pilot reveals operational quality that proposals cannot.

3) How do we spot a risky provider quickly?

Look for vague SLA terms, weak reporting samples, and no clear correction workflow.

4) Can a low-cost provider still be the right choice?

Yes, if quality controls and SLA performance are strong and consistent.

5) What is a good renewal decision process?

Use a fixed rubric covering SLA adherence, quality trend, reporting value, operational load, and contract fit.

6) Can listing services guarantee rankings by date?

No. Responsible providers focus on process quality and directional outcome support, not fixed-date guarantees.

Final takeaway

Comparing local listing management services is a governance exercise, not a feature comparison. Teams that use weighted scoring, red-flag filters, pilot validation, and contract controls usually choose better partners and reduce long-term rework.