Quick answer

A strong local directory submission service is defined by process quality, not submission volume. The most reliable programs combine the right coverage map, strict QA controls, and reporting that tells you what to do next.

If one of those three pieces is weak, results become noisy and hard to scale. The goal is not to buy submissions. The goal is to buy predictable execution quality.

A local directory submission service should be managed as an operating-model decision with measurable quality gates.


Problem framing

Many local campaigns underperform for avoidable reasons: coverage is broad but low-fit, profile quality is inconsistent, and reports do not support decisions.

The most common structural issues are:

- no tiered coverage strategy,

- weak review process before submissions,

- no explicit correction SLA,

- no status normalization in reporting,

- no link between report outputs and next-cycle actions.

When these issues repeat, local teams experience rising correction costs and low confidence in what is actually improving.

Coverage design mistakes that reduce signal

| Mistake | Why teams do it | What goes wrong |
|---|---|---|
| Chasing maximum directory count | Easy KPI to communicate | Coverage quality drops and maintenance load rises |
| Ignoring local relevance tiers | Faster procurement process | High-fit local opportunities underweighted |
| Mixing low-trust directories into core waves | Short-term completion pressure | More noise, less clarity in outcomes |
| No geography-specific filters | One global rule seems simpler | Mismatch between business footprint and listing footprint |

Coverage quality must be designed, not assumed.

Local coverage framework (practical)

| Coverage tier | Role in strategy | Typical inclusion rule |
|---|---|---|
| Tier 1: Core local trust channels | Foundation for presence and consistency | Always include if profile quality is ready |
| Tier 2: Industry-relevant local channels | Qualified local discovery support | Include when category fit is proven |
| Tier 3: Regional/local ecosystem channels | Incremental local support | Include after Tier 1/2 quality is stable |
| Tier 4: Low-trust volume channels | Usually low strategic value | Exclude by default unless clear reason |

A good service should justify inclusion/exclusion decisions tier by tier.
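
The tier rules above can be sketched as a small decision function. This is a minimal illustration, not ListingBott's implementation: the `Directory` fields, the `include_decision` name, and the rationale strings are all hypothetical, and the readiness flags are assumed to come from your own QA process.

```python
from dataclasses import dataclass


@dataclass
class Directory:
    name: str
    tier: int                     # 1-4, matching the coverage tiers above
    category_fit_proven: bool = False
    exception_reason: str = ""    # written rationale for a Tier 4 exception


def include_decision(d: Directory, profile_ready: bool,
                     core_quality_stable: bool) -> tuple[bool, str]:
    """Return (include?, rationale) for one directory."""
    if d.tier == 1:
        return profile_ready, "core channel; needs ready profile"
    if d.tier == 2:
        return d.category_fit_proven, "industry channel; needs proven category fit"
    if d.tier == 3:
        return core_quality_stable, "regional channel; needs stable Tier 1/2 quality"
    if d.tier == 4:
        # Excluded by default; included only with an explicit written reason.
        return bool(d.exception_reason), d.exception_reason or "low-trust; excluded by default"
    raise ValueError(f"unknown tier: {d.tier}")
```

Returning the rationale alongside the decision keeps every inclusion or exclusion explainable tier by tier.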

QA checklist before any submission wave

| QA area | Required check | Pass condition |
|---|---|---|
| Business identity | Name, phone, address, hours consistency | Zero critical mismatches |
| Category/service mapping | Category precision and service clarity | Category set aligned with business intent |
| Profile assets | Approved descriptions/media | Uses current approved assets only |
| Duplicate risk | Existing conflicting records screened | No unresolved high-risk duplicates |
| Escalation path | Owner and SLA for issue closure | Explicit owner + response window |

This pre-submission QA block is where long-term quality is usually won.
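
One way to make the QA checklist enforceable is to encode each pass condition as a check that blocks the wave on failure. The sketch below assumes the inputs are collected manually or by your own tooling; `PreWaveQA` and `qa_failures` are hypothetical names used only for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PreWaveQA:
    critical_identity_mismatches: int       # name, phone, address, hours
    categories_match_intent: bool
    only_approved_assets: bool
    unresolved_high_risk_duplicates: int
    escalation_owner: Optional[str]
    response_window_hours: Optional[int]


def qa_failures(qa: PreWaveQA) -> list[str]:
    """Return failed QA areas; an empty list means the wave may proceed."""
    failures = []
    if qa.critical_identity_mismatches > 0:
        failures.append("business identity")
    if not qa.categories_match_intent:
        failures.append("category/service mapping")
    if not qa.only_approved_assets:
        failures.append("profile assets")
    if qa.unresolved_high_risk_duplicates > 0:
        failures.append("duplicate risk")
    # Both an owner and a response window are required for the escalation path.
    if not qa.escalation_owner or not qa.response_window_hours:
        failures.append("escalation path")
    return failures
```

Listing the failed areas, rather than returning a single boolean, tells the team what to correct before the next attempt.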

Reporting quality checklist

| Reporting element | Minimum standard | Why it matters |
|---|---|---|
| Status model | Standard states across all destinations | Prevents ambiguous progress reporting |
| Issue register | Severity, owner, due date | Enables reliable follow-up |
| Delta tracking | What changed since last cycle | Supports decision clarity |
| Recommendation block | Next actions with owners | Converts report into execution |
| Performance context | Basic KPI movement by cycle | Connects activity to outcomes |

A report without recommendation ownership is documentation, not decision support.
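
Status normalization, the first row of the checklist, can be illustrated with a simple lookup. The state names and raw labels below are assumptions chosen for the example; real directories use their own wording, so the mapping would need to be built from the labels you actually encounter.

```python
from enum import Enum


class Status(Enum):
    SUBMITTED = "submitted"
    PENDING_REVIEW = "pending_review"
    LIVE = "live"
    REJECTED = "rejected"
    NEEDS_CORRECTION = "needs_correction"


# Hypothetical raw labels mapped to the standard taxonomy.
RAW_TO_STANDARD = {
    "sent": Status.SUBMITTED,
    "awaiting approval": Status.PENDING_REVIEW,
    "in moderation": Status.PENDING_REVIEW,
    "published": Status.LIVE,
    "approved": Status.LIVE,
    "declined": Status.REJECTED,
    "fix required": Status.NEEDS_CORRECTION,
}


def normalize(raw: str) -> Status:
    """Map a destination-specific label to one standard state."""
    key = raw.strip().lower()
    if key not in RAW_TO_STANDARD:
        # Fail loudly so unmapped labels are added to the taxonomy, not guessed.
        raise ValueError(f"unmapped status label: {raw!r}")
    return RAW_TO_STANDARD[key]
```

Raising on unknown labels is a deliberate choice here: a silent default would reintroduce the ambiguous progress reporting the status model is meant to prevent.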

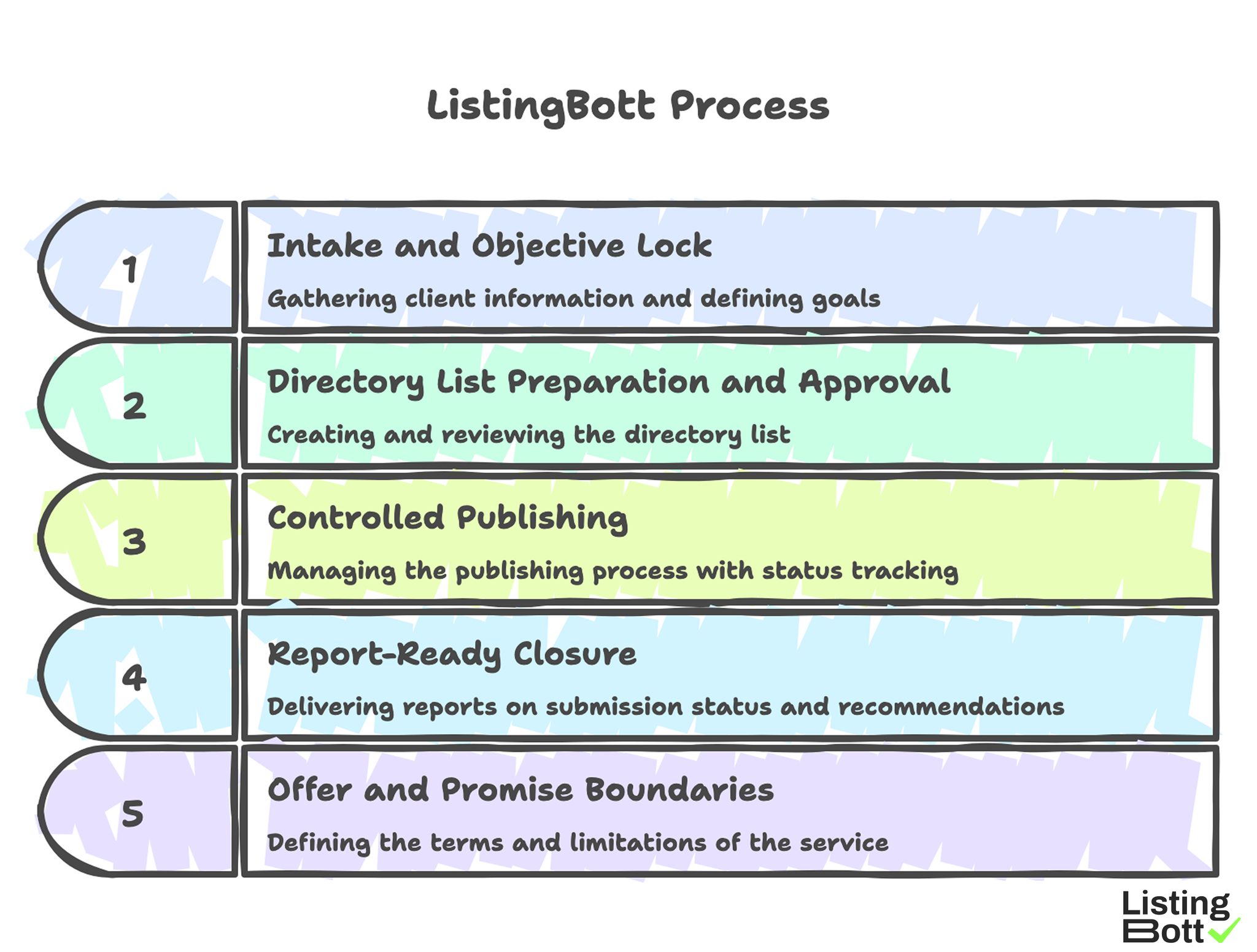

How ListingBott works

ListingBott runs a structured process: client-form intake, directory-list preparation and approval, publishing, and report handoff. The process is designed for predictable execution and clear status communication.

1) Intake and objective lock

Execution starts with complete intake so scope and goal are explicit before work begins.

2) Directory list preparation and approval

A proposed list is prepared and shared for approval before full publishing. This controls relevance and expectation alignment.

3) Controlled publishing

Publishing is managed with status tracking to improve reliability and issue visibility.

4) Report-ready closure

Delivery includes reporting on what was submitted, current status, pending items, and next recommendations.

5) Offer and promise boundaries

Current offer language includes a one-time payment model, publication to 100+ directories (per current website language), no hidden extra fees (per current FAQ language), and the possibility of a refund if the process has not started.

ListingBott does not promise guaranteed ranking positions, guaranteed traffic by a specific date, guaranteed indexing speed, or outcomes controlled by third-party platforms.

For DR-goal work, growth to DR 15 applies only in the qualified setup: a starting DR below 15, an explicit domain growth goal, and an approved directory list.

For teams mapping execution depth, this can sit inside a broader directory submission service workflow with clearer stage controls.


Proof/results

Reliable proof in local submission work is operational first: quality, consistency, and cycle-to-cycle learning. Outcome signals become easier to interpret when execution is stable.

90-day service quality scorecard

| Dimension | Metric examples | Healthy trend |
|---|---|---|
| Coverage quality | Share of accepted high-fit listings | Improves by cycle |
| QA reliability | Critical error recurrence rate | Declines consistently |
| SLA adherence | Issue response and closure within window | Increases toward target |
| Reporting actionability | Recommendations with owner + due date | Near-complete coverage |
| Decision speed | Time from report to next-cycle decision | Decreases over time |

This scorecard helps teams evaluate service quality without overpromising short-term outcome jumps.

Coverage effectiveness interpretation

Coverage should be judged by fit-adjusted performance, not raw count. Practical signals include:

- fewer low-value destinations over time,

- higher completion quality in priority tiers,

- reduced correction volume after each wave,

- more predictable cycle planning.

If these are not improving, the service model likely needs adjustment.

Local reporting audit template

Review each report with this pass/fail set:

- Are all destinations mapped to a standard status taxonomy?

- Are unresolved issues ranked by severity?

- Are recommendations assigned to named owners?

- Is there a clear delta versus the prior cycle?

- Is next-wave scope justified by evidence?

A report that fails multiple checks should trigger process correction before scale.
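
The pass/fail set above can be run as a short scripted audit. This sketch treats "multiple" as more than one failed check, which is an assumption exposed through the `max_failures` parameter; the check keys are hypothetical names for the five questions.

```python
AUDIT_CHECKS = (
    "standard_status_taxonomy",
    "issues_ranked_by_severity",
    "recommendations_have_owners",
    "delta_vs_prior_cycle",
    "next_wave_scope_justified",
)


def audit_report(answers: dict[str, bool], max_failures: int = 1) -> dict:
    """Score one report against the five audit questions."""
    missing = [c for c in AUDIT_CHECKS if c not in answers]
    if missing:
        # An unanswered question is an incomplete audit, not a pass.
        raise KeyError(f"unanswered checks: {missing}")
    failed = [c for c in AUDIT_CHECKS if not answers[c]]
    return {
        "failed": failed,
        "needs_process_correction": len(failed) > max_failures,
    }
```

Keeping the failed checks in the result lets the reviewer attach them directly to the issue register.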

Risk controls to keep service quality stable

| Risk | Leading indicator | Control |
|---|---|---|
| Over-expansion | Wave size grows while error rate is flat/rising | Scale gate based on QA thresholds |
| Reporting drift | Reports get longer but less actionable | Enforce decision-summary section |
| Correction backlog | Unresolved issue age increases | SLA escalation protocol |
| Low-fit coverage creep | More Tier 4 inclusions over time | Require inclusion rationale |
| Owner ambiguity | Recommendations without accountable names | Mandatory owner field |

These controls reduce rework and keep cycles predictable.
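
As an illustration of the scale gate in the first row, the sketch below allows expansion only when the most recent cycles all meet QA and SLA thresholds. The threshold values, the three-cycle window, and the dictionary keys are placeholder assumptions; each team would set its own targets.

```python
def may_scale(cycles: list[dict], max_error_rate: float = 0.02,
              min_sla: float = 0.90, stable_cycles: int = 3) -> bool:
    """Allow expansion only if the last `stable_cycles` cycles all pass.

    Each cycle is a dict with 'critical_error_rate' and 'sla_adherence'
    expressed as fractions between 0 and 1 (hypothetical field names).
    """
    recent = cycles[-stable_cycles:]
    if len(recent) < stable_cycles:
        return False  # not enough history to call quality stable
    return all(
        c["critical_error_rate"] <= max_error_rate
        and c["sla_adherence"] >= min_sla
        for c in recent
    )
```

Requiring several consecutive passing cycles, rather than one good cycle, guards against scaling on a single lucky result.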

Outcome expectations and trust discipline

Local submission services can support visibility and consistency outcomes, but they should not be framed as delivering guaranteed ranking results by a specific date. Sustainable evaluation should focus on repeatable process quality and directional performance movement.

This expectation model protects trust and budget planning.

Implementation checklist

Use this checklist to choose, onboard, and manage a local directory submission service with fewer surprises.

Phase 1: Pre-selection readiness (week 1)

- Define local scope and non-negotiable quality criteria.

- Set coverage tier framework and exclusion rules.

- Define minimum SLA and reporting requirements.

- Build weighted service evaluation scorecard.

- Assign internal owner for service governance.

Phase 2: Service evaluation (weeks 2-3)

- Request process docs for QA and escalation flows.

- Score providers on coverage, QA, SLA, and reporting.

- Review sample report against audit template.

- Eliminate providers with major red flags.

- Select pilot-ready provider.
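
The weighted scorecard from Phase 1 and the provider scoring in Phase 2 can be combined in a few lines. The weights and the 1-5 scale below are illustrative assumptions only; the four dimensions come from the evaluation step above.

```python
# Hypothetical weights; they must sum to 1.0.
WEIGHTS = {"coverage": 0.30, "qa": 0.30, "sla": 0.20, "reporting": 0.20}


def weighted_score(scores: dict[str, float]) -> float:
    """Combine per-dimension scores (assumed 1-5 scale) into one number."""
    if set(scores) != set(WEIGHTS):
        raise ValueError(f"expected scores for exactly: {sorted(WEIGHTS)}")
    return round(sum(WEIGHTS[k] * scores[k] for k in WEIGHTS), 2)


# Usage: compare two hypothetical providers on the same scale.
provider_a = weighted_score({"coverage": 4, "qa": 5, "sla": 3, "reporting": 4})
provider_b = weighted_score({"coverage": 5, "qa": 3, "sla": 3, "reporting": 3})
```

A single weighted number is useful for ranking, but major red flags should still eliminate a provider regardless of score.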

Phase 3: Pilot rollout (weeks 4-6)

- Start with controlled tiered coverage scope.

- Run pre-submission QA checklist before each wave.

- Track SLA adherence and issue quality weekly.

- Validate report actionability after each cycle.

- Hold midpoint correction review.

Phase 4: Stabilization and governance (weeks 7-9)

- Tighten weak workflow steps identified in pilot.

- Reduce low-fit coverage and correction loops.

- Standardize report format and owner requirements.

- Update service scope based on evidence.

- Confirm readiness gates before expansion.

Phase 5: Scale or adjust decision (weeks 10-13)

- Use scorecard + audit outcomes to decide scale.

- Expand only after QA/SLA thresholds are stable.

- Keep monthly reporting audits mandatory.

- Document recurring root causes and fixes.

- Publish next-quarter local submission plan.

Weekly management checklist

- Review open issues by severity and SLA status.

- Confirm owners and due dates for pending actions.

- Validate status consistency across destinations.

- Track repeat error categories.

- Approve next-wave scope only when quality gates pass.


Common implementation mistakes and fixes

| Mistake | Effect | Fix |
|---|---|---|
| Coverage-first buying | High activity, weak signal quality | Use tiered fit-first coverage design |
| Skipping pre-wave QA | Recurring corrections and delays | Enforce mandatory QA gate |
| Weak SLA terms | Slow issue closure | Define response and resolution windows |
| Report without owners | Low follow-through | Require owner + due-date for actions |
| Scaling too soon | Error amplification | Apply scale gate tied to quality metrics |

FAQ

1) What should I evaluate first in a local directory submission service?

Start with coverage-fit logic, QA process quality, and SLA clarity.

2) Is directory count a reliable quality indicator?

Not by itself. Fit, consistency, and issue resolution quality are stronger indicators.

3) How often should service reporting be reviewed?

Review weekly for operational issues and monthly for strategic decisions.

4) What is the biggest red flag after onboarding?

Reports that list activity but provide no clear next actions with owners.

5) Can local submission services guarantee rankings?

No. Reliable providers focus on execution quality and directional outcomes, not fixed-date guarantees.

6) When should a team scale coverage?

Only when QA reliability and SLA performance are stable across multiple cycles.

Final takeaway

A local directory submission service should be selected and managed like an operations partner, not a one-time vendor. Teams that enforce coverage rules, QA gates, and reporting audits typically get more reliable execution and better long-term decision quality.