Quick answer

Local business directory submission in Pennsylvania performs better when operations are structured by region and governed by strict approval gates. Teams that treat the state as one uniform market often run into uneven data quality, delayed correction loops, and weak reporting comparability.

A practical Pennsylvania execution path is:

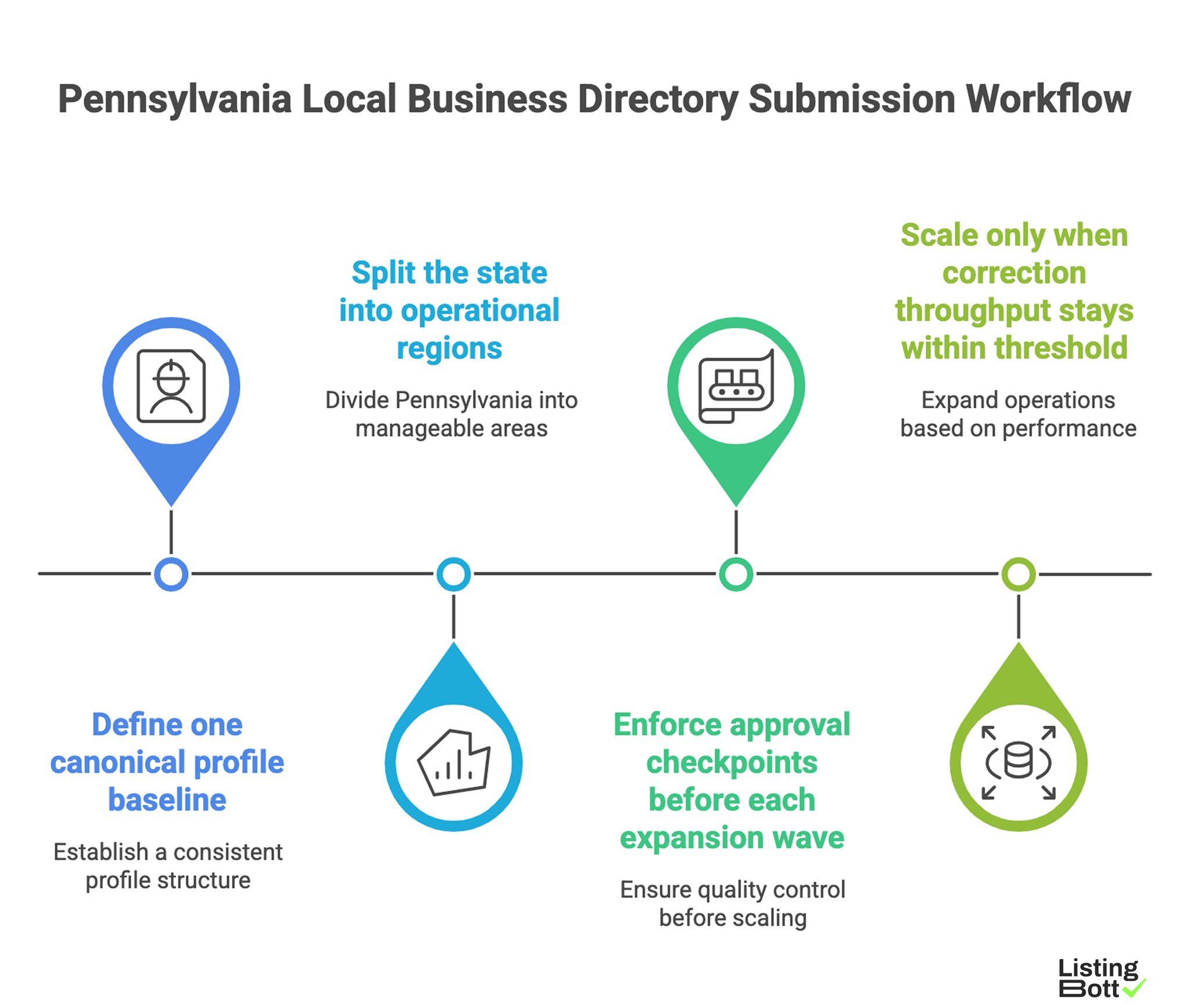

Pennsylvania Local Business Directory Submission Workflow

- define one canonical profile baseline,

- split the state into operational regions,

- enforce approval checkpoints before each expansion wave,

- scale only when correction throughput stays within threshold.

For broader U.S. planning, see Local business directory submission USA.


Methodology

This page uses a Pennsylvania-specific framework focused on regional variance management and approval discipline.

The RAMP model (Regionalization, Approval, Monitoring, Prioritization)

| Factor | Weight | Why it matters in Pennsylvania |

|---|---|---|

| Regionalization quality | 25 | Reduces process mismatch between high-density and distributed markets |

| Approval-gate discipline | 30 | Prevents uncontrolled expansion and scope drift |

| Monitoring reliability | 25 | Maintains visibility into quality and correction performance |

| Prioritization logic | 20 | Keeps limited execution capacity focused on highest-value regions first |

How to run RAMP:

- score each factor from 1-5 at fixed review intervals,

- freeze expansion if Approval or Monitoring drops below 3,

- require region-level pass criteria before launching the next region.

This avoids calendar-driven expansion that outpaces operational control.
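
The RAMP weights and the freeze rule can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed implementation: the function name `ramp_review`, the dictionary keys, and the linear way the weights are combined into a single score are all assumptions, since the text only gives the weights, the 1-5 scale, and the freeze condition.

```python
# Weights come from the RAMP table; they sum to 100.
RAMP_WEIGHTS = {
    "regionalization": 25,
    "approval": 30,
    "monitoring": 25,
    "prioritization": 20,
}
# Freeze rule from the text: Approval or Monitoring below 3 stops expansion.
FREEZE_FACTORS = ("approval", "monitoring")
FREEZE_BELOW = 3


def ramp_review(scores):
    """Return a weighted RAMP score (out of 100) and the freeze decision."""
    for factor in RAMP_WEIGHTS:
        if not 1 <= scores[factor] <= 5:
            raise ValueError(f"{factor} must be scored from 1 to 5")
    # Assumption: weights combine linearly, so a 5 on every factor gives 100.
    weighted = sum(RAMP_WEIGHTS[f] * scores[f] for f in RAMP_WEIGHTS) / 5
    frozen = any(scores[f] < FREEZE_BELOW for f in FREEZE_FACTORS)
    return {"weighted_score": weighted, "freeze_expansion": frozen}


review = ramp_review(
    {"regionalization": 4, "approval": 2, "monitoring": 5, "prioritization": 3}
)
print(review)
```

In this example the overall score looks acceptable, but the Approval score of 2 still freezes expansion. That is the point of the rule: a strong aggregate cannot compensate for weak gate discipline.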

Pennsylvania regional execution map

| Region | Wave | Main objective | Typical risk | Gate to proceed |

|---|---|---|---|---|

| Southeast (Philadelphia corridor) | 1 | establish strongest QA baseline | high volume with inconsistent source inputs | high-priority corrections within SLA |

| Southwest (Pittsburgh corridor) | 1 | replicate baseline with local variance controls | ownership handoff friction | named owner + escalation path active |

| Central PA markets | 2 | extend process with consistent reporting cadence | mixed data standards across teams | integrity pass rate aligned with wave 1 |

| Northeast + Lehigh Valley | 2-3 | expand coverage without backlog growth | delayed correction closure | backlog remains below threshold |

| Long-tail statewide | 3 | controlled breadth expansion | process fatigue and exception creep | two consecutive quality-pass cycles |

Approval-gate ladder

| Gate | When it applies | Required artifacts | If gate fails |

|---|---|---|---|

| Gate 1: Data readiness | Before any submission starts | canonical profile set + source ownership map | stop launch, correct source conflicts |

| Gate 2: Scope approval | Before region wave launch | approved inclusion/exclusion list | hold wave, re-review scope |

| Gate 3: Quality confirmation | After first regional batch | integrity pass-rate review + critical fix status | continue corrections, no expansion |

| Gate 4: Expansion approval | Before opening next region | trend report (quality, backlog, SLA) | freeze expansion until stable |

Teams that skip explicit gate artifacts usually lose traceability when correction volume increases.
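
One way to keep gate artifacts traceable is to record them as data and check them in order. The sketch below copies the gate names, artifacts, and failure actions from the table; the function `first_failing_gate` and the shape of its input are hypothetical and would need adapting to however a team actually stores its artifacts.

```python
# Gate names, required artifacts, and failure actions, taken from the ladder table.
GATES = [
    ("Gate 1: Data readiness",
     {"canonical profile set", "source ownership map"},
     "stop launch, correct source conflicts"),
    ("Gate 2: Scope approval",
     {"approved inclusion/exclusion list"},
     "hold wave, re-review scope"),
    ("Gate 3: Quality confirmation",
     {"integrity pass-rate review", "critical fix status"},
     "continue corrections, no expansion"),
    ("Gate 4: Expansion approval",
     {"trend report (quality, backlog, SLA)"},
     "freeze expansion until stable"),
]


def first_failing_gate(artifacts_on_file):
    """Return (gate, missing artifacts, action) for the first incomplete gate.

    artifacts_on_file maps a gate name to the set of artifacts already filed.
    Returns None when every gate has all of its required artifacts.
    """
    for name, required, on_fail in GATES:
        missing = required - artifacts_on_file.get(name, set())
        if missing:
            return name, sorted(missing), on_fail
    return None


on_file = {
    "Gate 1: Data readiness": {"canonical profile set", "source ownership map"},
}
print(first_failing_gate(on_file))
```

Checking gates in sequence mirrors the ladder: a later gate is never evaluated while an earlier one is still missing artifacts.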

84-day Pennsylvania rollout blueprint

| Phase | Window | Focus | Exit condition |

|---|---|---|---|

| Baseline setup | Days 1-16 | Canonical profile, role assignment, approval policy | baseline approved |

| Wave 1 execution | Days 17-36 | Southeast + Southwest initial submission batches | quality trend stable |

| Stabilization | Days 37-58 | correction backlog reduction and SLA normalization | critical fixes below threshold |

| Wave 2 expansion | Days 59-84 | Central + Northeast rollout under same controls | no quality regression after expansion |

A stabilization window is mandatory in mixed-region states where execution variance emerges after early rollout.
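
For teams that track the rollout in a script or dashboard, the phase windows can be encoded directly. The sketch below uses the day ranges from the blueprint table; the helper name `phase_for_day` is an assumption made for illustration.

```python
# Phase windows from the 84-day blueprint: (name, first day, last day).
PHASES = [
    ("Baseline setup", 1, 16),
    ("Wave 1 execution", 17, 36),
    ("Stabilization", 37, 58),
    ("Wave 2 expansion", 59, 84),
]


def phase_for_day(day):
    """Return the blueprint phase that contains the given rollout day."""
    for name, start, end in PHASES:
        if start <= day <= end:
            return name
    raise ValueError(f"day {day} is outside the 84-day blueprint")


# Length of each phase in days, inclusive of both endpoints.
lengths = {name: end - start + 1 for name, start, end in PHASES}
print(lengths)
print(phase_for_day(40))
```

The calendar window only says which phase a given day falls in. Whether the team actually moves on still depends on the exit condition in the table, not on the date.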

Pre-expansion checklist for Pennsylvania

| Checkpoint | Review question | Pass criteria |

|---|---|---|

| Canonical profile enforcement | Is one source of truth used everywhere? | Yes, no parallel baselines |

| Gate ownership | Is each approval gate mapped to a decision owner? | Yes, named owner per gate |

| SLA policy | Are critical fix timelines explicit and tracked? | Yes, reviewed weekly |

| Region status visibility | Is reporting segmented by region wave? | Yes, region dashboard exists |

| Expansion block rule | Is next wave blocked on measurable thresholds? | Yes, documented and active |

Comparison table

| Execution model | Best for | Strengths | Tradeoffs | Pennsylvania suitability |

|---|---|---|---|---|

| One-size statewide rollout | Small low-complexity pilots | Simple setup | High failure risk in mixed-density markets | Low |

| Region-first manual operations | Small teams with narrow scope | Flexible local handling | Operationally heavy, low scalability | Medium-low |

| Approval-first managed workflow | Teams needing predictable outcomes at scale | Strong governance and execution consistency | Depends on transparent process handoff | Strong |

| Hybrid governance model | Teams with internal QA leadership | Balanced control + scalability | Requires strict role clarity | Often strongest |

Model fit by operational maturity

| Current maturity state | Recommended model | Why |

|---|---|---|

| Low execution bandwidth | Approval-first managed workflow | reduces overhead while preserving control |

| Moderate bandwidth with growth targets | Hybrid governance | keeps quality oversight while scaling |

| High internal process maturity | Hybrid or software-led | enables fine-grained process control |

| Persistent backlog instability | Managed pilot + governance reset | stabilizes before expansion |

KPI set for region-based execution

| KPI | Why it matters | Early warning sign |

|---|---|---|

| Region integrity pass rate | core quality signal per wave | declining pass rate in newer regions |

| Critical issue closure velocity | indicates correction responsiveness | rising age of unresolved high-priority issues |

| Approval lead time | shows governance efficiency | gates delayed by missing artifacts |

| Backlog pressure score | tracks operational debt | sustained backlog growth across reviews |

| Bottom-of-funnel (BOFU) progression actions | ties visibility work to commercial outcomes | strong traffic, weak action progression |

These KPIs reduce the common bias toward counting submissions while ignoring quality cost.
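
A go/no-go check built on three of these KPIs might look like the sketch below. The threshold values (`MIN_PASS_RATE`, `MAX_CRITICAL_AGE_DAYS`), the `RegionReview` fields, and the definition of "sustained backlog growth" as two consecutive increases are all hypothetical; the source does not specify numeric thresholds, so each team would set its own.

```python
from dataclasses import dataclass


@dataclass
class RegionReview:
    """One review-cycle snapshot for a region (field names are illustrative)."""
    integrity_pass_rate: float       # share of listings passing checks, 0.0-1.0
    oldest_critical_issue_days: int  # age of the oldest open high-priority issue
    backlog_open: int                # open correction items at review time


# Hypothetical thresholds; not taken from the source.
MIN_PASS_RATE = 0.95
MAX_CRITICAL_AGE_DAYS = 7


def expansion_blockers(history):
    """Return the reasons expansion should be blocked, oldest review first in history."""
    if not history:
        raise ValueError("at least one review is required")
    latest = history[-1]
    blockers = []
    if latest.integrity_pass_rate < MIN_PASS_RATE:
        blockers.append("integrity pass rate below threshold")
    if latest.oldest_critical_issue_days > MAX_CRITICAL_AGE_DAYS:
        blockers.append("critical issues aging past SLA")
    # Assumption: "sustained" growth means the backlog rose in two consecutive reviews.
    if len(history) >= 3 and (
        history[-3].backlog_open < history[-2].backlog_open < latest.backlog_open
    ):
        blockers.append("sustained backlog growth")
    return blockers


reviews = [
    RegionReview(0.97, 3, 10),
    RegionReview(0.96, 5, 14),
    RegionReview(0.96, 6, 19),
]
print(expansion_blockers(reviews))
```

Here the pass rate and issue age are within the example thresholds, yet the steadily growing backlog alone blocks the next wave.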

Best by use case

1) Single-location business in major metro areas

Best fit: approval-first managed workflow.

Reason: it lowers process overhead while maintaining quality in high-activity environments.

2) Multi-location operator across Pennsylvania regions

Best fit: hybrid governance with region-level expansion gates.

Reason: this supports controlled rollout while preserving correction accountability.

3) SaaS team entering local markets statewide

Best fit: phased regional expansion tied to measurable thresholds.

Reason: scale is safer when readiness gates are satisfied before each wave.

4) Agency managing multiple client profiles

Best fit: repeatable workflow with explicit owner mapping and escalation logic.

Reason: agencies need consistent delivery standards across mixed regions.

5) Compliance-sensitive organizations

Best fit: approval-first model with complete audit trail.

Reason: formal gate artifacts improve traceability and reduce policy drift.

For evaluation benchmarks, compare provider workflows against practical execution criteria, not just listing volume claims. Useful references: best directory listing services and listing management software vs service.

Where ListingBott fits in Pennsylvania execution

What ListingBott does

ListingBott is a tool-based directory submission workflow for teams that need a structured process rather than ad hoc spreadsheet operations.

How ListingBott works

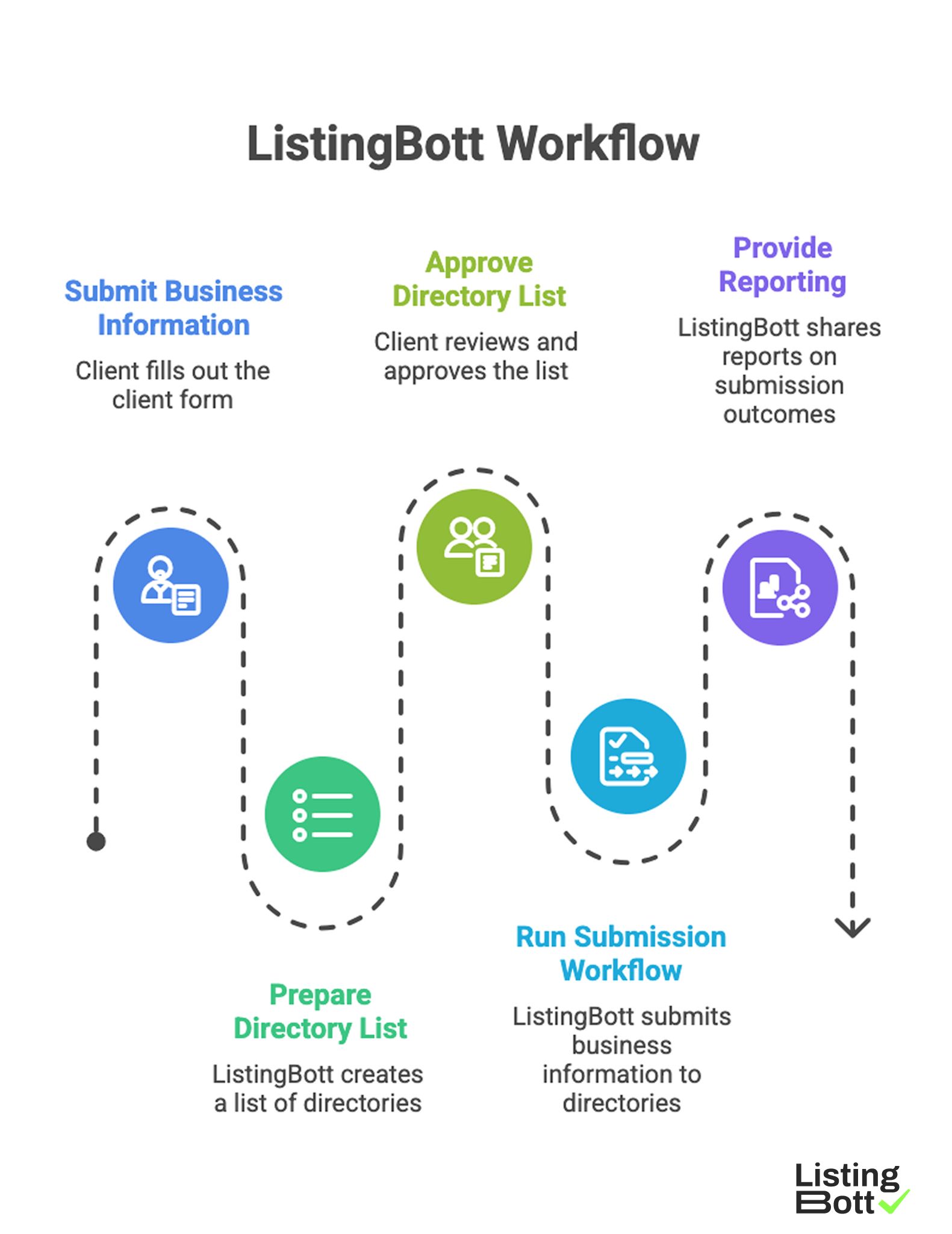

ListingBott Workflow

- You submit business information through the client form.

- ListingBott prepares a list of directories for the selected scope.

- You approve that list before execution starts.

- ListingBott runs the submission workflow according to the approved scope.

- ListingBott provides reporting for completed and pending outcomes.

Key features and what they mean in operations

- Intake controls: reduce preventable profile errors before launch.

- Approval-before-publish: aligns scope and expectations early.

- Workflow visibility: supports coordination across owners.

- Reporting handoff: makes next-wave QA decisions easier.

For teams expanding across multiple regions, workflow transparency is a stronger long-term selection criterion than broad promises tied to raw output.

Expected outcomes and limits

Expected outcomes:

- structured submission execution,

- clearer status tracking,

- repeatable process for additional regions.

Limits to keep explicit:

- no guaranteed ranking position,

- no guaranteed traffic by a specific date,

- no guaranteed indexing speed,

- no guaranteed outcomes controlled by third-party platforms.

The DR commitment is conditional. A promise to reach DR 15 can apply only when the starting DR is below 15, the client selects domain growth as the explicit goal, and the directory list is approved before the process launches. Refunds may apply if the process has not started, and the public offer states that there are no hidden extra fees.
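
The three conditions for the DR commitment can be written as a single check. This is only an illustration of the rule as stated in the paragraph above it; the function name, parameter names, and the `"domain growth"` goal label are assumptions and do not describe any ListingBott API.

```python
DR_TARGET = 15  # the commitment described in the text is to reach DR 15


def dr_commitment_applies(starting_dr, goal, list_approved_before_launch):
    """Return True only when all three stated conditions hold."""
    return (
        starting_dr < DR_TARGET
        and goal == "domain growth"  # hypothetical label for the client's goal
        and list_approved_before_launch
    )


print(dr_commitment_applies(8, "domain growth", True))
print(dr_commitment_applies(22, "domain growth", True))
```

All three conditions must hold together: a site that already starts at or above DR 15, or a launch without an approved directory list, falls outside the commitment.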

Risks/limits

Frequent failure patterns in Pennsylvania rollout

- Launching all regions simultaneously without gate controls.

- Allowing multiple profile baselines during active execution.

- Expanding despite unresolved critical correction backlog.

- Tracking totals only and ignoring region-level quality signals.

- Missing gate-owner accountability during escalation cycles.

Practical limits

- Directory submission supports discoverability and profile consistency, but does not replace broader SEO systems.

- Impact timing varies by competition, category dynamics, and third-party platform behavior.

- Fast expansion without governance creates quality debt that slows future execution.

Risk controls to enforce

- approval-gate artifacts for each wave,

- region-level status reporting cadence,

- SLA-bound correction ownership,

- expansion freeze rules when quality thresholds fail.

FAQ

Why is a region-first model important in Pennsylvania?

Because market density and operational conditions vary by region, and one workflow rhythm rarely fits the whole state.

Should every region launch at once?

Usually no. Start with highest-priority regions, stabilize quality, then expand in waves.

Which metrics should block expansion?

Use region integrity pass rate, critical-fix closure velocity, and backlog pressure score as minimum go/no-go controls.

Can directory submission guarantee ranking improvements?

No. It supports execution quality and discoverability, but rankings and timing depend on external factors.

Is DR growth automatically promised?

No. DR commitments are conditional and apply only to qualified setups.

What is the minimum governance layer needed?

At minimum: canonical data control, gate ownership, correction SLA, and region-level reporting cadence.