Quick answer

Local business directory submission in Colorado should be run with terrain-aware scheduling instead of a single statewide rollout rhythm. Operational load changes by zone, so expansion should follow readiness and correction capacity, not fixed dates.

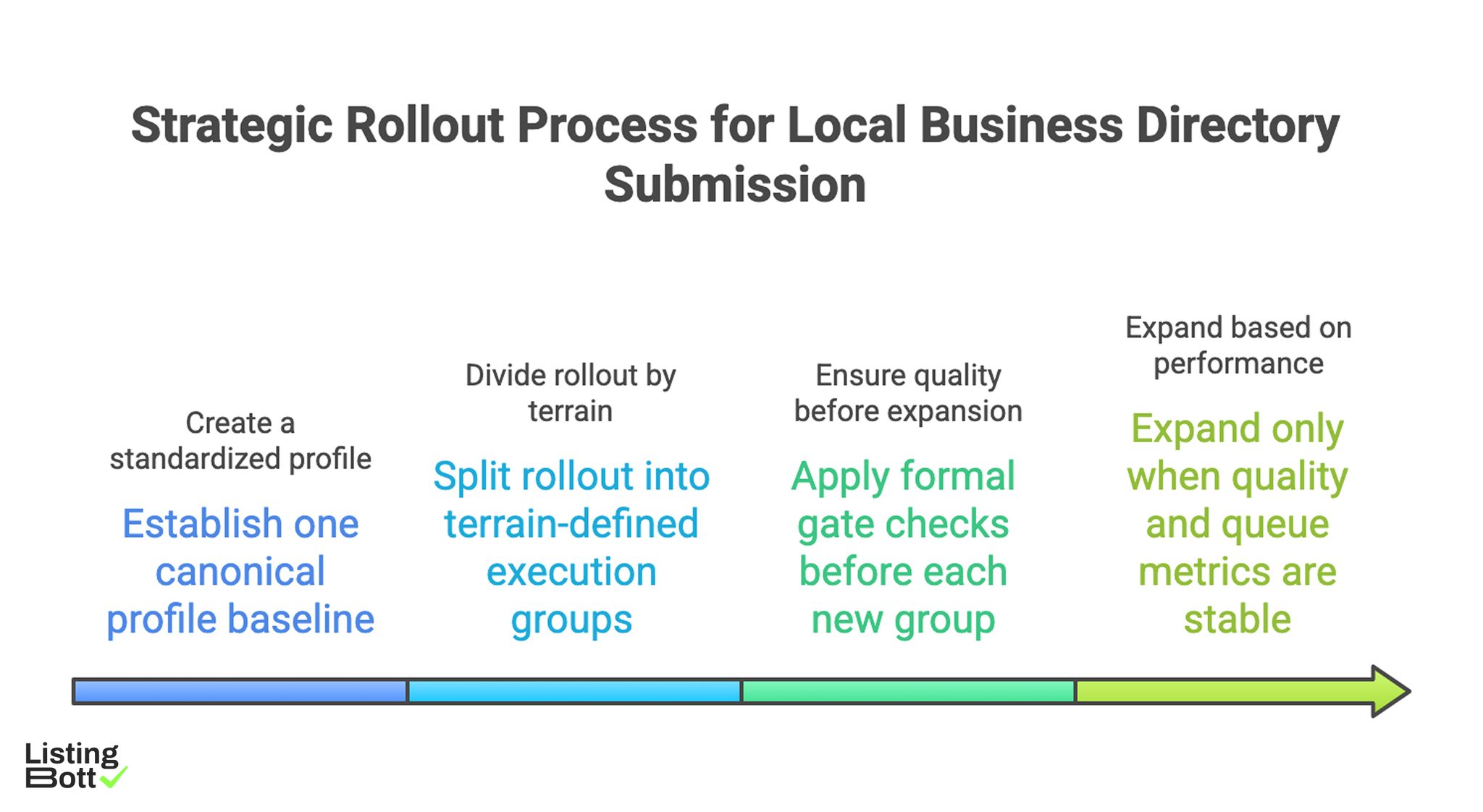

A practical operating flow is:

- establish one canonical profile baseline,

- split rollout into terrain-defined execution groups,

- apply formal gate checks before each new group,

- expand only when quality and queue metrics are stable.

For broader U.S. planning, see Local business directory submission USA.

Methodology

This methodology uses queue discipline and staged rollout governance designed for Colorado's mixed operating conditions.

The TIMBER framework (Timing, Inputs, Monitoring, Backlog, Escalation, Review)

| Framework dimension | Weight | Execution objective |
|---|---|---|
| Timing discipline | 20 | sequence rollout by readiness, not calendar pressure |
| Input control | 20 | enforce single-source profile policy across active groups |
| Monitoring depth | 20 | make quality and issue trends visible per group |
| Backlog management | 20 | stop hidden correction debt from compounding |
| Escalation reliability | 10 | route high-severity exceptions to clear owners |
| Review cadence | 10 | maintain regular decision cycles during growth |

Operating rule:

- score each dimension from 1-5 every two weeks,

- stop expansion if Input control or Backlog management is below 3,

- restart expansion after two stable review cycles with no high-severity drift.
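The operating rule can be sketched in a few lines of Python. This is a minimal illustration rather than part of the framework: the `Review` record, the dictionary keys, and the 0-100 composite in `weighted_score` are assumptions introduced here (the table gives weights but does not say how to combine them). The sketch also assumes that a "stable" cycle means no blocking dimension below 3 and no high-severity drift.

```python
from dataclasses import dataclass

# Weights from the TIMBER table; they sum to 100.
WEIGHTS = {"timing": 20, "inputs": 20, "monitoring": 20,
           "backlog": 20, "escalation": 10, "review": 10}
# Dimensions that stop expansion when scored below 3 (operating rule).
BLOCKING = ("inputs", "backlog")


@dataclass
class Review:
    """One biweekly review: a 1-5 score per dimension plus a drift flag."""
    scores: dict
    high_severity_drift: bool = False


def weighted_score(scores: dict) -> float:
    """Assumed composite, scaled so that all 5s gives 100."""
    if set(scores) != set(WEIGHTS):
        raise ValueError("scores must cover exactly the six TIMBER dimensions")
    if any(not 1 <= s <= 5 for s in scores.values()):
        raise ValueError("each score must be between 1 and 5")
    return sum(WEIGHTS[d] * scores[d] / 5 for d in WEIGHTS)


def is_blocked(review: Review) -> bool:
    """True if Input control or Backlog management is below 3."""
    return any(review.scores[d] < 3 for d in BLOCKING)


def expansion_allowed(reviews: list) -> bool:
    """Apply the stop/restart rule to reviews ordered oldest to newest."""
    if not reviews:
        return False  # no evidence yet, so no expansion
    blocked_at = [i for i, r in enumerate(reviews) if is_blocked(r)]
    if not blocked_at:
        return True
    # After a stop, require two drift-free cycles since the last blocked review.
    tail = reviews[blocked_at[-1] + 1:]
    return len(tail) >= 2 and not any(r.high_severity_drift for r in tail[-2:])
```

Passing the full review history rather than only the latest scores keeps the restart condition explicit: a single good review after a stop is not enough to resume.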

Colorado execution groups

| Group | Wave | Primary purpose | Typical overload signal | Readiness gate |
|---|---|---|---|---|
| Group A | 1 | build baseline under highest operational pressure | correction queue growth after initial launch | critical issue SLA stable |
| Group B | 1-2 | duplicate baseline with controlled handoffs | inconsistent data ownership | owner handoff map validated |
| Group C | 2 | expand coverage while preserving closure velocity | issue age trend rising | closure velocity on target |
| Group D | 3 | broaden footprint with low maintenance risk | silent backlog accumulation | backlog pressure within threshold |

Workstream synchronization map

| Workstream | Required checkpoint | Failure pattern | Mandatory control |
|---|---|---|---|
| Profile governance | canonical source audit before every wave | multiple conflicting profile variants | one-source policy with weekly spot checks |
| Scope governance | inclusion/exclusion sign-off pre-launch | mid-wave scope mutation | signed scope artifact per wave |
| Correction pipeline | SLA and issue aging tracked weekly | critical queue aging during expansion | SLA dashboard + escalation timer |
| Reporting pipeline | zone and group-level KPI updates | delayed visibility, late decisions | fixed reporting calendar |
| Expansion governance | threshold-based go/no-go | date-driven launches without readiness | gate decision card per wave |

Queue discipline model

| Queue type | Entry trigger | Priority rule | Exit requirement |
|---|---|---|---|
| Q1: routine fixes | minor formatting/metadata mismatch | process in batch cycles | corrected in standard review window |
| Q2: active-risk fixes | repeated mismatch in active group | prioritize over new submissions | closure within weekly SLA |
| Q3: expansion blockers | cross-group consistency breakdown | halt expansion and triage first | root-cause resolved before new wave |

A queue model keeps operational debt measurable and prevents expansion from hiding unresolved risk.
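As an illustration, the entry triggers and priority rules in the table could be encoded as below. This is a sketch under stated assumptions: the function names, the two boolean trigger flags, and the action strings are hypothetical, and real triage would likely need more signal than two flags.

```python
from enum import Enum


class Queue(Enum):
    Q1 = "routine fixes"
    Q2 = "active-risk fixes"
    Q3 = "expansion blockers"


def classify(cross_group_breakdown: bool, repeated_in_active_group: bool) -> Queue:
    """Map the entry triggers from the table to a queue, most severe first."""
    if cross_group_breakdown:
        return Queue.Q3
    if repeated_in_active_group:
        return Queue.Q2
    return Queue.Q1  # minor formatting/metadata mismatch


def next_action(open_issues: list) -> str:
    """Apply the priority rules to the currently open issues."""
    if Queue.Q3 in open_issues:
        return "halt expansion and triage"
    if Queue.Q2 in open_issues:
        return "fix before new submissions"
    if Queue.Q1 in open_issues:
        return "process in next batch cycle"
    return "continue submissions"
```

Checking the most severe queue first means a single open Q3 issue overrides any amount of routine work, which matches the "halt expansion and triage first" rule.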

Decision gate stack

| Gate | Activation point | Required evidence | Blocker state |
|---|---|---|---|
| Gate 1: source lock | before first live execution | canonical profile policy + owner matrix | conflicting source policies |
| Gate 2: scope lock | before each group wave | approved wave scope with exclusions | scope changed without approval |
| Gate 3: quality lock | after first batch in each group | integrity report + queue status | Q3 volume above threshold |
| Gate 4: capacity lock | before next expansion | closure velocity trend + backlog score | deteriorating capacity trend |
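One way to make the gate stack checkable is to treat each gate as a list of required evidence items and report the first gate that is not satisfied. The sketch below is a simplification: the evidence keys are hypothetical names for the items in the table, and it evaluates all four gates in order even though the table gives each gate its own activation point.

```python
from typing import Optional

# Gate names follow the table; the evidence keys are hypothetical labels.
GATES = [
    ("Gate 1: source lock", ("canonical_profile_policy", "owner_matrix")),
    ("Gate 2: scope lock", ("approved_wave_scope",)),
    ("Gate 3: quality lock", ("integrity_report", "queue_status")),
    ("Gate 4: capacity lock", ("closure_velocity_trend", "backlog_score")),
]


def first_blocked_gate(evidence: dict) -> Optional[str]:
    """Return the first gate with missing or failed evidence, or None if all pass."""
    for name, required in GATES:
        # Evidence that was never recorded counts as a blocker.
        if not all(evidence.get(key, False) for key in required):
            return name
    return None
```

Treating absent evidence as a failure, rather than as a pass, keeps a wave from launching simply because nobody filled in the gate record.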

90-day operating timeline

| Phase | Days | Core work | Exit criteria |
|---|---|---|---|
| Foundation | 1-14 | source lock, owner mapping, queue definitions | governance artifacts approved |
| Baseline launch | 15-34 | execute group A with full quality instrumentation | stable integrity + SLA adherence |
| Stabilization | 35-58 | reduce queue age and reopen rate | queue pressure normalized |
| Controlled expansion | 59-90 | launch groups B-D through gate stack | no KPI regression after each wave |

Pre-expansion readiness table

| Readiness checkpoint | Verification method | Pass rule |
|---|---|---|
| Source consistency | random audit of active groups | zero conflicting baseline edits |
| Ownership completeness | gate and escalation owners listed | all roles assigned |
| Queue health | Q2/Q3 aging trend review | no sustained upward trend |
| Reporting freshness | weekly dashboard completeness | no stale KPI views |
| Artifact completeness | gate records for active wave | all required artifacts attached |

Comparison table

| Operating style | Best for | Benefit | Tradeoff | Colorado applicability |
|---|---|---|---|---|
| Single-rhythm statewide launch | short pilot only | fast start | poor resilience across variable groups | Low |
| Manual group management | very small teams | flexible local handling | high coordination overhead | Medium-low |
| Managed staged execution | teams needing speed and process clarity | predictable rollout with lower internal load | depends on provider transparency | Strong |
| Hybrid governance plus execution | teams with internal QA leadership | strongest balance of scale and control | requires strict accountability design | Very strong |

Selection guidance by team maturity

| Maturity indicator | Preferred model | Why |
|---|---|---|
| Limited operations bandwidth | Managed staged execution | preserves control without heavy internal burden |
| Moderate maturity with growth targets | Hybrid governance | supports expansion with explicit oversight |
| High maturity and strong SOP | Hybrid or software-led | allows deeper internal optimization |
| Ongoing queue instability | Managed pilot + reset | restores stable baseline before scaling |

Weekly KPI dashboard

| KPI | Operational meaning | Expansion hold trigger |
|---|---|---|
| Group integrity pass rate | quality stability by rollout group | sustained decline in active group |
| Critical closure velocity | correction response performance | SLA breaches on high-severity issues |
| Queue reopen rate | correction durability | two-cycle upward trend |
| Backlog pressure score | debt accumulation trend | persistent increase across reviews |
| BOFU (bottom-of-funnel) progression actions | commercial relevance of execution | informational activity with weak progression |
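Two of these hold triggers are concrete enough to sketch in code. The example below is illustrative only: it assumes a "two-cycle upward trend" means two consecutive review-to-review increases, and the function and parameter names are hypothetical.

```python
def rising_for(values: list, cycles: int = 2) -> bool:
    """True if each of the last `cycles` review-to-review steps increased."""
    if cycles < 1:
        raise ValueError("cycles must be at least 1")
    if len(values) < cycles + 1:
        return False  # not enough history to call a trend
    tail = values[-(cycles + 1):]
    return all(later > earlier for earlier, later in zip(tail, tail[1:]))


def hold_expansion(reopen_rate: list, critical_sla_breaches: int) -> bool:
    """Hold on a two-cycle reopen-rate rise or any high-severity SLA breach."""
    return critical_sla_breaches > 0 or rising_for(reopen_rate, cycles=2)
```

A flat or falling reading in either of the last two cycles resets the trend, so a single noisy week does not trigger a hold on its own.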

Best by use case

1) Single-location program

Best fit: managed staged execution with clear queue reporting.

Reason: quality controls stay visible without heavy operational complexity.

2) Multi-location operator

Best fit: hybrid governance with group-based decision gates.

Reason: expansion stays measurable and owner accountability remains explicit.

3) SaaS team entering local discovery workflows

Best fit: phased launch controlled by readiness scores.

Reason: threshold-led expansion lowers queue debt risk.

4) Agency operations with multiple clients

Best fit: standardized queue taxonomy and escalation protocol.

Reason: repeatable workflows reduce delivery variance and rework.

5) Teams with strict governance requirements

Best fit: approval-first operating system with documented artifacts.

Reason: auditable decisions improve reliability under scaling pressure.

For benchmark references, compare workflow clarity and governance depth in the guides on best directory listing services and listing management software vs service.

Where ListingBott fits in Colorado execution

What ListingBott does

ListingBott is a workflow-based directory submission tool for teams that need structured execution, approval checkpoints, and transparent status reporting.

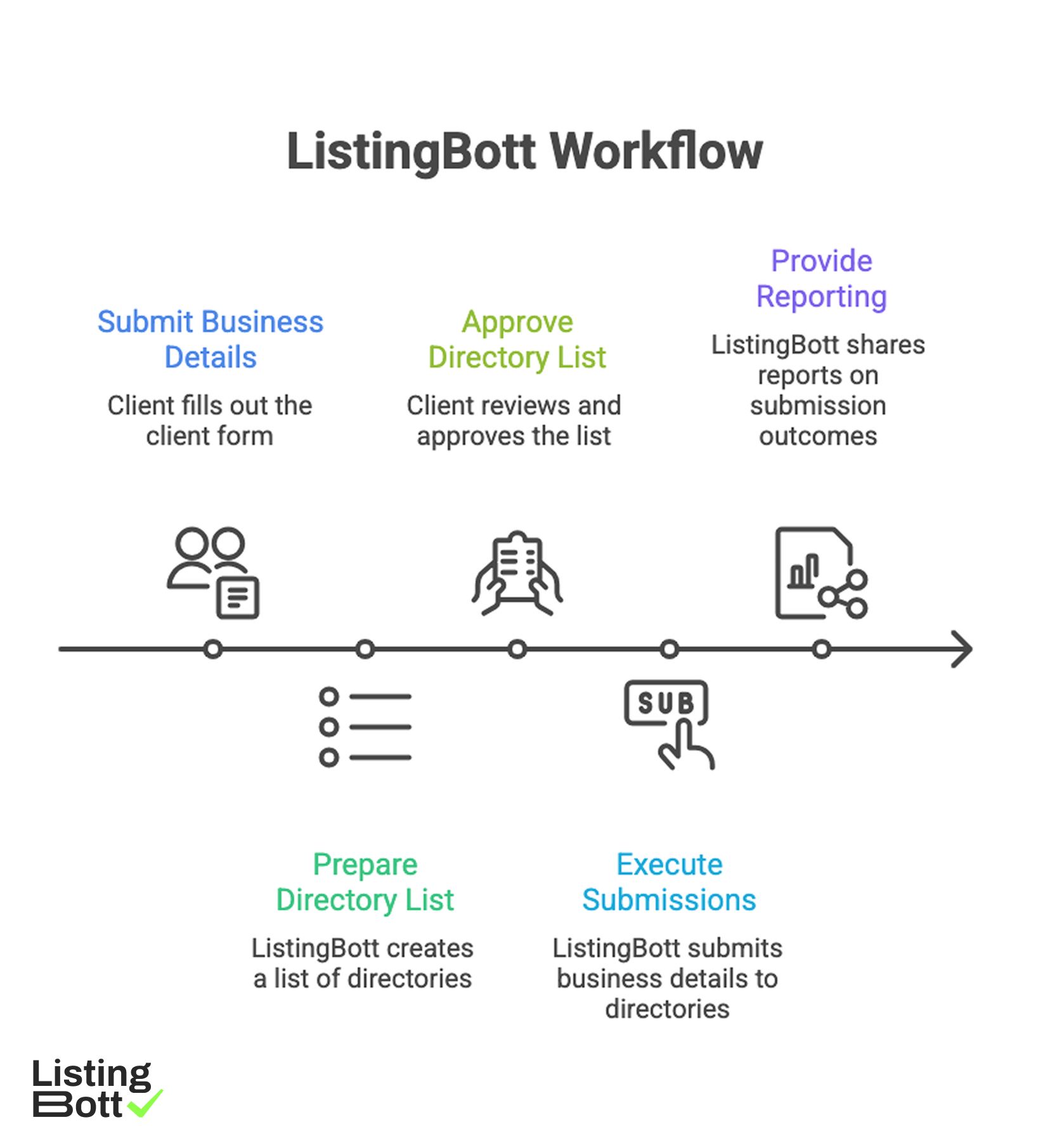

How ListingBott works

- You submit business details through the client form.

- ListingBott prepares a list of directories for your selected scope.

- You approve the list before launch.

- ListingBott executes submissions based on approved scope.

- ListingBott provides reporting for completed and pending outcomes.

Key features and practical value

- Intake validation: reduces avoidable data-quality errors before execution.

- Approval checkpoint: aligns scope and expectations before rollout.

- Workflow transparency: supports owner coordination and escalation.

- Reporting handoff: enables go/no-go decisions for next waves.

Teams that prioritize workflow reliability typically maintain better long-term execution quality than teams focused only on volume output.

Expected outcomes and limits

Expected outcomes:

- structured submission execution,

- clear visibility across active waves,

- repeatable rollout process for additional groups.

Limits to keep explicit:

- no guaranteed ranking position,

- no guaranteed traffic by a specific date,

- no guaranteed indexing speed,

- no guaranteed outcomes controlled by third-party platforms.

The DR (Domain Rating) commitment is conditional. A promise to reach DR 15 can apply when the starting DR is below 15, the client explicitly selects domain growth, and the directory list is approved before the process launches. Refunds may apply if the process has not started, and the public offer states that there are no hidden extra fees.
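The three conditions on the DR commitment amount to a single boolean check, sketched below. This is not ListingBott code or an API: the function and parameter names are hypothetical and only restate the conditions in the paragraph above.

```python
DR_TARGET = 15  # target named in the offer text above


def dr_commitment_applies(starting_dr: float,
                          selected_domain_growth: bool,
                          list_approved_before_launch: bool) -> bool:
    """True only when all three stated conditions hold."""
    return (starting_dr < DR_TARGET
            and selected_domain_growth
            and list_approved_before_launch)
```

For example, a site starting at DR 8 that selected domain growth but launched without an approved list would not qualify.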

Risks/limits

Common failure patterns

- Launching new groups without completed gate evidence.

- Allowing multiple profile baselines in active waves.

- Expanding while Q3 queue issues are unresolved.

- Tracking output volume but ignoring queue age and reopen trends.

- Running escalation without clear owner accountability.

Practical limits

- Directory submission supports discoverability and consistency, but it does not replace broader SEO systems.

- Timing and impact vary by category, competition, and third-party platform behavior.

- Expansion without queue discipline can create compounding correction debt.

Minimum control layer

- group-based gate approvals,

- SLA-bound correction ownership,

- weekly KPI and queue review,

- required artifacts for every expansion wave.

FAQ

Why use a terrain and queue model in Colorado?

Because rollout quality depends on matching execution pace to group conditions and correction capacity.

Should all groups launch simultaneously?

Usually no. Launch in waves, stabilize queue health, then expand.

Which KPI should block expansion first?

Use critical closure velocity together with group integrity pass rate.

Can directory submission guarantee rankings?

No. It supports consistency and discoverability, but rankings depend on external factors.

Is DR growth guaranteed for every project?

No. DR commitments are conditional and apply only to qualified setups.

What is the minimum governance stack?

Canonical data control, gate ownership, correction SLA, and recurring group-level KPI review.