Quick answer

Local business directory submission in Canada should run as a country-hub program with explicit launch charters and quality gates. The most expensive failure mode is not low submission volume; it is uncontrolled variance between rollout waves that creates rework and slows future expansion.

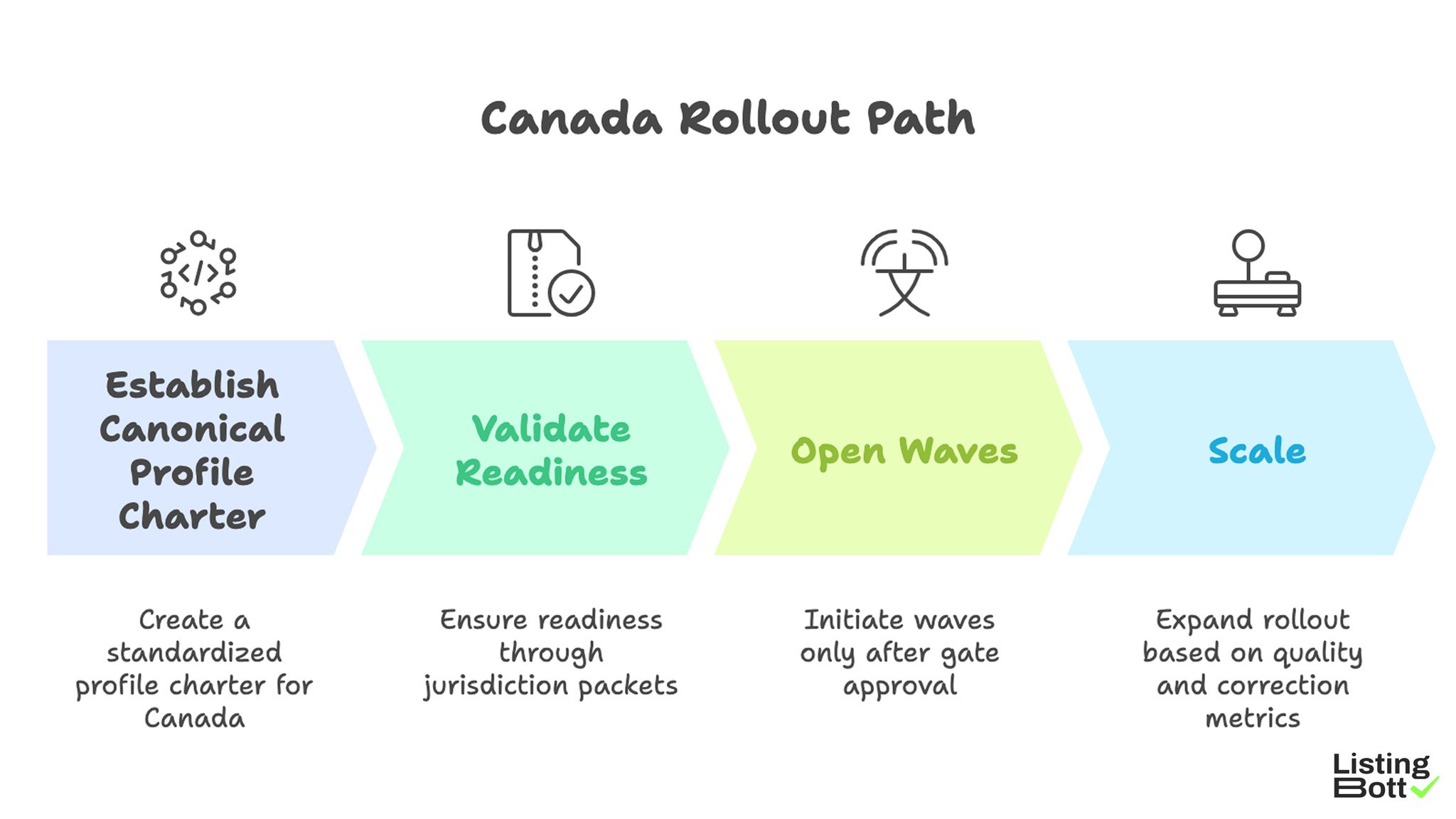

Canada Rollout Path

A practical Canada rollout path:

- establish one canonical profile charter,

- validate readiness through jurisdiction packets,

- open waves only after gate approval,

- scale when quality and correction ledgers stay within limits.

For broader U.S. planning, see Local business directory submission USA.


Methodology

This page uses a charter-and-ledger operating model built to keep country-hub expansion stable under changing regional demand.

The NORTH framework (Normalization, Ownership, Readiness, Throughput, Health)

| Dimension | Weight | Operating purpose |

|---|---|---|

| Normalization | 25 | keep one consistent profile standard across all active waves |

| Ownership | 20 | assign accountable decision-makers for launch, correction, and escalation |

| Readiness | 20 | prevent launches without validated prerequisites |

| Throughput | 20 | align execution pace with correction capacity |

| Health | 15 | monitor early warning signals before debt compounds |

NORTH decision rule:

- score each dimension 1-5 biweekly,

- expansion blocked if Readiness or Throughput drops below 3,

- expansion resumes after two healthy cycles.
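The decision rule can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed implementation: the weighted-average aggregation, the function names, and the choice to treat an empty score history as "not allowed" are all assumptions. The source states only the weights, the 1-5 scale, the below-3 block on Readiness or Throughput, and the two-healthy-cycle resume rule.

```python
# Weights from the NORTH table (they sum to 100).
NORTH_WEIGHTS = {
    "normalization": 25,
    "ownership": 20,
    "readiness": 20,
    "throughput": 20,
    "health": 15,
}
GATING_DIMENSIONS = ("readiness", "throughput")
MIN_GATING_SCORE = 3
HEALTHY_CYCLES_TO_RESUME = 2


def weighted_north_score(scores: dict[str, int]) -> float:
    """Weighted average on the 1-5 scale (assumed aggregation method)."""
    total = sum(NORTH_WEIGHTS[dim] * scores[dim] for dim in NORTH_WEIGHTS)
    return total / sum(NORTH_WEIGHTS.values())


def is_healthy(scores: dict[str, int]) -> bool:
    """A cycle is healthy when neither gating dimension is below 3."""
    return all(scores[dim] >= MIN_GATING_SCORE for dim in GATING_DIMENSIONS)


def expansion_allowed(history: list[dict[str, int]]) -> bool:
    """history holds biweekly score cards, oldest first."""
    if not history:
        return False  # assumption: no scores means no expansion
    unhealthy = [i for i, card in enumerate(history) if not is_healthy(card)]
    if not unhealthy:
        return True
    healthy_cycles_since_block = len(history) - 1 - unhealthy[-1]
    return healthy_cycles_since_block >= HEALTHY_CYCLES_TO_RESUME
```

For example, a history of one unhealthy cycle followed by a single healthy cycle still returns `False`; a second healthy cycle flips it to `True`.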

Country-hub architecture

| Hub component | Function | Primary control signal | Typical breakdown |

|---|---|---|---|

| Policy charter | defines data, scope, and quality rules | charter compliance rate | conflicting profile standards |

| Launch office | approves rollout packets by wave | packet approval velocity | delayed launches from missing evidence |

| Correction office | runs issue queues and SLA controls | high-severity closure speed | unresolved blockers during expansion |

| Performance office | tracks KPI ledger and board decisions | dashboard freshness | late decisions based on stale data |

Jurisdiction packet checklist

| Packet block | Must include | Gate outcome if missing |

|---|---|---|

| Data block | required fields and canonical profile source | wave hold |

| Scope block | approved inclusion/exclusion list | wave hold |

| Owner block | launch owner + escalation owner | wave hold |

| SLA block | correction targets and review cadence | conditional hold |

| Reporting block | KPI dashboard with update timestamps | conditional hold |

This packet structure lowers ambiguity before execution begins.
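As a rough sketch, the checklist maps to a small gate function. The block keys and the "ready for gate review" label are hypothetical names chosen for illustration; the hold outcomes come from the table above.

```python
# Blocks whose absence stops the wave outright, per the checklist table.
HARD_BLOCKS = ("data", "scope", "owner")
# Blocks whose absence yields a conditional hold.
SOFT_BLOCKS = ("sla", "reporting")


def packet_gate_outcome(packet: dict[str, bool]) -> tuple[str, list[str]]:
    """Return the gate outcome and the list of missing blocks.

    packet maps a block name to True when that block is complete.
    Any block not present in the dict is treated as missing.
    """
    missing_hard = [b for b in HARD_BLOCKS if not packet.get(b, False)]
    missing_soft = [b for b in SOFT_BLOCKS if not packet.get(b, False)]
    if missing_hard:
        return "wave hold", missing_hard + missing_soft
    if missing_soft:
        return "conditional hold", missing_soft
    return "ready for gate review", []
```

Returning the missing blocks alongside the outcome gives the launch owner a concrete fix list instead of a bare pass/fail.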

Wave classification ledger

| Wave class | Objective | Risk signature | Mandatory control |

|---|---|---|---|

| Class 1 | baseline validation | high early rejection probability | daily acceptance review |

| Class 2 | controlled scale | owner handoff gaps | owner map lock |

| Class 3 | distributed expansion | queue aging drift | weekly queue reconciliation |

| Class 4 | efficiency optimization | hidden reopen growth | reopen audit and rollback trigger |

Decision tree for expansion

| Decision node | Question | If yes | If no |

|---|---|---|---|

| Node A | Is packet complete and approved? | go to node B | block launch |

| Node B | Are high-severity issues inside SLA? | go to node C | run correction sprint |

| Node C | Is reopen trend stable over two cycles? | go to node D | hold expansion |

| Node D | Are dashboards current for all active waves? | approve expansion | delay decision until refresh |

A structured decision tree prevents ad hoc go/no-go calls.
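The four nodes translate directly into an ordered series of checks. The sketch below assumes each question has already been answered as a boolean; the `WaveStatus` class and its field names are illustrative, not part of the original model.

```python
from dataclasses import dataclass


@dataclass
class WaveStatus:
    packet_approved: bool            # Node A
    high_severity_within_sla: bool   # Node B
    reopen_stable_two_cycles: bool   # Node C
    dashboards_current: bool         # Node D


def expansion_decision(status: WaveStatus) -> str:
    """Walk nodes A-D in order and return the first 'if no' outcome."""
    if not status.packet_approved:
        return "block launch"
    if not status.high_severity_within_sla:
        return "run correction sprint"
    if not status.reopen_stable_two_cycles:
        return "hold expansion"
    if not status.dashboards_current:
        return "delay decision until refresh"
    return "approve expansion"
```

Because the checks run in a fixed order, an incomplete packet always blocks the launch before later nodes are considered.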

Queue and correction ledger

| Queue | Entry pattern | Ownership | Exit criteria |

|---|---|---|---|

| Queue L1 | low-impact metadata issues | correction coordinator | close in normal cycle |

| Queue L2 | repeated mismatch in active wave | wave owner | close within weekly SLA |

| Queue L3 | systemic cross-wave quality conflict | escalation lead | clear before next launch vote |

Ledger-based queue management makes debt trends visible earlier.

Governance calendar

| Meeting | Frequency | Inputs | Decision output |

|---|---|---|---|

| Acceptance review | weekly | funnel pass/fail breakdown | tighten or relax entry criteria |

| Queue review | weekly | L2/L3 age and closure metrics | continue, freeze, or rollback scope |

| Launch council | biweekly | NORTH score + packet evidence | approve next wave or hold |

| Program review | monthly | quality-cost trend + bottom-of-funnel (BOFU) progression | reprioritize capacity and roadmap |

92-day implementation map

| Phase | Days | Focus | Exit criteria |

|---|---|---|---|

| Charter setup | 1-18 | finalize policy charter, packet standard, owner map | governance assets approved |

| Baseline wave | 19-40 | execute class 1 with strict queue tracking | SLA and integrity trend stable |

| Stabilization cycle | 41-62 | reduce L2/L3 carryover and reopen growth | corrective debt normalized |

| Controlled scale | 63-92 | open class 2-4 waves through launch council | no KPI regression post-launch |
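For tracking purposes, the day ranges can be looked up programmatically. This is a small sketch; the helper names are hypothetical, and counting the program start date as day 1 is an assumption.

```python
from datetime import date

# (phase name, first day, last day) from the implementation map.
PHASES = [
    ("Charter setup", 1, 18),
    ("Baseline wave", 19, 40),
    ("Stabilization cycle", 41, 62),
    ("Controlled scale", 63, 92),
]


def phase_for_day(day: int) -> str:
    """Return the phase that contains the given program day (1-92)."""
    for name, first, last in PHASES:
        if first <= day <= last:
            return name
    raise ValueError(f"day {day} is outside the 92-day map")


def phase_for_date(program_start: date, today: date) -> str:
    """Assumes the program start date counts as day 1."""
    return phase_for_day((today - program_start).days + 1)
```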

Pre-launch compliance checklist

| Compliance item | Validation method | Pass threshold |

|---|---|---|

| Charter adherence | sample audit on active records | 100% baseline compliance |

| Ownership completeness | role matrix verification | no missing owners |

| SLA readiness | closure trend audit | two-cycle stability |

| Dashboard currency | timestamp and completeness check | no stale KPI panels |

| Evidence completeness | packet artifact review | all mandatory documents attached |

Audit sampling protocol

| Audit stream | Sample rule | Frequency | Failure threshold | Required action |

|---|---|---|---|---|

| Baseline field audit | minimum 30 records per active class | weekly | >5% mismatch rate | pause class expansion |

| Ownership audit | 100% active owner assignments | weekly | any missing escalation owner | block next gate decision |

| Queue audit | all L3 plus random L2 cases | weekly | unresolved blocker beyond SLA | trigger launch-council escalation |

| Dashboard audit | all mandatory KPI cards | weekly | stale card older than 7 days | reporting lock before next launch |

| Artifact audit | all gate packets in current month | biweekly | missing required document | invalidate packet approval |

Sampling audits reduce false confidence from aggregate-level reporting.
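Two of the audit streams have numeric thresholds that are easy to check in code. The sketch below covers the baseline field audit (minimum 30 records, more than 5% mismatch fails) and the dashboard audit (cards older than 7 days are stale). The function names and the "insufficient sample" outcome are assumptions added for illustration.

```python
from datetime import datetime, timedelta

MIN_SAMPLE = 30                   # minimum records per active class
MAX_MISMATCH_RATE = 0.05          # failure threshold: more than 5%
MAX_CARD_AGE = timedelta(days=7)  # failure threshold: older than 7 days


def baseline_field_audit(sampled: int, mismatched: int) -> str:
    """Apply the baseline field audit rule to one active class."""
    if sampled < MIN_SAMPLE:
        return "insufficient sample"  # assumed outcome, not in the table
    if mismatched / sampled > MAX_MISMATCH_RATE:
        return "pause class expansion"
    return "pass"


def stale_cards(last_updated: dict[str, datetime], now: datetime) -> list[str]:
    """Return the names of KPI cards whose last update exceeds 7 days."""
    return sorted(
        name for name, ts in last_updated.items() if now - ts > MAX_CARD_AGE
    )
```

A non-empty result from `stale_cards` would correspond to the "reporting lock before next launch" action in the table.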

Simulation test catalog

| Test case | Trigger condition | What is stress-tested | Pass condition | Mitigation if fail |

|---|---|---|---|---|

| Intake spike stress | acceptance queue grows >40% week-over-week | funnel resilience and triage speed | queue age remains within target | temporary intake cap + triage reassignment |

| Reopen surge stress | reopen ratio rises two cycles in a row | correction quality and classification logic | reopen trend normalizes next cycle | mandatory root-cause sprint |

| Ownership transition stress | gate owner changes during active class | continuity of approvals and escalation | no delay in gate timeline | freeze class until handoff evidence exists |

| Parallel-wave stress | two classes active simultaneously | dashboard completeness and queue isolation | no KPI blind spots | revert to sequential launch mode |

| Artifact drift stress | approvals attempted with partial evidence | governance discipline under load | zero incomplete approvals | reset approval stage for affected class |

Simulation tests help validate controls before high-risk expansion windows.
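The first two trigger conditions are numeric and can be detected automatically. The following is a minimal sketch under stated assumptions: the function names are hypothetical, "rises two cycles in a row" is read as two consecutive strict increases, and a queue that grows from zero is treated as a spike.

```python
def intake_spike_triggered(
    previous_queue: int, current_queue: int, threshold: float = 0.40
) -> bool:
    """True when the acceptance queue grew more than 40% week-over-week."""
    if previous_queue <= 0:
        return current_queue > 0  # assumption: growth from zero is a spike
    growth = (current_queue - previous_queue) / previous_queue
    return growth > threshold


def reopen_surge_triggered(reopen_ratios: list[float]) -> bool:
    """True when the reopen ratio rose in each of the last two cycles.

    reopen_ratios is ordered oldest first; three data points are needed
    to observe two consecutive increases.
    """
    if len(reopen_ratios) < 3:
        return False
    first, second, third = reopen_ratios[-3:]
    return first < second < third
```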

KPI formula card

| KPI | Formula | Operational meaning | Review owner |

|---|---|---|---|

| Acceptance rate | accepted records / evaluated records | measures intake quality and criteria fit | acceptance board owner |

| Integrity rate | records passing audit / records sampled | measures consistency in live execution | quality board owner |

| Critical closure velocity | high-severity tickets closed / week | tracks correction throughput | correction lead |

| Reopen ratio | reopened tickets / closed tickets | indicates fix durability | QA lead |

| Queue pressure index | weighted age of L2+L3 queues | shows debt accumulation trend | operations owner |

| Decision latency | average hours from issue detection to gate action | measures governance speed | launch council owner |

Explicit KPI formulas prevent metric drift between reporting cycles.
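The formulas above can be pinned down in code so that every report computes them the same way. This sketch makes several assumptions that the source does not specify: ratios return `None` when the denominator is zero, queue ages are measured in days, and the queue pressure index weights L3 items three times as heavily as L2 items.

```python
from datetime import datetime
from typing import Optional


def _ratio(numerator: int, denominator: int) -> Optional[float]:
    """Shared ratio helper; None signals an undefined ratio."""
    return numerator / denominator if denominator else None


def acceptance_rate(accepted: int, evaluated: int) -> Optional[float]:
    return _ratio(accepted, evaluated)


def integrity_rate(passing_audit: int, sampled: int) -> Optional[float]:
    return _ratio(passing_audit, sampled)


def reopen_ratio(reopened: int, closed: int) -> Optional[float]:
    return _ratio(reopened, closed)


def queue_pressure_index(
    l2_ages_days: list[float],
    l3_ages_days: list[float],
    l2_weight: float = 1.0,  # assumed weight
    l3_weight: float = 3.0,  # assumed weight
) -> float:
    """Weighted sum of open-item ages across the L2 and L3 queues."""
    return l2_weight * sum(l2_ages_days) + l3_weight * sum(l3_ages_days)


def decision_latency_hours(
    events: list[tuple[datetime, datetime]]
) -> Optional[float]:
    """Average hours between issue detection and gate action."""
    if not events:
        return None
    hours = [(acted - detected).total_seconds() / 3600 for detected, acted in events]
    return sum(hours) / len(hours)
```

Whatever weights a team settles on, fixing them in one place keeps the index comparable from one reporting cycle to the next.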

Evidence retention and rollback controls

| Control | Evidence stored | Retention window | Rollback trigger |

|---|---|---|---|

| Gate decision log | decision, owner, timestamp, rationale | 12 months | conflicting approvals for same class |

| Scope-change log | before/after scope with approver identity | 12 months | unauthorized scope modification |

| Escalation log | issue, action, closure proof | 12 months | unresolved blocker carried to next class |

| Dashboard snapshot log | weekly KPI exports by class | 6 months | no historical baseline for active class |

| SLA exception register | breach details and mitigation actions | 12 months | repeated breach pattern across two cycles |

Rollback procedure:

- Freeze expansion when L3 queue threshold is breached.

- Revert to last approved class scope.

- Revalidate gate packet with updated evidence.

- Resume only after launch council approves the corrected packet.

Rollback controls prevent compounding debt from unresolved expansion mistakes.
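The rollback procedure is a strictly ordered sequence, which the sketch below models as a step list. The L3 threshold value is left as a parameter because the source does not give a number, and the function name and step labels are illustrative assumptions.

```python
# Ordered steps from the rollback procedure.
ROLLBACK_STEPS = [
    "freeze expansion",
    "revert to last approved class scope",
    "revalidate gate packet",
    "obtain launch council approval",
]


def next_action(l3_open: int, l3_threshold: int, completed: set[str]) -> str:
    """Return the next action for a class.

    l3_threshold is a team-defined limit (hypothetical parameter).
    completed holds the rollback steps already finished; once a rollback
    has started, every step must be completed before expansion resumes.
    """
    if l3_open <= l3_threshold and not completed:
        return "continue expansion"
    for step in ROLLBACK_STEPS:
        if step not in completed:
            return step
    return "resume expansion"
```

Requiring every step before resumption means a queue that briefly dips back under the threshold cannot short-circuit the revalidation and approval steps.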

Comparison table

| Operating model | Best fit | Advantage | Tradeoff | Canada hub fit |

|---|---|---|---|---|

| Single-pass rollout | tiny tests only | fast startup | weak variance control | Low |

| Manual split execution | narrow-scope teams | localized flexibility | low repeatability and heavy overhead | Medium-low |

| Managed country-hub operations | teams needing structured speed | predictable flow with lower internal load | requires transparent workflow | Strong |

| Hybrid governance operations | teams with internal QA leadership | strongest control at scale | requires disciplined ownership | Very strong |

Model selection by maturity

| Operating maturity signal | Recommended model | Why |

|---|---|---|

| Limited internal ops resources | Managed country-hub operations | preserves controls without heavy internal load |

| Moderate maturity and growth targets | Hybrid governance operations | balances speed and control depth |

| High maturity with strong SOP | Hybrid or software-led | supports deeper customization |

| Recurring queue instability | Managed pilot + reset | restores baseline before scale |

KPI ledger for weekly decisions

| KPI | Why it matters | Expansion hold trigger |

|---|---|---|

| Funnel acceptance rate | intake quality and readiness | sustained decline in active class |

| Wave integrity score | execution consistency | repeated class-level drop |

| High-severity closure velocity | correction responsiveness | SLA breaches on critical queue |

| Reopen incidence | correction durability | two-cycle increase trend |

| BOFU progression actions | commercial linkage | informational traffic with weak progression |

Best by use case

1) Single-location launch

Best fit: managed country-hub operations with clear acceptance criteria.

Reason: provides operational clarity without heavy overhead.

2) Multi-location rollout

Best fit: hybrid governance with launch council gate approvals.

Reason: scale remains controlled while accountability remains explicit.

3) Product-led SaaS entering local markets

Best fit: phased class-based expansion tied to readiness packets.

Reason: packet-led gating reduces avoidable correction debt.

4) Agency handling multiple client portfolios

Best fit: standardized packet checks plus queue-led escalation.

Reason: repeatable governance reduces client-to-client variance.

5) Compliance and oversight-heavy teams

Best fit: approval-first model with full artifact traceability.

Reason: documented decision flow improves reliability and auditability.

For benchmark context, evaluate workflow rigor on best directory listing services and best local business directories.

Where ListingBott fits in Canada execution

What ListingBott does

ListingBott is a workflow-based directory submission tool that supports structured execution with approvals and transparent status reporting.

How ListingBott works

- You submit business details through the client form.

- ListingBott prepares a list of directories for review.

- You approve the list before launch.

- ListingBott executes submissions based on approved scope.

- ListingBott provides reporting for completed and pending outcomes.

ListingBott Process

Key features and practical value

- Intake validation: catches profile issues before execution starts.

- Approval checkpoint: aligns scope and expectations before launch.

- Workflow transparency: supports ownership and escalation control.

- Reporting handoff: supports evidence-based expansion decisions.

Teams that prioritize workflow reliability usually maintain stronger long-term execution quality than teams focused only on output volume.

Expected outcomes and limits

Expected outcomes:

- structured submission execution,

- clear class-level operational visibility,

- repeatable rollout process for future waves.

Limits to keep explicit:

- no guaranteed ranking position,

- no guaranteed traffic by a specific date,

- no guaranteed indexing speed,

- no guaranteed outcomes controlled by third-party platforms.

The DR commitment is conditional. A promise to reach DR 15 can apply only when the starting DR is below 15, the client explicitly selects domain growth, and the directory list is approved before the process launches. Refunds may apply if the process has not started, and the public offer states that there are no hidden extra fees.

Risks/limits

Frequent failure patterns

- Launching without complete jurisdiction packets.

- Expanding with unresolved L3 queue items.

- Allowing mixed baseline standards in active classes.

- Measuring output count while ignoring queue and reopen signals.

- Running escalation with unclear owner accountability.

Practical limits

- Directory submission supports discoverability and consistency, but it does not replace broader SEO systems.

- Timing and outcomes vary by category, competition, and third-party platform behavior.

- Expansion without packet and queue discipline can create compounding correction debt.

Minimum control layer

- class-based gate approvals,

- SLA-bound correction ownership,

- weekly KPI and queue ledger reviews,

- complete launch packet before each expansion vote.

FAQ

Why use a country-hub model in Canada?

Because stable expansion depends on consistent charter rules, packet validation, and queue discipline.

Should all classes launch together?

Usually no. Launch sequentially, validate stability, then expand.

Which KPI should block expansion first?

Start with high-severity closure velocity, and read it together with the funnel acceptance rate and the wave integrity score.

Can directory submission guarantee rankings?

No. It supports consistency and discoverability, but rankings depend on external factors.

Is DR growth guaranteed for every project?

No. DR commitments are conditional and apply only to qualified setups.

What is the minimum governance stack?

Canonical data control, gate ownership, correction SLA, and recurring class-level KPI reviews.