Quick answer

Local business directory submission in California requires tighter process control than many single-market programs. Teams often operate across very different local contexts in one state, so profile consistency and correction speed matter more than raw submission volume.

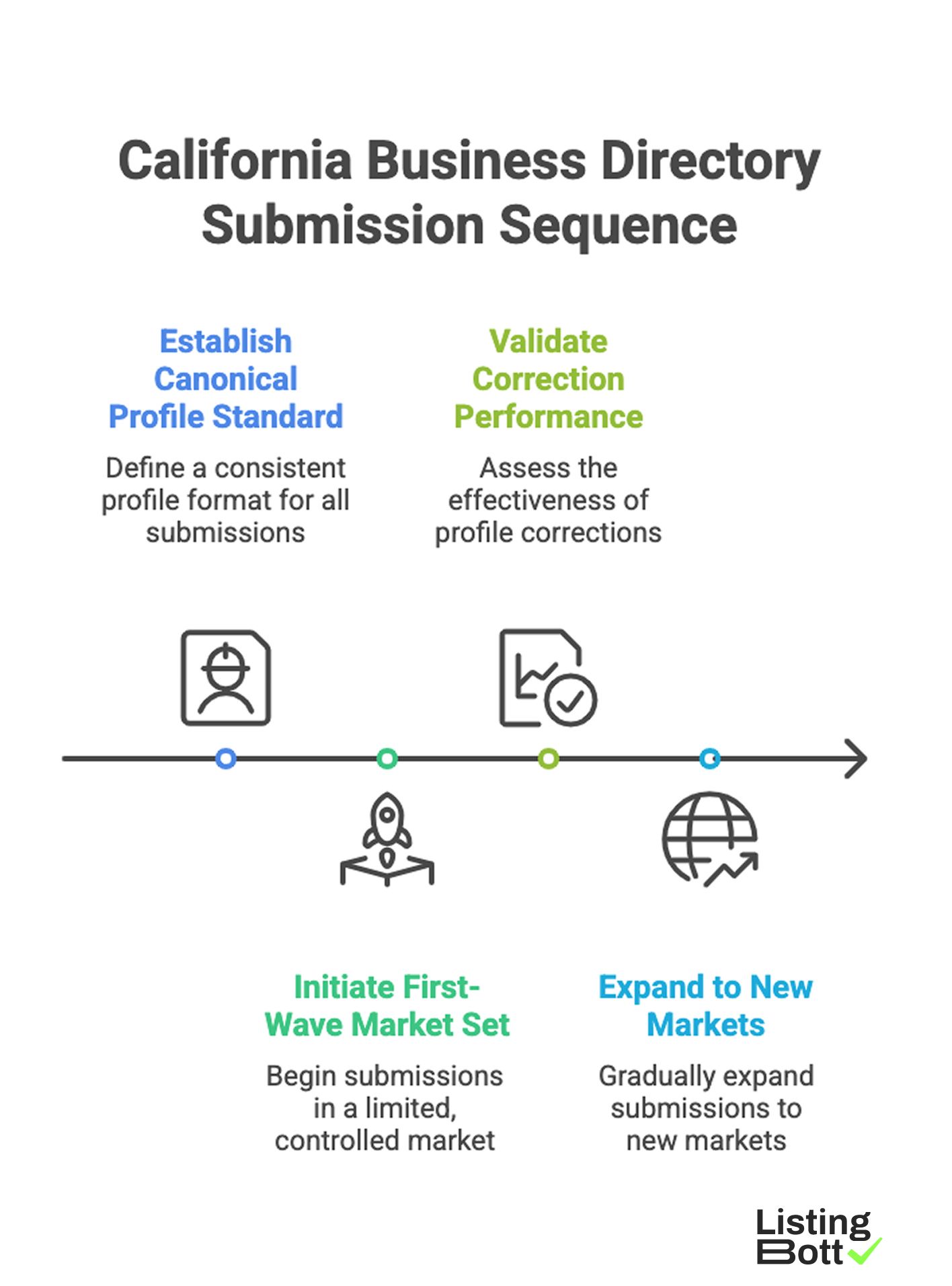

A practical California sequence is:

California Business Directory Submission Sequence

- lock one canonical profile standard,

- start with a controlled first-wave market set,

- validate correction performance,

- expand only when quality thresholds hold.

For broader U.S. planning, see Local business directory submission USA.


Methodology

This page uses a California-specific operating framework focused on practical execution risk.

The CALI model (Coverage, Accuracy, Local fit, Iteration)

| Factor | Weight (%) | Why it matters in California |
|---|---|---|
| Coverage design | 20 | Helps avoid random market expansion and weak execution sequencing |
| Accuracy controls | 30 | Prevents profile drift across high-volume location activity |
| Local relevance fit | 25 | Improves category and intent alignment by local market context |
| Iteration speed | 25 | Measures how quickly teams can detect and fix listing issues |

How to apply CALI

- Score each factor from 1-5 before launch.

- Do not expand market coverage if Accuracy or Iteration speed is below 3.

- Re-score every 2-4 weeks during active rollout.

This keeps expansion aligned to process quality rather than submission count.
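The CALI rules above can be sketched as a small scoring function. This is a minimal illustration and is not part of the published model. The factor keys and function name are hypothetical. Combining the 1-5 scores with the table weights as a weighted average is also an assumption, because the document does not say how the weights are applied. The hard gate mirrors the stated rule that Accuracy or Iteration speed below 3 blocks expansion.

```python
from dataclasses import dataclass

# Weights from the CALI table (they sum to 100).
CALI_WEIGHTS = {"coverage": 20, "accuracy": 30, "local_fit": 25, "iteration": 25}

# "Do not expand market coverage if Accuracy or Iteration speed is below 3."
GATING_FACTORS = ("accuracy", "iteration")
GATE_MINIMUM = 3


@dataclass
class CaliResult:
    weighted_score: float  # weighted average on the 1-5 scale (assumed combination rule)
    can_expand: bool
    blockers: list[str]


def evaluate_cali(scores: dict[str, int]) -> CaliResult:
    """Score one market on CALI and apply the expansion gate."""
    for factor in CALI_WEIGHTS:
        value = scores[factor]  # raises KeyError if a factor was not scored
        if not 1 <= value <= 5:
            raise ValueError(f"{factor} must be scored 1-5, got {value}")

    total_weight = sum(CALI_WEIGHTS.values())
    weighted = sum(CALI_WEIGHTS[f] * scores[f] for f in CALI_WEIGHTS) / total_weight

    # A low gating factor blocks expansion regardless of the composite score.
    blockers = [f for f in GATING_FACTORS if scores[f] < GATE_MINIMUM]
    return CaliResult(round(weighted, 2), not blockers, blockers)


result = evaluate_cali({"coverage": 5, "accuracy": 2, "local_fit": 4, "iteration": 4})
# result.weighted_score == 3.6, result.can_expand is False, result.blockers == ["accuracy"]
```

In this sketch the composite score is informational only. Expansion is blocked by the per-factor minimum even when the weighted average looks healthy, which keeps the gate tied to process quality.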

California rollout map by market cluster

| Cluster | Primary rollout objective | Typical execution challenge | Expansion trigger |
|---|---|---|---|
| Bay Area | Maintain profile consistency across high-competition categories | Category overlap and profile detail drift | Correction cycle stability |
| Los Angeles + Orange County | Scale without fragmenting ownership | High listing volume and coordination load | Clear owner + SLA adherence |
| San Diego | Keep quality high while expanding vertical coverage | Incremental scope creep | Verified quality thresholds |
| Sacramento and Central Valley | Preserve data consistency across distributed service areas | Address and service-area mismatch | Low unresolved fix backlog |
| Inland Empire and secondary metros | Controlled growth with repeatable SOP | Uneven process maturity | Repeatable pass rates across two cycles |

60-day California rollout workflow

| Phase | Window | Focus | Gate |
|---|---|---|---|
| Baseline | Days 1-10 | Canonical profile, ownership, category map | All required fields approved |
| Controlled launch | Days 11-25 | First-wave submissions and QA | Error rate remains controlled |
| Stabilization | Days 26-40 | Corrections and process refinement | Critical issues resolved |
| Expansion | Days 41-60 | Add additional markets with same SOP | Quality holds after expansion |

Skipping stabilization is the most common source of rework.
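A minimal sketch of the 60-day workflow as gated phases. It assumes the team records each gate as a simple pass/fail decision. The `Phase` type and both function names are hypothetical. Advancing depends on the gate rather than the calendar, so Stabilization cannot be skipped silently when its window ends.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Phase:
    name: str
    start_day: int
    end_day: int
    gate: str  # exit condition from the rollout table


# Phase windows and gates from the 60-day workflow table.
PHASES = [
    Phase("Baseline", 1, 10, "All required fields approved"),
    Phase("Controlled launch", 11, 25, "Error rate remains controlled"),
    Phase("Stabilization", 26, 40, "Critical issues resolved"),
    Phase("Expansion", 41, 60, "Quality holds after expansion"),
]


def phase_for_day(day: int) -> Phase:
    """Return the planned phase whose window contains the given rollout day."""
    for phase in PHASES:
        if phase.start_day <= day <= phase.end_day:
            return phase
    raise ValueError(f"day {day} is outside the 60-day plan")


def next_phase(current: str, gate_passed: bool) -> str:
    """Advance one phase only when the current gate has passed; never skip ahead."""
    names = [p.name for p in PHASES]
    index = names.index(current)  # raises ValueError for an unknown phase name
    if not gate_passed:
        return current  # hold in place, even if the calendar window has ended
    if index + 1 < len(names):
        return names[index + 1]
    return current  # Expansion is the last phase in this plan
```

A team that is still in "Controlled launch" on day 30 stays there until its gate passes, even though `phase_for_day(30)` reports Stabilization as the planned phase.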

California QA checklist before adding new markets

| Checkpoint | Question | Pass condition |
|---|---|---|
| Profile governance | Is one source of truth enforced for business details? | Yes, no conflicting profile sources |
| Ownership | Who owns fixes and escalation? | Named owner and backup |
| Correction loop | Are fix requests tracked to closure? | SLA and closure log in place |
| Reporting | Can status be reviewed by cluster? | Recurring cluster-level report available |
| Expansion rule | What blocks next rollout wave? | Explicit quality and backlog thresholds |
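The checklist works as an all-or-nothing gate. This sketch assumes each checkpoint is reviewed manually and recorded as pass/fail. The `Checkpoint` structure, the function name, and the example pass/fail values are illustrative.

```python
from dataclasses import dataclass


@dataclass
class Checkpoint:
    name: str
    question: str
    passed: bool
    note: str = ""


def ready_to_add_markets(checklist: list[Checkpoint]) -> tuple[bool, list[str]]:
    """Return (ready, failing checkpoint names); every checkpoint must pass."""
    failing = [c.name for c in checklist if not c.passed]
    return (not failing, failing)


# Example checklist mirroring the table; the pass/fail values are made up.
checklist = [
    Checkpoint("Profile governance", "Is one source of truth enforced?", True),
    Checkpoint("Ownership", "Who owns fixes and escalation?", True, "Named owner and backup"),
    Checkpoint("Correction loop", "Are fix requests tracked to closure?", False, "No closure log yet"),
    Checkpoint("Reporting", "Can status be reviewed by cluster?", True),
    Checkpoint("Expansion rule", "What blocks next rollout wave?", True),
]

ready, failing = ready_to_add_markets(checklist)
# ready is False; failing == ["Correction loop"]
```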

Comparison table

| Execution model | Best for | Advantages | Tradeoffs | California fit |
|---|---|---|---|---|
| Manual internal process | Very small scope and one operator | Direct control, low vendor dependency | Hard to scale reliably | Low fit beyond small pilots |
| Software-only execution | Teams with mature internal ops | Better control and repeatability | Requires strong in-house governance | Medium fit if team capacity is high |
| Service-led execution | Teams prioritizing speed and operational support | Faster implementation, lower internal burden | Needs transparent provider process | Strong fit for early and mid-stage rollout |
| Hybrid governance model | Teams balancing speed, quality, and oversight | Better scale-control balance | Requires clear role boundaries | Often highest fit for multi-cluster programs |

Decision matrix: what to choose by operating readiness

| Readiness profile | Recommended path | Why |
|---|---|---|
| Limited internal operations bandwidth | Service-led first | Faster launch with controlled process |
| Moderate bandwidth, expanding market footprint | Hybrid | Preserves quality while increasing throughput |
| Strong process team and QA maturity | Software-led or hybrid | More direct control with lower process risk |
| Unclear ownership or weak correction tracking | Service-led pilot + governance reset | Prevents scale before process clarity |
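One way to encode the decision matrix. It assumes readiness is captured as a bandwidth label plus three yes/no checks. The labels, parameter names, and rule order are assumptions. Governance gaps are checked first because the matrix recommends a governance reset before scaling. The matrix does not cover strong bandwidth without QA maturity, so the fallback for that case is also an assumption.

```python
BANDWIDTH_LEVELS = {"limited", "moderate", "strong"}  # hypothetical labels


def recommended_path(
    bandwidth: str,
    qa_mature: bool,
    clear_ownership: bool,
    tracks_corrections: bool,
) -> str:
    """Map a readiness profile to a recommended path from the decision matrix."""
    if bandwidth not in BANDWIDTH_LEVELS:
        raise ValueError(f"unknown bandwidth level: {bandwidth}")
    # Governance gaps come first: the matrix recommends a reset before scaling.
    if not (clear_ownership and tracks_corrections):
        return "Service-led pilot + governance reset"
    if bandwidth == "limited":
        return "Service-led first"
    if bandwidth == "strong" and qa_mature:
        return "Software-led or hybrid"
    # Moderate bandwidth maps to Hybrid; strong bandwidth without QA maturity
    # is not covered by the matrix, so falling back to Hybrid is an assumption.
    return "Hybrid"
```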

Metrics to track per California cluster

| Metric | Why it matters | Early warning signal |
|---|---|---|
| Consistency pass rate | Detects profile quality stability | Declining pass rate across clusters |
| Correction turnaround | Measures process reliability | Growing unresolved correction queue |
| Expansion readiness score | Prevents premature scaling | New markets launched below threshold |
| Submission-to-status lag | Measures reporting discipline | Delayed status visibility for stakeholders |
| BOFU (bottom-of-funnel) path engagement | Tracks commercial contribution | Informational traffic with weak progression |

The goal is durable quality, not temporary activity spikes.
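Consistency pass rate can be computed per cluster by comparing each listing against the canonical profile. This is a sketch under stated assumptions. The field names and example canonical values are hypothetical. The case/whitespace normalization is deliberately simple, and real matching (address abbreviations, phone formats) usually needs more rules. `declining_clusters` is a hypothetical helper for the "declining pass rate" early-warning signal; it compares two review cycles.

```python
from collections import defaultdict
from dataclasses import dataclass

# Canonical profile: the single source of truth (illustrative values only).
CANONICAL = {
    "name": "Example Plumbing Co",
    "phone": "+1-415-555-0100",
    "address": "100 Main St, San Francisco, CA 94105",
}


@dataclass
class Listing:
    cluster: str
    directory: str
    fields: dict[str, str]


def _normalize(value: str) -> str:
    # Case- and whitespace-insensitive comparison only.
    return " ".join(value.lower().split())


def is_consistent(listing: Listing) -> bool:
    """A listing passes only if every canonical field matches."""
    return all(
        _normalize(listing.fields.get(key, "")) == _normalize(value)
        for key, value in CANONICAL.items()
    )


def pass_rate_by_cluster(listings: list[Listing]) -> dict[str, float]:
    """Share of consistent listings per cluster, from 0.0 to 1.0."""
    totals: dict[str, int] = defaultdict(int)
    passes: dict[str, int] = defaultdict(int)
    for listing in listings:
        totals[listing.cluster] += 1
        passes[listing.cluster] += int(is_consistent(listing))
    return {cluster: passes[cluster] / totals[cluster] for cluster in totals}


def declining_clusters(previous: dict[str, float], current: dict[str, float]) -> list[str]:
    """Early-warning signal: clusters whose pass rate fell since the last cycle."""
    return [c for c, rate in current.items() if c in previous and rate < previous[c]]


listings = [
    Listing("Bay Area", "directory-a", dict(CANONICAL)),
    Listing("Bay Area", "directory-b", {**CANONICAL, "phone": "+1-415-555-0199"}),
    Listing("San Diego", "directory-a", dict(CANONICAL)),
]
rates = pass_rate_by_cluster(listings)  # {'Bay Area': 0.5, 'San Diego': 1.0}
```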

Best by use case

1) Single-location California business

Best fit: service-led rollout with strict baseline controls.

Reason: execution consistency can be built quickly without overloading internal staff.

2) Multi-location operator across California metros

Best fit: hybrid model with centralized governance.

Reason: clusters can scale while maintaining quality and correction ownership.

3) SaaS company targeting California local discovery

Best fit: staged rollout using CALI scoring and expansion gates.

Reason: this model limits risk from rapid expansion without process readiness.

4) Agency managing multiple California clients

Best fit: repeatable workflow model with cluster-level reporting.

Reason: agencies need predictable operations and transparent account-level status.

5) Team with compliance-sensitive categories

Best fit: approval-before-publish model with strict correction tracking.

Reason: change control and accountability need to be explicit at every step.

When comparing options, teams usually prioritize workflow reliability and delivery clarity over vendor claims that focus only on listing count.

Where ListingBott fits in California execution

What ListingBott does

ListingBott provides a productized directory submission workflow for teams that need structured execution. Its current public offer is a one-time payment with publication to 100+ directories.

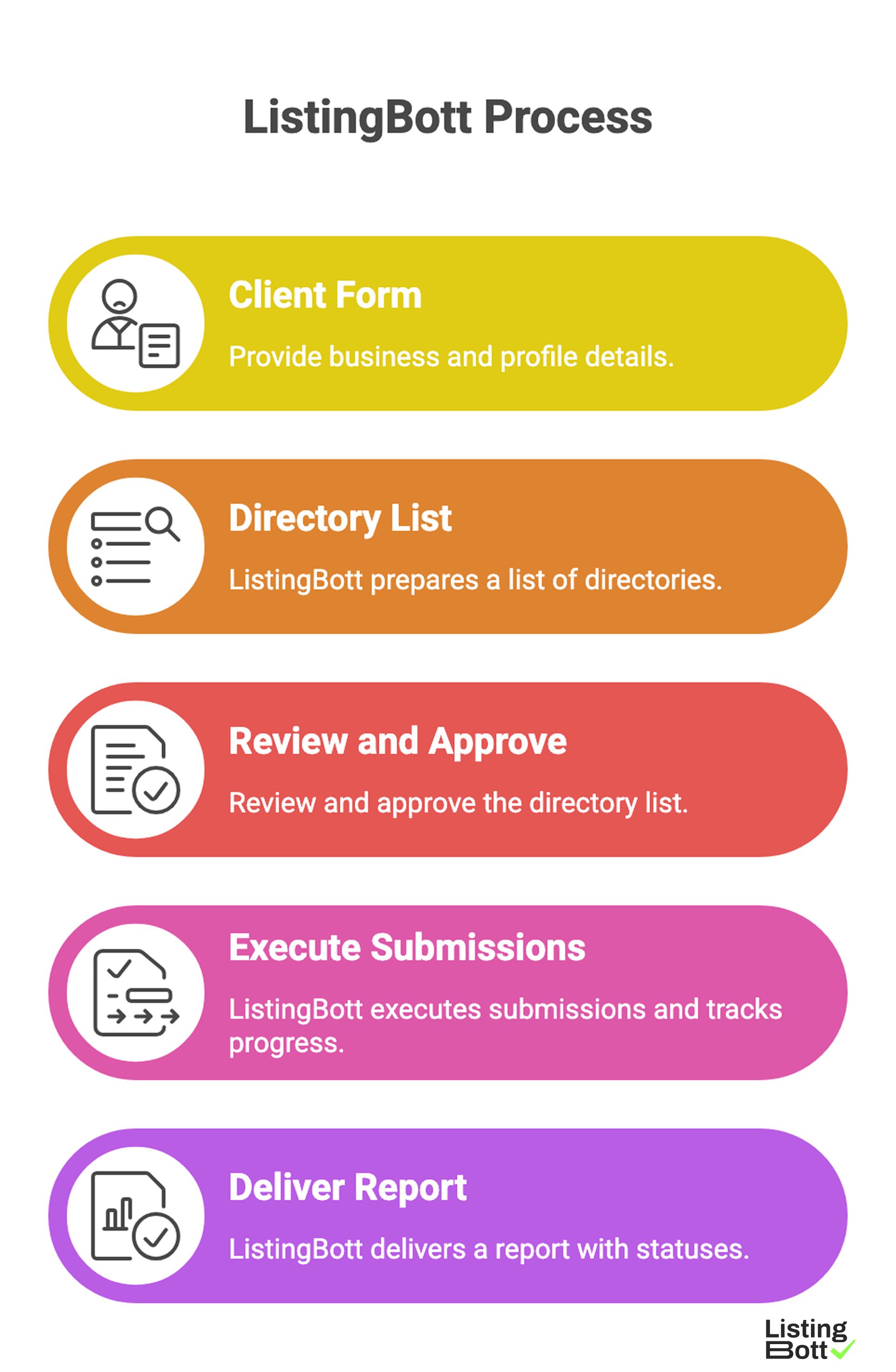

How ListingBott works

ListingBott Process

- You provide business and profile details in the client form.

- ListingBott prepares a list of directories for your project.

- You review and approve that directory list.

- ListingBott executes submissions and tracks progress.

- ListingBott delivers a report with submitted and pending statuses.

This process helps reduce manual coordination overhead while keeping rollout steps transparent.
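Teams that want to mirror submitted/pending reporting in their own tracking can keep a status per directory. This is a hypothetical structure. It is not ListingBott's report format or an API. The status names are assumptions, including what counts as "pending".

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    QUEUED = "queued"        # on the approved list, not yet submitted
    SUBMITTED = "submitted"  # submission completed
    PENDING = "pending"      # awaiting a directory-side outcome (assumed meaning)


@dataclass
class DirectoryTask:
    directory: str
    status: Status


def status_report(tasks: list[DirectoryTask]) -> dict[str, int]:
    """Count tasks per status; zero counts are kept so every status is visible."""
    counts = Counter(task.status for task in tasks)
    return {status.value: counts[status] for status in Status}


def pending_directories(tasks: list[DirectoryTask]) -> list[str]:
    """Directories still awaiting an outcome, for follow-up in the next review."""
    return [t.directory for t in tasks if t.status is Status.PENDING]


tasks = [
    DirectoryTask("directory-a", Status.SUBMITTED),
    DirectoryTask("directory-b", Status.PENDING),
    DirectoryTask("directory-c", Status.SUBMITTED),
]
report = status_report(tasks)  # {'queued': 0, 'submitted': 2, 'pending': 1}
```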

Key features and what they mean in operations

- Intake gating before publish: lowers preventable data-quality errors.

- Approval gate: aligns execution scope before launch.

- Status visibility: improves cross-team coordination and expectation management.

- Report handoff: supports QA review and next-wave planning.

For teams evaluating options, submission process transparency is usually more predictive of sustainable outcomes than volume-focused messaging.

Expected results and limits

Expected outcomes:

- workflow clarity,

- execution within agreed scope,

- report-based visibility into what is completed and pending.

Limits that must remain explicit:

- no guaranteed ranking position,

- no guaranteed traffic by a specific date,

- no guaranteed indexing speed,

- no guarantees over third-party platform outcomes.

DR (Domain Rating) commitments are conditional. A promise to reach DR 15 applies only to qualified projects with a starting DR below 15, an explicit domain growth goal, and an approved directory list. Refunds can apply if the process has not started, and commercial terms should stay clear, with no hidden extra fees.
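The DR condition above is a conjunction: all three criteria must hold before any DR commitment applies. A minimal sketch with hypothetical field and function names. It encodes only the eligibility rule, not refund handling or other commercial terms.

```python
from dataclasses import dataclass

DR_TARGET = 15  # target named in the offer terms


@dataclass
class Project:
    starting_dr: int
    has_domain_growth_goal: bool
    directory_list_approved: bool


def dr_commitment_applies(project: Project) -> bool:
    """True only if all three qualifying conditions hold."""
    return (
        project.starting_dr < DR_TARGET
        and project.has_domain_growth_goal
        and project.directory_list_approved
    )
```

A project that already starts at DR 15, or that has no approved directory list, falls outside the commitment even if the other conditions are met.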

Risks/limits

Common California rollout mistakes

- Expanding cluster coverage before fixing first-wave quality issues.

- Letting multiple teams edit profile data without one source of truth.

- Measuring only submission count and ignoring correction throughput.

- Running state expansion without explicit ownership.

- Treating all California markets as identical operationally.

Practical limits

- Directory submission contributes to discoverability but does not replace broader SEO fundamentals.

- Results timing varies by category competition and external platform behavior.

- Rapid expansion without QA controls often creates long-term maintenance debt.

Risk controls to enforce

- cluster-level rollout gates,

- correction workflow with owner and escalation path,

- recurring reporting cadence,

- documented inclusion and exclusion criteria.
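A correction workflow with an owner and escalation path can be sketched as a queue of requests checked against an SLA. The 7-day SLA, the field names, the people in the example, and the rule of escalating to the backup owner are all assumptions for illustration. The document does not specify SLA values. The same records also yield correction turnaround, one of the per-cluster metrics.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from statistics import median
from typing import Optional

SLA_DAYS = 7  # hypothetical SLA; replace with the value your team agrees on


@dataclass
class CorrectionRequest:
    listing: str
    issue: str
    opened: date
    owner: str
    backup_owner: str
    closed: Optional[date] = None


def escalations(queue: list[CorrectionRequest], today: date) -> list[tuple[str, str]]:
    """Return (listing, escalate_to) for open requests older than the SLA."""
    overdue = [
        r for r in queue
        if r.closed is None and today - r.opened > timedelta(days=SLA_DAYS)
    ]
    # Escalate to the named backup owner (assumed escalation rule).
    return [(r.listing, r.backup_owner) for r in overdue]


def median_turnaround_days(queue: list[CorrectionRequest]) -> Optional[float]:
    """Median open-to-close time in days for resolved requests, or None if none closed."""
    durations = [(r.closed - r.opened).days for r in queue if r.closed is not None]
    return median(durations) if durations else None


queue = [
    CorrectionRequest("Bay Area / directory-a", "wrong phone", date(2024, 3, 1), "ana", "ben"),
    CorrectionRequest("San Diego / directory-b", "old address", date(2024, 3, 10), "ana", "ben",
                      closed=date(2024, 3, 12)),
]
to_escalate = escalations(queue, today=date(2024, 3, 15))  # [('Bay Area / directory-a', 'ben')]
```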

FAQ

Why does California need a dedicated state page instead of only a USA page?

California rollout usually involves multiple market clusters and higher execution complexity, so state-level controls improve quality and planning.

Should we start with all California markets at once?

Usually no. Start with a controlled first wave, stabilize corrections, then expand by readiness criteria.

What is the main KPI for deciding expansion inside California?

Use consistency pass rate plus correction turnaround as your primary expansion gate.

Is service-led always better than software-led?

Not always. It depends on internal capacity and governance maturity. Hybrid often performs best when scaling.

Can directory submission guarantee local ranking outcomes?

No. It supports execution quality and visibility, but ranking and traffic timing depend on many other factors.

Can DR growth be promised by default?

No. DR commitments are conditional and require a qualified setup with specific criteria.