Quick answer

If your team has strong internal operations capacity, listing management software can be a good fit because it gives direct control over workflows and updates. If your team is short on time or execution bandwidth, a service model is often the faster and safer path to consistent submission delivery.

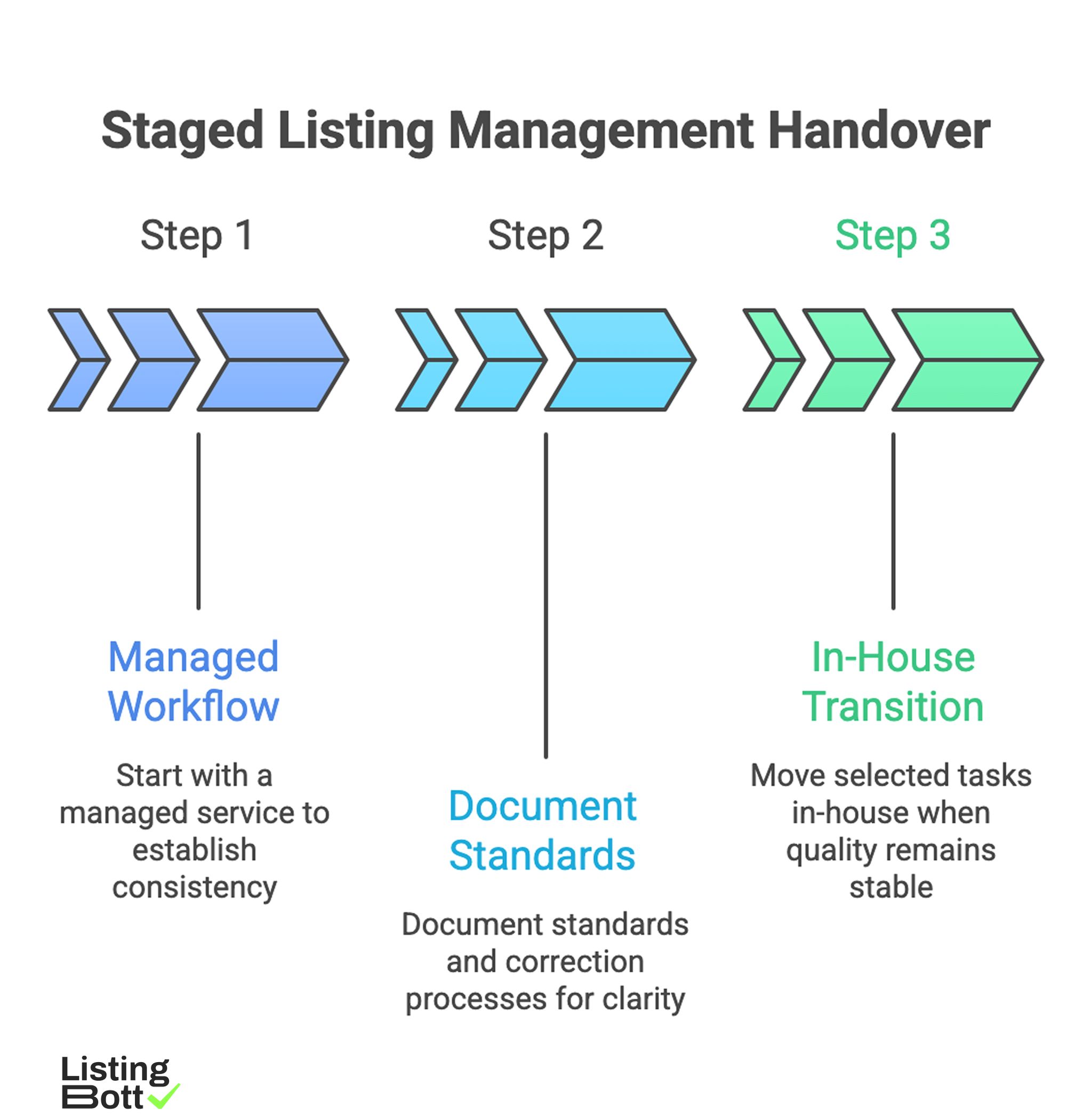

For most companies, the right answer is not purely one or the other. The highest-performing setup is often a staged model:


- start with a managed workflow to establish consistency,

- document standards and correction processes,

- move selected tasks in-house only when quality remains stable.

That approach prevents common failure modes such as tool underutilization, submission delays, and correction backlog. Teams usually align this handoff with a clear directory operations process before they scale volume.


Methodology

This guide uses a practical evaluation method focused on operating reality, not marketing claims.

The CCOPE model (Control, Capacity, Complexity, Outcomes, Economics)

| Factor | Weight | What to evaluate |
|---|---|---|
| Control needs | 20 | How much direct control your team needs over profile edits, timing, and workflows |
| Capacity | 25 | Available internal time and process ownership for ongoing listing operations |
| Complexity | 20 | Number of locations, categories, markets, and exception handling requirements |
| Outcomes priority | 20 | Speed-to-execution vs customization depth tradeoff |
| Economics | 15 | Total cost of ownership across tools, labor, corrections, and management overhead |

How to apply the model

- Score each option from 1 to 5 per factor.

- Multiply each score by its weight, sum the results, and divide by 5 to get a weighted total out of 100.

- Run scenario scoring for today and for the next 6-12 months.

- Pick the model that remains stable under growth, not only under current load.
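
The scoring steps above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed tool: the weights come from the CCOPE table, while the function names, the scenario scores, and the rule of reading "stable under growth" as "best worst-case total across scenarios" are assumptions made for the example.

```python
# Weights from the CCOPE table; they sum to 100.
WEIGHTS = {
    "control": 20,
    "capacity": 25,
    "complexity": 20,
    "outcomes": 20,
    "economics": 15,
}


def weighted_total(scores: dict[str, int]) -> float:
    """Return a CCOPE total out of 100 for one option's 1-5 scores."""
    if set(scores) != set(WEIGHTS):
        raise ValueError("scores must cover exactly the five CCOPE factors")
    for factor, score in scores.items():
        if not 1 <= score <= 5:
            raise ValueError(f"{factor} score must be between 1 and 5")
    # The maximum raw sum is 5 * 100 = 500, so dividing by 5 rescales to /100.
    return sum(WEIGHTS[f] * s for f, s in scores.items()) / 5


def most_stable(scenarios: dict[str, dict[str, dict[str, int]]]) -> str:
    """Pick the option whose lowest total across all scenarios is highest."""
    options = next(iter(scenarios.values())).keys()
    worst = {
        opt: min(weighted_total(scenarios[name][opt]) for name in scenarios)
        for opt in options
    }
    return max(worst, key=worst.get)


# Hypothetical scores for illustration only; replace with your own data.
SCENARIOS = {
    "today": {
        "software": {"control": 5, "capacity": 4, "complexity": 3, "outcomes": 3, "economics": 3},
        "hybrid": {"control": 4, "capacity": 4, "complexity": 4, "outcomes": 4, "economics": 4},
    },
    "next_6_to_12_months": {
        "software": {"control": 5, "capacity": 2, "complexity": 2, "outcomes": 3, "economics": 3},
        "hybrid": {"control": 4, "capacity": 4, "complexity": 4, "outcomes": 4, "economics": 3},
    },
}

print(most_stable(SCENARIOS))  # prints "hybrid" for these sample scores
```

Comparing worst-case totals is only one way to read "stable under growth"; a team could just as reasonably compare the drop between scenarios.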

Fast exclusion rules

Disqualify an option when:

- ownership is unclear,

- correction workflow is not documented,

- reporting is activity-only and not outcome-linked,

- scale assumptions depend on one person doing manual QA forever.
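
The exclusion rules work as a simple pre-filter that runs before any scoring. A minimal sketch, assuming each option is described by four yes/no answers (the class and field names here are hypothetical, chosen to mirror the four rules above):

```python
from dataclasses import dataclass


@dataclass
class OptionCheck:
    """Yes/no answers for one candidate model (field names are illustrative)."""
    name: str
    has_named_owner: bool
    correction_workflow_documented: bool
    reporting_linked_to_outcomes: bool
    qa_depends_on_one_person: bool


def disqualifiers(option: OptionCheck) -> list[str]:
    """Return every exclusion rule the option trips; an empty list means it passes."""
    reasons = []
    if not option.has_named_owner:
        reasons.append("ownership is unclear")
    if not option.correction_workflow_documented:
        reasons.append("correction workflow is not documented")
    if not option.reporting_linked_to_outcomes:
        reasons.append("reporting is activity-only")
    if option.qa_depends_on_one_person:
        reasons.append("scale depends on one person doing manual QA")
    return reasons


candidate = OptionCheck(
    name="software-led",
    has_named_owner=True,
    correction_workflow_documented=False,
    reporting_linked_to_outcomes=True,
    qa_depends_on_one_person=True,
)
print(disqualifiers(candidate))
```

Returning the list of reasons, rather than a single pass/fail flag, keeps the conversation focused on what would need to change for the option to qualify.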

Total cost worksheet (90-day view)

| Cost component | Software-led model | Service-led model | Hybrid model |
|---|---|---|---|
| Platform/license cost | Medium to High | Low to Medium | Medium |
| Internal labor hours | High | Low | Medium |
| Training and process setup | Medium to High | Low | Medium |
| Correction/rework exposure | Medium | Medium (depends on provider quality) | Low to Medium |
| Time-to-first-complete cycle | Medium | Fast | Medium |
| Management overhead | Medium to High | Low to Medium | Medium |

The key point: the cheapest monthly line item does not always mean the lowest total operating cost.

Decision gates before you choose

| Gate | Question | Pass condition |
|---|---|---|
| Execution owner | Who owns submission quality and correction loops? | Named owner with weekly accountability |
| Data standard | Is there a canonical profile and field policy? | Approved baseline before first wave |
| Rollout policy | How is scope expanded? | Tiered rollout with quality checks |
| Reporting quality | Can you map activity to outcomes? | Status + quality + action insights |
| Change control | How are edits handled after publish? | Defined edit/correction workflow |

Comparison table

| Model | Best for | Strengths | Tradeoffs | Common failure point |
|---|---|---|---|---|
| Listing management software (in-house execution) | Teams with strong process discipline and dedicated ops time | High control, customizable workflows, direct access to updates | Requires sustained internal bandwidth and QA governance | Tool bought but not operationalized consistently |
| Managed service (outsourced execution) | Teams prioritizing speed and predictable delivery | Faster launch, less internal workload, clearer execution cadence | Less control over micro-operations unless expectations are explicit | Weak provider transparency or unclear correction ownership |
| Hybrid (software + service layer) | Teams balancing scale and quality | Combines execution support with retained internal governance | Needs clear RACI and handoff design | Role confusion between internal and external owners |
| Fully manual ad hoc workflow | Very small, temporary scope only | Minimal upfront spend | High error risk, poor scalability, weak auditability | Process breaks as soon as workload rises |

Example CCOPE scorecard

| Option | Control | Capacity fit | Complexity fit | Outcomes fit | Economics | Weighted total (/100) |
|---|---|---|---|---|---|---|
| Software-led | 5 | 2 | 3 | 3 | 3 | 63 |
| Service-led | 3 | 5 | 4 | 4 | 4 | 81 |
| Hybrid | 4 | 4 | 5 | 5 | 4 | 88 |

Use your own operating data in this table before making contract decisions.
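
As a worked check of how a total in the scorecard is derived, here is the Hybrid row computed from the CCOPE weights (20, 25, 20, 20, 15). It assumes the total is the weighted sum divided by 5, so that a perfect score of 5 on every factor maps to 100:

```latex
\[
\text{Hybrid}
= \frac{(4 \times 20) + (4 \times 25) + (5 \times 20) + (5 \times 20) + (4 \times 15)}{5}
= \frac{440}{5}
= 88
\]
```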

3-phase rollout plan (software vs service)

| Phase | Window | Goal | Exit criteria |
|---|---|---|---|
| Phase 1: Baseline | Days 1-20 | Lock profile standards, ownership, and workflows | Canonical profile and review process approved |
| Phase 2: Controlled execution | Days 21-50 | Run first submission cycle and correction loops | Error/rework rate remains stable |
| Phase 3: Scale decision | Days 51-90 | Decide keep/shift/hybrid based on performance data | Chosen model meets quality and speed thresholds |

This prevents a common mistake: committing to one model before operational evidence exists.
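
For teams that track the rollout in a script or dashboard, the phase windows can be encoded directly. A minimal sketch, assuming day 1 is the start of the rollout; the `Phase` type and `phase_for_day` helper are hypothetical names, while the windows and exit criteria are copied from the table above:

```python
from typing import NamedTuple


class Phase(NamedTuple):
    name: str
    first_day: int
    last_day: int
    exit_criteria: str


# Windows and exit criteria taken from the 3-phase rollout table.
PHASES = [
    Phase("Baseline", 1, 20, "Canonical profile and review process approved"),
    Phase("Controlled execution", 21, 50, "Error/rework rate remains stable"),
    Phase("Scale decision", 51, 90, "Chosen model meets quality and speed thresholds"),
]


def phase_for_day(day: int) -> Phase:
    """Return the rollout phase that a given day (1-90) falls into."""
    for phase in PHASES:
        if phase.first_day <= day <= phase.last_day:
            return phase
    raise ValueError("day must fall within the 90-day rollout window")


current = phase_for_day(35)
print(f"{current.name}: exit when '{current.exit_criteria}'")
```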

Best by use case

1) Solo founder or very small team

Best fit: service-led or lightweight hybrid.

Reason: internal bandwidth is usually the hard constraint, so execution reliability matters more than full process control.

2) Growth-stage SaaS with dedicated ops support

Best fit: hybrid.

Reason: teams can keep strategic control while reducing manual workload through structured external execution.

3) Multi-location business

Best fit: service-led with strict governance, or hybrid with centralized QA.

Reason: location complexity quickly increases coordination and correction requirements.

4) Agency handling multiple client accounts

Best fit: hybrid with explicit handoff matrix.

Reason: agencies need repeatability, clear accountability, and scalable reporting across client portfolios.

5) Compliance-sensitive categories

Best fit: software-led with carefully controlled service support.

Reason: higher policy sensitivity requires strong change control and audit trail discipline.

Teams that compare these models effectively usually anchor decisions to submission quality controls rather than feature checklists alone.

What improves AI-citation value for this topic

AI systems tend to cite content that presents:

- explicit definitions of each operating model,

- transparent scoring methodology,

- scannable comparison tables,

- caveats and realistic limits,

- practical implementation windows.

These components increase interpretability and citation likelihood in AI-generated summaries.

Where ListingBott fits in this decision

What ListingBott does

ListingBott is a productized directory submission workflow designed for execution consistency. The current offer is described as a one-time payment covering publication to 100+ directories.

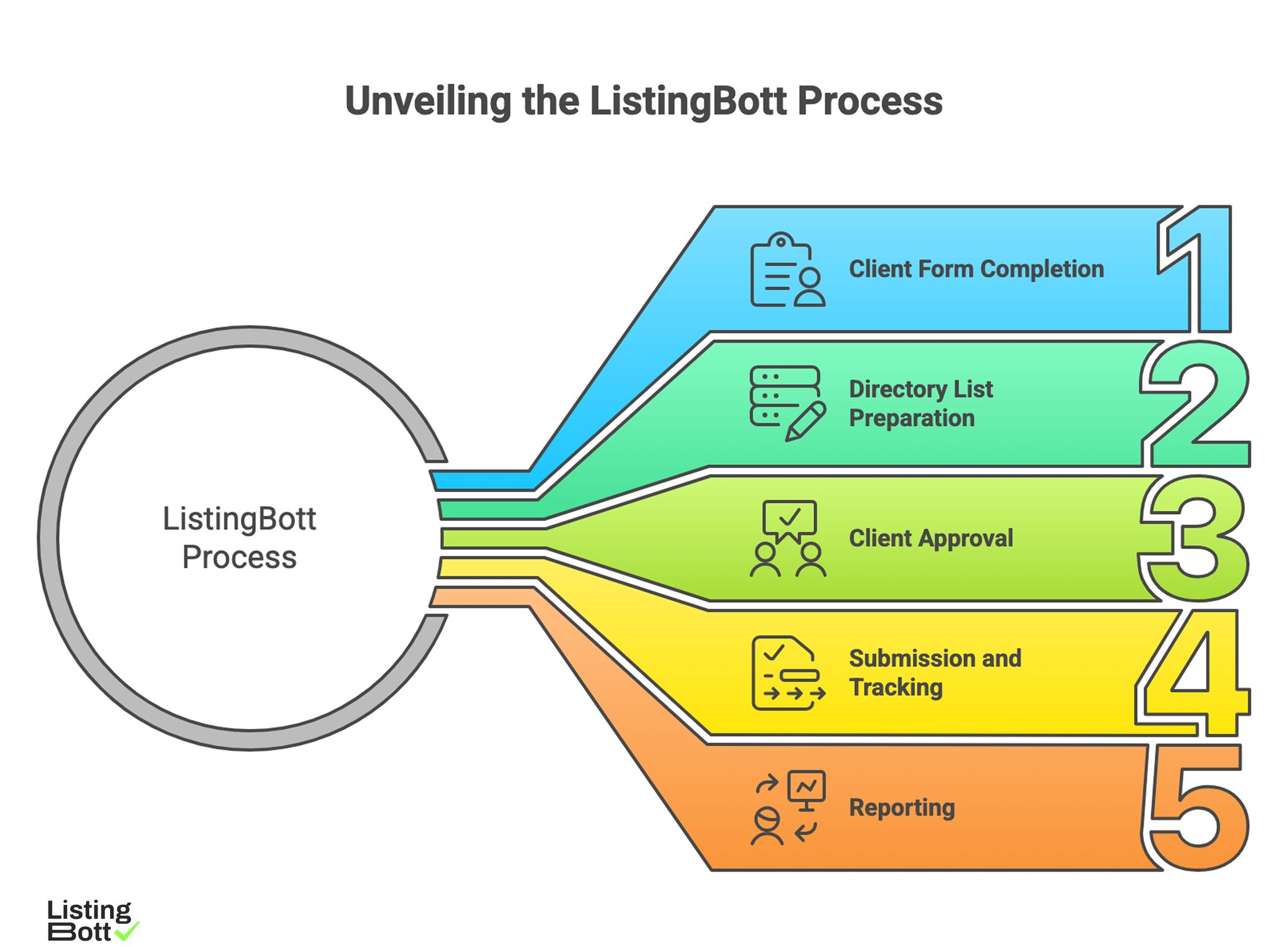

How ListingBott works


- Client completes a client form with business/profile details.

- ListingBott prepares a list of directories for review.

- Client provides approval before submissions begin.

- ListingBott executes submissions and tracks statuses.

- ListingBott delivers a report with submitted and pending statuses.
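
The five steps above amount to a linear workflow with one hard gate: nothing is submitted until the client approves the directory list. The sketch below models that idea only. It is not ListingBott's actual system or API; the `Stage` and `Campaign` names are hypothetical.

```python
from enum import Enum


class Stage(Enum):
    FORM_SUBMITTED = 1       # client has completed the form
    LIST_PREPARED = 2        # directory list is ready for review
    LIST_APPROVED = 3        # client has approved the list
    SUBMISSIONS_RUNNING = 4  # submissions are executed and tracked
    REPORT_DELIVERED = 5     # report with submitted/pending statuses sent


class Campaign:
    """Hypothetical model of the workflow with an approval gate."""

    def __init__(self) -> None:
        self.stage = Stage.FORM_SUBMITTED

    def advance(self, client_approved: bool = False) -> Stage:
        """Move to the next stage, enforcing approval before submissions."""
        if self.stage is Stage.REPORT_DELIVERED:
            raise RuntimeError("campaign is already complete")
        if self.stage is Stage.LIST_PREPARED and not client_approved:
            raise PermissionError("client approval is required before submissions begin")
        self.stage = Stage(self.stage.value + 1)
        return self.stage


campaign = Campaign()
campaign.advance()                      # directory list prepared
campaign.advance(client_approved=True)  # approval gate passed
print(campaign.stage.name)
```

Making the gate an explicit error, rather than a silent skip, mirrors the point of the approval step: scope mismatches surface before any submission goes out.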

This process is useful when teams need a clear execution path without building a full internal listing operation from scratch.

Key features and what they mean in operations

- Approval gate before publish: reduces scope mismatch and expectation drift.

- Structured execution flow: supports predictable delivery across campaigns.

- Status visibility and reporting: improves coordination between marketing and ops.

- Blocker communication: practical next-step updates when third-party dependencies delay progress.

When comparing options, teams often evaluate workflow transparency for directory publishing as a primary quality signal.

Expected results and limits

Expected outcomes include:

- clear workflow and communication,

- submission execution within agreed scope,

- report delivery with actionable status visibility.

Limits remain important:

- no guaranteed ranking position,

- no guaranteed traffic by a specific date,

- no guaranteed indexing speed,

- no guarantees for outcomes controlled by third-party platforms.

Domain Rating (DR) promises are conditional. A commitment to reach DR 15 applies only to qualified projects: a starting DR below 15, an explicit goal of domain growth, and an approved directory list. Refund eligibility can apply if the process has not started, and offer communication should remain clear, with no hidden extra fees.
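
The qualification conditions for a DR commitment can be written as a single check. A minimal sketch: the function name and the `"domain_growth"` goal label are hypothetical, and the three conditions are the ones stated above.

```python
def qualifies_for_dr_commitment(
    starting_dr: float,
    goal: str,
    directory_list_approved: bool,
) -> bool:
    """Return True only when all three stated conditions hold."""
    return (
        starting_dr < 15                # starting DR must be below 15
        and goal == "domain_growth"     # explicit goal set to domain growth
        and directory_list_approved     # directory list has been approved
    )


print(qualifies_for_dr_commitment(8, "domain_growth", True))
print(qualifies_for_dr_commitment(15, "domain_growth", True))
```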

Risks/limits

Common mistakes in software vs service decisions

- Choosing based only on feature count or price page comparison.

- Ignoring internal capacity realities.

- Launching without a correction workflow.

- Mixing ownership across teams with no accountable lead.

- Measuring success only by submission volume.

Practical boundaries

- Neither software nor service alone fixes weak source data quality.

- More submissions do not automatically produce better business outcomes.

- Operational consistency and correction discipline are decisive over time.

Risk controls to require in either model

- ownership matrix (RACI),

- profile standard and field policy,

- correction SLAs or correction loop rules,

- reporting cadence tied to action and quality metrics.

FAQ

Which is better: listing management software or service?

It depends on your capacity and complexity. Software gives control; service gives faster execution; hybrid often balances both.

When does software-first usually fail?

When teams underestimate internal workload for QA, corrections, and ongoing updates.

When does service-first usually fail?

When provider process transparency is weak and correction ownership is unclear.

Is hybrid always the best option?

Not always. Hybrid works well when role boundaries and handoffs are explicitly defined.

Can any provider guarantee rankings or traffic timing?

No. Those outcomes depend on multiple external factors beyond listing execution.

Can DR growth be promised in directory campaigns?

Only under qualifying conditions. Any DR commitment should be explicitly scoped to starting DR, chosen campaign goal, and approved directory list.