Quick answer

A strong directory submission strategy is not about blasting your site across every directory. It is about selecting relevant platforms, sequencing submissions safely, and measuring outcomes you can act on.

If you want predictable results, use a workflow that starts with relevance scoring, runs in controlled batches, and tracks what happens after each submission. That approach protects quality while improving visibility, referral opportunities, and the signals that support search performance.

When teams need a faster execution path, a structured business directory submission tool helps keep quality, pace, and reporting in one workflow.


Problem framing

Directory submissions fail when teams optimize for volume instead of fit. Submitting to many low-value directories can consume time, create noise, and make reporting meaningless.

The goal is not “more submissions.” The goal is “better placements that support discovery and trust.” That means every directory should pass a clear quality filter, and every batch should have a measurable objective.

Common failure patterns:

- No segmentation by audience or business model.

- One-time submission push with no follow-up checks.

- No baseline metrics before launch.

- Weak listing consistency across profiles.

A safer framing is:

- Prioritize quality and relevance.

- Execute in controlled waves.

- Track changes by period.

- Improve based on report data.

Directory prioritization framework

| Directory type | Typical value | Risk level | Priority rule |
|---|---|---|---|
| Niche industry directories | High relevance, more qualified traffic | Low to medium | Prioritize first when audience match is clear |
| Startup/product directories | Good discovery and launch visibility | Low to medium | Prioritize early for new product traction |
| General business directories | Broad coverage | Medium | Use selectively, with a quality threshold |
| Local directories | Important for consistent local presence | Low to medium | Prioritize for local businesses or local pages |
| Low-quality mass directories | Low sustained value | High | Avoid |
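The priority rules in this table can be turned into a repeatable ordering step. Below is a minimal Python sketch, assuming each candidate has already been labeled with one of the table's directory types. The type keys, the tier numbers, and the choice to push local directories to the end for non-local businesses are illustrative assumptions, not a documented ListingBott rule.

```python
from typing import List, NamedTuple, Optional


class Candidate(NamedTuple):
    name: str
    dir_type: str  # hypothetical label matching one row of the table


def priority_tier(dir_type: str, is_local_business: bool) -> Optional[int]:
    """Return a tier (1 = submit first) or None when the table says to avoid the type."""
    if dir_type == "low_quality_mass":
        return None  # table rule: "Avoid"
    if dir_type in ("niche_industry", "startup_product"):
        return 1  # prioritize first / early (audience match assumed already confirmed)
    if dir_type == "local":
        # Assumption: tier 1 for local businesses, otherwise submitted last.
        return 1 if is_local_business else 3
    if dir_type == "general_business":
        return 2  # use selectively, after the higher-relevance tiers
    raise ValueError(f"unknown directory type: {dir_type}")


def order_candidates(candidates: List[Candidate], is_local_business: bool) -> List[Candidate]:
    """Drop avoided types, then sort the rest by tier only."""
    tiered = [(priority_tier(c.dir_type, is_local_business), c) for c in candidates]
    kept = [(tier, c) for tier, c in tiered if tier is not None]
    return [c for _, c in sorted(kept, key=lambda pair: pair[0])]
```

Because `sorted()` is stable and only the tier is used as the key, directories that share a tier keep the order a reviewer entered them in.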

What “good execution” looks like

A healthy workflow keeps each listing accurate, clear, and aligned with your positioning. You should be able to answer three questions at any point:

- Which directories were selected and why?

- What was submitted and when?

- What changed after submission?

If you cannot answer all three, your process is operationally weak, even if the submission count looks high.

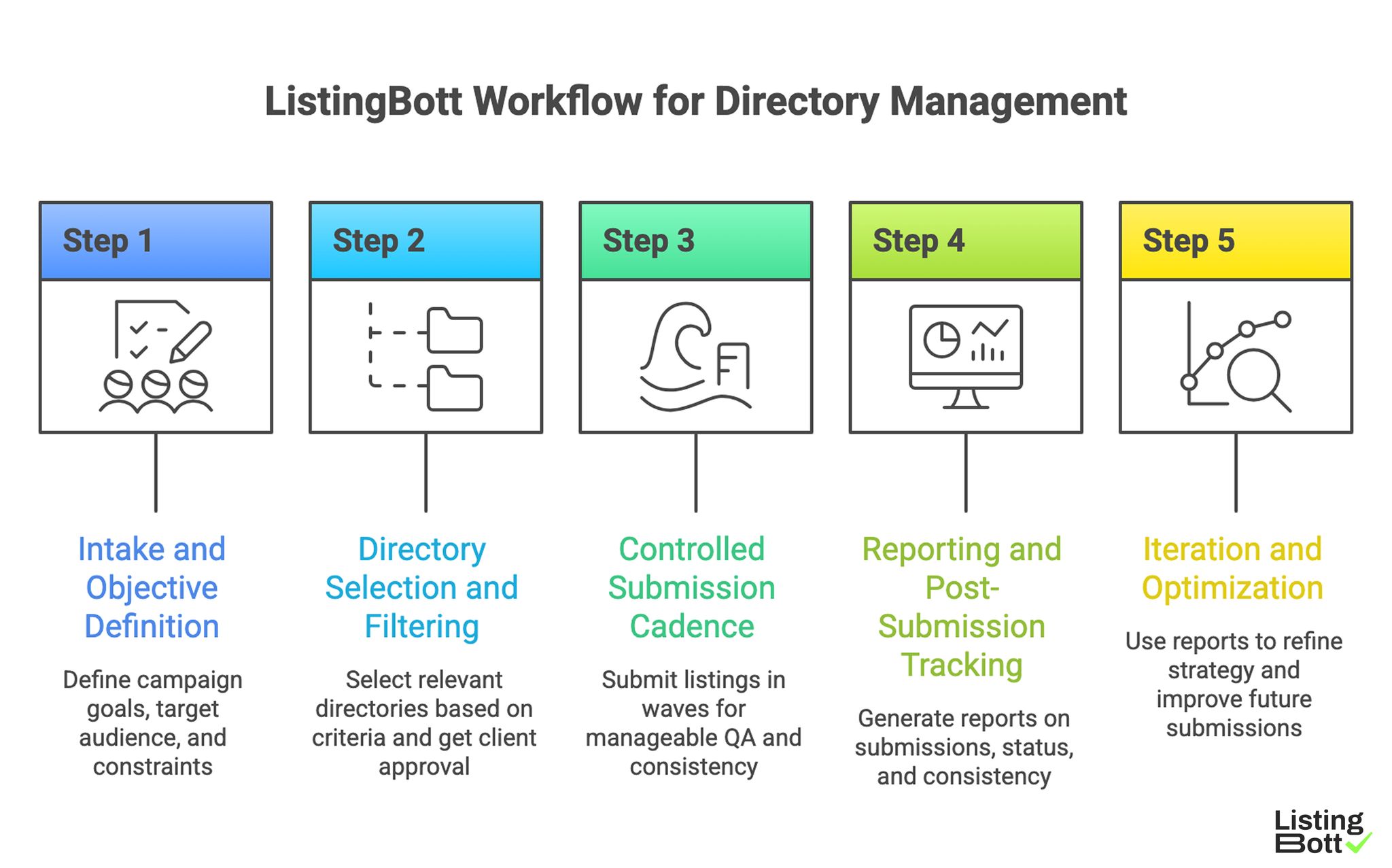

How ListingBott works

ListingBott is designed for teams that want operational consistency without manual submission overhead. The workflow combines automation support with quality control and reporting.

Step 1: Intake and objective definition

Start with a clear objective for the next 30-90 days. For example:

- increase qualified referral visits,

- improve profile presence across key categories,

- tighten listing consistency.

In ListingBott delivery, this stage starts with a complete client form and an explicit campaign goal.

Then define your constraints:

- target audience,

- regions,

- unacceptable directory types,

- messaging guardrails.

Step 2: Directory selection and filtering

Directory selection should apply relevance-first criteria:

- category match,

- audience match,

- editorial quality,

- spam-risk signals,

- support for a complete profile.
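These criteria are easier to apply consistently when each one gets a rating and spam risk acts as a hard filter, not as one more factor to average away. The sketch below assumes a reviewer rates every criterion from 0 to 5. The weights, the `MIN_SCORE` cut-off, and the `MAX_SPAM_RISK` limit are hypothetical values for illustration, not ListingBott's actual scoring model.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical weights for the positive criteria (they sum to 1.0).
WEIGHTS = {
    "category_match": 0.30,
    "audience_match": 0.30,
    "editorial_quality": 0.25,
    "profile_completeness": 0.15,
}
MIN_SCORE = 3.0    # assumed shortlist cut-off on the 0-5 scale
MAX_SPAM_RISK = 2  # assumed: a spam-risk rating above this excludes the directory


@dataclass
class DirectoryRating:
    name: str
    category_match: int        # 0-5 reviewer rating
    audience_match: int        # 0-5
    editorial_quality: int     # 0-5
    profile_completeness: int  # 0-5: how complete a profile the directory supports
    spam_risk: int             # 0-5, higher means riskier


def relevance_score(r: DirectoryRating) -> float:
    """Weighted average of the positive criteria, on a 0-5 scale."""
    return sum(weight * getattr(r, name) for name, weight in WEIGHTS.items())


def shortlist(ratings: List[DirectoryRating]) -> List[str]:
    """Exclude spam-risk directories first, then keep those scoring at least MIN_SCORE, best first."""
    safe = [r for r in ratings if r.spam_risk <= MAX_SPAM_RISK]
    scored = sorted(((relevance_score(r), r.name) for r in safe), reverse=True)
    return [name for score, name in scored if score >= MIN_SCORE]
```

Keeping spam risk out of the weighted average is a deliberate choice: a directory with an excellent category match but strong spam signals should not be able to score its way onto the list.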

This is where low-value options are removed early. ListingBott then shares the selected directory list for approval before full publishing begins.

Step 3: Controlled submission cadence

Instead of publishing everything at once, use controlled submission waves. This keeps QA manageable and makes changes easier to measure.

A controlled cadence also keeps content and messaging consistent. If one listing profile needs adjustment, you can correct it before the next batch goes out.
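Planning waves amounts to splitting the approved, priority-ordered shortlist into fixed-size batches with a gap between them. In this minimal sketch, the wave size and the number of days between waves are assumptions you would tune to your QA capacity.

```python
from datetime import date, timedelta
from typing import List, Tuple


def plan_waves(
    shortlist: List[str], wave_size: int, start: date, days_between: int
) -> List[Tuple[date, List[str]]]:
    """Split an approved, priority-ordered shortlist into dated submission waves."""
    if wave_size < 1:
        raise ValueError("wave_size must be at least 1")
    waves = []
    for i in range(0, len(shortlist), wave_size):
        wave_number = i // wave_size
        wave_date = start + timedelta(days=wave_number * days_between)
        waves.append((wave_date, shortlist[i:i + wave_size]))
    return waves
```

For example, `plan_waves(["a", "b", "c", "d", "e"], 2, date(2025, 1, 6), 7)` returns three waves a week apart, with the last wave containing only `"e"`.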

Step 4: Reporting and post-submission tracking

After submissions, reporting should cover:

- submitted destinations,

- profile status,

- consistency checks,

- monitoring windows.
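A lightweight submission log is enough to produce the four report items above. The sketch assumes a 30-day monitoring window and a small set of status strings (`pending`, `live`, `rejected`, `needs_edit`). Both are placeholders, and the `consistent` flag is expected to come from whatever consistency check the team already runs.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Dict, List

MONITORING_DAYS = 30  # assumed monitoring window after each submission


@dataclass
class SubmissionRecord:
    destination: str
    submitted_on: date
    status: str               # assumed values: "pending", "live", "rejected", "needs_edit"
    consistent: bool = False  # outcome of the team's profile consistency check

    def monitoring_ends(self) -> date:
        return self.submitted_on + timedelta(days=MONITORING_DAYS)


def status_report(records: List[SubmissionRecord], today: date) -> Dict[str, object]:
    """Summarize destinations, status counts, consistency failures, and open monitoring windows."""
    return {
        "submitted": [r.destination for r in records],
        "by_status": dict(Counter(r.status for r in records)),
        "inconsistent_live": [
            r.destination for r in records if r.status == "live" and not r.consistent
        ],
        "still_monitoring": [r.destination for r in records if r.monitoring_ends() > today],
    }
```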

Teams that want to scale this layer usually automate directory submissions so process reliability does not depend on manual tracking across multiple spreadsheets. For clients, the key milestone is a clear “report is ready” handoff with status visibility and next actions.

Step 5: Iteration and optimization

Use the first reporting cycle to identify:

- listings that need profile upgrades,

- channels that deserve priority in future cycles,

- directories that should be de-prioritized.
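These three questions can be answered with a simple bucketing rule over the first cycle's results. This sketch assumes it receives only listings that went live, each with a completeness flag and its attributed referral sessions. The 10-session threshold is a hypothetical default, not a recommended benchmark.

```python
from typing import Dict, List, NamedTuple


class CycleResult(NamedTuple):
    directory: str
    profile_complete: bool  # all canonical fields filled in on the live listing
    referral_sessions: int  # sessions attributed to this listing during the cycle


def next_cycle_action(r: CycleResult, min_sessions: int = 10) -> str:
    """Map one live listing to a follow-up bucket; min_sessions is an assumed threshold."""
    if not r.profile_complete:
        return "upgrade_profile"
    if r.referral_sessions >= min_sessions:
        return "prioritize"
    return "deprioritize"


def plan_next_cycle(results: List[CycleResult]) -> Dict[str, List[str]]:
    """Group directories by the action recommended for the next cycle."""
    plan: Dict[str, List[str]] = {"upgrade_profile": [], "prioritize": [], "deprioritize": []}
    for r in results:
        plan[next_cycle_action(r)].append(r.directory)
    return plan
```

Checking profile completeness before traffic means a listing with a thin profile gets improved before it is written off as low value.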

Iteration turns directory work from one-time activity into a repeatable operating system.

ListingBott Workflow for Directory Management

Proof/results

This playbook avoids inflated promises and focuses on measurable signals. Results vary by niche, offer quality, and site fundamentals, but teams can still run a clear evidence model.

For Domain Rating (DR)-focused campaigns, ListingBott commits to growth to DR 15 only when the starting DR is below 15, the stated goal is domain growth, and the approved directory list is in place. Outside those conditions, expect no blanket DR, ranking, or fixed-date traffic guarantee.

What to measure in the first 60-90 days

| KPI | Why it matters | How to measure | Healthy directional signal |
|---|---|---|---|
| Listing coverage quality | Indicates execution completeness | Track approved, high-quality listings vs. planned listings | Coverage quality improves with each batch |
| Referral sessions from listings | Shows discoverability | Analytics segment for directory referrals | Referral contribution trends up over time |
| Profile consistency score | Reduces trust and confusion issues | Manual QA or checklist audits | Fewer inconsistencies month over month |
| Branded query visibility trend | Indicates assisted awareness impact | Search Console brand query trend | Stable to upward trend |
| Commercial page assist clicks | Measures business relevance | Internal link/CTA event tracking | More visits from listing-related content to bottom-of-funnel (BOFU) pages |
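The first two KPIs reduce to a ratio against the plan and a comparison against the pre-launch baseline. A minimal sketch follows. The input numbers are made-up placeholders, and returning `None` for a zero baseline is one reasonable convention, since a percent change from zero is undefined.

```python
from typing import Optional


def coverage_quality(approved_high_quality: int, planned: int) -> float:
    """Share of planned listings that went live and passed the quality bar."""
    if planned <= 0:
        raise ValueError("planned must be positive")
    return approved_high_quality / planned


def pct_change(baseline: float, current: float) -> Optional[float]:
    """Percent change versus the pre-launch baseline; None when there is no baseline to compare."""
    if baseline == 0:
        return None
    return (current - baseline) / baseline * 100


# Hypothetical inputs for one batch, for illustration only.
print(f"coverage quality: {coverage_quality(18, 25):.0%}")
print(f"referral sessions vs baseline: {pct_change(120, 150):+.1f}%")
```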

What ListingBott contributes operationally

According to its project materials, ListingBott's positioning emphasizes:

- directory submission workflows across 100+ directories,

- quality-oriented execution,

- reporting visibility,

- listing ownership continuity.

Those capabilities support a process where teams can execute faster without losing control of quality decisions.

Evidence discipline

For each submission cycle, keep an evidence pack:

- baseline metrics before launch,

- submitted list with dates,

- profile snapshots,

- post-cycle KPI readout,

- action plan for next cycle.
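Storing each cycle's evidence pack as structured data makes gaps visible before the readout. This sketch assumes one JSON document per cycle. The field names mirror the list above, but the layout, including keeping snapshot references as plain strings, is a hypothetical choice.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Dict, List


@dataclass
class EvidencePack:
    cycle: str
    baseline: Dict[str, float]         # KPI name -> value captured before launch
    submissions: List[Dict[str, str]]  # e.g. {"destination": ..., "submitted_on": "YYYY-MM-DD"}
    snapshots: List[str]               # references to saved profile snapshots
    post_cycle: Dict[str, float]       # KPI name -> value at the end of the cycle
    next_actions: List[str] = field(default_factory=list)

    def missing_parts(self) -> List[str]:
        """List the components of the pack that are still empty."""
        return [name for name, value in asdict(self).items() if not value]

    def to_json(self) -> str:
        return json.dumps(asdict(self), indent=2, sort_keys=True)
```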

That discipline prevents vague reporting and keeps the team focused on outcome improvement.

Implementation checklist

Use this checklist as your operating sequence.

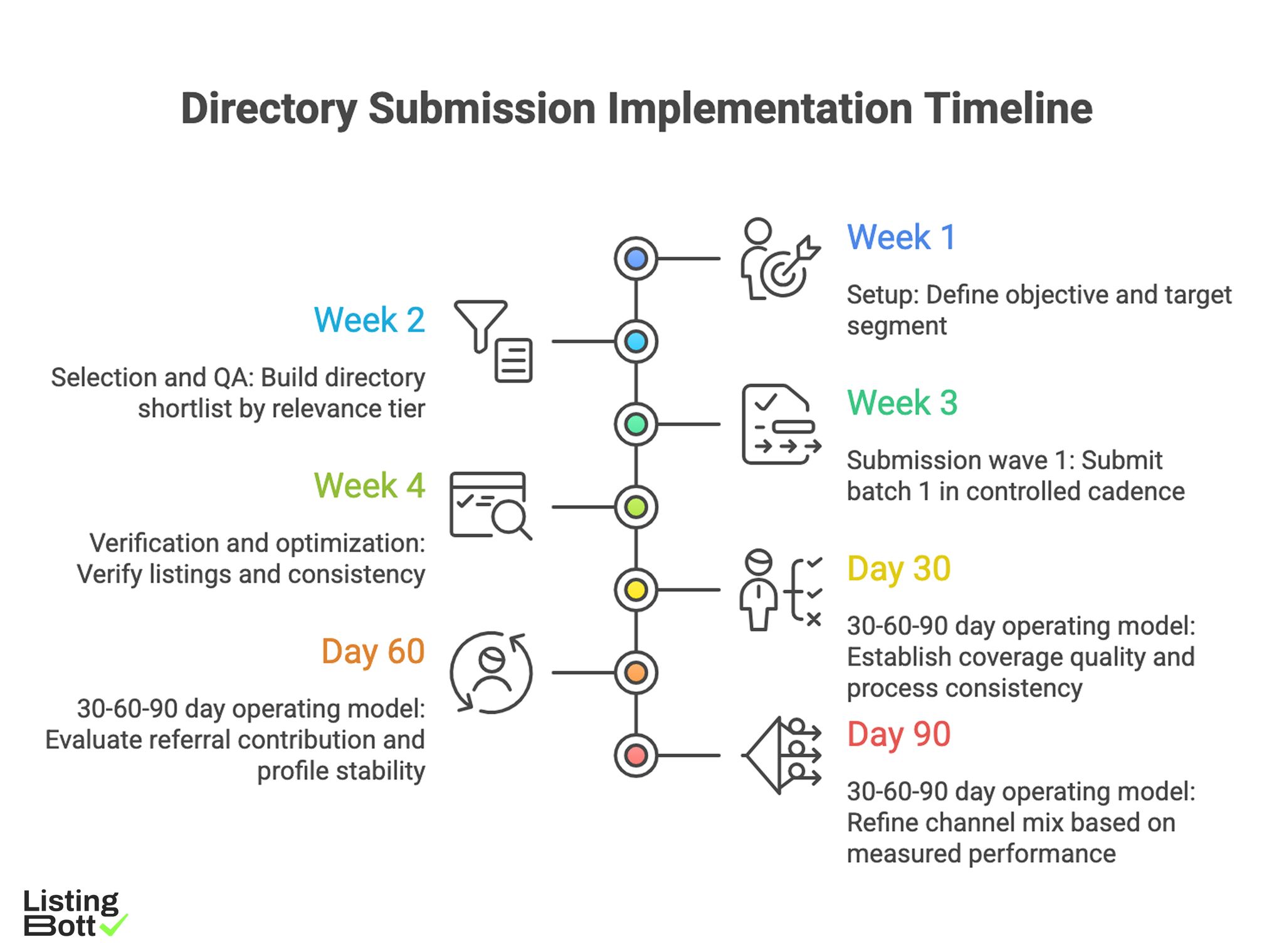

Week 1: setup

- Define objective and target segment.

- Build inclusion/exclusion criteria for directories.

- Prepare canonical profile copy (description, value proposition, key attributes).

- Set baseline metrics in analytics and Search Console.

Week 2: selection and QA

- Build directory shortlist by relevance tier.

- Remove low-quality or irrelevant options.

- Validate profile fields for consistency.

- Approve batch 1 submission set.
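Validating profile fields for consistency is largely a comparison against the canonical copy prepared in week 1. The sketch below assumes both the canonical profile and each listing are available as simple field-to-text mappings. The field names and the whitespace/case normalization are illustrative, and the score is only one possible way to compute the profile consistency score from the KPI table.

```python
import re
from typing import Dict, List


def normalize(value: str) -> str:
    """Lowercase and collapse whitespace so trivial formatting differences are ignored."""
    return re.sub(r"\s+", " ", value).strip().lower()


def consistency_issues(canonical: Dict[str, str], listing: Dict[str, str]) -> List[str]:
    """Return the canonical fields that are missing from, or differ in, one listing profile."""
    issues = []
    for field_name, expected in canonical.items():
        actual = listing.get(field_name)
        if actual is None:
            issues.append(f"{field_name}: missing")
        elif normalize(actual) != normalize(expected):
            issues.append(f"{field_name}: differs")
    return issues


def consistency_score(canonical: Dict[str, str], listings: List[Dict[str, str]]) -> float:
    """Share of listings with no issues (one possible definition of the score)."""
    if not listings:
        raise ValueError("no listings to score")
    clean = sum(1 for listing in listings if not consistency_issues(canonical, listing))
    return clean / len(listings)
```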

Week 3: submission wave 1

- Submit batch 1 in controlled cadence.

- Log destination, date, and status.

- Track profile acceptance and edits needed.

- Publish one internal progress note for stakeholders.

Week 4: verification and optimization

- Verify listings and consistency.

- Compare baseline vs early movement signals.

- Prioritize correction opportunities.

- Finalize batch 2 plan with improved criteria.

30-60-90 day operating model

- Day 30: establish coverage quality and process consistency.

- Day 60: evaluate referral contribution and profile stability.

- Day 90: refine channel mix based on measured performance.

Directory Submission Implementation Timeline

Common mistakes and how to avoid them

Mistake 1: selecting directories by volume claims

Fix: apply relevance and quality criteria first, and consider volume only after that.

Mistake 2: no baseline before submissions

Fix: capture key metrics before any changes to enable clean comparison.

Mistake 3: inconsistent business description across listings

Fix: maintain a single controlled source copy and a checklist for updates.

Mistake 4: treating submissions as a one-off task

Fix: run submissions as cyclical operations with reporting and iteration.

Mistake 5: ignoring conversion path

Fix: include a clear path from informational pages to a product action.

FAQ

1) How many directories should I submit to first?

Start with a smaller, high-relevance set and expand after you verify profile quality and early performance signals.

2) Is directory submission still useful in 2026?

It can be useful when done with quality controls, relevance filtering, and strong tracking. Low-quality mass submission remains a poor strategy.

3) Should I do this manually or with a tool?

Manual work can fit very small scopes. As complexity grows, a structured workflow is usually more reliable for consistency and reporting.

4) What should I track first?

Track listing coverage quality, profile consistency, referral sessions, and assisted traffic toward commercial pages.

5) How long until I see impact?

Early operational signals can appear in weeks, while meaningful trend validation usually needs a 60-90 day window.

6) What if some directories provide little value?

Document them, de-prioritize in future cycles, and reallocate effort toward higher-relevance placements.

Final takeaway

A strong directory strategy is an execution system, not a one-time checklist. If you prioritize quality, run controlled waves, and track outcomes consistently, you build a process that can scale with less risk and clearer business impact.