Quick answer

A business listing service can help startups move faster, but only if the service is matched to stage, budget, and execution discipline. The best choice is not usually the cheapest package or the biggest directory count. It is the option that gives reliable delivery quality, clear reporting, and a practical path to improve results after the first cycle.

For early-stage teams, the smartest buying move is to evaluate three things first: quality controls, cost structure, and operational fit with your internal bandwidth.

When evaluating providers, treat a business listing service as an operating decision, not just a distribution task.


Problem Framing

Startups often buy listing support under time pressure. Launch timelines are short, team capacity is limited, and visibility goals are immediate. That combination makes "fast and cheap" offers look attractive, but it often creates rework and weak outcome clarity.

The usual pattern is familiar:

- a large promise on coverage,

- minimal explanation of selection quality,

- little detail about profile QA,

- reporting that tracks actions but not decisions.

This can still generate activity, but activity is not the same as strategic progress. If you cannot tell what worked, what failed, and what to change next, your listing budget is harder to scale efficiently.

Why startups overpay for listing services

| Buying shortcut | Why it happens | Hidden cost | Better alternative |
|---|---|---|---|
| Choosing by lowest price only | Budget pressure and urgency | More cleanup work, weaker fit, slower learning | Compare total operating cost, not invoice cost only |
| Choosing by maximum directory count | "More must be better" assumption | Low-signal placements dilute effort | Prioritize relevance-first coverage tiers |
| Skipping QA questions in sales process | Team wants quick decision | Inconsistent profile data across destinations | Require explicit QA and correction workflow |
| Treating reporting as optional | Focus on completion, not iteration | No optimization loop in cycle 2 | Demand action-oriented reporting with next steps |

A service that looks low-cost on day one can become expensive after corrections, missed opportunities, and unclear attribution.
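The "total operating cost, not invoice cost only" comparison can be sketched in a few lines. This is a minimal illustration, not a pricing model: the package prices, cleanup hours, and internal hourly rate below are hypothetical placeholders, and the names `ListingOffer` and `total_operating_cost` are invented for this example.

```python
from dataclasses import dataclass


@dataclass
class ListingOffer:
    """One provider offer. All values used below are illustrative assumptions."""
    name: str
    invoice_cost: float            # what the provider charges
    expected_cleanup_hours: float  # internal time spent on corrections and rework


def total_operating_cost(offer: ListingOffer, internal_hourly_rate: float) -> float:
    """Invoice cost plus the internal cost of cleanup work."""
    return offer.invoice_cost + offer.expected_cleanup_hours * internal_hourly_rate


# Hypothetical numbers, chosen only to show how the ranking can flip.
cheap = ListingOffer("low-price package", invoice_cost=100.0, expected_cleanup_hours=12.0)
managed = ListingOffer("managed package", invoice_cost=400.0, expected_cleanup_hours=2.0)

rate = 50.0  # assumed internal cost per hour
for offer in (cheap, managed):
    print(f"{offer.name}: {total_operating_cost(offer, rate):.2f}")
```

With these assumed inputs the package with the lower invoice ends up with the higher total cost, which is the pattern the table describes. Replace the estimates with your own before drawing conclusions.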

Startup stage and cost benchmark model

Costs vary by scope, complexity, and support model. Instead of chasing universal numbers, use stage-based budget ranges and expected complexity.

| Startup stage | Typical listing objective | Service complexity | Monthly operating expectation | What to verify before buying |
|---|---|---|---|---|
| Pre-seed / idea validation | Baseline presence and discoverability support | Low to medium | Lean budget, focused shortlist execution | Clear quality filter and basic status reporting |
| Seed / early traction | Expand qualified coverage with stronger consistency | Medium | Moderate budget with tighter QA needs | Category-fit logic, correction policy, update cadence |
| Post-seed / scaling | Repeatable, multi-wave listing operations | Medium to high | Higher budget tied to process reliability | Wave-based execution, role ownership, decision-ready reporting |
| Multi-product or multi-geo startup | Standardized process across variants/locations | High | Program-level budget with workflow controls | Data governance, escalation model, change management |

Use these benchmarks as planning ranges, then adjust to your real constraints:

- team capacity,

- target markets,

- product maturity,

- expected reporting depth.

Build vs buy framework for a startup team

| Option | Works best when | Main risk | Decision rule |
|---|---|---|---|
| Fully manual in-house | Team has stable operations and low listing scope | Slow execution and inconsistent throughput | Use for small pilot only |
| Hybrid (internal + external support) | Team needs control but lacks full execution bandwidth | Role confusion and handoff gaps | Use with clear ownership map |
| Managed service workflow | Team prioritizes speed with process controls | Vendor dependency if process is opaque | Use when quality and reporting are explicit |

The right model is rarely permanent. Many startups begin hybrid, then move toward structured external execution as scope grows.

Vendor due-diligence checklist (pre-contract)

Ask these before you sign:

- How do you include and exclude directories?

- What are your profile QA steps before publishing?

- How do you handle corrections and exceptions?

- What does the report include beyond completed actions?

- How do you improve cycle 2 based on cycle 1 outcomes?

- Which parts are manual and which are automated?

If answers are vague, the service may not be mature enough for scaling.

How ListingBott works

ListingBott runs a structured workflow focused on execution clarity: client form intake, directory list approval, publish process, and report handoff.

1) Intake and scope alignment

The process starts with a complete client form so scope is defined before execution. This reduces drift and sets realistic expectations on delivery.

2) Directory list preparation and approval

A proposed list is prepared and sent for approval before broad publishing. That approval step keeps quality and fit visible before scale.

3) Controlled publishing and reporting

Publishing is managed with status visibility, then closed with a report that documents progress, pending items, and next recommendations.

4) Offer and promise boundaries

The current offer includes a one-time payment model, publication to 100+ directories (per current website language), and no hidden extra fees (per current FAQ language). A refund can apply if the process has not started.

ListingBott does not promise guaranteed ranking position, guaranteed traffic by a specific date, guaranteed indexing speed, or outcomes controlled by third-party platforms.

For DR-goal projects, DR growth to 15 can be promised only when all qualifying conditions are met: starting DR below 15, explicit domain growth goal, and approved directory list.
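The qualifying conditions above amount to a simple all-or-nothing check. The sketch below only restates that rule in code; the function name and parameter names are hypothetical and do not describe any ListingBott tool or API.

```python
DR_THRESHOLD = 15  # from the text: the starting DR must be below 15


def dr_promise_applies(
    starting_dr: float,
    has_explicit_growth_goal: bool,
    directory_list_approved: bool,
) -> bool:
    """Return True only when all three qualifying conditions hold."""
    return (
        starting_dr < DR_THRESHOLD
        and has_explicit_growth_goal
        and directory_list_approved
    )


print(dr_promise_applies(8, True, True))    # all conditions met
print(dr_promise_applies(15, True, True))   # starting DR is not below 15
print(dr_promise_applies(8, True, False))   # directory list not approved
```

If any single condition fails, the promise does not apply, which is why it helps to confirm all three at kickoff.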

Teams comparing providers can evaluate fit by mapping this model against their own listing management workflow.

ListingBott Workflow

Proof/results

The best way to assess a business listing service is to separate operational quality from outcome quality. Operational quality tells you whether delivery is reliable. Outcome quality tells you whether the work is helping real business goals.

90-day evaluation scorecard

| Dimension | What to measure | Why it matters | Review cadence |
|---|---|---|---|
| Execution reliability | Planned vs completed submissions by wave | Reveals delivery consistency | Weekly during active waves |
| Data quality | Profile error/correction rate | Shows whether QA process is working | Weekly or per wave |
| Coverage relevance | Share of accepted high-fit destinations | Indicates selection quality | Per wave |
| Discovery support | Referral trend from listing sources | Signals visibility support | Monthly |
| Commercial assist | Visits/actions on money pages from listing-related paths | Connects work to business movement | Monthly |

This approach gives startups a grounded view of progress without overinterpreting short-term noise.
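The first three scorecard dimensions are plain ratios, so they can be computed from per-wave counts. The sketch below is one possible way to do that; the field names, the `WaveStats` class, and the sample counts are assumptions made for illustration, not a reporting format any provider is known to use.

```python
from dataclasses import dataclass


@dataclass
class WaveStats:
    """Counts for a single submission wave (hypothetical field names)."""
    planned: int            # submissions planned for the wave
    completed: int          # submissions actually completed
    corrections: int        # completed profiles that later needed a fix
    accepted: int           # placements accepted by destinations
    accepted_high_fit: int  # accepted placements you classified as high-fit


def ratio(numerator: int, denominator: int) -> float:
    """Safe division: returns 0.0 when there is nothing to measure yet."""
    return numerator / denominator if denominator else 0.0


def scorecard(wave: WaveStats) -> dict:
    """Compute the three ratio-based dimensions from the table above."""
    return {
        "execution_reliability": ratio(wave.completed, wave.planned),
        "correction_rate": ratio(wave.corrections, wave.completed),
        "coverage_relevance": ratio(wave.accepted_high_fit, wave.accepted),
    }


# Sample counts are invented for the example.
wave_1 = WaveStats(planned=40, completed=36, corrections=9, accepted=30, accepted_high_fit=24)
for metric, value in scorecard(wave_1).items():
    print(f"{metric}: {value:.0%}")
```

Discovery support and commercial assist depend on your analytics setup, so they are left out here. Tracking the same three ratios wave over wave is usually more informative than any single reading.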

What a useful report should include

A good report should answer five practical questions:

- What was completed in this cycle?

- What is pending and why?

- What quality issues appeared?

- What changed versus baseline?

- What should happen next cycle?

If your report does not answer these clearly, optimization becomes guesswork.
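One lightweight way to apply the five questions is to treat them as required sections and flag any that a report leaves empty. This is a sketch under assumptions: the section keys and the dictionary-shaped report are hypothetical, since real provider reports come in many formats.

```python
# Hypothetical section keys, one per question in the list above.
REQUIRED_SECTIONS = (
    "completed",           # What was completed in this cycle?
    "pending",             # What is pending and why?
    "quality_issues",      # What quality issues appeared?
    "change_vs_baseline",  # What changed versus baseline?
    "next_actions",        # What should happen next cycle?
)


def missing_sections(report: dict) -> list:
    """Return the required sections that are absent or empty."""
    return [key for key in REQUIRED_SECTIONS if not report.get(key)]


# Invented example report: it lists activity but no next steps.
example_report = {
    "completed": ["36 submissions"],
    "pending": ["4 awaiting moderator review"],
    "quality_issues": ["category mismatch on 3 profiles"],
    "change_vs_baseline": "referral visits up versus pre-launch baseline",
    "next_actions": [],
}

print(missing_sections(example_report))
```

A non-empty result is a prompt to go back to the provider before the next cycle starts.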

Cost-efficiency interpretation model

Avoid binary "worth it / not worth it" judgments after one cycle. Instead, evaluate:

- correction burden per batch,

- trend in acceptance quality,

- clarity of decisions from reporting,

- stability of internal workload.

A service can be strategically efficient even before large traffic movement if it reduces operational chaos and improves decision quality.

Risk controls for startup teams

| Risk | Early warning signal | Mitigation |
|---|---|---|
| Scope creep | New requests appear outside agreed flow | Freeze scope by cycle and approve exceptions |
| Profile inconsistency | Repeated edits across destinations | Lock source-of-truth profile assets |
| Weak attribution | Activity visible, outcomes unclear | Set baseline and decision metrics pre-launch |
| Over-promising expectations | Sales language exceeds delivery reality | Use explicit promise boundaries in kickoff |
| Tool/process mismatch | Team cannot maintain handoffs | Choose service model that matches team bandwidth |

Implementation checklist

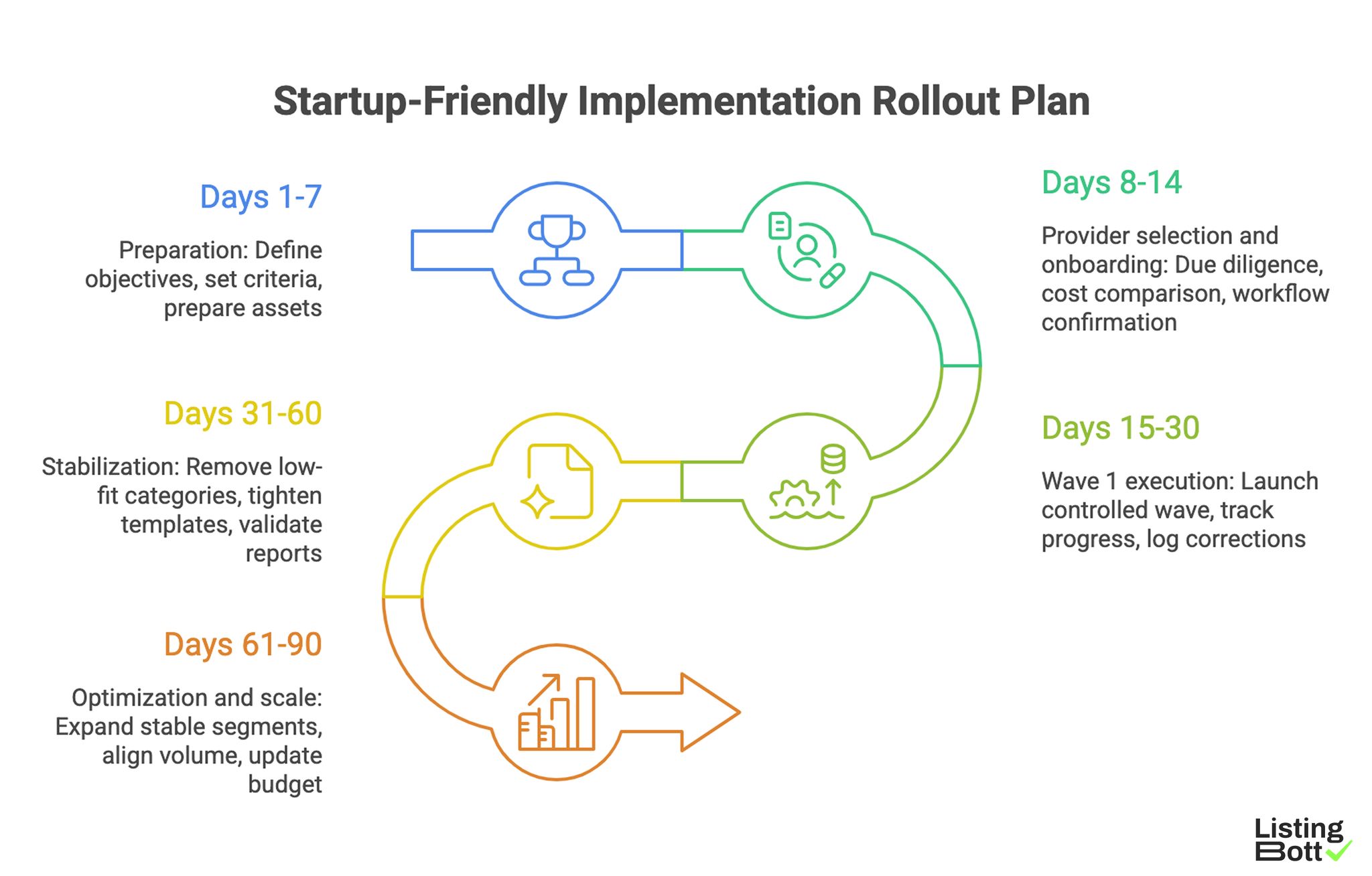

Use this rollout plan if you want startup-friendly execution with measurable control.

Phase 1: Preparation (days 1-7)

- Define the primary listing objective for this cycle.

- Set exclusion criteria for low-fit destinations.

- Prepare source-of-truth profile assets.

- Capture baseline metrics before first submission wave.

- Assign one owner for review and escalation.

Phase 2: Provider selection and onboarding (days 8-14)

- Run the due-diligence checklist across shortlisted providers.

- Compare cost by scope and reporting depth, not by count alone.

- Confirm correction workflow and response times.

- Approve initial destination list.

- Align reporting cadence and decision checkpoints.

Phase 3: Wave 1 execution (days 15-30)

- Launch controlled first wave.

- Track completed/pending/issue states.

- Log correction themes immediately.

- Verify data consistency after first accepted placements.

- Keep weekly status review short and action-focused.

Phase 4: Stabilization (days 31-60)

- Remove low-fit categories from future waves.

- Tighten profile templates where correction rates are high.

- Validate report quality against decision questions.

- Compare cost burn against process quality improvements.

Phase 5: Optimization and scale (days 61-90)

- Expand only segments with stable quality signals.

- Keep submission volume aligned to correction capacity.

- Update budget assumptions using real operational data.

- Build next-quarter plan from measured findings.

Startup-Friendly Implementation Rollout Plan

Frequent startup mistakes and fixes

| Mistake | What it breaks | Practical fix |
|---|---|---|
| Buying the largest package first | Quality control and learning loop | Start with controlled scope and expand by evidence |
| No baseline before launch | Impact interpretation | Set baseline before first publish batch |
| Unclear internal ownership | Slow responses and unresolved blockers | Assign single accountable owner |
| Reporting without action mapping | Repeat errors in next cycle | Require report section for next actions |
| Ignoring operational fit | Team overload and churn risk | Match vendor model to team bandwidth |

FAQ

1) What should startups prioritize first: cost or quality?

Prioritize quality controls and reporting clarity first, then optimize cost within that boundary.

2) How do I know if a business listing service is mature?

A mature service can explain directory selection logic, QA steps, correction handling, and how cycle 2 improves from cycle 1.

3) Is a bigger directory count always better?

No. Relevance and execution quality usually matter more than raw count.

4) When should I switch from manual to managed service?

Switch when manual workflows start creating missed updates, inconsistent data, or delayed learning cycles.

5) Can any provider guarantee ranking results?

No reliable provider should promise guaranteed rankings or fixed-date traffic outcomes.

6) When is a DR growth promise valid?

Only in the qualified case: starting DR below 15, explicit domain growth goal, and approved directory list.

Final takeaway

A startup should treat listing services as an operating system decision. Buy for process quality, decision-ready reporting, and scalable execution discipline. Those factors are what usually make cost benchmarks sustainable over time.