Quick answer

Bulk directory submission can work for multi-product launch calendars, but only when execution is organized into controlled waves with strict QA gates. Without that structure, scale usually increases error rates faster than it increases useful coverage.

The practical model is portfolio-first: segment launch assets, define wave priorities, validate quality before each wave, and monitor post-submission performance with explicit next actions.

For teams planning bulk directory submission, the key objective is predictable rollout quality across products, not maximum one-time volume.


Problem framing

Single-product submission workflows do not automatically scale to multi-launch operations. As soon as teams manage multiple products, categories, and timelines, small process gaps become expensive.

Frequent bulk-launch failure patterns:

- one shared profile template applied to mismatched product variants,

- no launch-tier prioritization,

- wave schedules that ignore correction capacity,

- no freeze windows before high-visibility launch dates,

- no post-wave monitoring standard.

The result is usually avoidable rework and lower confidence in launch execution.

Why multi-launch submissions break

| Root cause | Operational symptom | Business impact |
|---|---|---|
| Portfolio complexity underestimated | Product-specific requirements missed | Inconsistent launch quality |
| No wave governance | Too many submissions in one batch | Slow correction and delayed stabilization |
| Weak pre-wave QA | Errors discovered after publishing | Higher cleanup costs |
| No launch calendar integration | Submissions out of sync with launch timing | Reduced launch support value |
| No post-wave decision loop | Same mistakes repeated in next wave | Low compounding return |

Bulk submission should be designed as launch operations, not just submission activity.

Portfolio segmentation model

| Segment dimension | Example segments | Why segmentation matters |
|---|---|---|
| Product stage | MVP launch, growth launch, relaunch | Risk tolerance and priority differ by stage |
| Geography | Core markets, expansion markets | Directory relevance and timing vary by market |
| Category fit | Broad category, niche category | Changes shortlist composition |
| Launch criticality | Flagship launch, supporting launch | Determines QA depth and escalation speed |

Segmentation creates the foundation for predictable wave planning.
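As an illustration of the segmentation table, the sketch below groups launch assets by one dimension at a time. The `LaunchAsset` fields, the segment labels, and the product names are hypothetical and only mirror the example segments above; a real portfolio would use its own schema.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class LaunchAsset:
    # Field names and values are illustrative assumptions, not a required schema.
    name: str
    stage: str         # e.g. "mvp", "growth", "relaunch"
    market: str        # e.g. "core", "expansion"
    category_fit: str  # e.g. "broad", "niche"
    criticality: str   # e.g. "flagship", "supporting"


def segment_assets(assets, dimension):
    """Group asset names by one segment dimension (an attribute name)."""
    segments = defaultdict(list)
    for asset in assets:
        segments[getattr(asset, dimension)].append(asset.name)
    return dict(segments)


assets = [
    LaunchAsset("Product A", "mvp", "core", "niche", "flagship"),
    LaunchAsset("Product B", "growth", "expansion", "broad", "supporting"),
    LaunchAsset("Product C", "relaunch", "core", "broad", "supporting"),
]

by_criticality = segment_assets(assets, "criticality")
by_market = segment_assets(assets, "market")
print(by_criticality)
print(by_market)
```

Grouping by criticality first is one reasonable starting point, since that dimension determines QA depth and escalation speed.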

Wave design framework for bulk programs

| Wave type | Typical size | Primary objective | QA intensity |
|---|---|---|---|
| Wave 1 (pilot/stability) | Small | Validate templates and process | High |
| Wave 2 (controlled scale) | Medium | Expand with quality controls | High to medium |
| Wave 3+ (repeatable scale) | Medium to large | Optimize throughput with stable controls | Medium |

Skipping Wave 1 controls is the most common source of high-cost bulk errors.
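One way to make the wave sizes concrete is to cap each wave explicitly. The following is a minimal sketch: the `plan_waves` helper and the caps of 2, 4, and 5 are assumptions chosen for illustration, since the framework above only says small, medium, and medium to large.

```python
def plan_waves(submissions, wave_caps):
    """Split an ordered list of submissions into waves of capped size.

    Items left over after the last cap go into extra waves that reuse
    the final cap, so no wave ever exceeds its limit.
    """
    if not wave_caps or any(cap <= 0 for cap in wave_caps):
        raise ValueError("wave_caps must be a non-empty list of positive integers")
    waves = []
    start = 0
    index = 0
    while start < len(submissions):
        cap = wave_caps[min(index, len(wave_caps) - 1)]
        waves.append(submissions[start:start + cap])
        start += cap
        index += 1
    return waves


# Hypothetical backlog of 12 prioritized submissions.
submissions = [f"listing-{n}" for n in range(1, 13)]
waves = plan_waves(submissions, wave_caps=[2, 4, 5])
print([len(wave) for wave in waves])  # [2, 4, 5, 1]
```

The input order matters here: the list is assumed to be sorted by launch priority already, so the pilot wave contains the items the team most wants to validate first.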

Launch-readiness checklist before each wave

| Checkpoint | Pass condition |
|---|---|
| Product data integrity | Core fields validated for each launch asset |
| Category mapping accuracy | Category choices fit product intent |
| Template variance controls | Variant rules documented and approved |
| Correction capacity | Team can handle forecasted exceptions |
| Reporting readiness | Status and issue logs prepared before launch |

A no-go decision should be automatic if multiple checkpoints fail.
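The checklist and the no-go rule can be sketched as a small decision function. This is an illustration under stated assumptions: "multiple" is read as two or more failures, a single failure is routed to a manual hold (the checklist does not say what should happen in that case), and a checkpoint with no recorded result counts as failed.

```python
# Checkpoint keys mirror the checklist rows; the identifiers are hypothetical.
CHECKPOINTS = (
    "product_data_integrity",
    "category_mapping_accuracy",
    "template_variance_controls",
    "correction_capacity",
    "reporting_readiness",
)


def wave_decision(results, no_go_threshold=2):
    """Return (decision, failed_checkpoints) for one wave.

    A checkpoint missing from `results` is treated as failed.
    """
    failed = [name for name in CHECKPOINTS if not results.get(name, False)]
    if len(failed) >= no_go_threshold:
        decision = "no-go"
    elif failed:
        decision = "hold-for-review"  # assumption: one failure needs a human call
    else:
        decision = "go"
    return decision, failed


results = {name: True for name in CHECKPOINTS}
results["category_mapping_accuracy"] = False
results["correction_capacity"] = False
print(wave_decision(results))
```

Returning the failed checkpoints alongside the decision keeps the result actionable, because the team sees what to fix before the wave is re-evaluated.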

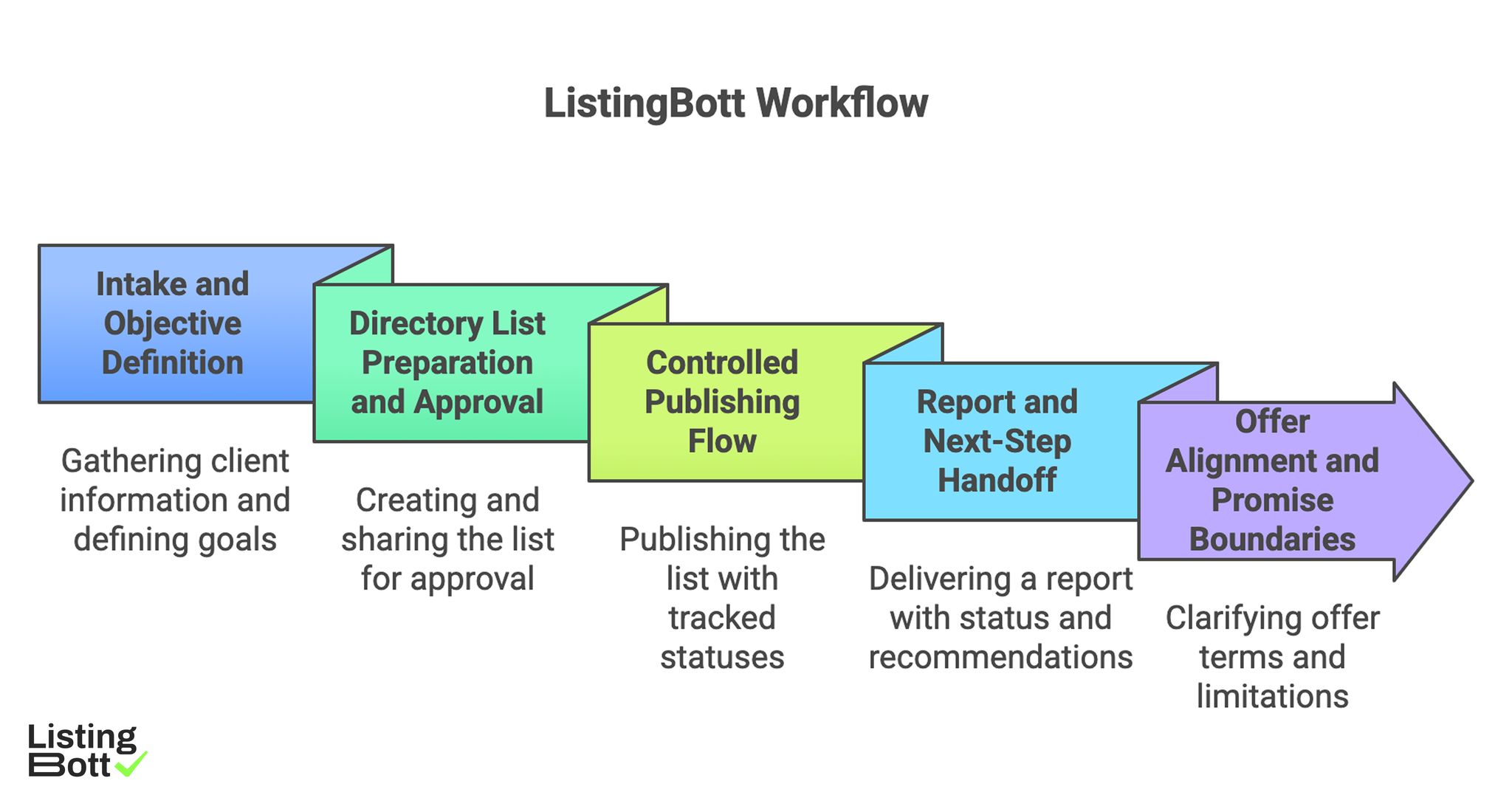

How ListingBott works

ListingBott runs a structured workflow built around intake, directory list approval, publishing, and report handoff. This keeps scope, status, and follow-up clear across cycles.

1) Intake and objective definition

Execution starts with complete client-form intake so launch scope and goals are defined upfront.

2) Directory list preparation and approval

A proposed list is prepared and shared for approval before full publishing, helping align quality expectations before scale.

3) Controlled publishing flow

Publishing is handled with tracked statuses to support wave-level visibility and issue management.

4) Report and next-step handoff

Delivery includes a report with submitted status, pending items, and next recommendations.

5) Offer alignment and promise boundaries

The current offer framing includes a one-time payment model, publication to 100+ directories (per current website language), no hidden extra fees (per current FAQ language), and the possibility of a refund if the process has not started.

ListingBott does not promise guaranteed ranking position, guaranteed traffic by a specific date, guaranteed indexing speed, or outcomes controlled by third-party platforms.

For DR-goal projects, the DR growth target of 15 applies only in the qualified setup: a starting DR below 15, an explicit domain growth goal, and an approved directory list.

For teams coordinating large launch calendars, this model supports automated submissions at scale with clearer stage controls.


Proof/results

Bulk programs should be judged by reliability and repeatability before aggressive expansion. Measurable stability in early waves usually predicts better outcomes in later waves.

Multi-launch bulk KPI stack

| KPI layer | Example metrics | Review cadence | Why it matters |
|---|---|---|---|
| Readiness quality | Pre-wave checklist pass rate | Per wave start | Prevents avoidable launch errors |
| Execution quality | Wave completion reliability, error rate | Weekly during active waves | Tracks process stability |
| Correction quality | Issue closure time, recurrence rate | Weekly | Tracks cleanup efficiency |
| Reporting quality | Recommendation adoption rate | Per cycle | Tracks decision loop health |
| Outcome support | Referral trend, assisted actions by launch cohort | Monthly | Tracks business support direction |

This structure gives teams a practical way to compare waves and improve systematically.
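A few of these metrics can be computed from a simple issue log. The sketch below is illustrative only: the record fields, the sample issues, and the metric definitions (error rate as logged issues per submission, recurrence rate as the share of issues whose category was already seen in an earlier wave) are assumptions, not definitions taken from the KPI stack itself.

```python
from statistics import mean


def wave_kpis(submitted, issues, prior_categories):
    """Compute execution and correction KPIs for one wave.

    issues: list of dicts with "category" and "days_to_close"
            (None while the issue is still open).
    prior_categories: issue categories seen in earlier waves.
    """
    closed = [i["days_to_close"] for i in issues if i["days_to_close"] is not None]
    recurring = [i for i in issues if i["category"] in prior_categories]
    return {
        "error_rate": round(len(issues) / submitted, 3) if submitted else 0.0,
        "mean_days_to_close": round(mean(closed), 2) if closed else None,
        "recurrence_rate": round(len(recurring) / len(issues), 3) if issues else 0.0,
    }


# Hypothetical issue log for a wave of 40 submissions.
issues = [
    {"category": "wrong_category", "days_to_close": 2},
    {"category": "broken_url", "days_to_close": 4},
    {"category": "wrong_category", "days_to_close": None},  # still open
    {"category": "logo_mismatch", "days_to_close": 3},
]
kpis = wave_kpis(submitted=40, issues=issues, prior_categories={"wrong_category"})
print(kpis)
```

Keeping the definitions fixed from wave to wave matters more than the exact formulas, since the point of the stack is comparison across waves.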

Launch calendar alignment model

Bulk submissions should map to launch calendar phases:

- pre-launch preparation window,

- launch support window,

- post-launch stabilization window.

Each phase should have explicit tasks and owners. When these phases are mixed, quality and timing both degrade.
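The three windows above can be expressed as date ranges around a launch date. In this sketch the window lengths (14 days of preparation, 7 days of launch support, 14 days of stabilization) and the `launch_phase` helper are assumptions for illustration; actual lengths depend on the launch calendar.

```python
from datetime import date, timedelta


def launch_phase(day, launch_date, prep_days=14, support_days=7, stabilization_days=14):
    """Return the launch calendar phase that `day` falls into."""
    prep_start = launch_date - timedelta(days=prep_days)
    support_end = launch_date + timedelta(days=support_days)
    stabilization_end = support_end + timedelta(days=stabilization_days)

    if prep_start <= day < launch_date:
        return "pre-launch preparation"
    if launch_date <= day < support_end:
        return "launch support"
    if support_end <= day < stabilization_end:
        return "post-launch stabilization"
    return "outside launch windows"


launch = date(2025, 3, 10)  # hypothetical launch date
for day in (date(2025, 3, 1), date(2025, 3, 10), date(2025, 3, 17), date(2025, 4, 1)):
    print(day.isoformat(), "->", launch_phase(day, launch))
```

Because the ranges are half-open and contiguous, every date maps to exactly one phase, which avoids the mixed-phase situation described above.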

Post-wave review template

After each wave, teams should document:

- what was delivered,

- what failed and why,

- which issues repeated,

- what process changes are required,

- what is approved for the next wave.

This template creates compounding process gains across launches.
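The review items listed above map naturally onto a structured record. The following is a minimal sketch; the `PostWaveReview` class, its field names, and the sample entries are hypothetical, and teams may just as well keep the same fields in a spreadsheet or document.

```python
from dataclasses import dataclass, field


@dataclass
class PostWaveReview:
    # One field per review item; names are illustrative.
    wave: str
    delivered: list = field(default_factory=list)
    failures: dict = field(default_factory=dict)  # failure -> root cause ("" if unknown)
    repeated_issues: list = field(default_factory=list)
    process_changes: list = field(default_factory=list)
    approved_for_next_wave: list = field(default_factory=list)

    def missing_root_causes(self):
        """Return failures that were logged without a root cause."""
        return [failure for failure, cause in self.failures.items() if not cause]


review = PostWaveReview(
    wave="wave-1",
    delivered=["12 listings submitted"],
    failures={
        "wrong category on 3 listings": "template not segmented by product",
        "logo rejected": "",  # the "why" has not been documented yet
    },
)
print(review.missing_root_causes())  # ['logo rejected']
```

Checking for missing root causes before sign-off enforces the "what failed and why" item rather than leaving it optional.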

Scale gates for safe expansion

| Scale gate | Minimum requirement before larger wave |
|---|---|
| Readiness gate | High checklist pass consistency |
| Error gate | Low critical error volatility |
| SLA gate | Stable issue closure within target window |
| Reporting gate | Clear owner-based next actions in prior cycle |
| Capacity gate | Team bandwidth confirmed for next wave size |

If any gate fails, do not increase the size of the next wave.
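The gate rule can be sketched as a function that proposes the next wave size. This is one interpretation under stated assumptions: a failed gate holds the wave at its current size, and the 1.5x growth factor and optional `max_size` cap are illustrative values, not recommendations from the table.

```python
GATES = ("readiness", "error", "sla", "reporting", "capacity")


def next_wave_size(current_size, gate_results, growth_factor=1.5, max_size=None):
    """Return (proposed_size, failed_gates) for the next wave.

    A gate missing from `gate_results` is treated as failed.
    """
    failed = [gate for gate in GATES if not gate_results.get(gate, False)]
    if failed:
        return current_size, failed  # hold size until every gate passes
    proposed = int(current_size * growth_factor)
    if max_size is not None:
        proposed = min(proposed, max_size)
    return proposed, failed


all_pass = {gate: True for gate in GATES}
print(next_wave_size(20, all_pass))                  # (30, [])
print(next_wave_size(20, {**all_pass, "sla": False}))  # (20, ['sla'])
```

A stricter variant could shrink the wave when a gate fails; holding the size constant is simply the least aggressive reading of the rule.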

Risk controls for multi-product launch portfolios

| Risk | Early signal | Control action |
|---|---|---|
| Template misuse | Product-specific mismatches increase | Tighten template-variance rules |
| Batch overload | Correction queue grows quickly | Reduce wave size and sequence submissions |
| Timing misalignment | Submissions miss launch windows | Integrate launch calendar checkpoints |
| Recurring errors | Same issue categories reappear | Run a root-cause review before the next wave |
| Reporting drift | Action ownership is unclear | Require an owner and due date for every recommendation |

These controls help scale throughput without sacrificing quality.

Expectation discipline for bulk programs

Bulk directory programs can improve operational consistency and support visibility trends, but they should not be framed as guaranteeing ranking outcomes by fixed dates. Reliable programs focus on repeatable quality and directional movement over cycles.

This expectation model reduces unnecessary churn and keeps planning realistic.

Implementation checklist

Use this checklist to run multi-launch bulk directory submission with quality protection.

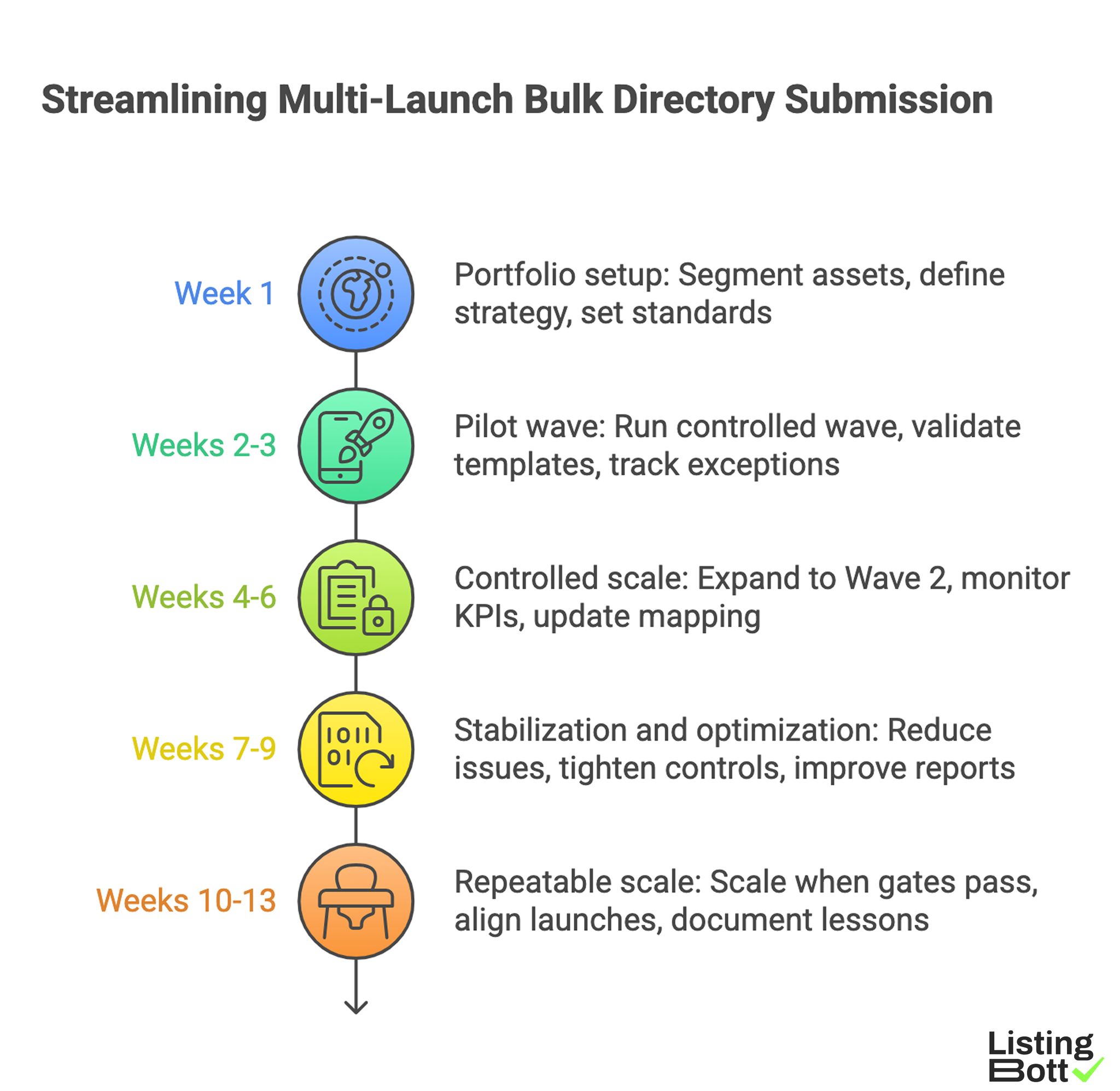

Phase 1: Portfolio setup (week 1)

- Segment launch assets by product, market, and criticality.

- Define wave strategy and preliminary sequence.

- Set checklist standards and pass thresholds.

- Assign owners for QA, execution, and reporting.

- Align submission windows with launch calendar.

Phase 2: Pilot wave (weeks 2-3)

- Run a small, controlled Wave 1.

- Validate template accuracy by segment.

- Track exceptions and issue root causes.

- Confirm correction SLA feasibility.

- Approve adjustments before scaling.

Phase 3: Controlled scale (weeks 4-6)

- Expand to Wave 2 with capped volume.

- Maintain pre-wave checklist enforcement.

- Monitor execution and correction KPIs weekly.

- Update launch support mapping by cohort.

- Keep no-go criteria active.

Phase 4: Stabilization and optimization (weeks 7-9)

- Reduce recurring issue categories.

- Tighten variant and category controls.

- Improve report action clarity.

- Adjust wave sizes to actual capacity.

- Prepare the scale recommendation for the next phase.

Phase 5: Repeatable scale (weeks 10-13)

- Scale only when all gates pass.

- Keep phase-based launch alignment mandatory.

- Run post-wave review template every cycle.

- Document lessons into SOP updates.

- Publish a quarter-level rollout plan.

Weekly bulk-operations checklist

- Verify upcoming wave readiness pass status.

- Review open critical issues and SLA breaches.

- Confirm owner accountability on recommendations.

- Check launch calendar alignment for pending tasks.

- Approve or hold the next wave based on gates.


Common mistakes and fixes

| Mistake | Effect | Fix |
|---|---|---|
| One-size-fits-all template | Product mismatch errors | Use segment-specific template controls |
| Oversized first wave | Heavy correction backlog | Start with pilot wave and scale gradually |
| Launch timing ignored | Reduced launch support impact | Add launch-calendar gate to workflow |
| Reports without actions | Slow learning between waves | Require action-owner format |
| Scaling while unstable | Error amplification | Enforce multi-gate scale policy |

FAQ

1) What is the biggest risk in bulk directory submissions?

Scaling volume before process stability is validated.

2) How large should the first bulk wave be?

Small enough to validate templates, QA checks, and correction flow before expansion.

3) Should all products use the same listing template?

No. Use controlled template variants based on product and category differences.

4) How do we know when to scale bulk submissions?

Scale only when readiness, error, SLA, reporting, and capacity gates consistently pass.

5) Can bulk submissions guarantee ranking outcomes?

No. Responsible programs avoid fixed-date ranking guarantees and focus on execution reliability plus directional trends.

6) Why is post-wave review mandatory?

It turns each launch wave into a learning loop and reduces repeated errors in future cycles.

Final takeaway

Bulk directory submission works for multi-product launches when execution is managed as staged operations with strict QA and scale gates. Teams that enforce these controls usually achieve more reliable rollout quality and better long-term decision confidence.