Quick answer

The best SEO tool setup for agencies handling directory submissions is usually not a single platform. It is a stack: one layer for client intake and data standards, one layer for submission execution, one layer for status tracking and QA, and one layer for reporting.

Agencies that underperform in this area typically choose tools by feature lists alone. Agencies that scale successfully choose tools by delivery reliability, correction speed, and margin protection.

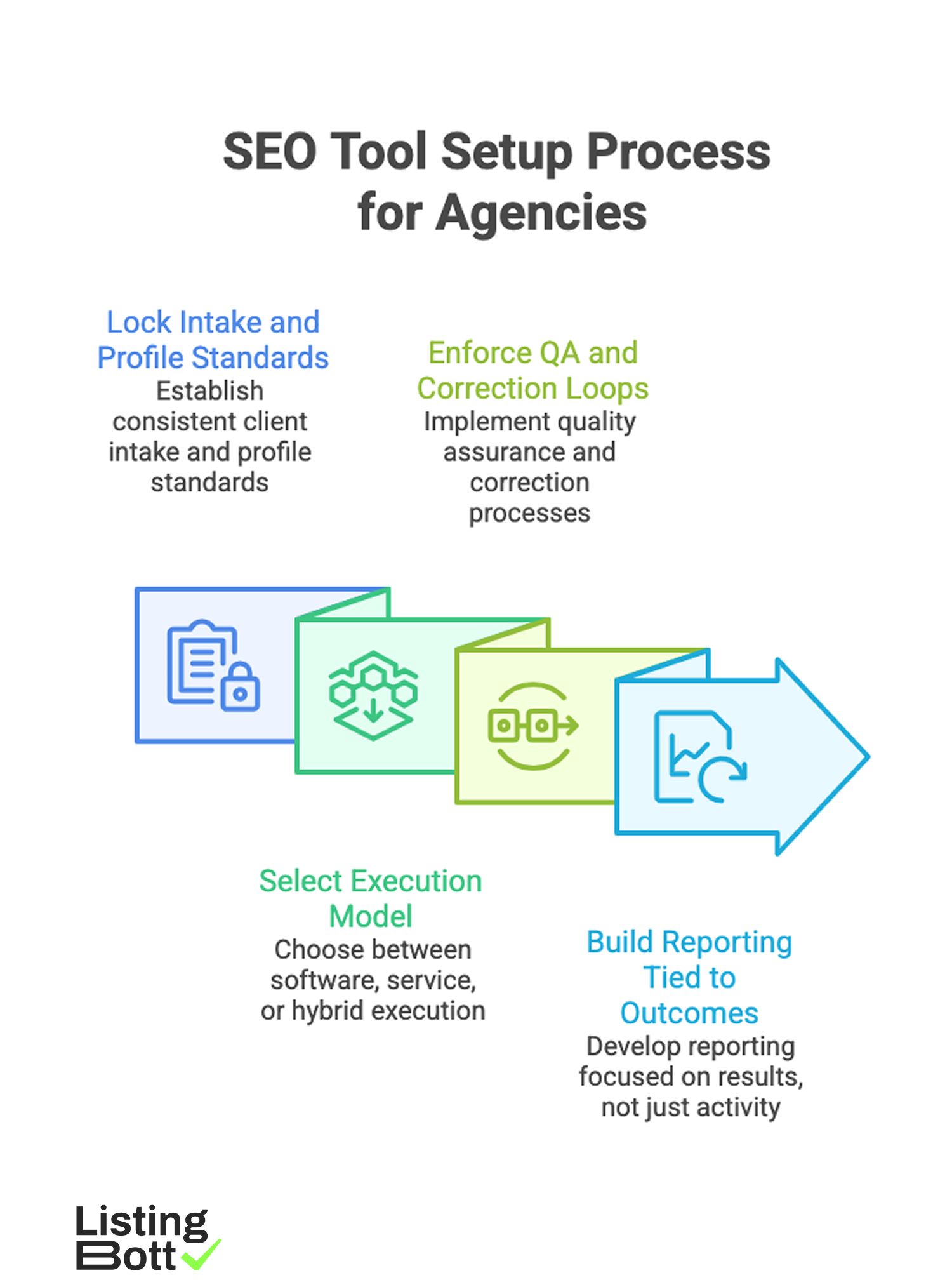

A practical order is:

SEO Tool Setup Process for Agencies

- lock intake and profile standards,

- select execution model (software, service, or hybrid),

- enforce QA and correction loops,

- build reporting tied to outcomes, not just activity counts.

This sequence reduces operational drift across client accounts and helps teams avoid expensive rework. A practical first step is to align the team around a standard directory publishing workflow before expanding account volume.


Methodology

This guide uses an agency-operations framework rather than a generic "top tools" roundup.

The MARGIN framework for tool selection

| Factor | Weight | Why agencies should care |
|---|---|---|
| Method fit | 20 | Tool must match your actual delivery model (retainer, project, hybrid) |
| Accountability | 20 | Clear ownership for submissions, corrections, and client updates |
| Repeatability | 20 | Same process quality across many client accounts |
| Governance | 15 | Access control, approval flow, and change history for teams |
| Insight quality | 15 | Reporting should connect actions to business outcomes |
| Net economics | 10 | Total margin impact after labor, fixes, and management overhead |

How to use MARGIN in 30 minutes

- Score each candidate tool or approach from 1 to 5 on every factor.

- Multiply each score by the factor's weight and sum the results; divide the sum by 5 to express the weighted total out of 100.

- Run the model for two scenarios: current load and projected 2x client load.

- Reject any option that only works at current load.
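The four steps above reduce to a few lines of arithmetic. The sketch below is a minimal Python version, assuming integer scores of 1-5 and a normalization step (dividing the weighted sum by 5) so that a perfect score equals 100. The 2x-load re-scores and the `min_total` cut-off of 70 are hypothetical; the guide does not define a pass mark, so each agency would set its own.

```python
# MARGIN factor weights from the table above; they sum to 100.
WEIGHTS = {
    "method_fit": 20,
    "accountability": 20,
    "repeatability": 20,
    "governance": 15,
    "insight_quality": 15,
    "net_economics": 10,
}


def weighted_total(scores: dict[str, int]) -> float:
    """Turn 1-5 factor scores into a weighted total out of 100."""
    missing = WEIGHTS.keys() - scores.keys()
    if missing:
        raise ValueError(f"missing factors: {sorted(missing)}")
    for factor, score in scores.items():
        if factor not in WEIGHTS:
            raise ValueError(f"unknown factor: {factor}")
        if not 1 <= score <= 5:
            raise ValueError(f"{factor} must be scored 1-5, got {score}")
    # A perfect raw total is 5 * 100 = 500, so divide by 5 to scale to /100.
    return sum(WEIGHTS[f] * s for f, s in scores.items()) / 5


def viable_options(current: dict, doubled: dict, min_total: float) -> list[str]:
    """Keep options that clear min_total at current AND projected 2x load."""
    return [
        name
        for name, scores in current.items()
        if weighted_total(scores) >= min_total
        and weighted_total(doubled[name]) >= min_total
    ]


# Current-load scores mirror Options A and C in the example scorecard below.
current_load = {
    "Option A": {"method_fit": 5, "accountability": 4, "repeatability": 4,
                 "governance": 4, "insight_quality": 3, "net_economics": 4},
    "Option C": {"method_fit": 4, "accountability": 5, "repeatability": 5,
                 "governance": 4, "insight_quality": 4, "net_economics": 4},
}
# Hypothetical re-scores for the projected 2x client load scenario.
double_load = {
    "Option A": {**current_load["Option A"], "accountability": 2, "repeatability": 2},
    "Option C": {**current_load["Option C"], "repeatability": 4},
}

print(viable_options(current_load, double_load, min_total=70))  # ['Option C']
```

Option A clears the bar at current load but drops to 65 under the hypothetical 2x re-score, so the last step rejects it.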

Tool archetypes agencies usually compare

| Archetype | Core purpose | Typical upside | Typical downside |
|---|---|---|---|
| All-in-one local SEO platform | Broad listing and local optimization workflows | Centralized interface and broad feature set | Higher cost and occasional over-complexity for simple use cases |
| Niche directory submission software | Focused directory operations | Strong operational control for submission workflows | Requires agency-side process ownership and QA discipline |
| Managed submission service | Outsourced execution and reporting | Faster throughput with lower internal labor | Quality depends heavily on provider process transparency |
| Hybrid stack (software + service) | Balance of control and execution speed | Flexible scaling for mixed client needs | Requires clear handoff and accountability design |
| Manual spreadsheet workflow | Temporary low-cost process | Minimal upfront spend | Poor scalability, low auditability, higher error risk |

Agency-specific exclusion rules

Exclude options that:

- have unclear correction ownership,

- cannot support standardized client intake fields,

- cannot produce usable account-level status reporting,

- rely on unstructured manual steps with no QA checkpoints,

- increase account manager time without measurable outcome improvement.
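The exclusion rules work as a hard filter that runs before any weighted scoring. A minimal sketch, assuming each rule can be recorded as a yes/no answer (plus a change in account manager hours) during evaluation; the `Candidate` fields and example values are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    """Hypothetical evaluation record for one tool, service, or stack."""
    name: str
    has_correction_owner: bool
    supports_standard_intake: bool
    has_account_status_reporting: bool
    has_qa_checkpoints: bool
    am_hours_delta: float    # change in monthly account manager hours
    outcome_improved: bool   # measurable outcome gain seen in a pilot


def exclusion_reasons(c: Candidate) -> list[str]:
    """List every exclusion rule the candidate trips; empty means keep it."""
    reasons = []
    if not c.has_correction_owner:
        reasons.append("unclear correction ownership")
    if not c.supports_standard_intake:
        reasons.append("no standardized client intake fields")
    if not c.has_account_status_reporting:
        reasons.append("no usable account-level status reporting")
    if not c.has_qa_checkpoints:
        reasons.append("manual steps without QA checkpoints")
    if c.am_hours_delta > 0 and not c.outcome_improved:
        reasons.append("more account manager time without outcome gain")
    return reasons


spreadsheet = Candidate(
    name="Manual spreadsheet workflow",
    has_correction_owner=False,
    supports_standard_intake=True,
    has_account_status_reporting=False,
    has_qa_checkpoints=False,
    am_hours_delta=6.0,
    outcome_improved=False,
)
for reason in exclusion_reasons(spreadsheet):
    print(f"{spreadsheet.name}: {reason}")
```

Returning every tripped rule, rather than stopping at the first, gives the evaluation notes a complete record of why an option was dropped.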

90-day adoption model for agency teams

| Phase | Window | Primary objective | Pass condition |
|---|---|---|---|
| Foundation | Days 1-15 | Create intake template, data standards, and approval rules | Canonical client profile template approved |
| Pilot | Days 16-40 | Run 3-5 accounts through full submission cycle | Error/rework rate below internal threshold |
| Standardize | Days 41-65 | Convert pilot learnings into SOP and team checklist | Team can execute with low variance |
| Scale | Days 66-90 | Expand to broader account set with weekly QA cadence | Quality holds while throughput increases |

This model helps agencies avoid a common trap: scaling tooling before process maturity.
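To enforce "process maturity before scaling" in a project tracker, the phase windows can be paired with explicit pass gates. A minimal sketch, assuming the team records which phases have met their pass condition; the function names are hypothetical.

```python
# Phase windows from the 90-day adoption table: (name, first day, last day).
PHASES = [
    ("Foundation", 1, 15),
    ("Pilot", 16, 40),
    ("Standardize", 41, 65),
    ("Scale", 66, 90),
]


def planned_phase(day: int) -> str:
    """Return the phase the calendar says a team should be in."""
    for name, first, last in PHASES:
        if first <= day <= last:
            return name
    raise ValueError(f"day {day} is outside the 90-day window")


def allowed_phase(day: int, passed: set[str]) -> str:
    """Return the phase a team may actually work in on a given day.

    Walks the phases in order and stops at the first one whose pass
    condition has not been met, so a missed gate holds the team back
    even when the calendar has moved on.
    """
    planned = planned_phase(day)
    for name, _, _ in PHASES:
        if name == planned or name not in passed:
            return name
    return planned  # not reached: planned is always one of PHASES


# Day 50, but the pilot error/rework rate has not yet dropped below threshold.
print(allowed_phase(50, passed={"Foundation"}))  # Pilot
```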

Margin control worksheet (agency view)

| Cost/impact line | Software-led | Service-led | Hybrid |
|---|---|---|---|
| Tool/vendor direct cost | Medium | Medium | Medium |
| Internal operations hours | High | Low | Medium |
| Account manager coordination time | Medium | Low to Medium | Medium |
| Correction and rework burden | Medium | Medium (provider dependent) | Low to Medium |
| Time-to-launch for new client | Medium | Fast | Medium |
| Gross margin predictability | Medium | Medium to High | High (if governed well) |

Agencies should treat this as a live operating sheet, not a one-time procurement exercise.
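One way to turn the High/Medium/Low labels into a live sheet is to recompute gross margin per stack model from monthly actuals. A minimal sketch, assuming all internal labor is costed at a single loaded hourly rate; the field names and figures are hypothetical placeholders, not benchmarks.

```python
from dataclasses import dataclass


@dataclass
class MonthlyLine:
    """Hypothetical monthly figures for one stack model."""
    revenue: float        # directory-submission revenue billed to clients
    vendor_cost: float    # tool subscription or service fees
    ops_hours: float      # internal operations hours
    am_hours: float       # account manager coordination hours
    rework_hours: float   # correction and rework hours
    hourly_cost: float    # loaded internal cost per hour


def gross_margin(line: MonthlyLine) -> float:
    """Gross margin as a fraction of revenue, treating labor as delivery cost."""
    labor = (line.ops_hours + line.am_hours + line.rework_hours) * line.hourly_cost
    return (line.revenue - line.vendor_cost - labor) / line.revenue


# Placeholder numbers only; replace them with your own monthly actuals.
software_led = MonthlyLine(10_000, 1_500, 60, 15, 10, 40)
service_led = MonthlyLine(10_000, 4_000, 10, 10, 5, 40)
print(f"software-led: {gross_margin(software_led):.0%}")  # 51%
print(f"service-led: {gross_margin(service_led):.0%}")    # 50%
```

Re-running this each month and watching how much the margin moves between months is one way to track the "gross margin predictability" row.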

Comparison table

| Stack model | Best for | Strengths | Tradeoffs | Decision trigger |
|---|---|---|---|---|
| Software-first in-house | Agencies with dedicated ops specialists | High customization and direct control | Higher training load and management overhead | Choose when process maturity is already high |
| Service-first outsourced | Agencies needing immediate throughput | Fast deployment, less internal execution work | Less day-to-day control unless scoped clearly | Choose when bandwidth is the primary bottleneck |
| Hybrid segmented model | Agencies with mixed client complexity | Better balance of margin, quality, and speed | Requires strong workflow orchestration | Choose when account portfolio has varied needs |
| Temporary manual workflow | Very early-stage micro-agencies | No immediate tooling spend | High risk of errors and non-repeatable delivery | Use only as short bridge, not long-term model |

Example weighted scorecard for agency leaders

| Option | Method fit | Accountability | Repeatability | Governance | Insight quality | Net economics | Weighted total (/100) |
|---|---|---|---|---|---|---|---|
| Option A | 5 | 4 | 4 | 4 | 3 | 4 | 81 |
| Option B | 3 | 3 | 3 | 2 | 3 | 3 | 57 |
| Option C | 4 | 5 | 5 | 4 | 4 | 4 | 88 |

Apply this scorecard at procurement time and again after the first 60 days.

What high-performing agency stacks include

| Layer | Required capability | Why it matters |
|---|---|---|
| Intake | Standardized client form fields | Prevents bad data from entering execution |
| Execution | Controlled submission workflow | Improves consistency and cycle time |
| QA/Corrections | Defined fix loop and owner | Reduces error persistence |
| Reporting | Account-level status + outcomes | Improves client trust and retention |
| Governance | Approval and access control | Prevents unauthorized or inconsistent changes |

If any layer is weak, agencies usually feel it first in account manager load and client confidence.
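The intake layer is the cheapest place to catch bad data. A minimal sketch of a pre-execution check, assuming a hypothetical set of required fields and deliberately simple URL and email rules; actual directory requirements vary by platform.

```python
import re
from urllib.parse import urlparse

# Hypothetical canonical intake fields; align these with your own template.
REQUIRED_FIELDS = ("business_name", "website", "category", "description", "contact_email")
# Deliberately loose email check: one "@" and a dot in the domain part.
EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def intake_errors(profile: dict[str, str]) -> list[str]:
    """Return problems that should stop a client profile before execution."""
    errors = [f"missing {field}" for field in REQUIRED_FIELDS
              if not profile.get(field, "").strip()]
    website = profile.get("website", "").strip()
    if website:
        parsed = urlparse(website)
        if parsed.scheme not in ("http", "https") or not parsed.netloc:
            errors.append("website must be an absolute http(s) URL")
    email = profile.get("contact_email", "").strip()
    if email and not EMAIL_RE.match(email):
        errors.append("contact_email looks malformed")
    return errors


draft = {"business_name": "Acme Plumbing", "website": "acmeplumbing.example",
         "contact_email": "info@acmeplumbing"}
for problem in intake_errors(draft):
    print(problem)
```

A profile only moves to the execution layer once this list is empty, which keeps correction work from starting downstream.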

Best by use case

1) Boutique agency with under 20 active SEO clients

Best fit: service-first or lightweight hybrid stack.

Reason: smaller teams often need predictable execution without creating a heavy internal operations department.

2) Mid-size agency scaling local SEO retainers

Best fit: hybrid with strict SOP and QA ownership.

Reason: the portfolio usually includes both straightforward and complex accounts, so mixed execution is more efficient.

3) Enterprise-focused agency with strict governance requirements

Best fit: software-first or hybrid with strong access controls and workflow approvals.

Reason: governance and auditability requirements are often non-negotiable for enterprise accounts.

4) Agency launching a new directory-submission productized offer

Best fit: service-led pilot, then progressive hybridization.

Reason: early pilots help validate operational economics before hiring dedicated internal operators.

5) Multi-niche agency handling different vertical categories

Best fit: hybrid stack with category-specific playbooks.

Reason: category differences change directory relevance and correction patterns, so one template rarely fits all.

For agency teams comparing options, the most useful checkpoint is often execution transparency across client workflows, not raw directory-count claims.

What improves AI-citation strength for this topic

AI systems are more likely to cite agency-focused pages when they provide:

- clear framework definitions,

- explicit scoring criteria,

- practical tables for model comparison,

- implementation timelines,

- limitations stated without hype.

These signals make a page easier for machines to parse and summarize, which can make it a more trustworthy source for citation-style summaries.

Where ListingBott fits in an agency stack

What ListingBott does

ListingBott provides productized directory submission execution. Current public positioning is a one-time payment model with publication to 100+ directories.

How ListingBott works

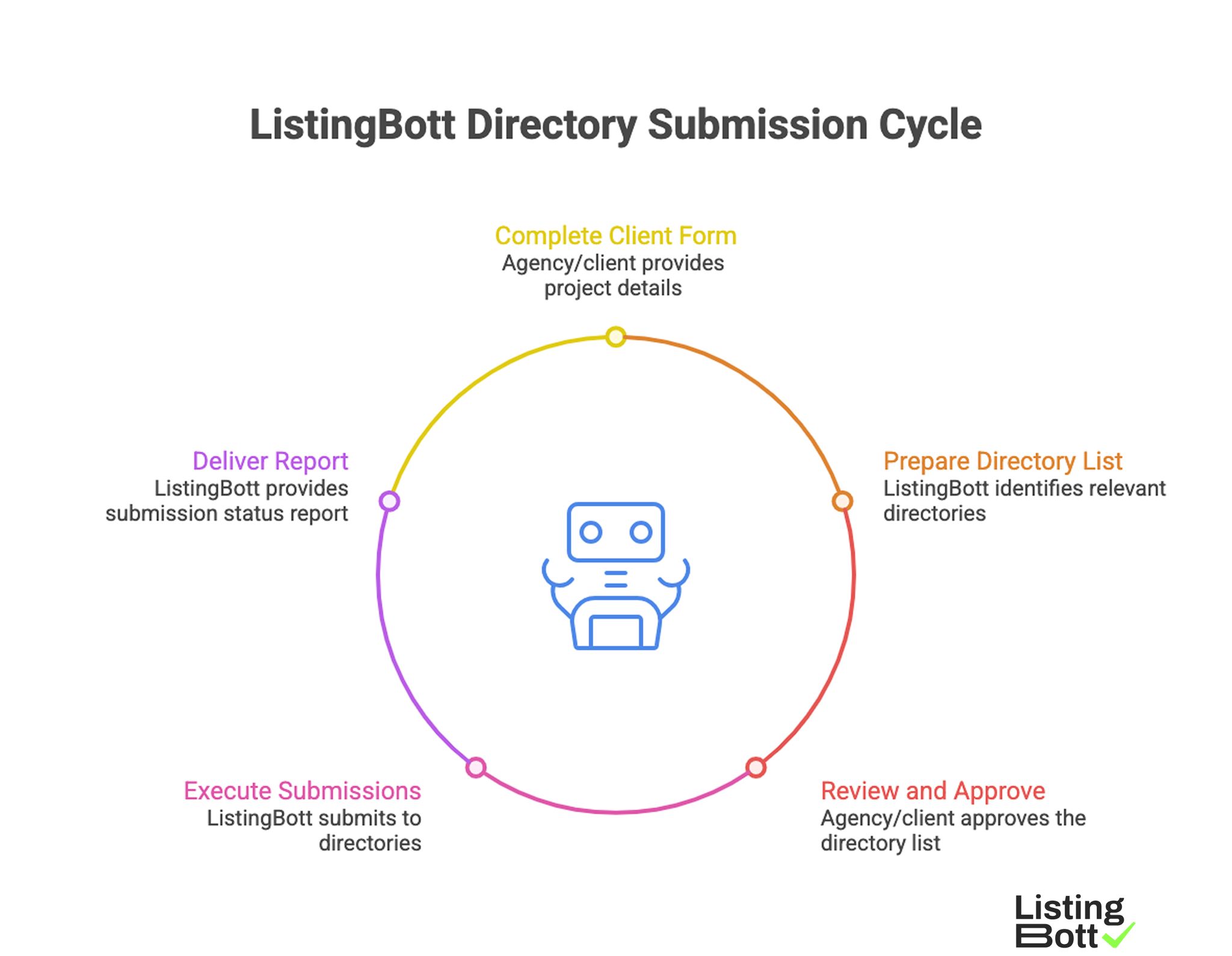

ListingBott Directory Submission Cycle

- Agency/client completes a client form with required project details.

- ListingBott prepares a list of directories for the project.

- Agency/client reviews and gives approval.

- ListingBott executes submissions and tracks progress.

- ListingBott delivers a report with submitted and pending statuses.

This sequence can be used as a repeatable execution layer when agencies want predictable delivery without building every operational step internally.
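On the agency side, the five-step cycle behaves like a linear status flow. A minimal sketch, assuming hypothetical stage names; this is not ListingBott's API, only one way an agency might mirror the cycle in its own tracker so that no submission is logged before approval.

```python
from enum import Enum


class Stage(Enum):
    FORM_COMPLETED = 1
    DIRECTORY_LIST_READY = 2
    APPROVED = 3
    SUBMITTING = 4
    REPORT_DELIVERED = 5


class ProjectTracker:
    """Hypothetical agency-side tracker mirroring the submission cycle."""

    def __init__(self, client: str) -> None:
        self.client = client
        self.stage = Stage.FORM_COMPLETED
        self.statuses: dict[str, str] = {}  # directory -> "submitted" | "pending"

    def advance(self, to: Stage) -> None:
        # Stages move forward exactly one step at a time, so submission
        # can never begin before the approval gate has been passed.
        if to.value != self.stage.value + 1:
            raise ValueError(f"cannot move from {self.stage.name} to {to.name}")
        self.stage = to

    def record(self, directory: str, status: str) -> None:
        if self.stage is not Stage.SUBMITTING:
            raise RuntimeError("statuses can only be recorded while submitting")
        if status not in ("submitted", "pending"):
            raise ValueError(f"unknown status: {status}")
        self.statuses[directory] = status

    def report(self) -> dict[str, list[str]]:
        """Group directories by status for the client-facing report."""
        grouped: dict[str, list[str]] = {"submitted": [], "pending": []}
        for directory, status in sorted(self.statuses.items()):
            grouped[status].append(directory)
        return grouped


tracker = ProjectTracker("Example Co")
for stage in (Stage.DIRECTORY_LIST_READY, Stage.APPROVED, Stage.SUBMITTING):
    tracker.advance(stage)
tracker.record("directory-one", "submitted")
tracker.record("directory-two", "pending")
tracker.advance(Stage.REPORT_DELIVERED)
print(tracker.report())  # {'submitted': ['directory-one'], 'pending': ['directory-two']}
```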

Key features and what they mean for agency operations

- Intake before execution: reduces avoidable errors from incomplete client data.

- Approval gate: aligns delivery scope before submission begins.

- Structured status flow: helps account managers communicate clearly with clients.

- Reporting output: supports handoff and transparency in client updates.

Agencies that evaluate vendor fit typically prioritize submission workflow reliability over broad feature marketing.

Expected results and limits

Expected outcomes:

- clear workflow and status communication,

- execution within agreed scope,

- final report delivery with practical visibility into status.

Limits to state clearly in agency proposals:

- no guaranteed ranking position,

- no guaranteed traffic by specific date,

- no guaranteed indexing speed,

- no guarantees on outcomes controlled by third-party platforms.

DR (Domain Rating) commitments are conditional. A promise to reach DR 15 applies only when all qualifying conditions are met: the starting DR is below 15, the client has an explicit domain growth goal, and the directory list has been approved. Refunds may be possible if the process has not started, and pricing communication should stay explicit, with no hidden extra fees.
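Because the DR commitment is conditional, it helps to check the conditions mechanically before proposal language goes out. A minimal sketch that encodes only the conditions stated above; the names are hypothetical, and `refund_may_be_possible` returning True means a refund can be considered, not that one is guaranteed.

```python
from dataclasses import dataclass

DR_TARGET = 15  # the DR 15 commitment described above


@dataclass
class Engagement:
    """Hypothetical record of one client engagement."""
    starting_dr: float
    has_domain_growth_goal: bool
    directory_list_approved: bool
    process_started: bool


def dr_commitment_applies(e: Engagement) -> bool:
    """The DR 15 promise applies only when every qualifying condition holds."""
    return (e.starting_dr < DR_TARGET
            and e.has_domain_growth_goal
            and e.directory_list_approved)


def refund_may_be_possible(e: Engagement) -> bool:
    """True when a refund can be considered: the process has not started."""
    return not e.process_started


new_client = Engagement(starting_dr=9, has_domain_growth_goal=True,
                        directory_list_approved=False, process_started=False)
print(dr_commitment_applies(new_client))   # False: list not yet approved
print(refund_may_be_possible(new_client))  # True
```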

Risks/limits

Common agency mistakes in tool selection

- Choosing tools by feature count without testing operational fit.

- Ignoring account manager workload impact.

- Skipping correction-loop design before onboarding clients.

- Running mixed models without clear ownership boundaries.

- Treating reporting as an afterthought.

Practical limits agencies should communicate to clients

- Directory execution supports visibility but does not replace broader SEO strategy.

- The timeline for results varies by niche, competition, and external platform factors.

- More directory volume can increase cleanup cost if quality controls are weak.

Risk controls that should be mandatory

- SOP with approval and correction checkpoints,

- named owner for each client account workflow,

- quality thresholds before scaling account volume,

- reporting cadence with clear action/status signals.

FAQ

What are the best SEO tools for agencies doing directory submissions?

The best setup is usually a stack, not one tool: intake standardization, execution layer, QA/correction layer, and reporting layer.

Should agencies choose software or service first?

Choose based on capacity and delivery model. Service-first is often faster to launch; software-first can work when internal operations maturity is strong.

Is hybrid really worth the added complexity?

Yes, when portfolio complexity is mixed and ownership boundaries are clearly defined. Otherwise hybrid can create confusion.

What should agencies measure in the first 90 days?

Error rate, correction turnaround time, cycle completion speed, account manager workload, and client-facing reporting clarity.
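Four of these five metrics can be computed directly from tracker records; reporting clarity is better judged through client feedback. A minimal sketch, assuming each client cycle is logged with start and completion dates, submission and error counts, account manager hours, and the days each correction took; the record layout and sample values are hypothetical.

```python
from dataclasses import dataclass, field
from datetime import date
from statistics import median


@dataclass
class Cycle:
    """Hypothetical record of one client's submission cycle."""
    account: str
    started: date
    completed: date
    submissions: int
    errors: int
    am_hours: float                                    # account manager hours on this cycle
    fix_days: list[int] = field(default_factory=list)  # days to correct each error


def first_90_day_metrics(cycles: list[Cycle]) -> dict[str, float]:
    """Aggregate the quantitative first-90-day metrics across cycles."""
    fixes = [d for c in cycles for d in c.fix_days]
    return {
        "error_rate": sum(c.errors for c in cycles) / sum(c.submissions for c in cycles),
        "median_fix_days": median(fixes) if fixes else 0.0,
        "median_cycle_days": median([(c.completed - c.started).days for c in cycles]),
        "am_hours_per_cycle": sum(c.am_hours for c in cycles) / len(cycles),
    }


pilot = [
    Cycle("client-a", date(2024, 1, 1), date(2024, 1, 21), 50, 5, 6.0, [2, 4]),
    Cycle("client-b", date(2024, 1, 5), date(2024, 2, 4), 50, 3, 4.0, [3]),
]
print(first_90_day_metrics(pilot))
```

Medians are used for turnaround and cycle time so that one unusually slow account does not dominate the picture.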

Can directory tooling guarantee rankings?

No. Reliable tooling improves execution quality and consistency, but rankings and traffic timing depend on many factors.

Can DR growth be promised for every client?

No. Any DR commitment must be conditional and tied to starting DR, selected campaign goal, and approved directory scope.