Quick answer

The best online directory strategy is not about submitting to the biggest possible list. It is about selecting the right categories and platforms in the right order, with quality controls that protect consistency over time.

For most startups and SMB teams, the highest-impact approach is:

- Select directory categories by relevance and trust.

- Prioritize profiles that allow meaningful business detail.

- Roll out in waves so QA and maintenance stay manageable.

If you are mapping your first serious submission cycle, use this guide as an editorial filter for deciding which online business directories deserve your effort, not just as a collection of links.

Methodology

This framework is designed for operators who need repeatable decisions, not one-off experiments. It evaluates directory options at the category level first, then at the platform level, so teams avoid low-value expansion.

Editorial scoring model (100 points)

| Dimension | Weight | What to check | Why it matters |
|---|---|---|---|
| Relevance match | 30 | Category fit to your product and audience intent | Stronger discovery alignment and lower noise |
| Trust/editorial quality | 25 | Review standards, profile quality expectations, moderation signals | Reduces low-signal footprint risk |
| Profile depth potential | 20 | Ability to include meaningful business details and context | Better user understanding and conversion support |
| Operational feasibility | 15 | Submission friction, approval cycles, format constraints | Keeps rollout efficient |
| Ongoing maintenance burden | 10 | Update frequency, ownership complexity, correction effort | Protects long-term quality |

The total score (out of 100) determines each option's priority tier.

Tiering thresholds

- Tier 1 (80-100): launch-critical categories that should be covered first.

- Tier 2 (65-79): useful expansion once Tier 1 quality is stable.

- Tier 3 (<65): conditional or usually excluded unless a clear strategic reason exists.

This prevents teams from confusing activity volume with strategic progress.
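The scoring model and tier thresholds above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed implementation: the dictionary keys and function names are assumptions chosen for readability, and the example points are illustrative.

```python
# Weights from the editorial scoring model; they sum to 100.
WEIGHTS = {
    "relevance": 30,
    "trust": 25,
    "profile_depth": 20,
    "operations": 15,
    "maintenance": 10,
}


def total_score(points: dict[str, int]) -> int:
    """Sum the per-dimension points, rejecting values outside each weight."""
    for name, cap in WEIGHTS.items():
        value = points[name]
        if not 0 <= value <= cap:
            raise ValueError(f"{name} must be between 0 and {cap}, got {value}")
    return sum(points[name] for name in WEIGHTS)


def tier(score: int) -> str:
    """Map a 0-100 total to the tier thresholds listed above."""
    if score >= 80:
        return "Tier 1"
    if score >= 65:
        return "Tier 2"
    return "Tier 3"


# Illustrative points for one category (assumed values).
example = {"relevance": 26, "trust": 20, "profile_depth": 16,
           "operations": 12, "maintenance": 8}
print(total_score(example), tier(total_score(example)))  # 82 Tier 1
```

Capping each dimension at its weight keeps a single strong dimension from inflating the total beyond what the model allows.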

Hard filters before scoring

Before scoring, remove options that fail basic editorial standards.

| Hard filter | Pass criteria | Fail example |
|---|---|---|
| Category legitimacy | Category definitions are clear and consistent | Broad, ambiguous category buckets |
| Listing transparency | Submission and review steps are visible | Hidden workflow, unclear acceptance logic |
| Profile quality baseline | Platform supports complete and structured profiles | Thin listings with little business context |
| Reputation signal | Observable quality in published profiles | Mostly low-quality or duplicate-looking entries |
| Maintenance practicality | Updates can be handled without high overhead | Correction cycle is slow and fragmented |

If a platform fails two or more hard filters, it should not enter priority scoring.
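As a rough sketch, the hard-filter gate can be expressed as a simple check that runs before any scoring. The filter keys and function names below are assumptions for illustration, and treating a missing check as a failure is a conservative choice of this sketch rather than something the framework specifies.

```python
# One key per hard filter in the table above (names are illustrative).
HARD_FILTERS = (
    "category_legitimacy",
    "listing_transparency",
    "profile_quality_baseline",
    "reputation_signal",
    "maintenance_practicality",
)


def failed_filters(checks: dict[str, bool]) -> list[str]:
    """Return the hard filters a platform fails; a missing check counts as a fail."""
    return [name for name in HARD_FILTERS if not checks.get(name, False)]


def eligible_for_scoring(checks: dict[str, bool]) -> bool:
    """A platform is excluded from scoring once it fails two or more filters."""
    return len(failed_filters(checks)) < 2


# Hypothetical platform that fails exactly two filters.
platform = {name: True for name in HARD_FILTERS}
platform["reputation_signal"] = False
platform["listing_transparency"] = False
print(failed_filters(platform), eligible_for_scoring(platform))
```

Returning the list of failed filters, not just a yes/no answer, makes it easier to record why a platform was excluded.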

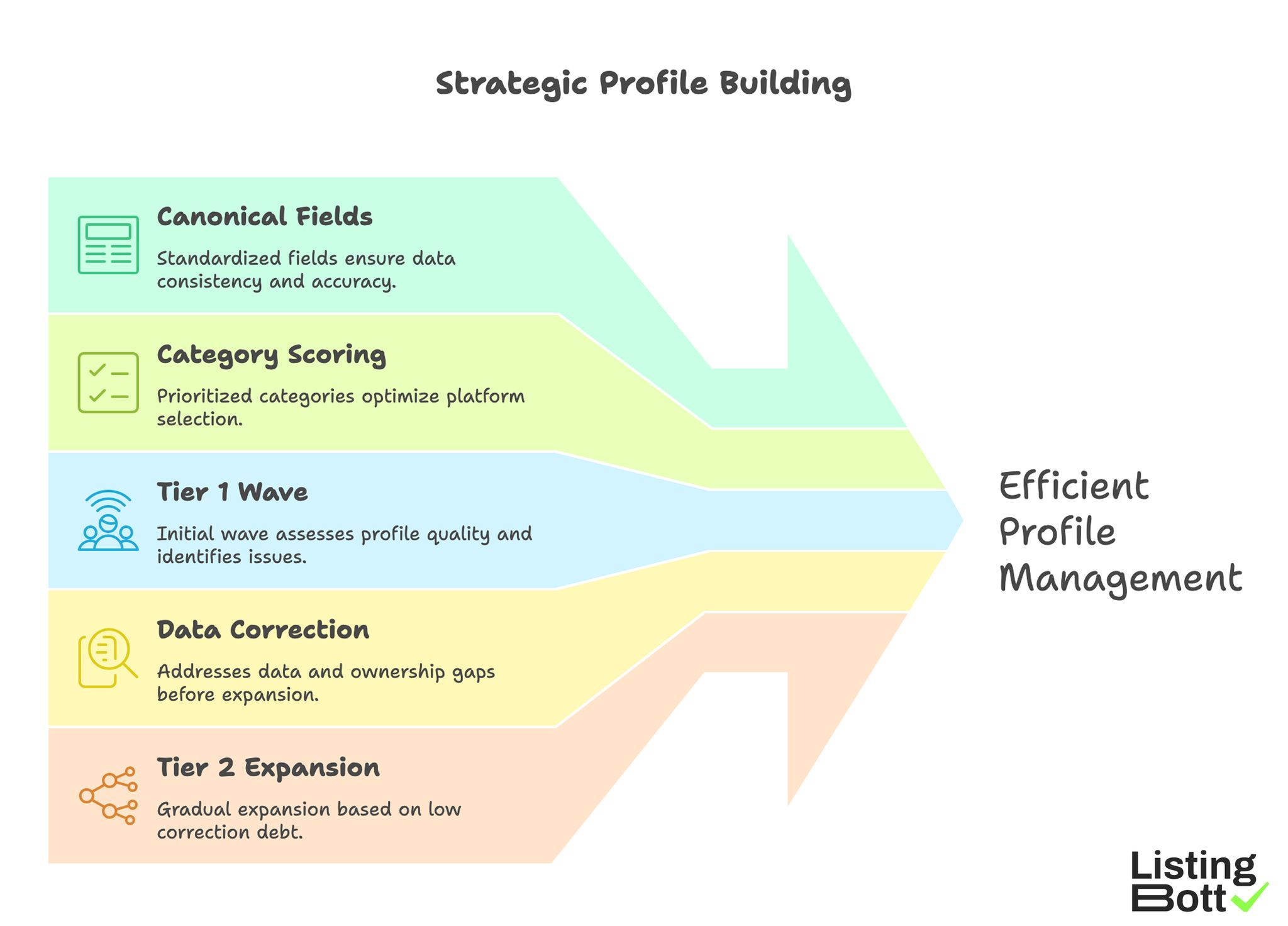

Execution sequence that avoids rework

- Build canonical business fields before the first submission wave.

- Score categories, then shortlist platforms inside the winning categories.

- Run a limited Tier 1 wave and measure profile quality outcomes.

- Fix data and ownership gaps before moving to Tier 2.

- Expand only when correction debt stays low.

Teams that skip sequencing often face rollback work and inconsistent profiles.

Quality control checkpoints

Use explicit checkpoints to protect reliability.

| Checkpoint | Validation question | Action if failed |
|---|---|---|
| Data consistency | Are core fields identical across all drafted profiles? | Stop wave and fix source profile |
| Category accuracy | Does each platform category match real business scope? | Re-map category and re-review |
| Asset readiness | Are logos, screenshots, and descriptions current? | Refresh assets before submitting |
| Ownership clarity | Is one owner assigned for changes and approvals? | Assign owner before expansion |
| Error log hygiene | Are submission issues documented with resolution status? | Normalize logging before next wave |

This creates a measurable process instead of an ad hoc submission sprint.
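The data consistency checkpoint is the easiest one to automate. The sketch below compares each drafted profile against a canonical source profile and reports every field that differs. The field names, the platform labels, and the decision to ignore case and extra whitespace are all assumptions; a stricter team may prefer exact string equality.

```python
# Core fields to compare (assumed names, adjust to your source profile).
CORE_FIELDS = ("name", "address", "phone", "website")


def normalize(value: str) -> str:
    """Collapse whitespace and ignore case so cosmetic differences do not flag."""
    return " ".join(value.split()).casefold()


def find_mismatches(canonical: dict[str, str],
                    drafts: dict[str, dict[str, str]]) -> list[tuple[str, str]]:
    """Return (platform, field) pairs where a draft differs from the source profile."""
    mismatches = []
    for platform, profile in drafts.items():
        for field in CORE_FIELDS:
            if normalize(profile.get(field, "")) != normalize(canonical[field]):
                mismatches.append((platform, field))
    return mismatches


# Hypothetical data for illustration.
canonical = {"name": "Acme Tools", "address": "1 Main St, Springfield",
             "phone": "+1 555 0100", "website": "https://example.com"}
drafts = {
    "directory_a": {"name": "ACME  Tools", "address": "1 Main St, Springfield",
                    "phone": "+1 555 0100", "website": "https://example.com"},
    "directory_b": {"name": "Acme Tools", "address": "1 Main St, Springfield",
                    "phone": "+1 555 0199", "website": "https://example.com"},
}
print(find_mismatches(canonical, drafts))  # [('directory_b', 'phone')]
```

An empty result means the wave can proceed; any mismatch maps to the "stop wave and fix source profile" action in the checkpoint table.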

Comparison table

The table below compares directory groups rather than individual sites, which is usually the better planning unit for editorial execution.

| Directory group | Typical use case | Strengths | Tradeoffs | Recommended tier |
|---|---|---|---|---|
| Startup discovery directories | Product launches and early visibility | High relevance for launch-stage products, faster discovery loops | Can be competitive and noisy | Tier 1 |
| B2B software comparison directories | SaaS with a clear ideal customer profile (ICP) and category fit | Structured buyer-intent exposure | Requires stronger profile completeness | Tier 1 |
| Industry-specific niche directories | Vertical products or specialized services | Better intent precision and contextual fit | Narrower reach outside core niche | Tier 1 or Tier 2 |
| Local business directories | Location-sensitive services | Strong local-intent support | Quality declines if NAP (name, address, phone) consistency is weak | Tier 1 for local, Tier 2 for non-local |
| General business directories | Baseline citation and broad discovery | Wide coverage and simple inclusion | Lower average relevance | Tier 2 |
| Community/resource directories | Expert ecosystems, curated hubs | Trust signal when quality-controlled | Admission can be slower | Tier 2 |
| High-volume low-trust directories | Bulk coverage attempts | Fast count growth only | High maintenance, weak strategic value | Usually exclude |

Category-level scoring example

| Category | Relevance (30) | Trust (25) | Profile depth (20) | Operations (15) | Maintenance (10) | Total |
|---|---|---|---|---|---|---|
| Startup discovery | 26 | 20 | 16 | 12 | 8 | 82 |
| B2B software comparison | 24 | 22 | 18 | 11 | 7 | 82 |
| Niche vertical | 27 | 19 | 15 | 10 | 7 | 78 |
| Local directories | 22 | 18 | 14 | 13 | 8 | 75 |
| General directories | 16 | 14 | 10 | 14 | 7 | 61 |

This sample shows why broad directories can be useful but rarely deserve first priority: general directories score 61 here, which falls below the Tier 2 cutoff of 65.

Practical selection rules

- Pick no more than three Tier 1 groups for the first wave.

- Keep profile quality standards identical across all selected groups.

- Do not add Tier 2 groups while unresolved QA issues remain in Tier 1.

- Record exclusions and reasons, so future expansion decisions are traceable.
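The selection rules above can be sketched as a small planning function. It uses the totals from the category-level scoring example as input. The function name, the return shape, and the wording of the exclusion reasons are assumptions made for illustration.

```python
def plan_first_wave(scores: dict[str, int], max_groups: int = 3,
                    tier1_min: int = 80) -> tuple[list[str], dict[str, str]]:
    """Pick up to max_groups Tier 1 groups and record why the rest were left out."""
    # sorted() is stable, so groups with equal scores keep their input order.
    ranked = sorted(scores.items(), key=lambda item: item[1], reverse=True)
    selected: list[str] = []
    exclusions: dict[str, str] = {}
    for group, score in ranked:
        if score < tier1_min:
            exclusions[group] = f"score {score} is below the Tier 1 threshold of {tier1_min}"
        elif len(selected) >= max_groups:
            exclusions[group] = f"first wave is capped at {max_groups} groups"
        else:
            selected.append(group)
    return selected, exclusions


# Totals from the category-level scoring example.
sample_scores = {
    "Startup discovery": 82,
    "B2B software comparison": 82,
    "Niche vertical": 78,
    "Local directories": 75,
    "General directories": 61,
}
selected, exclusions = plan_first_wave(sample_scores)
print(selected)  # ['Startup discovery', 'B2B software comparison']
for group, reason in exclusions.items():
    print(f"{group}: {reason}")
```

Keeping the exclusion reasons next to the selection gives the traceable record that the last rule asks for.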

Shortlist by use case

Different teams need different priority stacks. The same "top directory list" is rarely optimal for every business model.

1) Early-stage SaaS (new product launch)

Recommended order:

- Startup discovery directories.

- B2B software comparison directories.

- One high-fit niche vertical category.

Why this works:

- balances fast discovery and buyer-intent visibility,

- gives enough category diversity without creating operational overload,

- supports learning cycles before broader expansion.

2) Local or regional service business

Recommended order:

- Local business directories.

- Niche service-category directories.

- Selected general business directories.

Execution note:

- treat business name, address, service area, and contact fields as controlled source data,

- validate consistency before every wave.

3) Multi-location brand

Recommended order:

- Local directories with location-level profile control.

- General directories that support structured multi-location records.

- Niche categories only where location intent is clear.

Risk control:

- expand by cluster, not by all locations at once,

- run spot audits each wave to catch drift early.

4) B2B agency or consultancy

Recommended order:

- Niche professional directories.

- B2B comparison and resource directories.

- Selected general directories for baseline coverage.

What to avoid:

- publishing generic profile text across all platforms,

- prioritizing count over clarity of services and positioning.

5) Marketplace or two-sided platform

Recommended order:

- Startup discovery categories.

- Relevant vertical directories aligned to one side of the marketplace.

- Editorial communities that include product/resource listings.

Operating tip:

- keep one canonical value proposition statement and adapt only the context lines per category.

6) Lean team with limited capacity

Recommended order:

- The two highest-scoring Tier 1 categories only.

- Delayed expansion until corrections stay consistently low.

This is usually the safest model for teams balancing directory work with core product delivery.

Rollout cadence template (90 days)

| Window | Primary objective | Target output |
|---|---|---|
| Days 1-14 | Build source profile and shortlist categories | Approved Tier 1 plan |
| Days 15-40 | Execute Tier 1 submissions | Core coverage complete |
| Days 41-65 | QA correction and profile strengthening | Error backlog near zero |
| Days 66-90 | Conditional Tier 2 expansion | Incremental coverage without quality drop |

A wave model turns directory execution into a repeatable system instead of a one-time campaign.
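If the cadence is tracked in a script or dashboard, the template can be encoded as data and queried by day. The sketch below is one possible encoding; the structure and function name are assumptions, and the windows simply mirror the table above.

```python
# (first day, last day, primary objective) for each window in the 90-day template.
ROLLOUT_WINDOWS = [
    (1, 14, "Build source profile and shortlist categories"),
    (15, 40, "Execute Tier 1 submissions"),
    (41, 65, "QA correction and profile strengthening"),
    (66, 90, "Conditional Tier 2 expansion"),
]


def objective_for_day(day: int) -> str:
    """Return the primary objective for a given day of the 90-day plan."""
    for start, end, objective in ROLLOUT_WINDOWS:
        if start <= day <= end:
            return objective
    raise ValueError(f"day {day} is outside the 90-day plan")


print(objective_for_day(30))  # Execute Tier 1 submissions
```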

Where a product workflow fits

If your team wants to reduce manual coordination, use a structured workflow rather than disconnected spreadsheets and ad hoc checklists.

ListingBott is built for one-time-purchase directory execution: intake, an approved directory list, a publication workflow, and a report handoff. It is positioned as a tool workflow, not a consulting retainer.

If you want a scalable directory listing workflow without managing every submission step manually, this operating model can reduce coordination overhead while you keep approval control.

Risks/limits

Even strong directory plans have constraints. Decision quality matters, but outcomes still depend on external systems and broader site performance.

Common failure patterns

| Failure pattern | Typical cause | Mitigation |
|---|---|---|
| Over-expansion | Too many categories launched at once | Limit first wave to highest-confidence categories |
| Profile inconsistency | Multiple editors without shared source profile | Enforce canonical fields and one approval owner |
| Weak category fit | Using broad directories as default | Validate intent fit before submitting |
| Correction backlog | No error log or ownership model | Track issues and set correction SLAs |
| Misleading reporting | Counting submissions instead of quality outcomes | Pair activity metrics with QA outcomes |

What you can and cannot expect

What this process can improve:

- execution quality,

- consistency of business representation,

- directional visibility support through stronger category selection.

What this process cannot guarantee:

- fixed ranking positions,

- guaranteed traffic by a specific date,

- guaranteed indexing speed,

- outcomes controlled by third-party platforms.

DR-focused promise boundary

Any promise related to DR (Domain Rating) applies only when three conditions all hold: the starting DR is below 15, the goal is explicitly set to domain growth, and the directory list has been approved. Outside these conditions, DR outcomes should be treated as non-guaranteed.
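Because the boundary is a conjunction of three conditions, it can be written as a single predicate. This is a minimal sketch: the function name, the parameter names, and the `"domain_growth"` goal label are hypothetical and only stand in for however a team records these inputs.

```python
def dr_promise_applies(starting_dr: float, goal: str, list_approved: bool) -> bool:
    """Return True only when all three qualifying conditions hold."""
    return starting_dr < 15 and goal == "domain_growth" and list_approved


print(dr_promise_applies(10, "domain_growth", True))   # True
print(dr_promise_applies(15, "domain_growth", True))   # False: DR is not below 15
print(dr_promise_applies(10, "domain_growth", False))  # False: list not approved
```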

Commercial policy alignment snapshot

Current policy framing should stay consistent with approved language:

- one-time payment model,

- publication to 100+ directories (per current website language),

- a refund is possible if the process has not started,

- no hidden extra fees (per current FAQ language).

FAQ

1) How many online directory groups should I start with?

For most teams, two to three Tier 1 groups are enough for Wave 1. Add more only after QA stability is proven.

2) Are general business directories still useful?

Yes, but usually as Tier 2 support, not as first-priority channels, because relevance and intent can be weaker.

3) Should local businesses use the same shortlist as SaaS companies?

No. Local businesses usually prioritize local directories first, while SaaS teams often prioritize startup and software comparison categories.

4) Is directory volume the main driver of results?

Usually no. Category fit, profile quality, and maintenance discipline are more predictive than raw submission count.

5) Can directory work guarantee ranking improvements?

No. Directory execution can support visibility and consistency, but rankings depend on many external factors.

6) What is the safest way to scale after Wave 1?

Use a wave-based plan, resolve correction backlog, and expand only when ownership and QA controls remain stable.