Table of Contents

- Why this Matters Now

- Best-Fit Directory Comparison List (2026)

- Step-by-Step Rollout Checklist

- What to Avoid When Choosing Directories

- 90-Day Execution Plan

- FAQ


Quick Answer

The best AI tools directories for SEO are not the biggest lists. They are the directories where your profile quality, category fit, and ongoing maintenance can produce measurable discovery and trust value.

A practical 2026 approach is:

- score each directory by intent and profile quality,

- launch in controlled tiers,

- validate listing integrity after publish,

- keep only channels with clear contribution.

Teams that submit broadly without quality controls usually get noisy visibility, stale profiles, and weak referral quality.

Why this Matters Now

AI tool discovery behavior has changed. Buyers, operators, and creators often evaluate tools through clusters of directories before they visit your site directly. Search engines and AI systems can also use recurring third-party references to interpret your product identity.

So directory work now supports three layers:

- discoverability: appearing in relevant category and comparison paths,

- trust: consistent external profile quality,

- entity clarity: stable product references across sources.

For SEO, this is valuable only when channels are curated and maintained. A high-count portfolio with low integrity can dilute effort and reporting quality.

What “Best” Should Mean for SEO Teams

When teams ask for the “best” directories, they usually mean different things. Clarify which “best” applies:

- Best for qualified discovery: channels where users actively compare and evaluate AI tools.

- Best for profile trust: channels where complete, structured profiles improve credibility.

- Best for operational sustainability: channels your team can maintain without creating correction debt.

- Best for portfolio diversity: a mix of core and support channels that avoids over-reliance on one source.

If your definition is only “highest traffic domain,” you will often over-select channels that do not support meaningful outcomes.

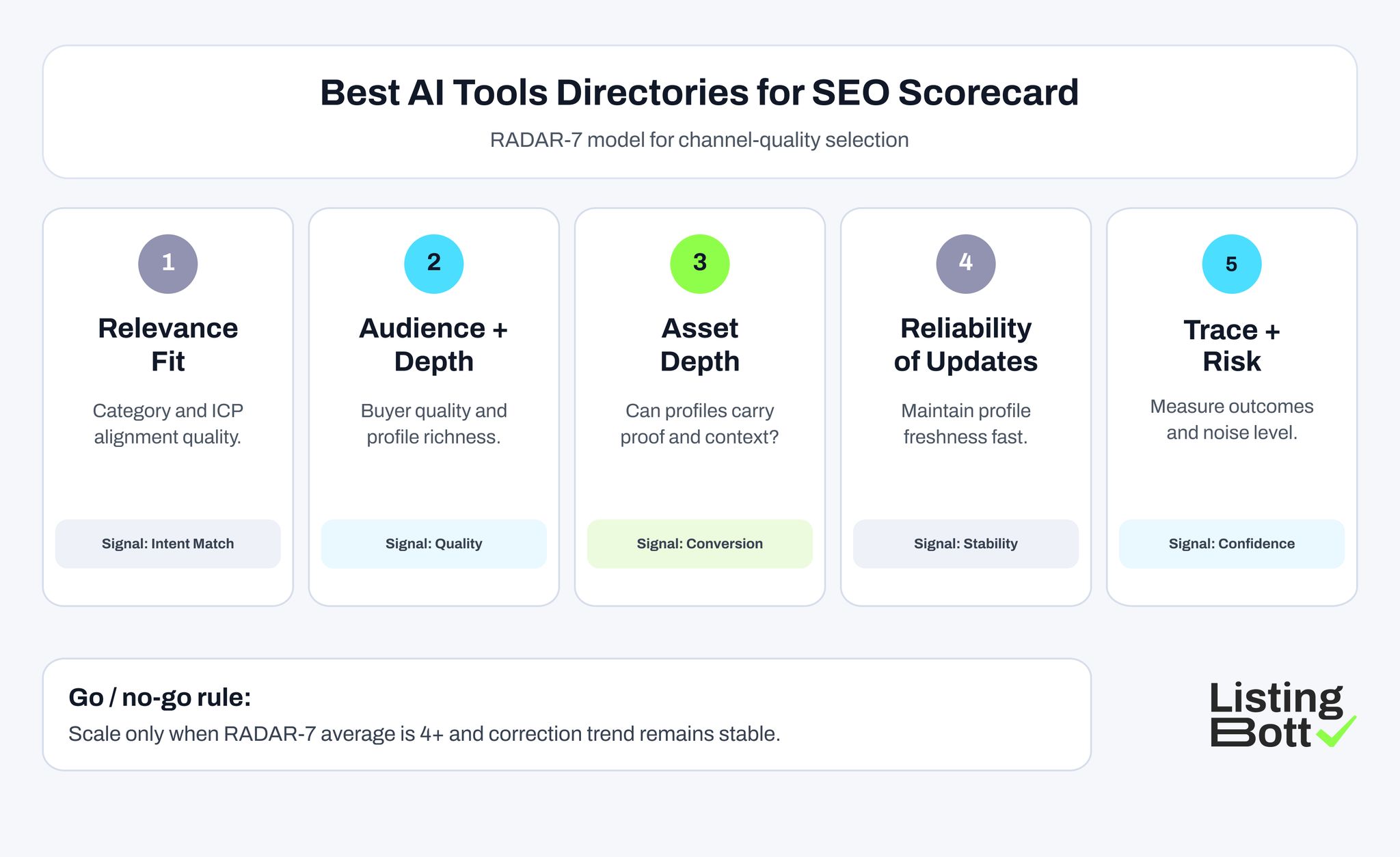

The RADAR-7 Scoring Model for AI Tool Directories

Use this model to rank directories before launch.

| Criterion | Practical question | Why it matters | Score (1-5) |
| --- | --- | --- | --- |
| Relevance fit | Does the platform match your AI-tool category and ICP? | Improves intent alignment | 1-5 |
| Audience quality | Are users on this channel likely to evaluate tools seriously? | Filters low-intent visits | 1-5 |
| Directory structure | Is taxonomy clear and discoverable? | Supports category visibility | 1-5 |
| Asset depth | Can your profile include enough product context and proof? | Improves conversion readiness | 1-5 |
| Reliability of updates | Can you edit and maintain profile quality quickly? | Prevents drift over time | 1-5 |
| Outcome traceability | Can you track referral quality and assists? | Enables keep/de-prioritize decisions | 1-5 |
| Risk and noise control | Are spam/duplicate/policy-friction risks manageable? | Protects portfolio quality | 1-5 |

Threshold rules:

- 28-35: core directory candidates

- 22-27: pilot/support candidates

- below 22: de-prioritize unless strategic reason exists

This scoring system is simple enough for recurring monthly use.
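The scoring and threshold rules above are easy to encode in a sheet or a small script. The sketch below is a minimal Python illustration, assuming each criterion is scored as an integer from 1 to 5; the criterion keys, `DirectoryScore`, and `tier` are hypothetical names, not part of any tool.

```python
from dataclasses import dataclass

# The seven RADAR-7 criteria, in the same order as the scoring table.
CRITERIA = (
    "relevance_fit",
    "audience_quality",
    "directory_structure",
    "asset_depth",
    "reliability_of_updates",
    "outcome_traceability",
    "risk_and_noise_control",
)


@dataclass
class DirectoryScore:
    name: str
    scores: dict[str, int]  # criterion key -> integer score from 1 to 5

    def total(self) -> int:
        """Validate the scorecard and return the RADAR-7 total (7-35)."""
        missing = [c for c in CRITERIA if c not in self.scores]
        if missing:
            raise ValueError(f"{self.name}: missing criteria {missing}")
        for criterion in CRITERIA:
            value = self.scores[criterion]
            if not 1 <= value <= 5:
                raise ValueError(f"{self.name}: {criterion} must be 1-5, got {value}")
        return sum(self.scores[c] for c in CRITERIA)


def tier(total: int) -> str:
    """Map a RADAR-7 total to the threshold rules."""
    if total >= 28:
        return "core candidate"
    if total >= 22:
        return "pilot/support candidate"
    return "de-prioritize"
```

Keeping the validation strict (every criterion present, every score within 1-5) keeps monthly re-scoring comparable across reviewers.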

Best AI tools Directories for SEO Scorecard

Best-Fit Directory Comparison List (2026)

The table below draws on the project dataset and a provided reference set of directories. It is a practical shortlist, not a submit-everywhere template.

| Directory | URL | Best use in SEO portfolio | Best-fit company profile | Operational note |
| --- | --- | --- | --- | --- |
| RankMyAI | https://www.rankmyai.com/rankings/top-100-ai-tools-directories-overall | market scan + benchmark reference layer | teams mapping competitive directory landscape | use as selection input before committing wave scope |
| AI Tools Directory | https://aitoolsdirectory.com/ | core discovery candidate for general AI-tool search intent | multi-use-case AI tools with clear product framing | keep category mapping precise to avoid mismatch |
| Best AI Tools | https://www.bestaitools.com/ | strong discovery-support channel with meaningful category navigation | product-led AI tools targeting broad use cases | update profile after major release cycles |
| Best AI Agents Directory | https://www.bestaiagents.directory/ | targeted channel for agent-oriented products | AI agent and automation products | align copy to agent-specific value outcomes |
| AI Agents Directory | https://ai-agents-directory.com/ | support channel for agent category coverage | early and growth-stage AI agent products | treat as pilot tier until KPI quality stabilizes |
| Automation Tools Directory | https://www.automationtools.directory/ | workflow-automation discovery support | AI products tied to operational automation | map destination URLs to intent-specific pages |
| NEEED Directory | https://neeed.directory/ | broad product exposure support tier | teams expanding controlled channel diversity | monitor quality closely to avoid noisy traffic |
| DirectoryFame | https://directoryfame.com/ | citation/support-layer diversification | teams scaling secondary visibility footprint | keep governance strict for profile consistency |
| HyzenPro AI Tools Directory | https://hyzenpro.com/ai-tools-directory/ | experimental niche channel for test waves | early-stage AI tools testing category traction | use as experiment, not core, until performance proves value |

How to read this list

Use three tags for each channel:

- core: strong fit, quality, and maintainability,

- support: useful but secondary,

- experiment: controlled test only.

Do not promote experiments to core unless they pass repeated quality and outcome checks.
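One way to make the promotion rule concrete is to require a run of consecutive passing reviews. This is a hypothetical sketch: the `Review` record, the function name, and the default of three consecutive passes are assumptions to tune to your own review cadence.

```python
from dataclasses import dataclass


@dataclass
class Review:
    quality_ok: bool  # e.g. listing integrity and QA checks passed this period
    outcome_ok: bool  # e.g. referral quality or assists met the team's bar


def can_promote_to_core(reviews: list[Review], required_passes: int = 3) -> bool:
    """Return True only if the most recent `required_passes` reviews all passed
    both the quality check and the outcome check (hypothetical rule)."""
    if len(reviews) < required_passes:
        return False
    recent = reviews[-required_passes:]
    return all(r.quality_ok and r.outcome_ok for r in recent)
```

Requiring both checks in every recent period prevents a single strong month from promoting a channel that is still noisy.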

Recommended Channel Mix by Team Maturity

Early-stage AI startup (small team)

- 3-4 core channels,

- 1-2 support channels,

- maximum 1 experiment channel.

Focus on profile quality and correction speed, not channel count.

Growth-stage SaaS AI team

- 4-6 core channels,

- 2-3 support channels,

- 1-2 experiments with strict gates.

Prioritize outcome measurement and monthly portfolio pruning.
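The channel counts above can double as a quick sanity check on a proposed portfolio. A minimal sketch, assuming each channel is already tagged core, support, or experiment; `MIX_LIMITS` simply restates the ranges above (with "maximum 1" read as 0-1), and the agency case is left out because no fixed counts are given for it.

```python
from collections import Counter

# Restated from the maturity guidance: tier -> (min, max) channel count.
MIX_LIMITS = {
    "early": {"core": (3, 4), "support": (1, 2), "experiment": (0, 1)},
    "growth": {"core": (4, 6), "support": (2, 3), "experiment": (1, 2)},
}


def mix_violations(maturity: str, channel_tiers: dict[str, str]) -> list[str]:
    """List every tier whose channel count falls outside the stated range.

    `channel_tiers` maps a directory name to "core", "support" or "experiment".
    """
    limits = MIX_LIMITS[maturity]
    counts = Counter(channel_tiers.values())
    problems = []
    for tier_name, (low, high) in limits.items():
        n = counts.get(tier_name, 0)
        if not low <= n <= high:
            problems.append(f"{tier_name}: {n} (allowed {low}-{high})")
    return problems
```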

Agency or multi-client operators

Teams using agency SEO tools, white-label SEO tools, and custom SEO dashboard stacks can manage larger portfolios, but only if governance is tight.

Practical setup:

- shared scoring model,

- client-level ownership matrix,

- standardized QA checks,

- channel-level keep/stabilize/de-prioritize rules.

The tooling stack helps coordination, but channel quality decisions still require human review.

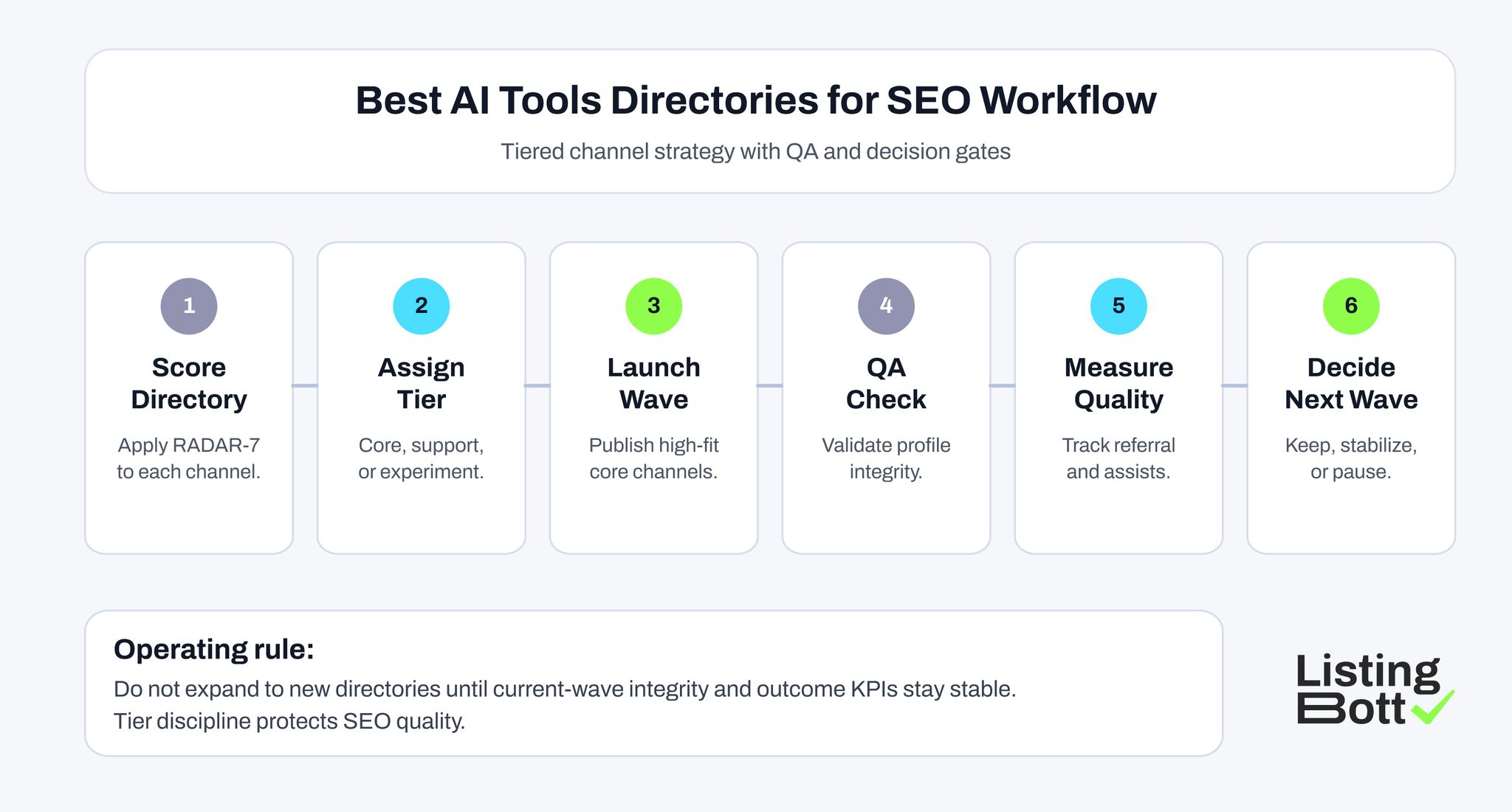

Best AI Tools Directories for SEO Workflow

Step-by-Step Rollout Checklist

Step 1: build canonical profile pack

Include:

- approved product naming variants,

- one-line + full profile descriptions,

- category and subcategory map,

- destination URL map by intent,

- screenshot/proof asset bundle.

Step 2: score every candidate with RADAR-7

Block channels that fail fit or governance thresholds.

Step 3: define wave scope

Limit Wave 1 to channels with highest combined score and manageable maintenance expectations.
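Wave 1 scoping can be sketched as a greedy selection: rank by RADAR-7 total, drop anything below the pilot threshold of 22, and stop adding channels once a maintenance budget or channel cap is reached. The per-channel `maintenance_hours` estimate, the budget, and the names below are hypothetical inputs; the scoring model itself does not define them.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    radar_total: int          # RADAR-7 total, 7-35
    maintenance_hours: float  # hypothetical monthly upkeep estimate


def select_wave(
    candidates: list[Candidate],
    hours_budget: float,
    max_channels: int,
    min_total: int = 22,
) -> list[str]:
    """Greedy sketch: take the highest-scoring eligible channels first and skip
    any channel that would push the wave past the maintenance budget."""
    wave: list[str] = []
    used = 0.0
    ranked = sorted(candidates, key=lambda c: c.radar_total, reverse=True)
    for c in ranked:
        if len(wave) >= max_channels:
            break
        if c.radar_total < min_total:
            break  # sorted descending, so every remaining candidate is below the bar too
        if used + c.maintenance_hours > hours_budget:
            continue  # too expensive to maintain alongside what is already selected
        wave.append(c.name)
        used += c.maintenance_hours
    return wave
```

A greedy pass is not guaranteed to find the best possible combination, but it is transparent and easy to review by hand before the wave is locked.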

Step 4: publish in batches

Track submission status, approvals, and correction requests in one central ledger.
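A central ledger only needs the latest status per directory plus a history of changes. The sketch below assumes four status values (hypothetical names) and stands in for whatever sheet or tracker your team already uses.

```python
from collections import Counter
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    SUBMITTED = "submitted"
    APPROVED = "approved"
    REJECTED = "rejected"
    CORRECTION_REQUESTED = "correction_requested"


@dataclass
class Ledger:
    # Latest known status for each directory.
    entries: dict[str, Status] = field(default_factory=dict)
    # Every status change, in the order it was recorded.
    history: list[tuple[str, Status]] = field(default_factory=list)

    def record(self, directory: str, status: Status) -> None:
        self.entries[directory] = status
        self.history.append((directory, status))

    def summary(self) -> dict[str, int]:
        """Count directories by their latest status."""
        return dict(Counter(s.value for s in self.entries.values()))

    def open_corrections(self) -> list[str]:
        """Directories whose latest status is an unresolved correction request."""
        return sorted(
            d for d, s in self.entries.items() if s is Status.CORRECTION_REQUESTED
        )
```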

Step 5: run 72-hour QA pass

Check live profiles for:

- naming consistency,

- category accuracy,

- URL correctness,

- proof/asset completeness,

- duplicate listing risks.
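The 72-hour QA pass can be partly automated by comparing each live profile against the canonical profile pack from Step 1. A minimal sketch, assuming listing data has already been collected (by hand or by export) into the hypothetical `LiveListing` shape below. It only checks exact matches and treats two listings on the same directory as a duplicate risk, so judgment calls such as category accuracy on ambiguous taxonomies still need a human.

```python
from dataclasses import dataclass


@dataclass
class ProfilePack:
    name_variants: set[str]     # approved product naming variants
    categories: set[str]        # category and subcategory map
    destination_urls: set[str]  # destination URL map by intent
    required_assets: set[str]   # e.g. {"logo", "screenshot"}


@dataclass
class LiveListing:
    directory: str
    name: str
    category: str
    url: str
    assets: set[str]


def qa_issues(pack: ProfilePack, listings: list[LiveListing]) -> list[str]:
    """Return human-readable QA issues for a batch of live listings."""
    issues: list[str] = []
    seen_directories: set[str] = set()
    for listing in listings:
        where = listing.directory
        if listing.name not in pack.name_variants:
            issues.append(f"{where}: unapproved product name {listing.name!r}")
        if listing.category not in pack.categories:
            issues.append(f"{where}: category {listing.category!r} not in map")
        if listing.url not in pack.destination_urls:
            issues.append(f"{where}: URL {listing.url} not in destination map")
        missing = pack.required_assets - listing.assets
        if missing:
            issues.append(f"{where}: missing assets {sorted(missing)}")
        if where in seen_directories:
            issues.append(f"{where}: possible duplicate listing")
        seen_directories.add(where)
    return issues
```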

Step 6: run monthly maintenance loop

The monthly cycle should include:

- profile refresh,

- correction closure,

- outcome review,

- channel classification decisions.

This process keeps the directory program useful beyond initial publication.

What to Avoid When Choosing Directories

Mistake 1: using one giant list without scoring

Fix:

- apply RADAR-7,

- cap wave scope,

- expand only after measured results.

Mistake 2: treating all directories as equal

Fix:

- assign each platform a role (core/support/experiment),

- avoid adding channels that duplicate a role another channel already covers.

Mistake 3: skipping post-launch QA

Fix:

- set mandatory QA window after publish,

- block next wave until prior QA is clean.
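The gating rule can be stated as a single check. A minimal sketch, assuming the QA window is the 72 hours from Step 5 and that open QA issues are counted in the central ledger; the function name is hypothetical.

```python
from datetime import datetime, timedelta

QA_WINDOW = timedelta(hours=72)  # the 72-hour QA pass from Step 5


def next_wave_allowed(last_publish: datetime, now: datetime, open_qa_issues: int) -> bool:
    """Open the next wave only after the QA window has fully elapsed
    and every issue from the prior wave is closed."""
    return now - last_publish >= QA_WINDOW and open_qa_issues == 0
```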

Mistake 4: reporting only clicks

Fix:

- track referral quality and assisted conversion signals,

- remove channels with high noise and low contribution.

Mistake 5: no lifecycle governance

Fix:

- schedule recurring review cadence,

- enforce keep/stabilize/de-prioritize decisions.

KPI Board for Directory Quality Decisions

| KPI | Why it matters | Healthy signal | Risk signal |
| --- | --- | --- | --- |
| Listing integrity rate | profile consistency across channels | 95%+ on core set | growing mismatch trend |
| Correction closure speed | operational quality and accountability | stable cycle time | backlog age increasing |
| Referral quality | visitor relevance and engagement | improving engagement depth | high bounce/low intent |
| Assisted conversion trend | channel contribution to outcomes | stable assist contribution | flat or declining influence |
| Maintenance load ratio | scalability of operations | predictable team effort | rising effort with weak return |

These KPIs are stronger than raw listing counts and help protect portfolio quality over time.
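Two of these KPIs reduce to simple arithmetic. The sketch below assumes "listing integrity rate" means the share of live listings with no open QA issue, and reads "backlog age increasing" as the last three recorded median backlog ages rising strictly; both definitions, and the function names, are assumptions to adjust to your own reporting.

```python
def listing_integrity_rate(total_listings: int, listings_with_issues: int) -> float:
    """Share of live listings with no open QA issue (assumed definition)."""
    if total_listings <= 0:
        raise ValueError("need at least one listing")
    return (total_listings - listings_with_issues) / total_listings


def integrity_signal(rate: float, threshold: float = 0.95) -> str:
    """'healthy' at or above the 95% core-set target, otherwise 'risk'."""
    return "healthy" if rate >= threshold else "risk"


def backlog_aging(median_ages_days: list[float]) -> bool:
    """True when the last three recorded median backlog ages are strictly
    increasing (a hypothetical reading of 'backlog age increasing')."""
    recent = median_ages_days[-3:]
    return len(recent) == 3 and recent[0] < recent[1] < recent[2]
```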

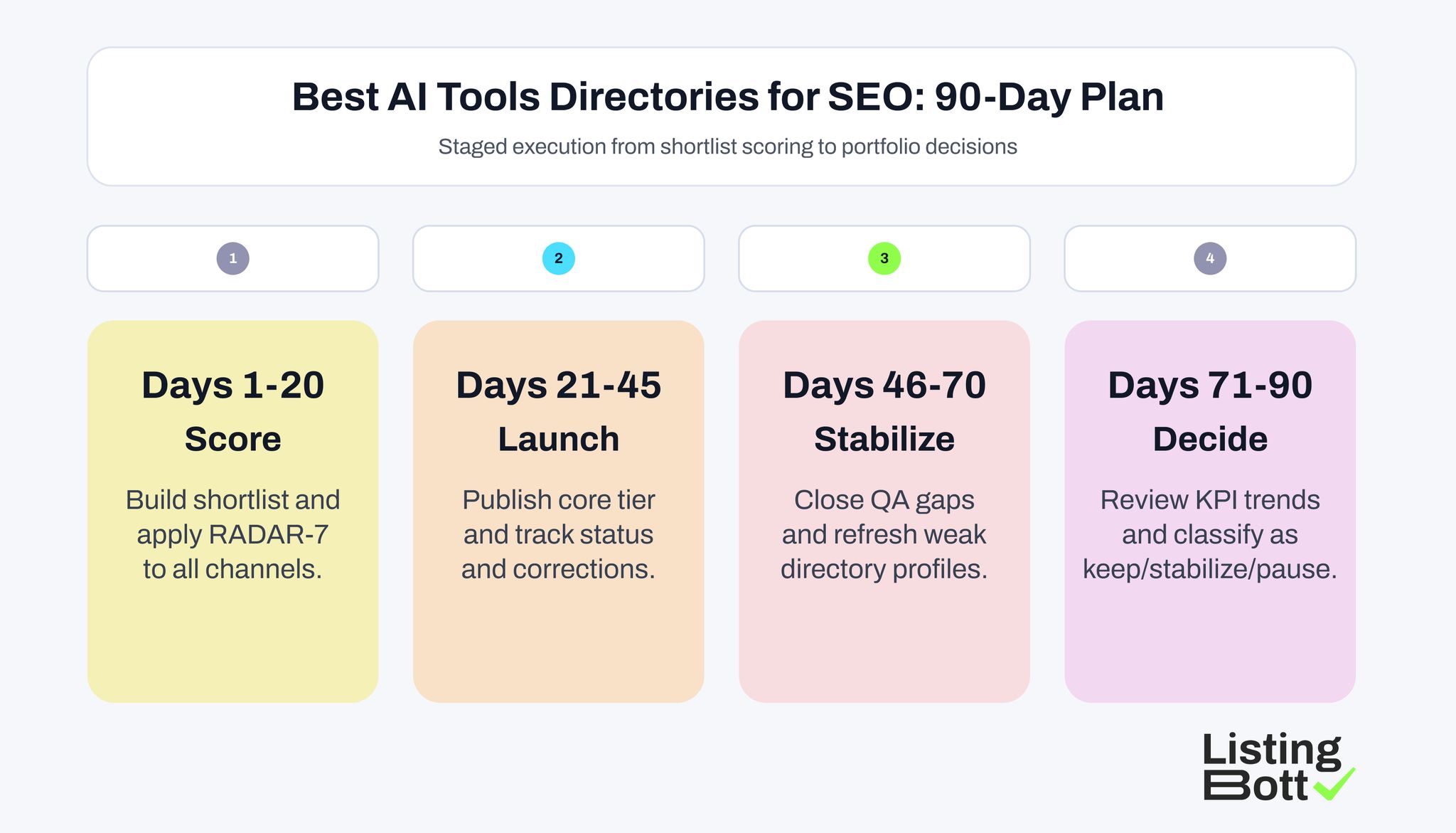

90-Day Execution Plan

Best AI Tools Directories for SEO: 90-Day Plan

Days 1-20: shortlist and scoring

- finalize profile pack,

- score candidate directories,

- lock core/support/experiment tiers.

Days 21-45: first-wave publishing

- publish core set,

- track approvals/rejections,

- run initial QA and corrections.

Days 46-70: stabilization

- close correction backlog,

- refresh weak listings,

- validate profile consistency trends.

Days 71-90: performance decisions

- review KPI board,

- classify channels by value,

- open next wave only if quality remains stable.
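If the plan lives in a tracker, a small lookup keeps everyone on the same phase. A minimal sketch that restates the day ranges above; `PHASES` and `phase_for_day` are hypothetical names, not part of any tool.

```python
# (first day, last day, phase name), restated from the 90-day plan.
PHASES = [
    (1, 20, "shortlist and scoring"),
    (21, 45, "first-wave publishing"),
    (46, 70, "stabilization"),
    (71, 90, "performance decisions"),
]


def phase_for_day(day: int) -> str:
    """Return the plan phase for a given day (1-90)."""
    for start, end, name in PHASES:
        if start <= day <= end:
            return name
    raise ValueError(f"day {day} is outside the 90-day plan")
```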

Landing-Page Mapping for Directory Traffic Quality

Many teams lose value after directory publication because all channels point to the same homepage URL. For an AI tools directory program, this is usually a missed opportunity.

Use intent-based destination mapping:

- category-discovery directories: link to a category or use-case page,

- comparison-intent directories: link to a feature comparison or alternatives page,

- conversion-ready directories: link to a clear product page with proof and pricing context.

When you run this mapping, track quality by destination type instead of only by directory name. This gives you clearer optimization actions:

- if category pages get strong engagement but low trial starts, improve CTA flow and proof blocks,

- if product pages get low engagement, improve message-match between directory snippet and page headline,

- if comparison pages get good engagement and assisted conversions, expand similar directory channels.

A practical route is to keep one destination matrix in your operations sheet:

- directory name,

- audience intent tag,

- destination URL,

- expected user action,

- 30-day quality signal.

This structure reduces ad hoc linking decisions and makes channel quality easier to compare. It also helps teams running mixed stacks, such as agency SEO tools, local SEO tools, and custom reporting dashboards, align directory activity with broader conversion goals.
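Tracking quality by destination type amounts to grouping the matrix rows by intent tag before comparing them. A minimal sketch, assuming the 30-day quality signal is an engaged-session rate built from hypothetical `sessions_30d` and `engaged_sessions_30d` columns; substitute whatever engagement metric your analytics setup reports.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class MatrixRow:
    directory: str
    intent_tag: str            # e.g. "category", "comparison" or "conversion"
    destination_url: str
    expected_action: str
    sessions_30d: int          # hypothetical 30-day inputs
    engaged_sessions_30d: int


def engagement_by_intent(rows: list[MatrixRow]) -> dict[str, float]:
    """Pool sessions per intent tag and return the engaged-session rate."""
    totals: dict[str, list[int]] = defaultdict(lambda: [0, 0])  # [engaged, sessions]
    for row in rows:
        totals[row.intent_tag][0] += row.engaged_sessions_30d
        totals[row.intent_tag][1] += row.sessions_30d
    return {
        intent: engaged / sessions
        for intent, (engaged, sessions) in totals.items()
        if sessions > 0
    }
```

Pooling sessions before dividing weights each directory by its traffic, so one low-volume channel cannot swing the rate for its destination type.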

How this Connects to Broader SEO Operations

For organizations already running agency SEO tools and processes, directories should be treated as one layer in a broader SEO stack, not the only growth channel.

A balanced SEO operating model often combines:

- technical/on-site SEO foundations,

- editorial content and comparison coverage,

- external profile and citation consistency,

- performance-led channel pruning.

Teams using local SEO tools, or workflows such as Pleper's local SEO tools for geographic checks, can add consistency safeguards where local visibility matters, but these checks should support strategy, not replace it.

Review Rhythm for AI-directory Channel Decisions

A practical review rhythm keeps channel decisions objective:

- weekly checks during the first launch month,

- biweekly checks once correction load is stable,

- monthly keep-or-prune decisions based on quality and contribution signals.

This cadence prevents teams from expanding too quickly after one positive week. It also helps protect editorial quality in profiles, which is often where long-term performance gains are won or lost.
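The cadence can be written down as a rule so that nobody shortens or stretches it after one good or bad week. A minimal sketch, assuming "first launch month" means the first 30 days and that checks stay weekly while correction load is still unstable; both are assumptions, and the function names are hypothetical.

```python
def check_interval_days(days_since_launch: int, correction_load_stable: bool) -> int:
    """Days until the next channel check: weekly during the first month
    (and, by assumption, while corrections are unstable), then biweekly."""
    if days_since_launch < 30 or not correction_load_stable:
        return 7
    return 14


def prune_decision_due(days_since_last_decision: int) -> bool:
    """Keep-or-prune decisions run on a monthly (30-day) cycle."""
    return days_since_last_decision >= 30
```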

Where ListingBott Fits

ListingBott is a tool for running structured directory submissions and reporting on the results.

Typical process:

- complete onboarding form,

- review and approve listing selection,

- publication runs,

- receive report with status and next steps.

Offer alignment:

- one-time payment model,

- publication to 100+ directories,

- no hidden extra fees,

- refund possible if process has not started.

Promise limits:

- no guaranteed ranking position,

- no guaranteed traffic by a specific date,

- no guaranteed indexing speed,

- no guaranteed outcomes controlled by third-party platforms.

Qualified DR (Domain Rating) statement: growth to DR 15 is promised only when the starting DR is below 15, the selected goal is domain growth, and the approved directory list is in place.

FAQ: Best AI Tools Directories for SEO

What are the best AI tools directories for SEO in practice?

The best options are directories with strong category relevance, reliable profile depth, and manageable maintenance that can produce measurable discovery and assist value.

How many AI directories should we start with?

Most teams should start with 5-8 high-fit channels in a controlled first wave, then scale only after QA and performance review.

Should we submit to every AI directory list we find?

No. Use list resources for research, then score channels and prioritize quality over volume.

Can agencies manage larger AI directory portfolios safely?

Yes, but only with strict governance: channel scoring, ownership rules, recurring QA, and clear keep/de-prioritize policies.

Are directory listings enough for SEO growth on their own?

No. They should support a broader SEO system that includes technical, content, and conversion-focused work.