Table of Contents

- Why this Matters in 2026

- Step-by-Step Implementation Checklist

- Common Mistakes and Fixes

- 90-Day Rollout Plan

- FAQ


Quick Answer

AI tools directory listing services work best when teams optimize for qualified discovery and profile quality, not for maximum submission volume.

A practical model is:

- choose directories by audience and category fit,

- publish high-depth profiles with clear use cases,

- run post-launch quality checks,

- keep only channels with measurable contribution.

Most teams underperform because they scale too fast across mixed-quality directories, then spend months fixing profile drift and low-intent traffic.

Why this Matters in 2026

AI directory ecosystems expanded quickly, but not all growth produced quality. Some platforms are strong discovery channels with relevant buyers. Others are mostly index pages with weak intent and low conversion value.

In 2026, listing strategy affects three core outcomes:

- buyer discovery quality: whether the right users see your tool in relevant categories,

- trust consistency: whether your tool profile is complete, current, and credible,

- entity clarity for search and AI engines: whether external references consistently describe your product.

For teams comparing AI tools directory listing services, the key question is not "how many directories can we publish to?" but "which directories create durable, measurable value with manageable maintenance?"

What Good Service Quality Looks Like

Strong listing execution is operational, not cosmetic. A good service or workflow should provide:

- structured platform selection criteria,

- profile readiness and taxonomy mapping,

- controlled launch waves,

- correction loops and monthly QA,

- performance visibility that supports keep/pause decisions.

Weak execution usually looks like:

- mass submission without fit scoring,

- thin profile content copied everywhere,

- no ownership after publish,

- no correction SLA,

- no evidence of referral quality.

If you buy volume without quality control, you get noisy listings rather than a reliable demand-support channel.

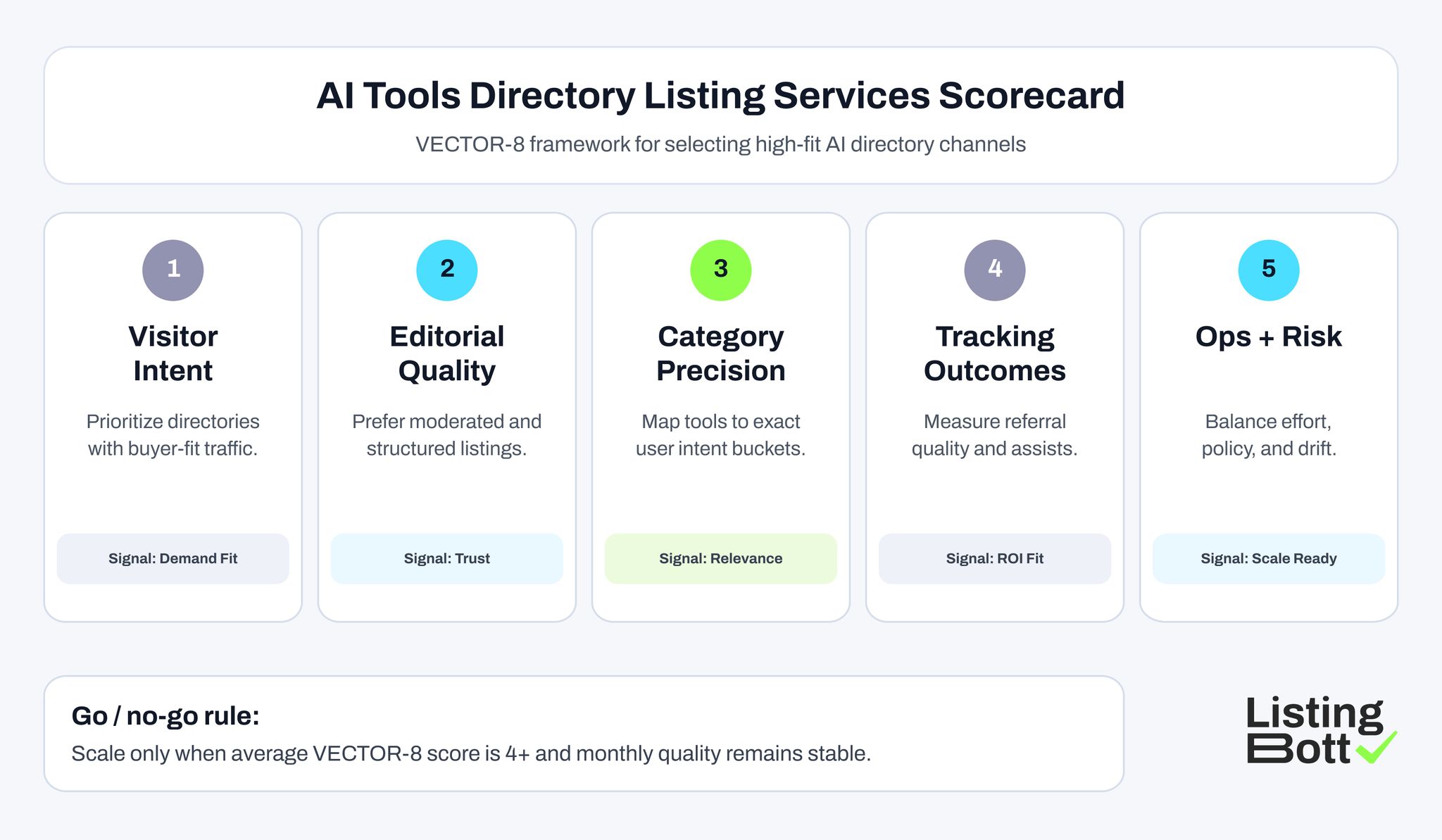

The VECTOR-8 Framework for AI Directory Decisions

Use this framework before approving any directory in your listing program.

| Dimension | Decision question | Why it matters | Score (1-5) |
| --- | --- | --- | --- |
| Visitor intent | Do users on this platform match your ICP and buying stage? | Filters low-intent visibility | 1-5 |
| Editorial standards | Is there meaningful moderation and category quality? | Reduces low-trust exposure | 1-5 |
| Taxonomy precision | Can your tool be mapped to accurate categories/use cases? | Improves relevance and conversion quality | 1-5 |
| Content depth | Can you publish rich profile content (features, proof, positioning)? | Supports conversion readiness | 1-5 |
| Update control | Can your team update profile data quickly after changes? | Prevents profile drift | 1-5 |
| Referral quality | Is traffic from this channel useful, not just high-volume? | Prioritizes qualified discovery | 1-5 |
| Operational cost | Is maintenance effort proportional to expected value? | Keeps program sustainable | 1-5 |
| Risk profile | Are spam, duplication, or policy-friction risks manageable? | Protects long-term portfolio health | 1-5 |

Scoring thresholds:

- 32-40: core portfolio channels

- 24-31: controlled test channels

- below 24: de-prioritize unless a strategic reason exists

This framework helps teams evaluate AI tools directory listing services on outcomes, not marketing promises.
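The scoring thresholds can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed implementation: the field names in `DIMENSIONS` and the tier labels are hypothetical, and it assumes every dimension is scored so that a higher number is better (for example, a 5 on operational cost means effort is well proportioned to value).

```python
# Hypothetical field names for the eight VECTOR-8 dimensions.
# Assumption: a higher score is always better, including for cost and risk.
DIMENSIONS = (
    "visitor_intent",
    "editorial_standards",
    "taxonomy_precision",
    "content_depth",
    "update_control",
    "referral_quality",
    "operational_cost",
    "risk_profile",
)


def vector8_total(scores: dict) -> int:
    """Sum the eight 1-5 dimension scores after validating the input."""
    missing = [name for name in DIMENSIONS if name not in scores]
    if missing:
        raise ValueError(f"missing dimensions: {missing}")
    for name in DIMENSIONS:
        if not 1 <= scores[name] <= 5:
            raise ValueError(f"{name} must be between 1 and 5")
    return sum(scores[name] for name in DIMENSIONS)


def vector8_tier(total: int) -> str:
    """Map a total (8-40) to the tiers defined by the scoring thresholds."""
    if total >= 32:
        return "core"
    if total >= 24:
        return "test"
    return "de-prioritize"


# Example: a directory that scores 4 on every dimension totals 32.
example_scores = dict.fromkeys(DIMENSIONS, 4)
example_tier = vector8_tier(vector8_total(example_scores))
```

Keeping the total and the tier as separate functions makes it easy to store raw scores in a spreadsheet and change the thresholds later without rescoring.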

AI Tools Directory Listing Services Scorecard

Best-Fit Listing Platforms for AI Tools Directory Listing Services

Use this table as a selection reference. It is a fit-based shortlist, not a submit-everywhere instruction.

| Platform | URL | Why this is a fit | Ideal company profile | Submission note |
| --- | --- | --- | --- | --- |
| RankMyAI (rankings) | https://www.rankmyai.com/rankings/top-100-ai-tools-directories-overall | Useful benchmark for directory discovery and competitive landscape mapping | AI tools evaluating channel opportunities | Use as research input before choosing launch channels |
| AI Tools Directory | https://aitoolsdirectory.com/ | Dedicated AI-tool discovery context with category browsing behavior | AI SaaS with clear feature-to-use-case mapping | Keep category and positioning copy precise |
| Best AI Tools | https://www.bestaitools.com/ | Established AI-tools listing ecosystem with active discovery intent | B2B and prosumer AI products | Ensure profile highlights concrete outcomes, not generic claims |
| Best AI Agents Directory | https://www.bestaiagents.directory/ | Focused environment for agent-oriented products and workflows | AI agent builders and automation products | Match listing language to agent-specific user intent |
| AI Agents Directory | https://ai-agents-directory.com/ | Additional agent-centric citation/discovery layer | New and growth-stage AI agent tools | Validate policy and update process before scaling |
| Automation Tools Directory | https://www.automationtools.directory/ | Relevant for workflow automation and ops-focused AI products | Automation and productivity AI vendors | Route traffic to intent-matched landing pages |
| NEEED Directory | https://neeed.directory/ | Broad product discovery environment with tool-category exposure | Product-led teams testing discovery channels | Treat as test/support tier until quality is validated |
| DirectoryFame | https://directoryfame.com/ | Supplemental listing channel for diversified profile footprint | Teams expanding support-layer citations | Use only with strong QA and correction governance |
| HyzenPro AI Tools Directory | https://hyzenpro.com/ai-tools-directory/ | Niche AI directory context for additional visibility experiments | Early-stage AI products exploring new channels | Keep it in controlled pilot tier initially |

How to build your first launch wave

A practical first wave for most teams:

- 4 core channels with high VECTOR-8 scores,

- 2 support channels for citation/trust diversification,

- 1-2 experimental channels with strict review gates.

This keeps expansion disciplined while still testing upside opportunities.
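One way to enforce the wave limits above is to cap each role when building the launch list. The sketch below is illustrative only: the `Candidate` type, the role labels, and the rule that core channels need a total of at least 32 are assumptions layered on the VECTOR-8 thresholds, and the team is assumed to assign each directory's role by hand.

```python
from typing import NamedTuple


class Candidate(NamedTuple):
    name: str
    total: int  # VECTOR-8 total, 8-40
    role: str   # "core", "support", or "experimental", assigned by the team


# Upper bounds taken from the first-wave guidance: 4 core, 2 support, 1-2 experimental.
WAVE_LIMITS = {"core": 4, "support": 2, "experimental": 2}
CORE_MINIMUM = 32  # assumption: core channels must reach the core-portfolio threshold


def build_first_wave(candidates: list) -> dict:
    """Pick the highest-scoring candidates per role, capped at the wave limits."""
    wave = {}
    for role, limit in WAVE_LIMITS.items():
        pool = [c for c in candidates if c.role == role]
        if role == "core":
            pool = [c for c in pool if c.total >= CORE_MINIMUM]
        pool.sort(key=lambda c: c.total, reverse=True)
        wave[role] = [c.name for c in pool[:limit]]
    return wave
```

A directory that is labelled core but scores below 32 is simply left out of the wave, which forces a conversation about whether it belongs in the support or experimental tier instead.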

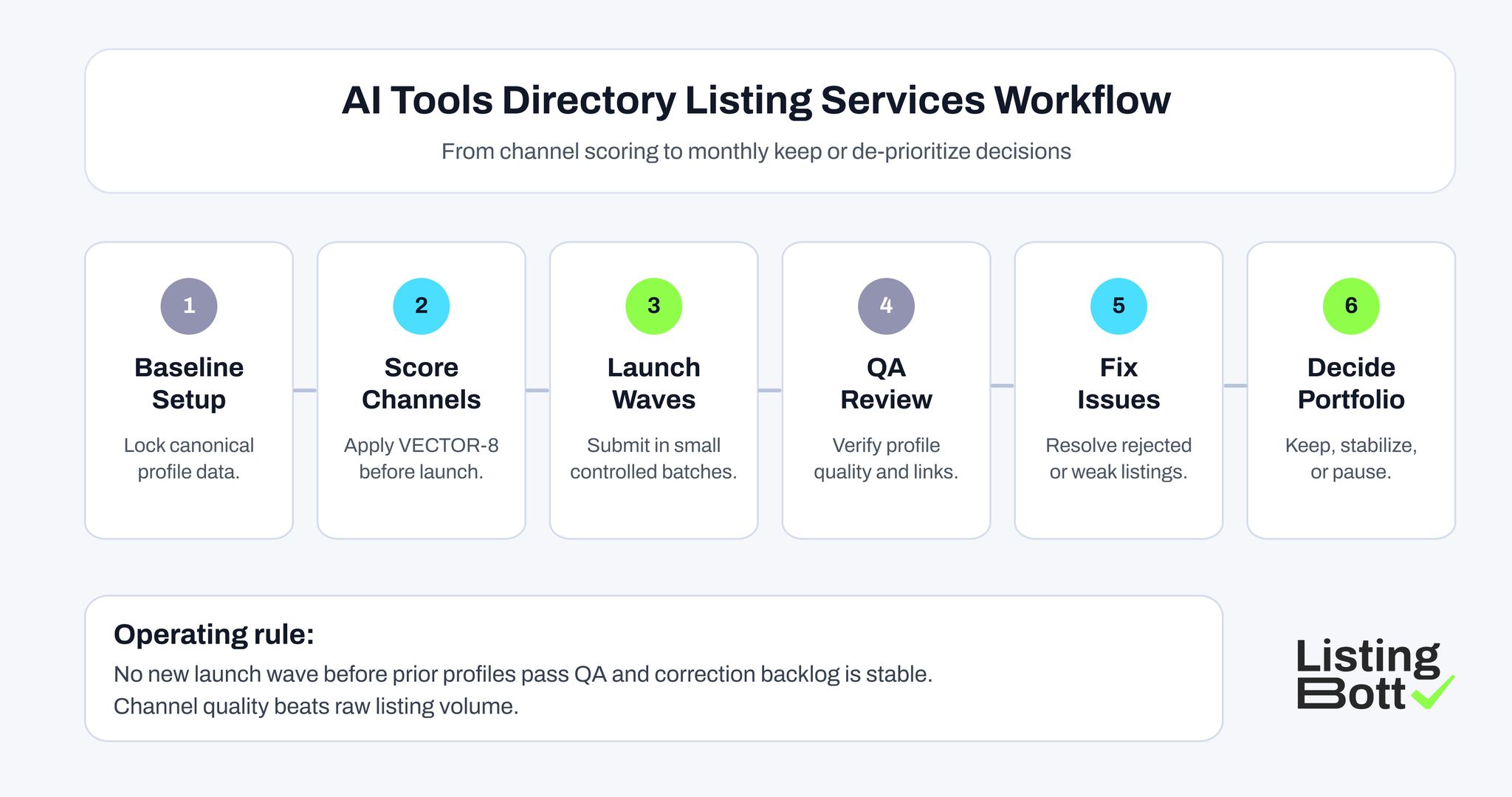

AI Tools Directory Listing Service Workflow

Step-by-Step Implementation Checklist

Step 1: define one canonical profile baseline

Create a single source that includes:

- product name and approved variants,

- one-sentence value proposition,

- category and use-case map,

- feature proof blocks,

- destination URL map by intent,

- media assets and screenshot policy.

This baseline prevents fragmentation across listings.
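As a rough sketch, the baseline can live in one structured record that every submission reads from. The class and field names below are hypothetical, and the `"default"` key in the URL map is an assumed convention for intents that have no dedicated landing page.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProfileBaseline:
    """Single source of truth for listing content (illustrative field names)."""
    product_name: str
    approved_variants: tuple
    value_proposition: str      # one sentence
    categories: tuple
    use_cases: tuple
    feature_proofs: tuple
    destination_urls: dict      # intent -> URL; must contain a "default" entry
    media_assets: tuple = ()

    def accepted_names(self) -> set:
        """Every spelling of the product name that a listing may use."""
        return {self.product_name, *self.approved_variants}

    def url_for(self, intent: str) -> str:
        """Return the landing page for an intent, falling back to the default."""
        return self.destination_urls.get(intent, self.destination_urls["default"])
```

Marking the record as frozen is a deliberate choice: changes to the baseline should happen in one reviewed place, not as ad hoc edits during a submission.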

Step 2: map categories before submission

For each platform, map your tool to categories using buyer language. If category fit is weak, do not submit yet. Wrong taxonomy is one of the biggest quality failures in AI directories.

Step 3: assign owner roles

Set explicit responsibility:

- launch owner (submission operations),

- profile owner (content quality),

- QA owner (post-launch verification),

- analytics owner (performance review).

Without named owners, listings decay quickly.

Step 4: launch in controlled batches

Avoid one-time bulk submission. Publish in waves and log:

- submission date,

- profile URL,

- status (pending/live/rejected),

- correction requests,

- final publish confirmation.
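The log fields listed above map naturally onto a small record type. This is a sketch under assumptions: the names `SubmissionRecord`, `Status`, and `needs_follow_up` are invented for illustration, and the follow-up rule (anything not live, not confirmed, or carrying open corrections) is one reasonable reading of the checklist rather than a fixed standard.

```python
from dataclasses import dataclass, field
from datetime import date
from enum import Enum
from typing import Optional


class Status(Enum):
    PENDING = "pending"
    LIVE = "live"
    REJECTED = "rejected"


@dataclass
class SubmissionRecord:
    directory: str
    submitted_on: date
    profile_url: Optional[str] = None
    status: Status = Status.PENDING
    correction_requests: list = field(default_factory=list)  # open items only
    publish_confirmed_on: Optional[date] = None


def needs_follow_up(record: SubmissionRecord) -> bool:
    """True while a record is not live, not confirmed, or has open corrections."""
    return (
        record.status is not Status.LIVE
        or record.publish_confirmed_on is None
        or bool(record.correction_requests)
    )
```

Filtering the log with `needs_follow_up` before each new wave gives a quick view of what still has to be closed.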

Step 5: run 72-hour post-launch QA

Check each live profile for:

- correct product name and category,

- accurate links,

- complete feature and proof text,

- visual consistency,

- duplicate listing risk.
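Most of these checks can be expressed as a comparison between the expected values and what the live page shows. The function below is a hedged sketch: the dictionary keys, the `required_phrases` idea, and the duplicate heuristic (the same product name appearing more than once in a directory search) are all assumptions, and visual consistency still needs a human look.

```python
def qa_issues(expected: dict, live: dict, names_in_directory: list) -> list:
    """Return human-readable QA findings for one live listing.

    expected: values from the canonical baseline (name, category, url, required_phrases)
    live: values copied or scraped from the published profile
    names_in_directory: product names returned by a search on the same directory
    """
    issues = []
    if live.get("name") != expected["name"]:
        issues.append("product name mismatch")
    if live.get("category") != expected["category"]:
        issues.append("category mismatch")
    if live.get("url") != expected["url"]:
        issues.append("destination link mismatch")

    # Feature and proof text: every required phrase should appear in the description.
    description = live.get("description", "").lower()
    for phrase in expected["required_phrases"]:
        if phrase.lower() not in description:
            issues.append(f"missing proof text: {phrase}")

    # Duplicate risk: more than one listing under the same name.
    target = expected["name"].casefold()
    if sum(1 for n in names_in_directory if n.casefold() == target) > 1:
        issues.append("possible duplicate listing")
    return issues
```

An empty list means the listing passed the automated part of the 72-hour check; any findings can go straight into the submission log as correction requests.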

Step 6: run monthly maintenance cycles

Monthly operations should include:

- profile refresh after product updates,

- correction closure,

- referral-quality review,

- keep/stabilize/de-prioritize tagging.

This is where the long-term value of AI tools directory listing services is created.

How to Evaluate Providers before You Buy

Use this buyer checklist when comparing vendors or internal workflows.

Selection quality

- Do they explain platform-selection methodology clearly?

- Do they use fit scoring or just raw volume promises?

- Can they justify why each directory belongs in your portfolio?

Execution quality

- Do they build from canonical baseline inputs?

- Do they provide submission logs and status visibility?

- Do they include post-launch QA and correction handling?

Governance quality

- Are ownership, timelines, and escalation paths defined?

- Is there a recurring maintenance cycle?

- Is there a clear removal/de-prioritization rule for weak channels?

Measurement quality

- Can they show referral quality, not only click counts?

- Do they track assisted conversions and downstream impact?

- Do reports support decision-making instead of vanity metrics?

A provider that cannot answer these clearly is likely selling activity, not outcomes.

Commercial Fit for Agencies and Multi-client Teams

Teams running agency workflows often use SEO tools for agencies, white-label SEO tools, and custom SEO dashboard stacks to centralize reporting. That can help operations, but it does not replace channel-fit logic.

The practical sequence is:

- use your tool stack for visibility,

- use VECTOR-8 for channel decisions,

- use QA and correction governance to preserve quality.

Even teams using the best SEO tools for agencies still need a disciplined listing policy. Platform quality is a strategic decision, not a dashboard setting.

For hybrid local motions, some organizations also combine AI-directory monitoring with local SEO tools, including workflows similar to PlePer's local SEO tools, when they need map/citation consistency checks across local surfaces.

Common Mistakes and Fixes

Mistake 1: submitting to every AI directory found in listicles

Fix:

- score platforms first,

- limit first wave size,

- expand only after evidence review.

Mistake 2: using the same generic description everywhere

Fix:

- keep canonical core data,

- adapt by platform category context and buyer intent.

Mistake 3: no post-launch verification

Fix:

- run fixed QA windows,

- track and close corrections before next wave.

Mistake 4: prioritizing click volume over quality

Fix:

- use referral-quality and assist metrics,

- de-prioritize channels with weak downstream value.

Mistake 5: no removal rules

Fix:

- define keep/stabilize/de-prioritize criteria upfront,

- avoid portfolio bloat.

KPI Board for AI Directory Programs

| KPI | What to measure | Healthy signal | Risk signal |
| --- | --- | --- | --- |
| Approval quality | % submissions published with minimal edits | stable acceptance | repeated rejection loops |
| Profile integrity | % listings with accurate core fields | 95%+ on core set | rising inconsistency rate |
| Referral quality | engagement quality by directory source | stable depth/relevance | low-intent traffic spikes |
| Assist impact | assisted conversion contribution | measurable support trend | flat influence over cycles |
| Maintenance load | effort per active listing channel | predictable monthly effort | rising effort with weak return |

This KPI set keeps commercial decisions grounded and prevents vanity reporting.
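To turn the KPI board into keep/stabilize/de-prioritize tags, a team needs explicit rules. The sketch below shows one possible rule set. Only the 95% profile-integrity bar comes from the table; the `ChannelKpis` fields, the minimum assisted conversions, and the monthly hours ceiling are placeholder assumptions that each team should replace with its own values.

```python
from dataclasses import dataclass


@dataclass
class ChannelKpis:
    name: str
    core_fields_checked: int
    core_fields_accurate: int
    assisted_conversions: int   # within the review period
    maintenance_hours: float    # effort spent on this channel in the period


def profile_integrity(kpis: ChannelKpis) -> float:
    """Percentage of checked core fields that are accurate."""
    if kpis.core_fields_checked == 0:
        return 0.0
    return 100.0 * kpis.core_fields_accurate / kpis.core_fields_checked


def tag_channel(kpis: ChannelKpis, min_assists: int = 1, max_hours: float = 4.0) -> str:
    """Tag a channel using illustrative thresholds (min_assists and max_hours are assumptions)."""
    integrity_ok = profile_integrity(kpis) >= 95.0
    value_ok = kpis.assisted_conversions >= min_assists
    effort_ok = kpis.maintenance_hours <= max_hours
    if integrity_ok and value_ok and effort_ok:
        return "keep"
    if value_ok:
        # The channel contributes, but profile quality or effort needs work first.
        return "stabilize"
    return "de-prioritize"
```

The ordering matters: a channel with no measurable contribution is de-prioritized even if its profile is perfect, which matches the emphasis on downstream value over tidy listings.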

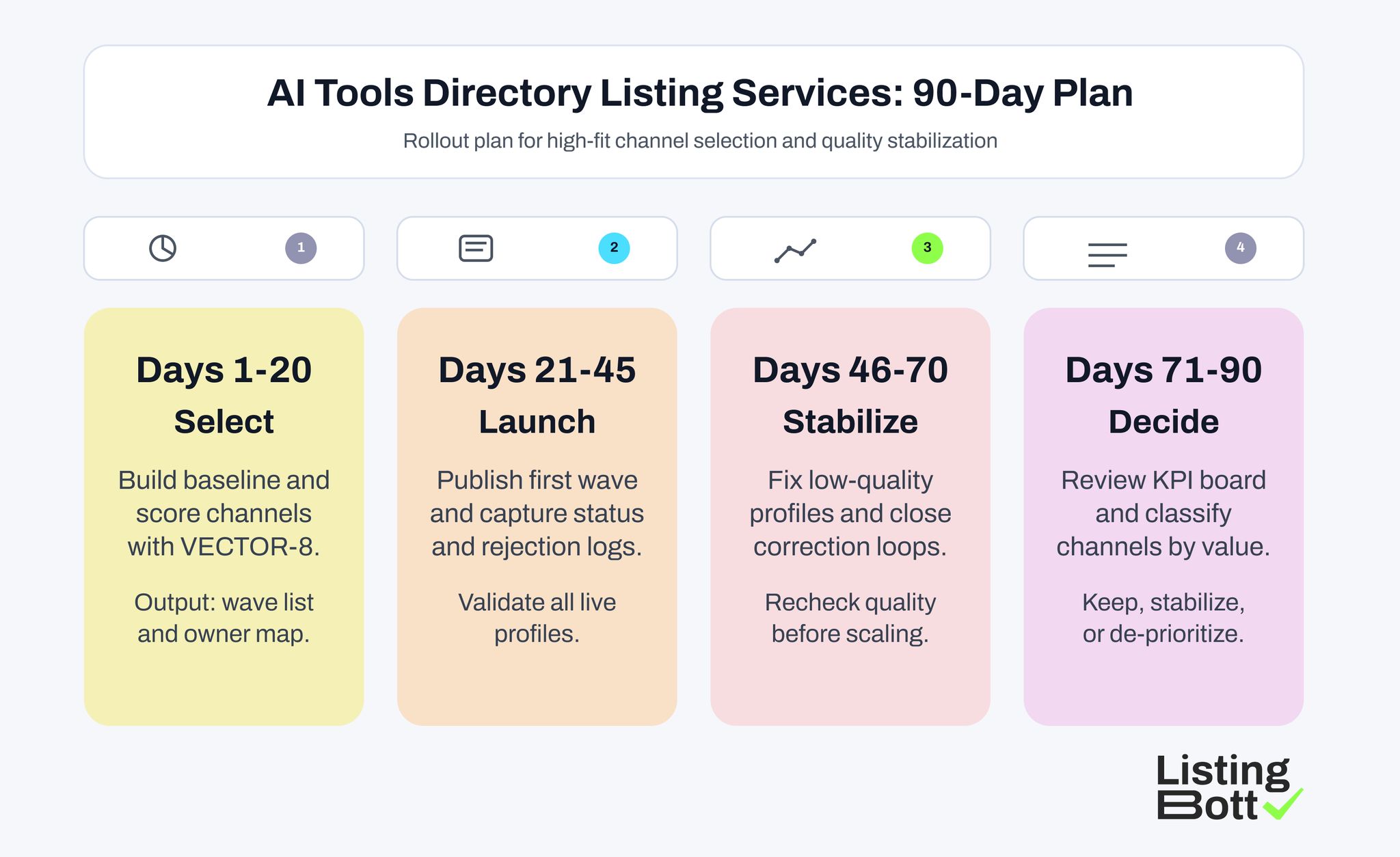

90-Day Rollout Plan

AI Tools Directory Listing Service: 90-Day Plan

Days 1-20: baseline and selection

- finalize canonical profile baseline,

- score channels with VECTOR-8,

- lock first-wave directory set.

Days 21-45: controlled launch

- publish first-wave listings,

- capture status and rejection logs,

- validate live profiles.

Days 46-70: stabilization cycle

- resolve correction backlog,

- refresh weak profiles,

- recheck category and destination accuracy.

Days 71-90: portfolio decisions

- review KPI board,

- tag channels (keep/stabilize/de-prioritize),

- open next wave only when quality is stable.
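For teams that track the rollout in a script or dashboard, the four phases reduce to a simple day-range lookup. This is a minimal sketch; the function names are hypothetical and the program start date is counted as day 1.

```python
from datetime import date

# (first day, last day, phase name) taken from the 90-day plan.
PHASES = (
    (1, 20, "baseline and selection"),
    (21, 45, "controlled launch"),
    (46, 70, "stabilization cycle"),
    (71, 90, "portfolio decisions"),
)


def phase_for_day(day: int) -> str:
    """Return the rollout phase for a program day between 1 and 90."""
    for start, end, name in PHASES:
        if start <= day <= end:
            return name
    raise ValueError("day must be between 1 and 90")


def phase_for_date(program_start: date, today: date) -> str:
    """Convert a calendar date to a program day (the start date is day 1)."""
    return phase_for_day((today - program_start).days + 1)
```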

Buyer-side Red Flags before Selecting a Directory Service

When evaluating AI tools directory listing services, watch for these red flags:

- no explanation of how platforms are prioritized by intent,

- no correction workflow after initial publication,

- no channel-level performance view in reporting,

- heavy promises without clear quality controls.

A strong provider should explain not only where your tool will be listed, but how listing quality will be protected after launch. This is especially important for AI tools where positioning and category fit can change quickly as features evolve.

Where ListingBott Fits

ListingBott is a workflow tool for structured directory publication and reporting.

Typical process:

- complete onboarding form,

- review and approve listing selection,

- publication runs,

- receive report with status and next steps.

Offer alignment:

- one-time payment model,

- publication to 100+ directories,

- no hidden extra fees,

- refund possible if process has not started.

Promise limits:

- no guaranteed ranking position,

- no guaranteed traffic by a specific date,

- no guaranteed indexing speed,

- no guaranteed outcomes controlled by third-party platforms.

Qualified DR (Domain Rating) statement: DR growth to 15 is promised only when the starting DR is below 15, the selected goal is domain growth, and the approved directory list is in place.

FAQ: AI Tools Directory Listing Services

What are AI tools directory listing services in practice?

They are structured workflows that select, publish, and maintain AI-tool profiles across relevant directories with quality controls and measurable outcomes.

How many directories should AI tools start with?

Most teams should start with 6-8 high-fit channels in a controlled first wave, then expand only after QA and performance review.

Should we submit to every AI directory we can find?

No. Use fit scoring, maintenance capacity, and referral-quality evidence before adding channels.

Can agency stacks automate this completely?

Automation helps tracking and reporting, but strategic channel selection and profile-quality governance still require explicit operating rules.

What is the biggest risk in AI directory expansion?

The biggest risk is quality drift: outdated or inconsistent profiles across multiple platforms that reduce trust and waste maintenance effort.