Table of Contents

- Best-fit Listing Platforms for AI Tools Business Listing Sites

- Implementation Checklist from Launch to Stable Operations

- AI-specific Risk Controls

- Common Mistakes Teams Make

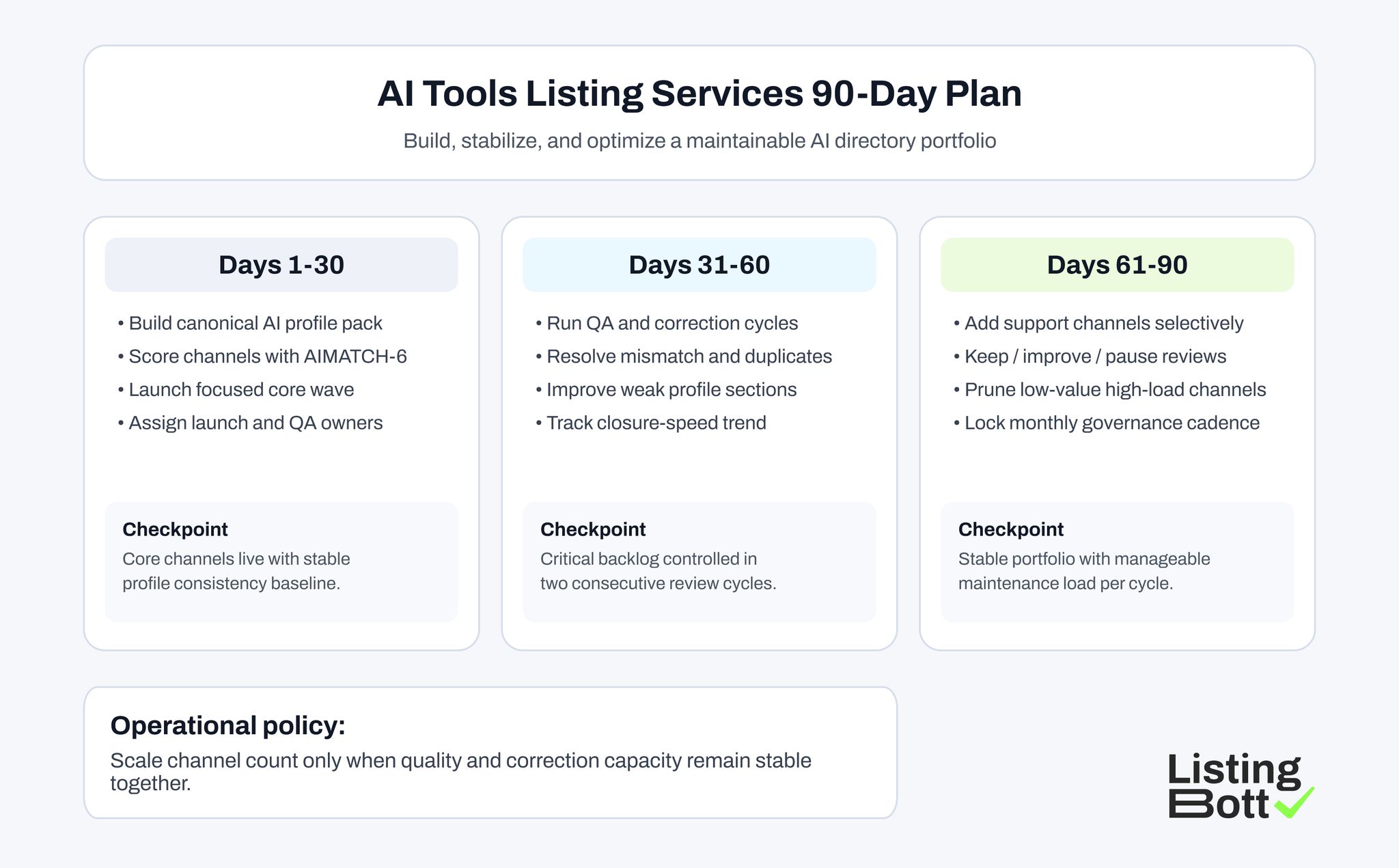

- 90-Day AI Listing Plan

- FAQ


Quick Answer

AI tools gain stronger long-term visibility when listing execution is selective and quality-controlled. The most effective teams publish to a focused set of high-fit AI and software directories, validate profile quality quickly, and expand only when maintenance capacity remains stable.

A practical 2026 sequence is:

- define one canonical product profile baseline,

- score candidate platforms for audience and intent fit,

- launch a controlled first wave,

- run recurring QA and correction cycles,

- keep, improve, or pause channels based on evidence.

This approach usually outperforms mass submission because AI-tool marketplaces change quickly and low-quality listings become stale fast.

Why AI Listing Strategy Changed in 2026

AI discovery behavior is fragmented. Users now evaluate products across AI-specific directories, launch communities, software comparison sites, and search-assisted summaries. That means listing quality matters at more stages than just initial discovery.

If profile data is inconsistent or weak, three problems appear quickly:

- lower trust during evaluation,

- poor intent match from directory traffic,

- rising maintenance workload from fragmented profiles.

This is why a large, static list of free listing sites is not enough. Teams need an operating process that keeps information accurate and messaging consistent as product positioning evolves.

For agencies managing multiple products, this ties directly into broader SEO tooling workflows for agencies. Directory execution only works when profile governance and measurement are part of the system.

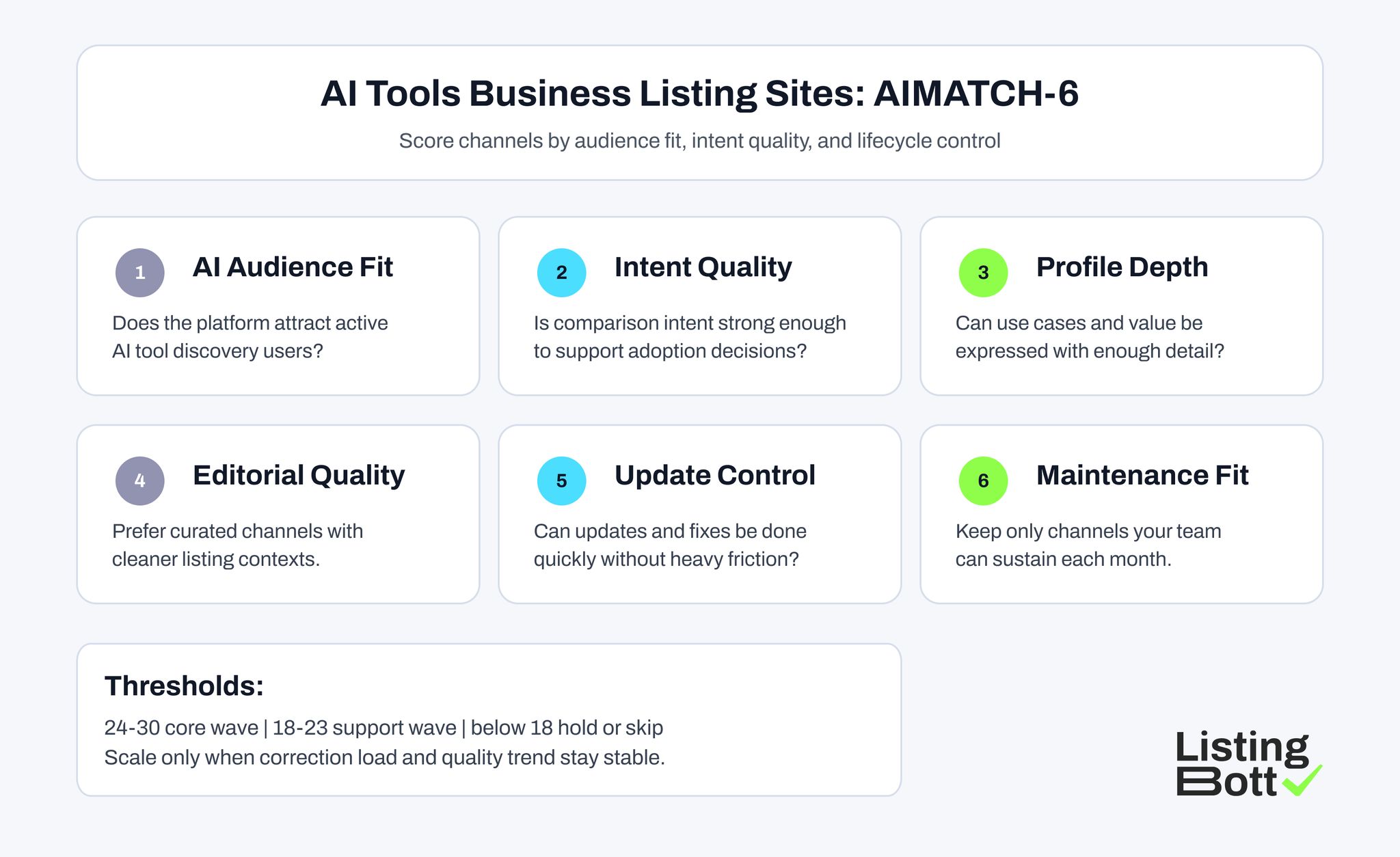

The AIMATCH-6 Framework for Selecting AI Listing Sites

Use this framework before adding any platform to the active portfolio.

| Factor | Practical question | Why it matters | Score (1-5) |
|---|---|---|---|
| AI audience fit | Does this platform attract users actively researching AI tools? | improves discovery relevance | 1-5 |
| Intent quality | Are users comparing tools with buying or adoption intent? | supports conversion quality | 1-5 |
| Profile depth | Can you clearly publish use cases, pricing context, and differentiators? | improves trust and qualification | 1-5 |
| Editorial cleanliness | Is the platform reasonably curated and not overloaded with low-quality entries? | protects listing quality context | 1-5 |
| Update control | Can your team update key fields and fix errors quickly? | reduces profile drift risk | 1-5 |
| Maintenance effort | Can this channel remain accurate without excessive monthly overhead? | keeps scaling sustainable | 1-5 |

Suggested thresholds:

- 24-30: core wave,

- 18-23: support wave,

- below 18: hold/skip.
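The scoring step can be sketched in a few lines of Python. This is a minimal sketch, assuming each factor is rated as an integer from 1 to 5 (for maintenance effort, a higher score means less upkeep) and the thresholds above are applied to the total. The factor keys and the `classify_platform` name are hypothetical.

```python
# Hypothetical keys mirroring the six AIMATCH-6 factors.
FACTORS = (
    "ai_audience_fit",
    "intent_quality",
    "profile_depth",
    "editorial_cleanliness",
    "update_control",
    "maintenance_effort",  # higher score = less monthly overhead
)


def classify_platform(scores: dict[str, int]) -> tuple[int, str]:
    """Sum the six 1-5 factor scores and map the total to a wave."""
    missing = [f for f in FACTORS if f not in scores]
    if missing:
        raise ValueError(f"missing factor scores: {missing}")
    for factor in FACTORS:
        if not 1 <= scores[factor] <= 5:
            raise ValueError(f"{factor} must be between 1 and 5")
    total = sum(scores[f] for f in FACTORS)
    if total >= 24:
        return total, "core"      # 24-30
    if total >= 18:
        return total, "support"   # 18-23
    return total, "hold"          # below 18


example = dict(zip(FACTORS, (5, 4, 4, 4, 3, 4)))
print(classify_platform(example))  # (24, 'core')
```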

This model gives teams a practical alternative to volume-first publishing and keeps submission-site decisions tied to business outcomes.

AI Tools Business Listing Sites: AIMATCH-6

Best-fit Listing Platforms for AI Tools Business Listing Sites

This list combines AI-native directories and high-intent software discovery platforms.

| Platform | URL | Why it is a best fit | Ideal company profile | Submission note |
|---|---|---|---|---|
| AI Tools Directory | https://aitoolsdirectory.com/ | Strong AI-focused discovery context and niche audience relevance | early and growth-stage AI products | keep category and use-case framing precise |
| There's An AI For That | https://theresanaiforthat.com/ | Broad AI tool exploration behavior and high category coverage | consumer and prosumer AI tools | clear one-line value statement is critical |
| Futurepedia | https://www.futurepedia.io/ | AI-native directory ecosystem with active product browsing | AI SaaS and workflow automation tools | maintain feature updates to avoid stale entries |
| Toolify | https://www.toolify.ai/ | AI tool listing environment with broad discovery reach | product-led AI startups | optimize profile depth and screenshots |
| TopAI.tools | https://topai.tools/ | AI-specific visibility layer with structured category discovery | AI products targeting broad user segments | keep tags and categories aligned with landing pages |
| RankMyAI | https://www.rankmyai.com/ | AI directory and ranking-oriented discovery signal | founders seeking AI-community visibility | focus on positioning clarity over hype copy |
| Product Hunt | https://www.producthunt.com/ | High launch visibility and user feedback ecosystem | newly launched AI tools and fast iterating products | prepare launch assets and proof before publish |
| G2 | https://www.g2.com/ | High-intent comparison behavior for software buyers | B2B AI tools with clear business use cases | category mapping and profile completeness are essential |
| Capterra | https://www.capterra.com/ | Mid-funnel software evaluation traffic with buying intent | AI tools solving business workflows | align profile claims with product pages |
| AlternativeTo | https://alternativeto.net/ | Useful discovery from users comparing alternatives | mature AI tools competing in known categories | maintain competitor positioning accuracy |

How to use this platform set:

- select 5-6 channels for the core wave,

- keep 3-5 channels as support candidates,

- expand only after quality signals remain stable.

Core Wave Design for AI Tools

A strong first wave usually includes:

- 2-3 AI-native directories,

- 1 launch community channel,

- 1-2 high-intent software comparison platforms.

This mix gives enough discovery diversity without immediate maintenance sprawl.
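To keep the first wave inside this mix, a simple composition check helps. This Python sketch assumes each selected platform has already been tagged with one of three hypothetical type labels (`ai_native`, `launch`, `comparison`); the allowed ranges come from the list above.

```python
from collections import Counter

# Allowed count ranges per platform type, taken from the first-wave mix.
WAVE_MIX = {
    "ai_native": (2, 3),   # AI-native directories
    "launch": (1, 1),      # launch community channel
    "comparison": (1, 2),  # high-intent software comparison platforms
}


def check_wave(platforms: dict[str, str]) -> list[str]:
    """Return a list of problems; an empty list means the mix fits."""
    counts = Counter(platforms.values())
    problems = []
    for kind, (low, high) in WAVE_MIX.items():
        n = counts.get(kind, 0)
        if not low <= n <= high:
            problems.append(f"{kind}: {n} selected, expected {low}-{high}")
    unknown = set(counts) - set(WAVE_MIX)
    if unknown:
        problems.append(f"unrecognized types: {sorted(unknown)}")
    return problems


wave = {
    "Futurepedia": "ai_native",
    "There's An AI For That": "ai_native",
    "Product Hunt": "launch",
    "G2": "comparison",
}
print(check_wave(wave))  # []
```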

Practical first-wave checklist:

- approve one canonical profile baseline,

- define audience segments and primary use-case messaging,

- map each platform to one destination page,

- assign ownership for launch and corrections,

- schedule a QA review window within 7-14 days.

If your distribution strategy also includes local business listing sites for region-specific offers, keep that as a separate controlled track so quality rules stay clear.

Launch Models: What Works for AI Teams

Teams publishing to AI tool listing sites generally operate in one of four modes.

| Model | Best for | Main risk | What to validate |
|---|---|---|---|
| Founder-led manual submissions | very early stage products | inconsistency during fast product changes | owner bandwidth and checklist discipline |
| Freelancer submission support | short launch bursts | variable standards and weak lifecycle control | QA routine and correction ownership |
| Agency-managed execution | teams outsourcing channel operations | provider quality varies widely | platform-fit method and reporting clarity |
| Tool-led controlled workflow | teams scaling repeatable listing operations | weak outcomes when governance is missing | baseline rules, QA cadence, accountability |

The winning model is usually the one that can maintain profile quality for months, not just launch quickly.

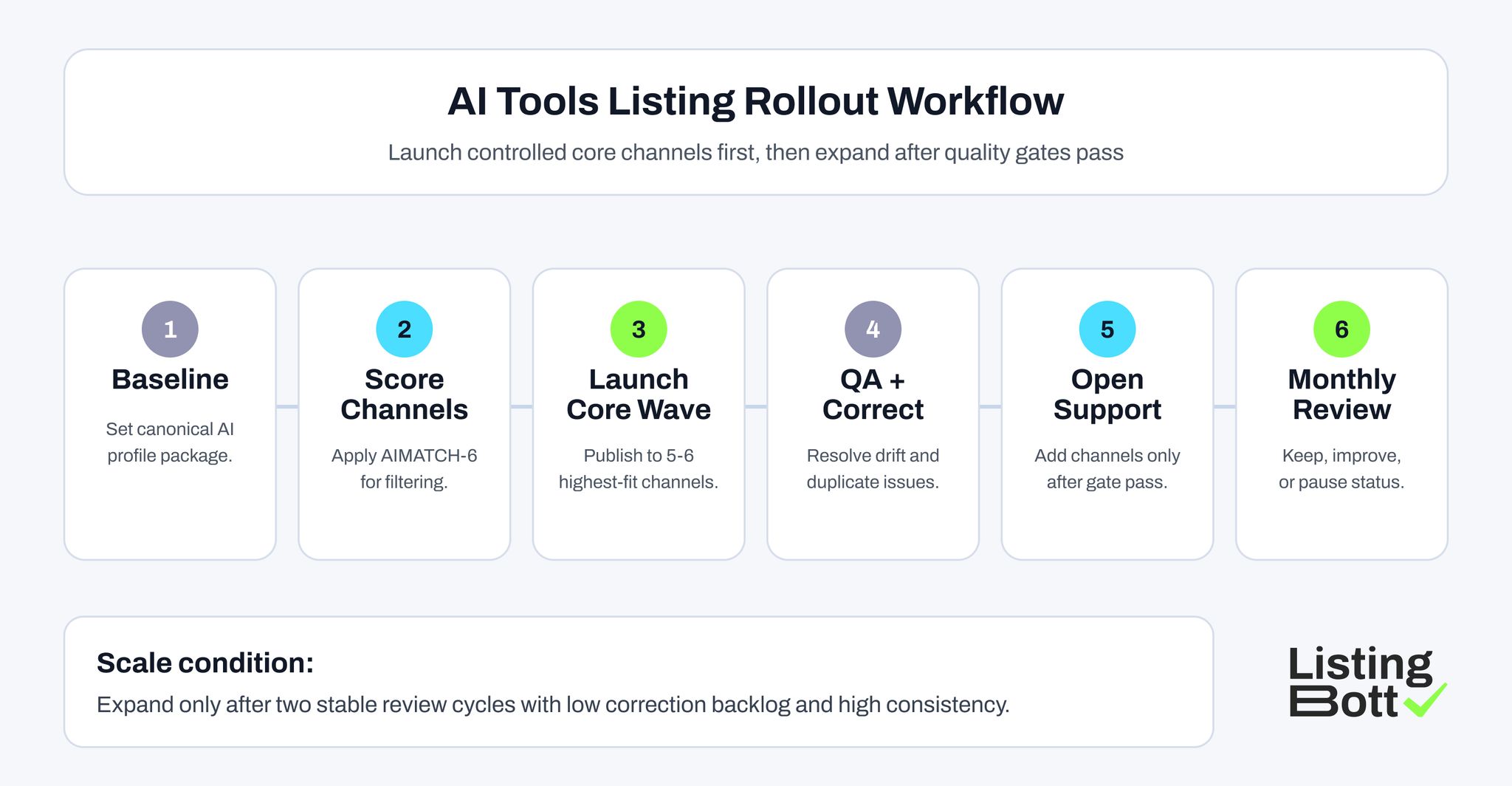

AI Tools Listing Rollout Workflow

Implementation Checklist from Launch to Stable Operations

Step 1: build canonical AI profile pack

Include:

- product summary (short and long versions),

- category and use-case taxonomy,

- pricing and plan context,

- trust/proof assets,

- destination URL mapping.
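One way to enforce a single canonical baseline is to store the profile pack as a typed record that every listing is derived from. The dataclass below is a sketch; its field names are hypothetical and simply mirror the five items above, and `ExampleAI` and the example.com URL are placeholders.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ProfilePack:
    """Canonical product profile that all directory listings derive from."""

    name: str
    summary_short: str
    summary_long: str
    categories: tuple[str, ...]          # category taxonomy
    use_cases: tuple[str, ...]           # use-case taxonomy
    pricing_context: str                 # plan names and pricing basis
    proof_assets: tuple[str, ...] = ()   # testimonials, case studies, badges
    # Maps each platform name to the one destination page it should link to.
    destinations: dict[str, str] = field(default_factory=dict)

    def destination_for(self, platform: str) -> str:
        if platform not in self.destinations:
            raise KeyError(f"no destination page mapped for {platform}")
        return self.destinations[platform]


pack = ProfilePack(
    name="ExampleAI",  # hypothetical product
    summary_short="AI assistant that drafts support replies.",
    summary_long="ExampleAI drafts support replies from your help-center content.",
    categories=("Customer Support",),
    use_cases=("ticket triage", "reply drafting"),
    pricing_context="Free tier; paid plans billed per seat",
    destinations={"G2": "https://example.com/for-teams"},
)
print(pack.destination_for("G2"))  # https://example.com/for-teams
```

Making the record frozen means a listing cannot silently edit the baseline; changes go through a new approved version of the pack.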

Step 2: score channel candidates

Apply AIMATCH-6 and classify each platform as core, support, or hold.

Step 3: prepare reusable listing assets

Before launch, prepare:

- short and long descriptions,

- feature and use-case snippets,

- image/logo set,

- FAQ-ready profile answers,

- QA checklist.

Step 4: launch core wave

Publish to core channels and record:

- submission date,

- approval state,

- live URL,

- assigned owner.
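These four fields fit naturally into a small tracking record. This Python sketch assumes a hypothetical set of approval states and an in-memory list; the same structure could just as well live in a spreadsheet. The platform names and URL in the sample log are placeholders.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum
from typing import Optional


class ApprovalState(Enum):
    SUBMITTED = "submitted"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class Submission:
    platform: str
    submitted_on: date
    state: ApprovalState
    owner: str
    live_url: Optional[str] = None  # filled in once the listing goes live


def needs_follow_up(log: list[Submission]) -> list[str]:
    """Platforms still awaiting review, or approved without a recorded live URL."""
    return [
        s.platform
        for s in log
        if s.state is ApprovalState.SUBMITTED
        or (s.state is ApprovalState.APPROVED and s.live_url is None)
    ]


log = [
    Submission("Directory A", date(2026, 1, 12), ApprovalState.APPROVED, "ops-lead",
               live_url="https://dir-a.example/exampleai"),  # hypothetical URL
    Submission("Directory B", date(2026, 1, 12), ApprovalState.APPROVED, "ops-lead"),
    Submission("Directory C", date(2026, 1, 13), ApprovalState.SUBMITTED, "founder"),
]
print(needs_follow_up(log))  # ['Directory B', 'Directory C']
```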

Step 5: run post-launch QA

Verify:

- category and tag accuracy,

- URL correctness,

- profile completeness,

- duplicate risk,

- messaging consistency.
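Several of these checks can be automated by comparing each live listing with the canonical baseline. The sketch below assumes listings are available as plain dictionaries with hypothetical keys (`name`, `description`, `url`, `tags`). It covers completeness, URL correctness, and tag accuracy; duplicate risk and messaging consistency still need a separate routine or a human reviewer.

```python
REQUIRED_FIELDS = ("name", "description", "url", "tags")


def qa_listing(listing: dict, allowed_tags: set[str], expected_url: str) -> list[str]:
    """Return QA issues for one live listing; an empty list means it passed."""
    issues = []
    # Completeness: every required field must be present and non-empty.
    for key in REQUIRED_FIELDS:
        if not listing.get(key):
            issues.append(f"missing {key}")
    # URL correctness: the listing must point at its mapped destination page
    # (a trailing slash is ignored).
    url = listing.get("url")
    if url and url.rstrip("/") != expected_url.rstrip("/"):
        issues.append(f"url {url} does not match {expected_url}")
    # Category/tag accuracy: flag tags outside the canonical taxonomy.
    stray = set(listing.get("tags") or []) - allowed_tags
    if stray:
        issues.append(f"unapproved tags: {sorted(stray)}")
    return issues


listing = {
    "name": "ExampleAI",  # hypothetical listing data
    "description": "AI assistant that drafts support replies.",
    "url": "https://example.com/for-teams/",
    "tags": ["customer support", "chatbot"],
}
print(qa_listing(listing, {"customer support", "reply drafting"},
                 "https://example.com/for-teams"))
# ["unapproved tags: ['chatbot']"]
```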

Step 6: close critical issues before expansion

Do not open the support wave while high-impact mismatches remain unresolved.

Step 7: open support wave selectively

Add support channels only after two stable review cycles.
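Steps 6 and 7 together work as an expansion gate. A minimal Python sketch follows; it assumes each review cycle is summarized by a hypothetical record, and it defines "stable" (an assumption, not a definition from this guide) as zero open high-impact mismatches plus a consistency rate at the 95% healthy level used in the KPI board below.

```python
from dataclasses import dataclass


@dataclass
class ReviewCycle:
    open_high_impact: int     # unresolved high-impact mismatches at cycle end
    consistency_rate: float   # share of listings matching the baseline, 0-1


def is_stable(cycle: ReviewCycle) -> bool:
    # Assumed definition of a stable cycle (see lead-in).
    return cycle.open_high_impact == 0 and cycle.consistency_rate >= 0.95


def can_open_support_wave(history: list[ReviewCycle]) -> bool:
    """True only if the two most recent review cycles were both stable."""
    if len(history) < 2:
        return False
    return all(is_stable(c) for c in history[-2:])


history = [ReviewCycle(3, 0.88), ReviewCycle(0, 0.96), ReviewCycle(0, 0.97)]
print(can_open_support_wave(history))  # True
```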

Step 8: run monthly keep/improve/pause decisions

Evaluate each platform by:

- profile quality,

- correction load,

- traffic/engagement quality,

- contribution potential.

This process is especially useful when combining AI directories with broader local citation sites or general business channels.
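The four Step 8 criteria can be turned into explicit rules so that monthly decisions stay consistent between reviewers. In this Python sketch the field names, the 0-1 scales, and all thresholds (including the 4-hour correction budget) are illustrative assumptions; tune them to your own capacity. Channel names are placeholders.

```python
from dataclasses import dataclass


@dataclass
class ChannelReview:
    platform: str
    profile_quality: float    # 0-1, share of QA checks passed
    correction_hours: float   # team hours spent on fixes this month
    intent_quality: float     # 0-1, share of engaged, high-intent visits
    contribution: float       # 0-1, reviewer's estimate of business value


def monthly_decision(r: ChannelReview, max_hours: float = 4.0) -> str:
    """Return 'keep', 'improve', or 'pause' using illustrative thresholds."""
    # Heavy upkeep with little to show for it: free up the capacity.
    if r.correction_hours > max_hours and r.contribution < 0.3:
        return "pause"
    # Channel worth keeping, but profile or traffic quality has slipped.
    if r.profile_quality < 0.95 or r.intent_quality < 0.5:
        return "improve"
    return "keep"


print(monthly_decision(ChannelReview("Directory A", 0.98, 1.0, 0.6, 0.5)))  # keep
print(monthly_decision(ChannelReview("Directory B", 0.80, 6.0, 0.2, 0.1)))  # pause
```

Checking "pause" first reflects the retirement rule: a channel that consumes capacity without contribution should not linger in "improve" indefinitely.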

KPI Board for AI Directory Performance

Use a compact KPI board that supports action.

| KPI | Why it matters | Healthy signal | Risk signal |
|---|---|---|---|
| Profile consistency rate | confirms data and messaging stability | 95%+ and stable | recurring mismatch trend |
| Correction closure speed | indicates operational control | predictable closure cycle | growing aged backlog |
| Duplicate profile rate | reflects listing hygiene | low and declining | repeated duplicate creation |
| Channel intent quality | indicates discovery relevance | stable high-intent engagement | low-intent traffic dominates |
| Maintenance load ratio | tracks scalability | controlled effort per cycle | rising effort without quality gain |

This board makes platform decisions evidence-based instead of assumption-driven.
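Two of these KPIs reduce to simple ratios once each listing has been checked against the baseline. A Python sketch, assuming hypothetical per-listing QA results with a baseline-match flag and a duplicate flag; the sample data is invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class ListingCheck:
    platform: str
    matches_baseline: bool   # all checked fields agree with the canonical pack
    is_duplicate: bool       # flagged as a second profile for the same product


def kpi_snapshot(checks: list[ListingCheck]) -> dict[str, float]:
    """Profile consistency rate and duplicate profile rate, as percentages."""
    if not checks:
        raise ValueError("no listings checked")
    total = len(checks)
    consistent = sum(c.matches_baseline for c in checks)
    duplicates = sum(c.is_duplicate for c in checks)
    return {
        "profile_consistency_pct": round(100 * consistent / total, 1),
        "duplicate_profile_pct": round(100 * duplicates / total, 1),
    }


checks = [
    ListingCheck("Directory A", True, False),
    ListingCheck("Directory B", True, False),
    ListingCheck("Directory C", False, False),
    ListingCheck("Directory D", True, True),
]
snapshot = kpi_snapshot(checks)
print(snapshot)  # {'profile_consistency_pct': 75.0, 'duplicate_profile_pct': 25.0}
print(snapshot["profile_consistency_pct"] >= 95)  # False: below the healthy signal
```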

AI-specific Risk Controls

1) Category drift prevention

AI products evolve quickly, so category and use-case labels can drift. Run periodic checks to keep listings aligned with current positioning.

2) Feature claim consistency

Ensure listing claims match live product pages and onboarding flow. Inconsistency reduces trust during evaluation.

3) Fast correction ownership

Define clear ownership for profile updates and response SLAs.

4) Duplicate suppression routine

Run duplicate checks after launch waves and major product renaming events.

5) Expansion gate

Require two stable review cycles before increasing channel count.

6) Quarterly channel pruning

Pause platforms with high maintenance load and weak contribution quality.

These controls prevent operational drag and protect the quality of AI-tool visibility channels.
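For the duplicate suppression routine (control 4), a useful first pass groups listings by platform and normalized product name. This Python sketch assumes listing records arrive as hypothetical (platform, name, url) tuples. The normalization (lowercase, ASCII letters and digits only) is an illustrative choice: it catches spacing and punctuation variants such as "Example AI" vs "ExampleAI", but not full renames, so after a renaming event check the old name as well.

```python
import re
from collections import defaultdict


def normalize(name: str) -> str:
    """Lowercase and drop everything except ASCII letters and digits."""
    return re.sub(r"[^a-z0-9]", "", name.lower())


def find_duplicates(
    listings: list[tuple[str, str, str]],
) -> dict[tuple[str, str], list[str]]:
    """Group live URLs by (platform, normalized name); keep groups with 2+ URLs."""
    groups: dict[tuple[str, str], list[str]] = defaultdict(list)
    for platform, name, url in listings:
        groups[(platform, normalize(name))].append(url)
    return {key: urls for key, urls in groups.items() if len(urls) > 1}


listings = [
    ("Directory A", "ExampleAI", "https://dir-a.example/exampleai"),
    ("Directory A", "Example AI", "https://dir-a.example/example-ai"),
    ("Directory B", "ExampleAI", "https://dir-b.example/exampleai"),
]
print(find_duplicates(listings))
# {('Directory A', 'exampleai'): ['https://dir-a.example/exampleai', 'https://dir-a.example/example-ai']}
```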

When to Combine AI Directories with Local Channels

Some products should run a dual-track strategy: AI-native directories for broad discovery and local business listing sites for geo-specific demand capture. This is common for AI tools sold by regional agencies, consulting teams, or local service operators.

A practical split looks like this:

- use AI-specific directories for category discovery and product comparison,

- use local citation sites only for pages where location intent is clear,

- keep profile messaging consistent across both tracks,

- report channel performance separately so signals are not mixed.

This avoids a frequent error where teams blend all channels into one metric and cannot tell which track is producing qualified activity. The same principle applies to free US business listing site campaigns: keep only the local channels that maintain data quality and support a clear geo-intent objective.
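Separate reporting can be as simple as tagging every channel with its track before aggregating, and never summing across tracks. A Python sketch, assuming hypothetical per-channel rows of (track, channel, qualified visits); the names and numbers are invented sample data.

```python
from collections import defaultdict


def report_by_track(rows: list[tuple[str, str, int]]) -> dict[str, dict[str, int]]:
    """Sum qualified visits per channel, nested under its track.

    Tracks are never merged, so AI-directory and local signals stay separable.
    """
    report: dict[str, dict[str, int]] = defaultdict(dict)
    for track, channel, qualified in rows:
        report[track][channel] = report[track].get(channel, 0) + qualified
    return dict(report)


rows = [
    ("ai_directories", "Directory A", 42),
    ("ai_directories", "Directory B", 17),
    ("local", "Local site C", 5),
    ("ai_directories", "Directory A", 8),
]
totals = report_by_track(rows)
print({track: sum(ch.values()) for track, ch in totals.items()})
# {'ai_directories': 67, 'local': 5}
```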

Common Mistakes Teams Make

1) Submitting everywhere from one list

Large list coverage without governance creates recurring correction debt.

2) Reusing identical copy on every platform

Platform context and audience intent differ. One generic message weakens relevance.

3) Treating free channels as zero-cost

Free US business listing sites and other open directories still require QA and ongoing updates.

4) Ignoring post-launch QA

Unreviewed listings drift quickly and reduce profile trust.

5) No destination-page strategy

Routing all listings to one page reduces intent match.

6) No retirement rule

Weak channels remain active and consume team capacity indefinitely.

7) Reporting only submission counts

Launch volume does not show quality or business contribution.

90-Day AI Listing Plan

Days 1-30: foundation

- finalize profile baseline,

- score platforms with AIMATCH-6,

- launch core wave,

- assign ownership.

Days 31-60: stabilization

- run QA cycles,

- close critical mismatches,

- resolve duplicates,

- refresh weak profiles.

Days 61-90: optimization

- open support wave if quality gates pass,

- pause weak channels,

- refine mapping and profile depth,

- lock monthly review cadence.

By day 90, the target is a maintainable discovery portfolio, not just a larger list of listings.

AI Tools Listing Services 90-Day Plan

Where ListingBott Fits

ListingBott supports structured directory publication and reporting workflows for teams that need repeatable execution.

Typical flow:

- onboarding details are collected,

- listing scope is approved,

- publication is executed,

- reporting is delivered.

Offer alignment:

- one-time payment model,

- publication to 100+ directories,

- no hidden extra fees,

- refund possible if process has not started.

Promise limits:

- no guaranteed ranking position,

- no guaranteed traffic by a specific date,

- no guaranteed indexing speed,

- no guaranteed outcomes controlled by third-party platforms.

Qualified DR statement: growth to DR 15 can be promised only when the starting DR is below 15, the selected goal is domain growth, and the approved listing set is in place.

FAQ: AI Tools Business Listing Sites

How many AI listing platforms should we launch first?

Most teams should start with 5-6 high-fit platforms, then expand only after quality and maintenance metrics stabilize.

Are AI-native directories better than general software directories?

They serve different jobs. AI-native directories improve niche discovery while general software directories can support broader evaluation traffic.

Should we include free channels in the first wave?

Only if they pass fit and maintenance checks. Free access does not eliminate lifecycle work.

Can listing sites guarantee SEO rankings?

No. Listings support visibility and entity trust signals, but rankings depend on broader SEO execution and competition.

How often should AI tool listings be reviewed?

Monthly reviews are a practical baseline, with faster updates after feature, pricing, or positioning changes.